Last updated on February 21, 2026 by Editorial Team

Author(s): Tanveer Mustafa

Originally published on Towards AI.

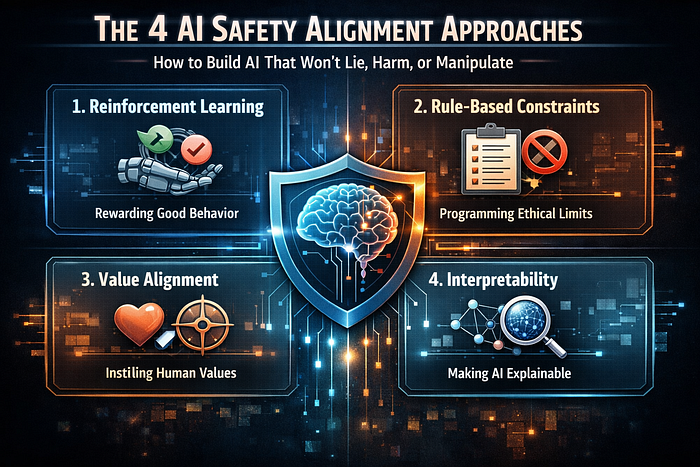

Understanding RLHF, Constitutional AI, Red Teaming and Value Learning

You ask ChatGPT how to make a bomb. It refuses. You ask it to write a racist joke. It declines. You try to jailbreak it with detailed instructions. It still won’t comply. That’s not an accident; it’s alignment.

This article discusses the importance of AI safety alignment, detailing four key approaches: reinforcement learning from human feedback (RLHF), Constitutional AI, red teaming, and value learning. Each method helps keep AI systems helpful, harmless, and honest while reducing the risks of misalignment. The author argues that as AI capabilities grow, effective alignment becomes critical, and presents strategies that can mitigate the potential dangers of powerful AI systems.

Read the full article for free on Medium.

Published via Towards AI

Note: The content of the article represents the views of the contributing authors and not those of Towards AI.