Everyone talks about LLMs – but today’s AI ecosystem is much bigger than just language models. Behind the scenes, a whole family of specialized architectures are quietly changing the way machines see, plan, act, segment, represent concepts, and even run efficiently on small devices. Each of these models solves a different part of the intelligence puzzle, and together they are shaping the next generation of AI systems.

In this article, we will explore five major players: large language models (LLM), vision-language models (VLM), mixture of experts (MOE), large action models (LAM) and small language models (SLM).

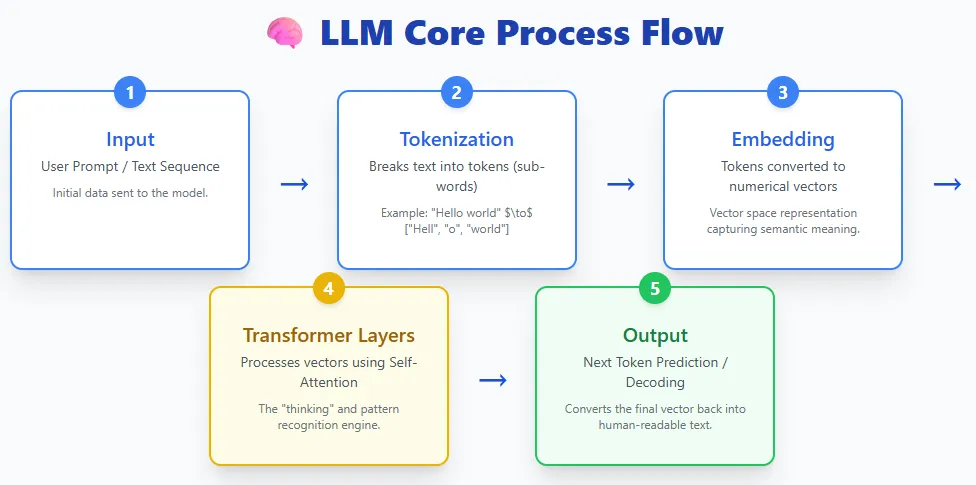

LLMs take text, break it into tokens, turn those tokens into embeddings, pass them through layers of transformers, and generate text back. Models like ChatGPT, Cloud, Gemini, Llama and others follow this basic process.

Basically, LLMs are deep learning models trained on massive amounts of text data. This training allows them to understand language, generate responses, summarize information, write code, answer questions, and perform a variety of tasks. They use the Transformer architecture, which is extremely good at handling long sequences and capturing complex patterns in the language.

Today, LLMs are widely accessible through consumer tools and assistants – from OpenAI’s ChatGPT and Anthropic’s Cloud to Meta’s Llama model, Microsoft Copilot, and Google’s Gemini and BERT/PALM families. They have become the foundation of modern AI applications due to their versatility and ease of use.

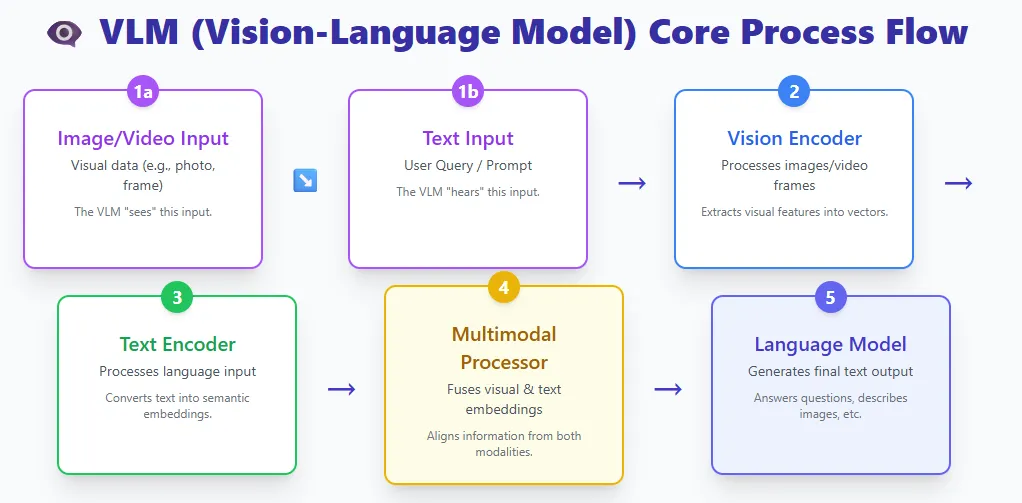

VLMs connect two worlds:

- A vision encoder that processes images or video

- A text encoder that processes language

Both streams are combined in a multimodal processor, and a language model produces the final output.

Examples include GPT-4V, Gemini Pro Vision, and LLaVA.

VLM is essentially a large language model given the ability to visualize. By combining visual and textual representations, these models can understand images, interpret documents, answer questions about images, describe videos, and much more.

Traditional computer vision models are trained for a narrow task — like classifying cats versus dogs or extracting text from an image — and they can’t generalize beyond their training classes. If you need a new class or task, you will have to train them afresh.

VLMs remove this limitation. Trained on huge datasets of images, videos and text, they can zero-shot many vision tasks, just by following natural language instructions. They can do everything from image captioning and OCR to visual reasoning and multi-step document comprehension – all without task-specific retraining.

This flexibility makes VLMs one of the most powerful advances in modern AI.

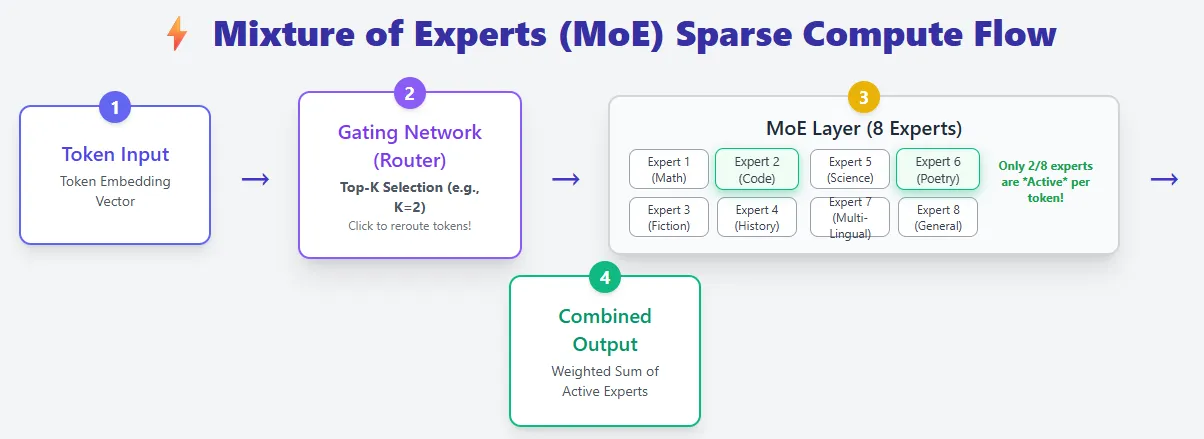

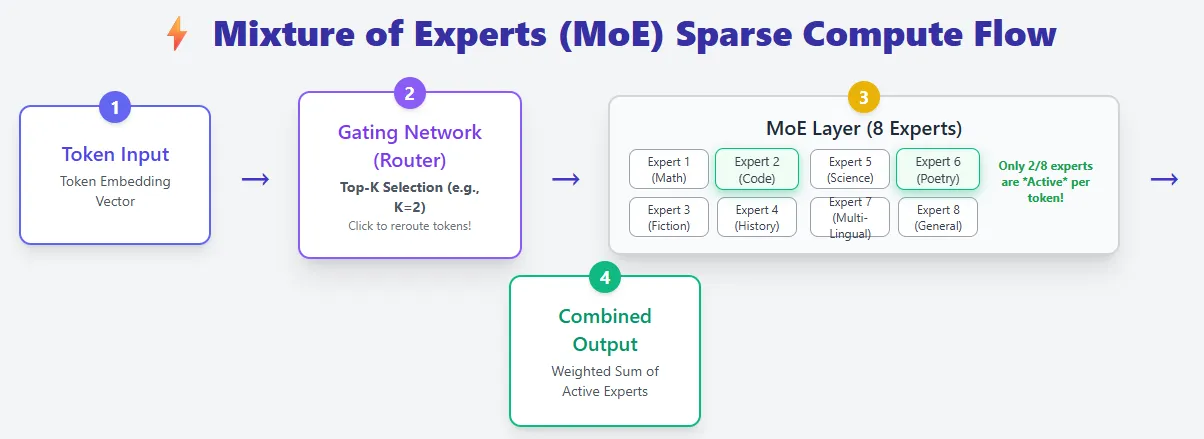

Mixing experts models build on the standard Transformer architecture but introduce a significant upgrade: instead of one feed-forward network per layer, they use multiple smaller expert networks and activate only a few for each token. This makes the MoE model extremely efficient while providing huge capacity.

In a regular transformer, each token flows through the same feed-forward network, meaning that all parameters are used for each token. MoE layers replace this with a pool of experts, and a router decides which experts should process each token (top-k selection). As a result, MoE models may have far more total parameters, but they compute with only a small fraction of them at a time – giving sparse calculations.

For example, Mixtral 8×7B has 46B+ parameters, yet each token only uses 13B.

This significantly reduces design estimation costs. Instead of scaling the model by making it deeper or wider (which increases FLOPs), the MOE model scales by adding more experts, increasing capacity without increasing the per-token count. This is why MoE is often described as having “big brains at low runtime cost”.

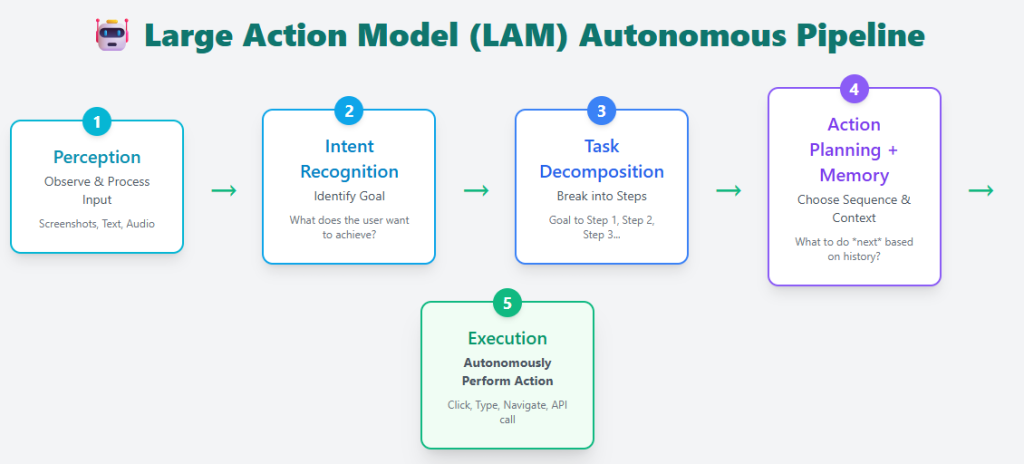

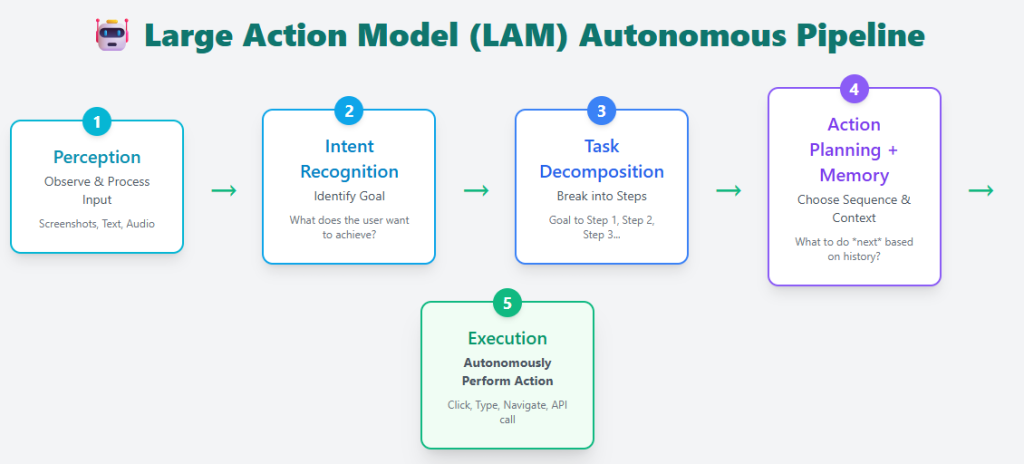

Big action models go one step further than generating text – they turn intent into action. Instead of simply answering questions, a LAM can understand what the user wants, break the task into steps, plan the necessary tasks, and then execute them in the real world or on a computer.

A typical LAM pipeline includes:

- Perception – Understanding user input

- Intent recognition – identifying what the user is trying to achieve

- Task decomposition – breaking the goal into actionable steps

- Action planning + memory – choosing the correct sequence of actions using past and present context

- Execution – carrying out tasks autonomously

Examples include Rabbit R1, Microsoft’s UFO framework, and cloud computer usage, all of which can operate apps, navigate interfaces, or complete tasks on the user’s behalf.

LAMs are trained on huge datasets of real user actions, giving them the ability to not only respond, but also perform tasks – booking rooms, filling out forms, organizing files, or executing multi-step workflows. This transforms AI from a passive assistant to an active agent capable of making complex, real-time decisions.

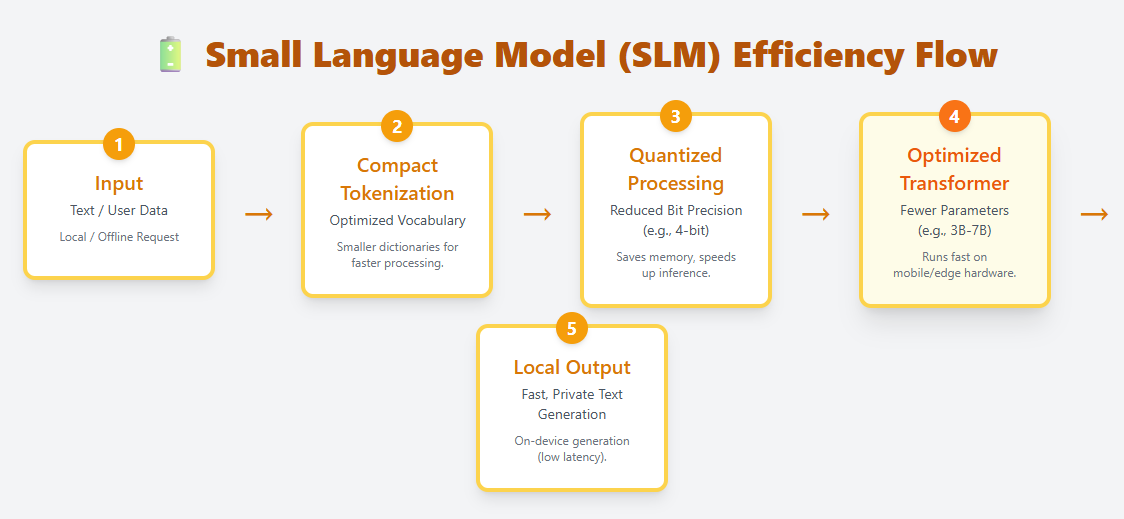

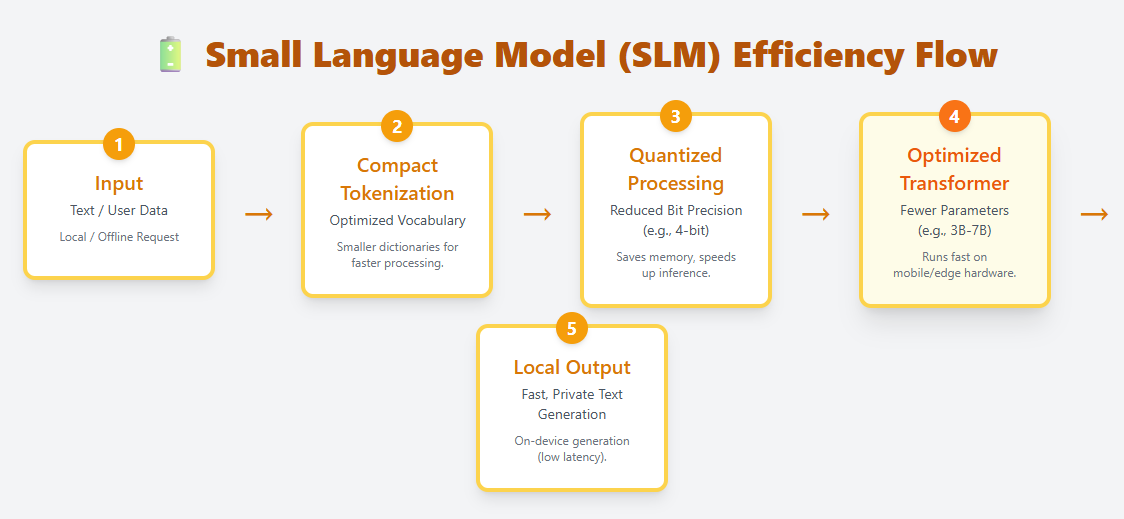

SLMs are lightweight language models designed to run efficiently on edge devices, mobile hardware, and other resource-constrained environments. They use compact tokenization, optimized transformer layers, and aggressive quantization to enable local, on-device deployment. Examples include Phi-3, Gemma, Mistral 7B, and Llama 3.2 1B.

Unlike LLMs, which can have hundreds of billions of parameters, SLMs typically range from a few million to a few billion. Despite their small size, they can still understand and generate natural language, making them useful for chat, summarization, translation, and task automation without the need for cloud computation.

Because they require very little memory and computation, SLMs are ideal for:

- mobile apps

- IoT and edge devices

- Offline or privacy-sensitive scenarios

- Low-latency applications where cloud calls are too slow

SLMs represent a growing shift toward fast, personal, and cost-efficient AI, bringing language intelligence directly to personal devices.

I am a Civil Engineering graduate (2022) from Jamia Millia Islamia, New Delhi, and I have a keen interest in Data Science, especially Neural Networks and their application in various fields.