Image by author

# (Re)introducing Hugging Face

By the end of this tutorial, you will understand the importance of Hugging Face in modern machine learning, explore its ecosystem, and set up your local development environment to start your practical journey in machine learning. You’ll also learn how Hugging Face is free for everyone and discover the tools it offers to both beginners and experts. But first, let’s understand what Hugging Face is.

Hugging Face is an online community for AI that has become a cornerstone for anyone working with AI and machine learning, enabling researchers, developers, and organizations to use machine learning in ways previously inaccessible.

Think of Hugging Face as a library filled with books written by the best authors from around the world. Instead of writing your own books, you can borrow one, understand it, and use it to solve problems – whether it’s summarizing articles, translating text, or categorizing emails.

Similarly, Hugging Face is full of machine learning and AI models written by researchers and developers around the world, which you can download and run on your local machine. You can also use models directly through the Hugging Face API, without the need for expensive hardware.

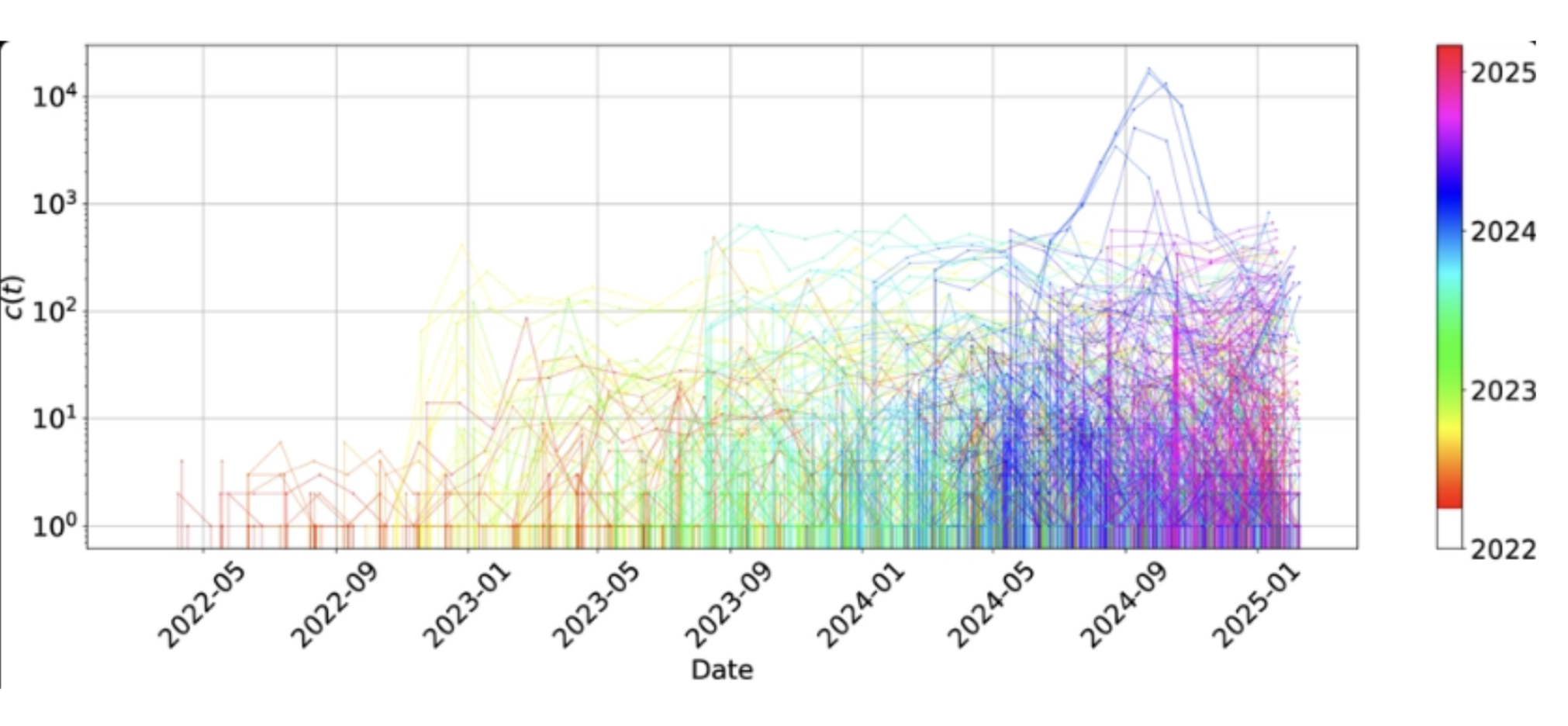

Today, the Hugging Face Hub hosts millions of pre-trained models, hundreds of thousands of datasets, and a large collection of demo applications, all contributed by a global community.

# Tracing the Origins of Hugging Face

Hugging Face was founded by French entrepreneurs Clément Delangue, Julien Chaumond, and Thomas Wolf. They initially set out to build an AI-powered chatbot, but found that developers and researchers were having difficulty accessing pre-trained models and applying cutting-edge algorithms. Hugging Face then turned its attention to building tools for machine learning workflows and open-sourcing its machine learning platform.

Image by author

# Connecting with the Hugging Face open-source AI community

Hugging Face sits at the heart of a set of tools and resources that provide everything you need for a machine learning workflow. It is not just a company but a global community that is driving the AI era forward.

Hugging Face offers a suite of tools, such as:

- Transformers library: Gives access to pre-trained models for tasks such as text classification and summarization.

- Datasets library: Provides easy access to curated natural language processing (NLP), vision, and audio datasets, which saves you from having to start from scratch.

- Model Hub: This is where researchers and developers share pre-trained models, which you can browse, test, and download for any type of project you’re building.

- Spaces: This is where you can create and host your demos using Gradio and Streamlit.

What really sets Hugging Face apart from other AI and machine learning platforms is its open-source approach, which allows researchers and developers from all over the world to contribute, develop, and improve the AI community.

# Addressing key machine learning challenges

Machine learning is transformative, but it has faced many challenges over the years. Training large-scale models from scratch requires vast computational resources, which are expensive and out of reach for most individuals. Preparing datasets, adjusting model architectures, and deploying models to production are also highly complex.

Hugging Face addresses these challenges by:

- Reducing computational costs with pre-trained models.

- Simplifying machine learning with intuitive APIs.

- Facilitating collaboration through a central repository.

Hugging Face reduces these challenges in several ways. By offering pre-trained models, it lets developers skip the expensive training phase and start using state-of-the-art models immediately.

The Transformers library provides easy-to-use APIs that allow you to implement sophisticated machine learning tasks with just a few lines of code. Additionally, Hugging Face serves as a central repository, enabling seamless sharing, collaboration, and discovery of models and datasets.

Finally, Hugging Face has democratized AI, so that anyone, regardless of background or resources, can build and deploy machine learning solutions. This is why Hugging Face has been adopted across industries, with Microsoft, Google, Meta, and others integrating it into their workflows.

# Exploring the Hugging Face Ecosystem

Hugging Face’s ecosystem is extensive, consisting of multiple integrated components that support the full lifecycle of AI workflows:

// Navigating the Hugging Face Hub

- A central repository for AI artifacts: models, datasets, and applications (Spaces).

- Supports public and private hosting with versioning, metadata, and documentation.

- Users can upload, download, search, and benchmark AI resources.
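As a rough illustration of searching the Hub from code rather than the website, the sketch below uses the `huggingface_hub` client library (an assumption here, since the text only describes the Hub itself). `HfApi.list_models` is a real call; the helper names `search_models` and `top_by_downloads` are hypothetical, and the ranking helper only assumes that each result exposes `id` and `downloads` attributes.

```python
def search_models(query, limit=5):
    """Return up to `limit` Hub models whose names match `query`."""
    # Imported lazily so this module loads even without huggingface_hub.
    from huggingface_hub import HfApi

    return list(HfApi().list_models(search=query, limit=limit))


def top_by_downloads(models, n=3):
    """Rank model entries by download count, highest first."""
    # Download counts can be missing for some entries, so fall back to 0.
    ranked = sorted(models, key=lambda m: m.downloads or 0, reverse=True)
    return [(m.id, m.downloads or 0) for m in ranked[:n]]
```

Calling `top_by_downloads(search_models("sentiment"))` requires network access, and the results will change over time as the community uploads new models.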

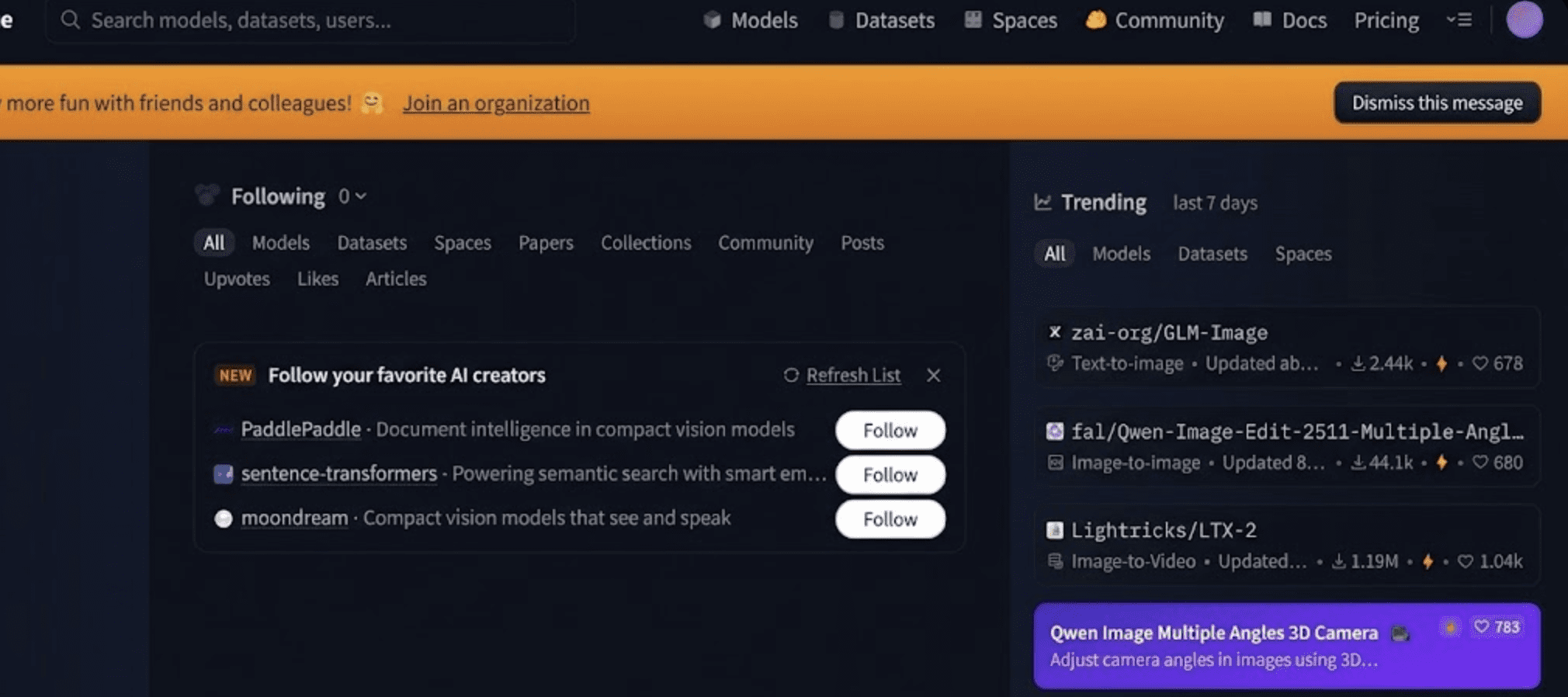

To get started, visit the Hugging Face website in your browser. The homepage presents a clean interface with options to explore models, datasets, and Spaces.

Image by author

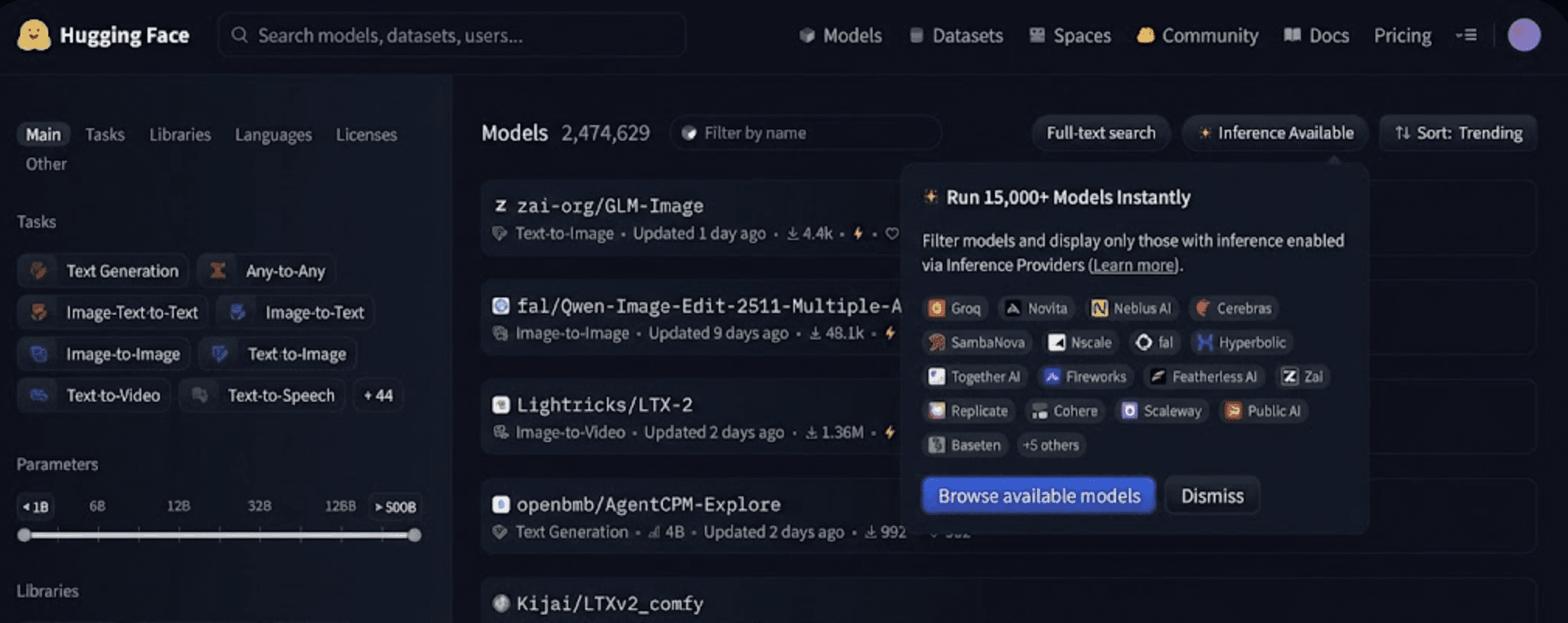

// Working with models

The Models section serves as the center of the Hugging Face Hub. It offers pre-trained models across a variety of machine learning tasks, so you can use them for text classification, summarization, image recognition, and more without having to build everything from scratch.

Image by author

- Datasets: Easy-to-use datasets for training and evaluating your models.

- Spaces: Interactive demos and apps built using tools like Gradio and Streamlit.

// Taking advantage of the Transformers library

The Transformers library is the leading open-source SDK that standardizes how Transformer-based models are used for inference and training across tasks including NLP, computer vision, audio, and multimodal learning. It:

- Supports hundreds of model architectures (for example, BERT, GPT, T5, ViT).

- Provides pipelines for common tasks including text generation, classification, question answering, and vision tasks.

- Integrates with PyTorch, TensorFlow, and JAX for flexible training and inference.
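To make the pipeline idea concrete, here is a minimal sketch that assumes the `transformers` package and a backend such as PyTorch are installed. The task strings are standard pipeline task names, while `SUPPORTED_TASKS` and `run_task` are hypothetical names used only for this illustration.

```python
# A small, illustrative subset of pipeline task names (not an exhaustive list).
SUPPORTED_TASKS = {
    "text-generation",
    "text-classification",
    "question-answering",
    "image-classification",
}


def run_task(task, *args, **kwargs):
    """Build a pipeline for `task` and run it on the given inputs."""
    if task not in SUPPORTED_TASKS:
        raise ValueError(f"Unsupported task: {task!r}")
    # Imported lazily so this module loads even where transformers is absent.
    from transformers import pipeline

    pipe = pipeline(task)  # downloads a default model on first use
    return pipe(*args, **kwargs)
```

For example, `run_task("question-answering", question="Who maintains Transformers?", context="Transformers is maintained by Hugging Face.")` would download a default question-answering model and return its answer. In practice you would usually pass an explicit `model=` to `pipeline` rather than rely on the default.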

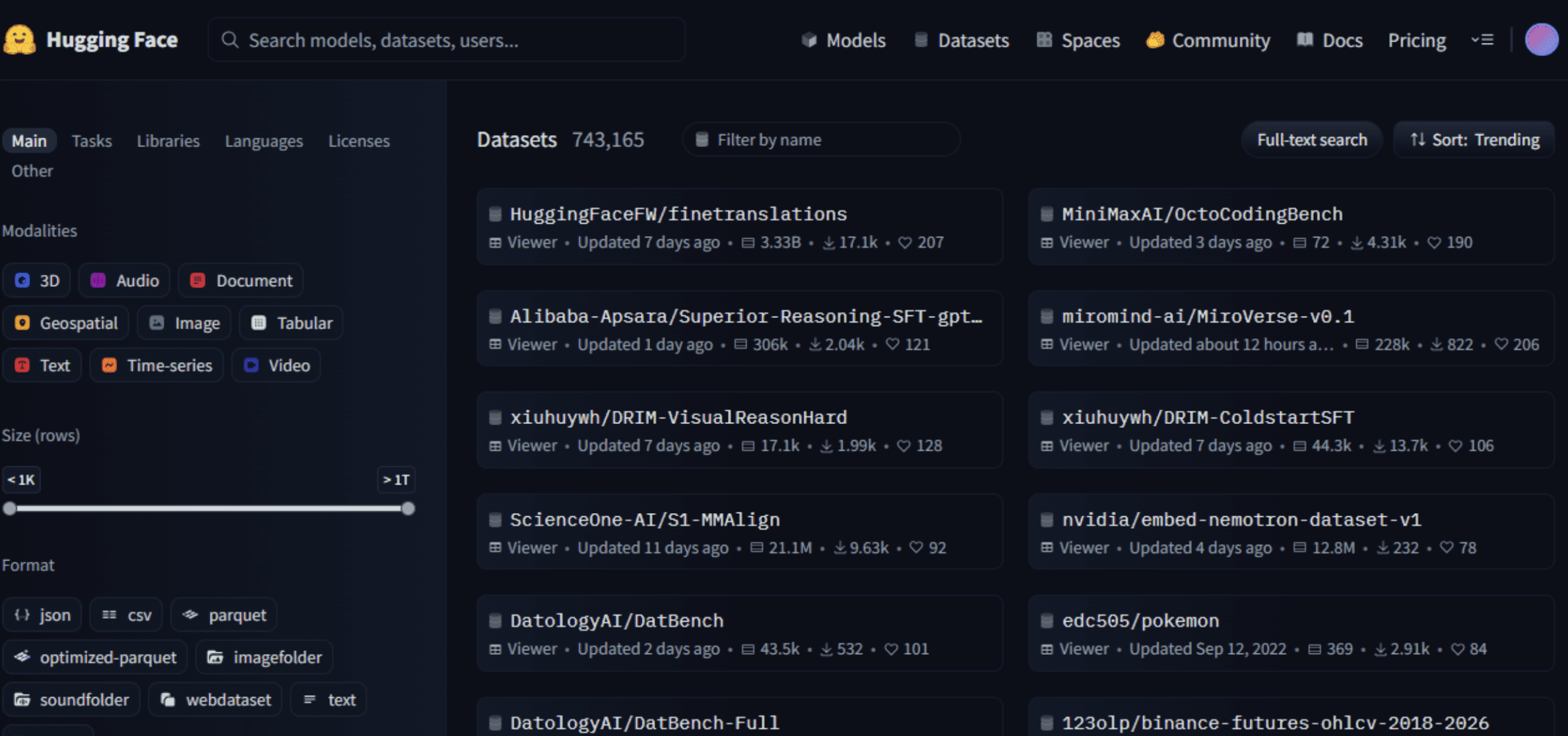

// Accessing the Datasets Library

The Datasets library provides tools to:

- Search, load, and preprocess datasets from the Hub.

- Handle large datasets with streaming, filtering, and transformation capabilities.

- Manage training, validation, and test splits efficiently.

This library makes it easy to experiment with real-world data across languages and tasks without complex data engineering.
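Here is a minimal sketch of the filter, transform, and split steps listed above, assuming the `datasets` package is installed. To stay runnable offline, it builds a tiny in-memory dataset with `Dataset.from_dict` instead of loading one from the Hub. The column name `text`, the helper `prepare_splits`, and its default thresholds are made up for illustration.

```python
def prepare_splits(records, min_chars=10, test_size=0.25, seed=42):
    """Filter short texts, add a length column, and split into train/test."""
    # Imported lazily so this module loads even where datasets is absent.
    from datasets import Dataset

    ds = Dataset.from_dict(records)  # records: column name -> list of values
    # Keep only rows whose text is long enough to be useful.
    ds = ds.filter(lambda row: len(row["text"]) >= min_chars)
    # Add a derived column; map merges the returned dict into each row.
    ds = ds.map(lambda row: {"n_chars": len(row["text"])})
    # Returns a DatasetDict with "train" and "test" splits.
    return ds.train_test_split(test_size=test_size, seed=seed)
```

For a dataset hosted on the Hub, `load_dataset(name, split="train", streaming=True)` returns an iterable dataset that supports the same `filter` and `map` calls without downloading everything first.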

Image by author

Hugging Face also maintains several supporting libraries that complement model training and deployment:

- Diffusers: For generative image/video models using diffusion techniques.

- Tokenizers: Ultra-fast tokenization implemented in Rust.

- PEFT: Parameter-efficient fine-tuning methods (LoRA, QLoRA).

- Accelerate: Simplifies distributed and high-performance training.

- Transformers.js: Enables model inference directly in the browser or Node.js.

- TRL (Transformer Reinforcement Learning): Tools for training language models with reinforcement learning methods.

// Building with Spaces

Spaces are lightweight interactive applications that showcase models and demos, usually created using frameworks such as Gradio or Streamlit. They allow developers to:

- Deploy machine learning demos with minimal infrastructure.

- Share interactive visual tools for text generation, image editing, semantic search, and more.

- Experiment visually without writing backend services.

Image by author

# Deploying and using production tools

In addition to open-source libraries, Hugging Face offers production-ready services such as:

- Inference API: Enables hosted model inference via REST APIs without server provisioning, and supports scaling models (including large language models) to live applications.

- Inference Endpoints: Managed GPU/TPU endpoints that enable teams to serve models at scale with monitoring and logging.

- Cloud integrations: Hugging Face integrates with major cloud providers like AWS, Azure, and Google Cloud, enabling enterprise teams to deploy models within their existing cloud infrastructure.
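To show what hosted inference over REST can look like, the sketch below builds a JSON POST request using only the Python standard library. Treat the details as assumptions: the base URL follows the pattern long used by the hosted Inference API and may differ from the current service, the `{"inputs": ...}` payload is the common shape for text tasks, and the helper names are hypothetical. Check the official documentation before relying on any of it.

```python
import json
import urllib.request

# Assumed base URL for hosted inference; verify against the current docs.
API_BASE = "https://api-inference.huggingface.co/models"


def build_inference_request(model_id, text, token):
    """Build (but do not send) a POST request for hosted inference."""
    payload = json.dumps({"inputs": text}).encode("utf-8")
    return urllib.request.Request(
        url=f"{API_BASE}/{model_id}",
        data=payload,
        headers={
            "Authorization": f"Bearer {token}",  # your Hugging Face access token
            "Content-Type": "application/json",
        },
        method="POST",
    )


def query(model_id, text, token, timeout=30):
    """Send the request and decode the JSON response (needs network access)."""
    request = build_inference_request(model_id, text, token)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))
```

Separating request construction from sending keeps the network call in one place, which makes the request easy to inspect or log before it leaves your machine.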

# Following a simplified technical workflow

Here’s a typical developer workflow on Hugging Face:

- Search for and select pre-trained models on the Hub.

- Load and fine-tune them with Transformers, locally or in a cloud notebook.

- Upload fine-tuned models and datasets back to the Hub with versioning.

- Deploy using the Inference API or Inference Endpoints.

- Share demos via Spaces.

This workflow dramatically accelerates prototyping, experimentation, and production deployment.

# Creating an Interactive Demo with Gradio

import gradio as gr

from transformers import pipeline

classifier = pipeline("sentiment-analysis")

def predict(text):

result = classifier(text)(0) # extract first item

return {result("label"): result("score")}

demo = gr.Interface(

fn=predict,

inputs=gr.Textbox(label="Enter text"),

outputs=gr.Label(label="Sentiment"),

title="Sentiment Analysis Demo"

)

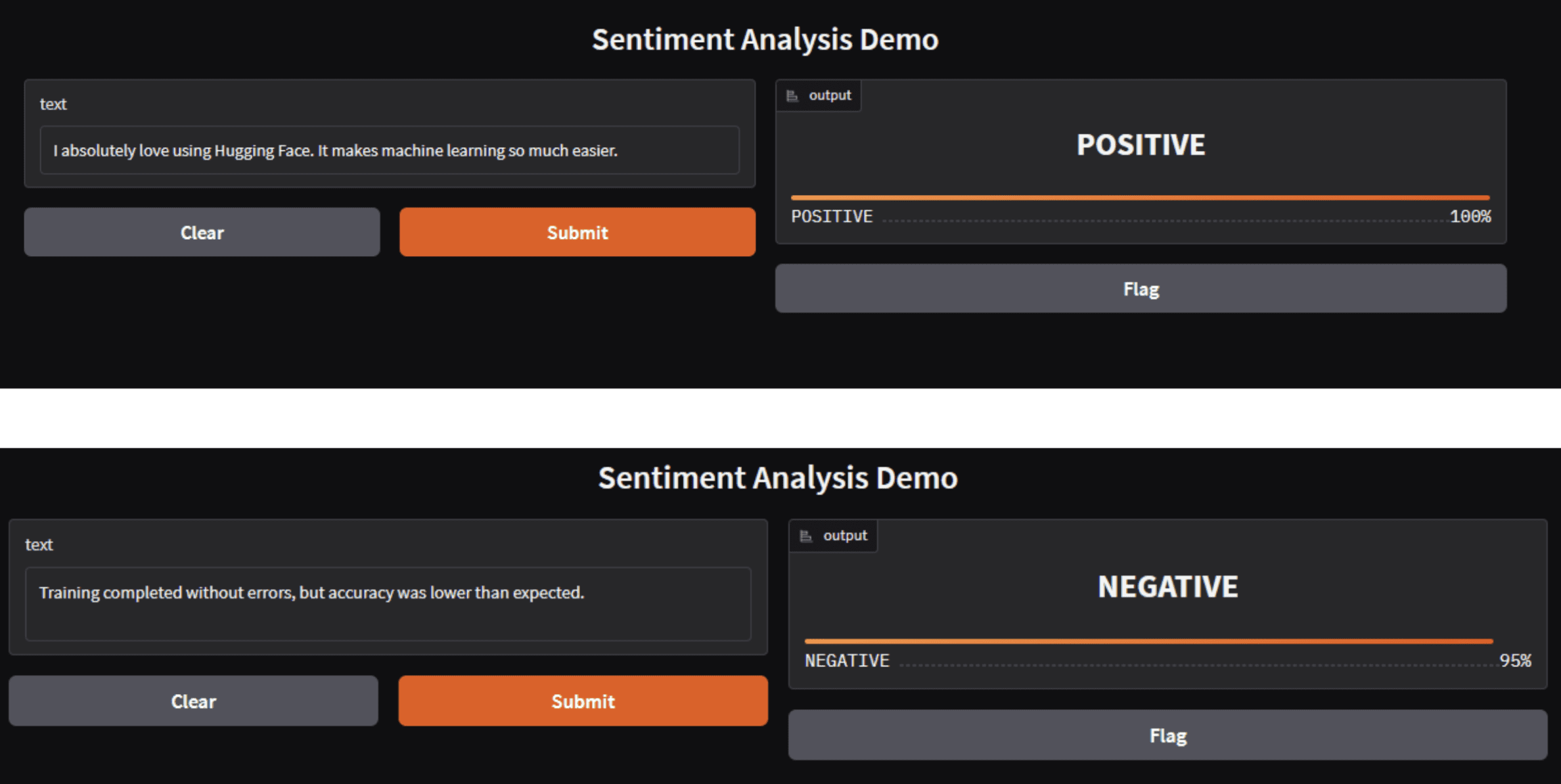

demo.launch()You can run this code by running Python after the file name. In my case, it is python demo.py Which allows it to be downloaded, and you will have something like this below.

Image by author

The same app can be deployed directly as a Hugging Face Space.

Note that Hugging Face pipelines return predictions as lists, even for a single input. When integrating with the Gradio `Label` component, you need to extract the first result and return either a string label or a dictionary mapping labels to confidence scores. If you skip this step, you get a value error (`ValueError`) because the output types do not match.
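The conversion described in this note can be isolated in a small helper. This is only a sketch: `to_label_scores` is a hypothetical name, and the sample prediction is hand-written to mimic the shape of a text-classification pipeline result rather than copied from a real run.

```python
def to_label_scores(predictions):
    """Turn pipeline-style predictions into a {label: confidence} dict."""
    # Accept a single prediction dict as well as the usual list of dicts.
    if isinstance(predictions, dict):
        predictions = [predictions]
    return {item["label"]: float(item["score"]) for item in predictions}


# Hand-written sample in the shape a text-classification pipeline returns.
sample = [{"label": "POSITIVE", "score": 0.98}]
print(to_label_scores(sample))  # {'POSITIVE': 0.98}
```

Returning a dictionary rather than a bare string lets the label component display the confidence alongside the predicted class.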

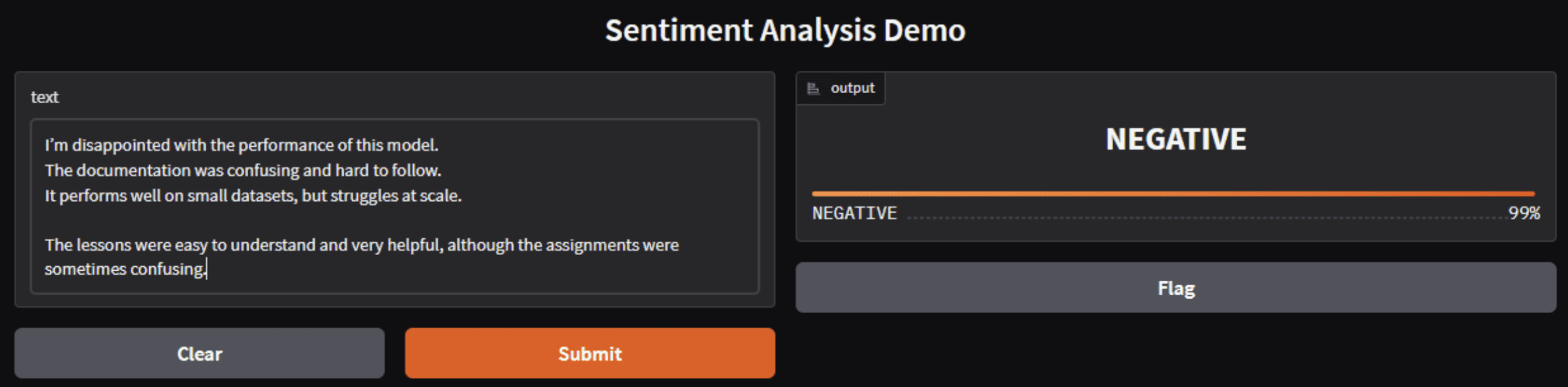

Image by author

Hugging Face sentiment models classify the overall emotional tone of a text rather than the individual opinions within it. When negative signals are stronger or more frequent than positive ones, the model confidently predicts negative sentiment even when some positive feedback is present.

You may be wondering why developers and organizations use Hugging Face. Here are some reasons:

- Standardization: Hugging Face provides consistent APIs and interfaces that unify the way models are shared and consumed across languages and tasks.

- Community support: The open governance of the platform encourages contributions from researchers, educators, and industry developers, accelerating innovation and enabling community-driven improvements to models and datasets.

- Democratization: By offering easy-to-use tools and ready-made models, Hugging Face makes AI development more accessible to learners and organizations without large-scale computing resources.

- Enterprise-ready solutions: Hugging Face offers enterprise features like private model hubs, role-based access controls, and critical platform support for regulated industries.

# Keeping in mind the challenges and limitations

While Hugging Face simplifies many parts of the machine learning lifecycle, developers should keep these things in mind:

- Documentation complexity: As the tools grow, the depth of documentation varies; some advanced features may require deeper exploration to understand properly. (Community feedback notes mixed documentation quality in some parts of the ecosystem.)

- Model search: With millions of models on the Hub, finding the right one often requires careful filtering and semantic search approaches.

- Ethics and licensing: Open repositories can raise content access and licensing challenges, especially with user-uploaded datasets that may include proprietary or copyrighted content. Effective governance and diligence in labeling licenses and intended use cases are essential.

# Concluding remarks

In 2026, Hugging Face stands as the cornerstone of open AI development, offering a rich ecosystem spanning research and production. The combination of community contributions, open source tooling, hosted services, and collaborative workflows has reshaped the way developers and organizations approach machine learning. Whether you’re training cutting-edge models, deploying AI apps, or participating in a global research effort, Hugging Face provides the infrastructure and community to accelerate innovation.

Shittu Olumide is a software engineer and technical writer who is passionate about leveraging cutting-edge technologies to craft compelling narratives, with a keen eye for detail and a knack for simplifying complex concepts. You can also find Shittu on Twitter.