Last updated on February 17, 2026 by Editorial Team

Author(s): mohammedabdelmenem

Originally published on Towards AI.

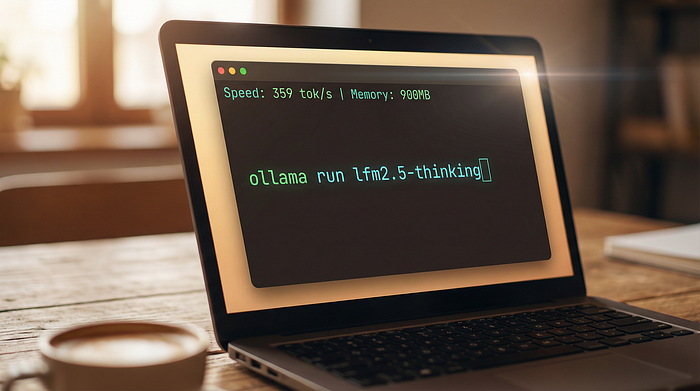

Forget GPT-5 for agent tasks. LFM 2.5 runs at 359 tokens/second in 900MB. Here’s why it works and how to adapt it for your use case.

1400x overtraining. 900MB memory. 359 tokens/sec.

The article examines the performance of Liquid AI's LFM 2.5 model, emphasizing how efficiently it handles tasks that typically require significantly larger models. It highlights how this small model, trained far beyond what traditional scaling laws recommend, achieves faster inference and lower operating costs, reshaping expectations about AI economics. The author argues that speed and efficiency now matter more than raw size or training cost, signaling a shift in how AI agents are developed and deployed in real-world applications.

Read the entire blog for free on Medium.



Note: The content of this article represents the views of the contributing authors and not those of Towards AI.