Introduction

The best-performing, most cost-effective Lakehouse is one that adapts itself to growing data volumes, changing query patterns, and organizational usage. Predictive Optimization (PO) in Unity Catalog enables this behavior by continuously analyzing how data is written and queried, then automatically applying appropriate maintenance actions without requiring manual work from users or platform teams. In 2025, Predictive Optimization moved from an optional automation feature to default platform behavior, removing the operational burden traditionally associated with table tuning while managing performance and storage efficiency across millions of production tables. Here’s a look at the milestones that have brought us here, and what’s coming next in 2026.

Mass adoption across the Lakehouse

Through 2025, predictive optimization on the Databricks platform saw rapid adoption as customers relied on autonomous maintenance to manage growing data assets. Over the past year:

- Exabytes of unreferenced data were vacuumed, resulting in millions of dollars of savings in storage costs

- Hundreds of petabytes of data were compacted and clustered to improve query performance and file pruning efficiency

- Millions of tables adopted automatic liquid clustering for autonomous data layout management

Based on the consistent performance improvements seen at this scale, predictive optimization is now enabled by default for all new Unity Catalog managed tables, workspaces, and accounts.

How does predictive optimization work?

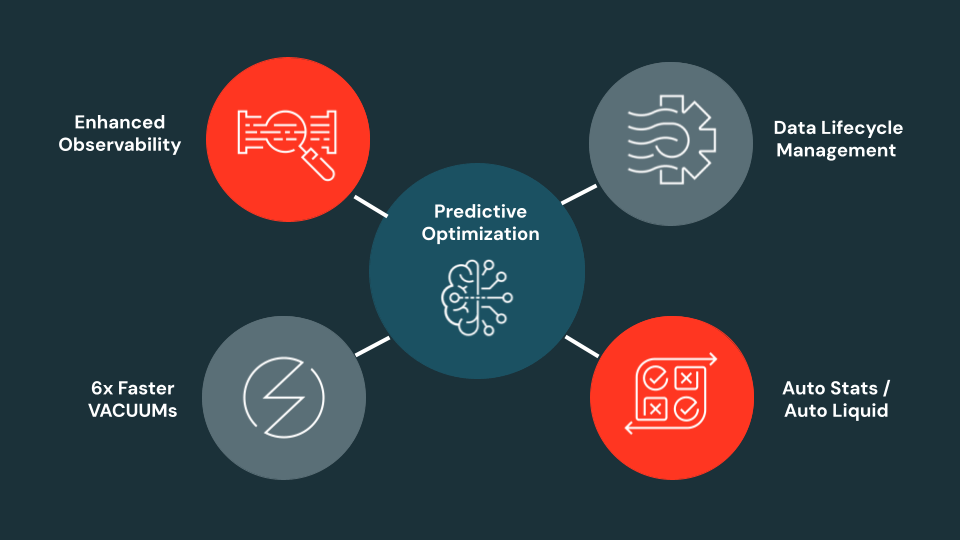

Predictive optimization (PO) acts as the platform intelligence layer for the Lakehouse, continuously optimizing your data layout, reducing storage footprint, and maintaining the accurate file statistics needed for efficient query planning on UC managed tables.

Based on observed usage patterns, PO automatically determines when and how to run commands such as:

- OPTIMIZE, which compacts small files and improves data locality for efficient access

- VACUUM, which removes unreferenced files to control storage costs

- CLUSTER BY, which selects optimal clustering columns for tables with automatic liquid clustering

- ANALYZE, which maintains accurate statistics for query planning and data skipping

All optimization decisions are workload-driven and adaptive, eliminating the need to manage schedules, tune parameters, or revisit optimization strategies when query patterns change.
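For reference, the manual commands that PO takes over can be sketched as follows. This is a minimal illustration, not PO's internal logic: the helper name `manual_maintenance_statements` and the table name are hypothetical, while the generated statements are ordinary Databricks SQL that a team would otherwise schedule by hand.

```python
def manual_maintenance_statements(table: str) -> dict[str, str]:
    """Return the SQL a team would run by hand for each maintenance action.

    `table` is a placeholder three-level name (catalog.schema.table).
    """
    return {
        # Compact small files and improve data locality.
        "optimize": f"OPTIMIZE {table}",
        # Remove files that the table no longer references.
        "vacuum": f"VACUUM {table}",
        # Let the platform pick clustering columns (automatic liquid clustering).
        "cluster_by": f"ALTER TABLE {table} CLUSTER BY AUTO",
        # Refresh the statistics used for query planning.
        "analyze": f"ANALYZE TABLE {table} COMPUTE STATISTICS FOR ALL COLUMNS",
    }


if __name__ == "__main__":
    for action, sql in manual_maintenance_statements("main.sales.orders").items():
        print(f"{action}: {sql}")
```

With PO enabled, none of these statements need to be scheduled; the sketch only shows what is being automated.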

Key advances in predictive optimization in 2025

Automated statistics for 22% faster queries

Accurate statistics are important for creating efficient query plans, yet as data volume and query diversity increase, managing statistics manually becomes impractical.

With automated statistics (now generally available), predictive optimization determines which columns matter based on observed query behavior and ensures that statistics remain up to date without manual ANALYZE commands.

Statistics are maintained through two complementary mechanisms:

- Stats-on-write captures statistics as data is written with minimal overhead, an approach that is 7-10x more performant than running ANALYZE TABLE.

- Background refresh updates statistics when they become outdated due to data changes or evolving query patterns.

In real customer production workloads, this approach delivered up to 22% faster queries while removing the operational cost of manual statistics management.
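The intuition behind stats-on-write can be illustrated with a toy model: statistics are folded in while each batch passes through the writer, so no second scan of the data is needed. The `ColumnStats` class below is a hypothetical sketch for illustration only and does not reflect how Databricks implements the feature.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ColumnStats:
    """Running statistics for one numeric column, updated as rows are written."""

    row_count: int = 0
    null_count: int = 0
    minimum: Optional[float] = None
    maximum: Optional[float] = None

    def update(self, batch: list[Optional[float]]) -> None:
        """Fold a newly written batch into the running statistics."""
        for value in batch:
            self.row_count += 1
            if value is None:
                self.null_count += 1
                continue
            self.minimum = value if self.minimum is None else min(self.minimum, value)
            self.maximum = value if self.maximum is None else max(self.maximum, value)


if __name__ == "__main__":
    stats = ColumnStats()
    stats.update([3.0, None, 7.5])  # first write
    stats.update([1.0, 9.0])        # second write; the first batch is not re-read
    print(stats)
```

A separate analysis pass would have to read every batch again to produce the same numbers, which is the overhead that collecting statistics at write time avoids.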

6x faster and 4x cheaper VACUUM

VACUUM plays a vital role in managing storage costs and compliance by removing unreferenced data files. A standard VACUUM must list all files in the table directory to identify candidates for deletion, an operation that can take more than 40 minutes for tables containing 10 million files.

Predictive Optimization now applies an optimized VACUUM execution path that leverages the Delta transaction log to directly identify removable files, avoiding expensive directory listings whenever possible.

At scale, this resulted in:

- Up to 6x faster VACUUM performance

- Compute costs up to 4x lower than standard methods

The engine dynamically determines when to use this log-based approach and when to perform a full directory scan to clean up leftover files from aborted transactions.
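The difference between the two paths can be sketched with a simplified model. The `LogAction` shape, the function names, and the file names below are hypothetical and far simpler than the real Delta transaction log; the point is only that the log already records which files were removed, whereas orphans from aborted transactions never appear in the log and can only be found by listing storage.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LogAction:
    """One simplified transaction-log entry (hypothetical shape)."""

    kind: str       # "add" or "remove"
    path: str
    timestamp: int  # seconds


def live_files(log: list[LogAction]) -> set[str]:
    """Files currently referenced by the table, replaying the log in order."""
    live: set[str] = set()
    for action in log:
        if action.kind == "add":
            live.add(action.path)
        elif action.kind == "remove":
            live.discard(action.path)
    return live


def removable_from_log(log: list[LogAction], now: int, retention_seconds: int) -> set[str]:
    """Log-based path: files removed longer ago than the retention window."""
    removed_at: dict[str, int] = {}
    for action in log:
        if action.kind == "remove":
            removed_at[action.path] = action.timestamp
        elif action.kind == "add":
            removed_at.pop(action.path, None)  # a re-added path is live again
    cutoff = now - retention_seconds
    return {path for path, ts in removed_at.items() if ts <= cutoff}


def removable_from_listing(
    listing: dict[str, int], log: list[LogAction], now: int, retention_seconds: int
) -> set[str]:
    """Full-scan path: any old enough file on storage that the table does not reference."""
    live = live_files(log)
    cutoff = now - retention_seconds
    return {path for path, mtime in listing.items() if path not in live and mtime <= cutoff}


if __name__ == "__main__":
    log = [
        LogAction("add", "a.parquet", 0),
        LogAction("add", "b.parquet", 10),
        LogAction("remove", "a.parquet", 20),
    ]
    # orphan.parquet was written by an aborted transaction and is absent from the log.
    listing = {"a.parquet": 0, "b.parquet": 10, "orphan.parquet": 5}
    print(removable_from_log(log, now=1000, retention_seconds=100))
    print(removable_from_listing(listing, log, now=1000, retention_seconds=100))
```

In this model the log-based path never touches the directory listing, which is why it is cheaper, while the full scan remains necessary from time to time to catch files the log does not know about.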

Automated Liquid Clustering

Automated Liquid Clustering reached general availability in 2025 and is already optimizing millions of tables in production.

This process is completely workload-driven:

- First, PO analyzes telemetry from all queries on your tables, observing key metrics such as predicate columns, filter expressions, and the number and size of files read and pruned.

- Next, it performs workload modeling, identifying and testing various candidate clustering key combinations (for example, clustering on date, on customer_id, or on both).

- Finally, PO runs a cost-benefit analysis to select the single best clustering strategy that will maximize query pruning and minimize scanned data, even determining whether the table’s existing insertion order is already sufficient.
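The three steps above can be sketched as a toy selection routine. Everything here is an assumption made for illustration: the function name `choose_clustering_keys`, the scoring formula, and the flat `recluster_cost` are invented, and the real cost model is not described in this post.

```python
from itertools import combinations
from typing import Optional


def choose_clustering_keys(
    query_filters: list[frozenset[str]],
    recluster_cost: float,
    max_keys: int = 2,
) -> Optional[tuple[str, ...]]:
    """Pick the candidate key set with the best net benefit, or None to keep the current layout.

    query_filters: one entry per observed query, holding the columns it filters on.
    recluster_cost: hypothetical flat cost of rewriting the table layout.
    """
    columns = sorted(set().union(*query_filters))
    best_keys: Optional[tuple[str, ...]] = None
    best_net = 0.0  # a candidate must beat "do nothing"
    for size in range(1, max_keys + 1):
        for keys in combinations(columns, size):
            # Toy benefit: each query contributes the share of clustering keys it filters on.
            benefit = sum(len(filters & set(keys)) / len(keys) for filters in query_filters)
            net = benefit - recluster_cost
            if net > best_net:
                best_keys, best_net = keys, net
    return best_keys


if __name__ == "__main__":
    workload = (
        [frozenset({"date"})] * 6
        + [frozenset({"date", "customer_id"})] * 3
        + [frozenset({"region"})]
    )
    print(choose_clustering_keys(workload, recluster_cost=2.0))
    print(choose_clustering_keys(workload, recluster_cost=10.0))
```

When the cost outweighs every candidate's benefit, the routine returns `None`, mirroring the case where the existing insertion order is judged sufficient.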

You get fast queries with zero manual tuning. By automatically analyzing the workload and applying the optimal data layout, PO clustering removes the complex work of key selection and ensures that your tables remain highly performant as your query patterns evolve.

Platform-wide coverage

Predictive optimization has moved beyond traditional tables to support a broader part of the Databricks platform.

- PO is now seamlessly integrated with Lakeflow Spark Declarative Pipelines (SDP), bringing autonomous background maintenance to both materialized views and streaming tables.

- PO works on both managed Delta and Iceberg tables.

- PO is enabled by default for all new Unity Catalog-managed tables, workspaces, and accounts.

This ensures autonomous maintenance across your entire data estate rather than isolated optimization of individual tables.

What’s coming next in 2026?

We are committed to providing features that replace manual table tuning with automated maintenance. In parallel, we plan to go beyond physical table health and address total data lifecycle intelligence: automated storage cost savings, data lifecycle management, and row deletion. We are also prioritizing enhanced observability, integrating predictive optimization insights into common table operations and the Data Governance Hub to provide clear visibility into PO operations and their ROI.

Auto-TTL (Automatic Row Deletion)

Managing data retention and controlling storage costs is an important, yet often manual task. We’re excited to introduce Auto-TTL, a new predictive optimization capability that fully automates row deletion. Using this feature, you will be able to set a simple time-to-live policy on any UC managed table directly with a single command.

Once the policy is set, predictive optimization takes care of the rest. It automates the entire two-step process by first running a DELETE operation to soft-delete the expired rows and then running VACUUM to permanently delete them from physical storage.

Contact your account team today to try it in private preview!
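The two-step process can be pictured with a toy in-memory model. The `ToyTable` class and its methods are hypothetical and exist only to show why both steps are needed; the actual command for setting a TTL policy is not part of this sketch.

```python
from dataclasses import dataclass, field


@dataclass
class ToyTable:
    """In-memory stand-in for a table; rows are (row_id, event_time) pairs."""

    live_rows: list[tuple[str, int]] = field(default_factory=list)
    # Rows that queries no longer see but that still occupy storage.
    soft_deleted: list[tuple[str, int]] = field(default_factory=list)

    def delete_expired(self, now: int, ttl: int) -> int:
        """Step 1 (DELETE): hide rows older than the TTL from readers."""
        cutoff = now - ttl
        expired = [row for row in self.live_rows if row[1] < cutoff]
        self.live_rows = [row for row in self.live_rows if row[1] >= cutoff]
        self.soft_deleted.extend(expired)
        return len(expired)

    def vacuum(self) -> int:
        """Step 2 (VACUUM): physically remove the soft-deleted rows."""
        removed = len(self.soft_deleted)
        self.soft_deleted.clear()
        return removed


if __name__ == "__main__":
    table = ToyTable(live_rows=[("r1", 10), ("r2", 50), ("r3", 95)])
    print(table.delete_expired(now=100, ttl=30))  # rows older than time 70 expire
    print(table.vacuum())
    print(table.live_rows)
```

After step 1 the expired rows are invisible to queries but still consume storage; only step 2 frees the space, which is why the feature chains both operations.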

Enhanced observability

Better predictive optimization observability

You will be able to track the direct impact and ROI of predictive optimization in the new Data Governance Hub. This overview dashboard will come out of the box with a centralized view into PO operations, revealing the key metrics that determine its value.

Use it to see what PO is doing under the hood, including clear views of bytes compacted, bytes clustered by liquid clustering, bytes vacuumed, and bytes analyzed. Most importantly, the Hub converts these actions into direct business value by showing your estimated storage cost savings. This will make it easier than ever to understand and communicate the positive impact of PO on both your storage costs and query performance.

In the expanded description, you will also be able to see the reasons why predictive optimization skipped an optimization (for example, the table is already well clustered or is too small to benefit from compaction).

Additionally, we have added the ability to view column selections for data skipping and automatic liquid clustering in the PO system table.

Contact your account team to try the Data Governance Hub today in private preview!

Improved table-level storage observability

To provide greater clarity into your storage footprint, we will introduce enhanced observability features for predictive optimization. You’ll be able to monitor the health and growth of your tables through high-level metrics like file count and storage growth. By bringing these insights directly to the table level, we’re making it easier to visualize the impact of automated maintenance and identify new opportunities to reduce costs and streamline your data estate.

Get started with predictive optimization

Predictive optimization for Unity Catalog managed tables is available today and is enabled by default for new workloads.

When enabled, customers benefit from autonomous data layout management, faster VACUUM execution, workload-aware automated statistics, and automated liquid clustering.

You can also explore Auto-TTL and predictive optimization observability (Data Governance Hub) through a private preview by contacting your account team.
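For catalogs or schemas created before the default changed, predictive optimization can be switched on explicitly. The sketch below builds the corresponding SQL statements; the helper name `predictive_optimization_statement` and the object names are hypothetical, and you should confirm the exact syntax against the current Databricks SQL reference before running it.

```python
def predictive_optimization_statement(level: str, name: str, mode: str = "ENABLE") -> str:
    """Build an ALTER statement that sets predictive optimization on a catalog or schema.

    level: "CATALOG" or "SCHEMA".
    name:  placeholder object name, for example "main" or "main.sales".
    mode:  "ENABLE", "DISABLE", or "INHERIT" (take the setting from the parent object).
    """
    level, mode = level.upper(), mode.upper()
    if level not in {"CATALOG", "SCHEMA"}:
        raise ValueError(f"unsupported level: {level}")
    if mode not in {"ENABLE", "DISABLE", "INHERIT"}:
        raise ValueError(f"unsupported mode: {mode}")
    return f"ALTER {level} {name} {mode} PREDICTIVE OPTIMIZATION"


if __name__ == "__main__":
    print(predictive_optimization_statement("catalog", "main"))
    print(predictive_optimization_statement("schema", "main.sales", "inherit"))
```

Setting a schema to `INHERIT` lets it follow whatever is configured on its catalog, which keeps the configuration in one place.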