The development of large language models (LLMs) has been defined by the pursuit of raw scale. While increasing parameter counts into the trillions initially improved performance, it also introduced significant infrastructure overhead and diminishing marginal returns. The release of the Qwen3.5 Medium model series signals a shift in Alibaba’s Qwen approach, one that prioritizes architectural efficiency and high-quality data over traditional scaling.

The series consists of Qwen3.5-Flash, Qwen3.5-35B-A3B, Qwen3.5-122B-A10B, and Qwen3.5-27B. These models demonstrate that strategic architectural choices and reinforcement learning (RL) can reach frontier-level intelligence with significantly lower compute requirements.

Efficiency Breakthrough: 35B overtakes 235B

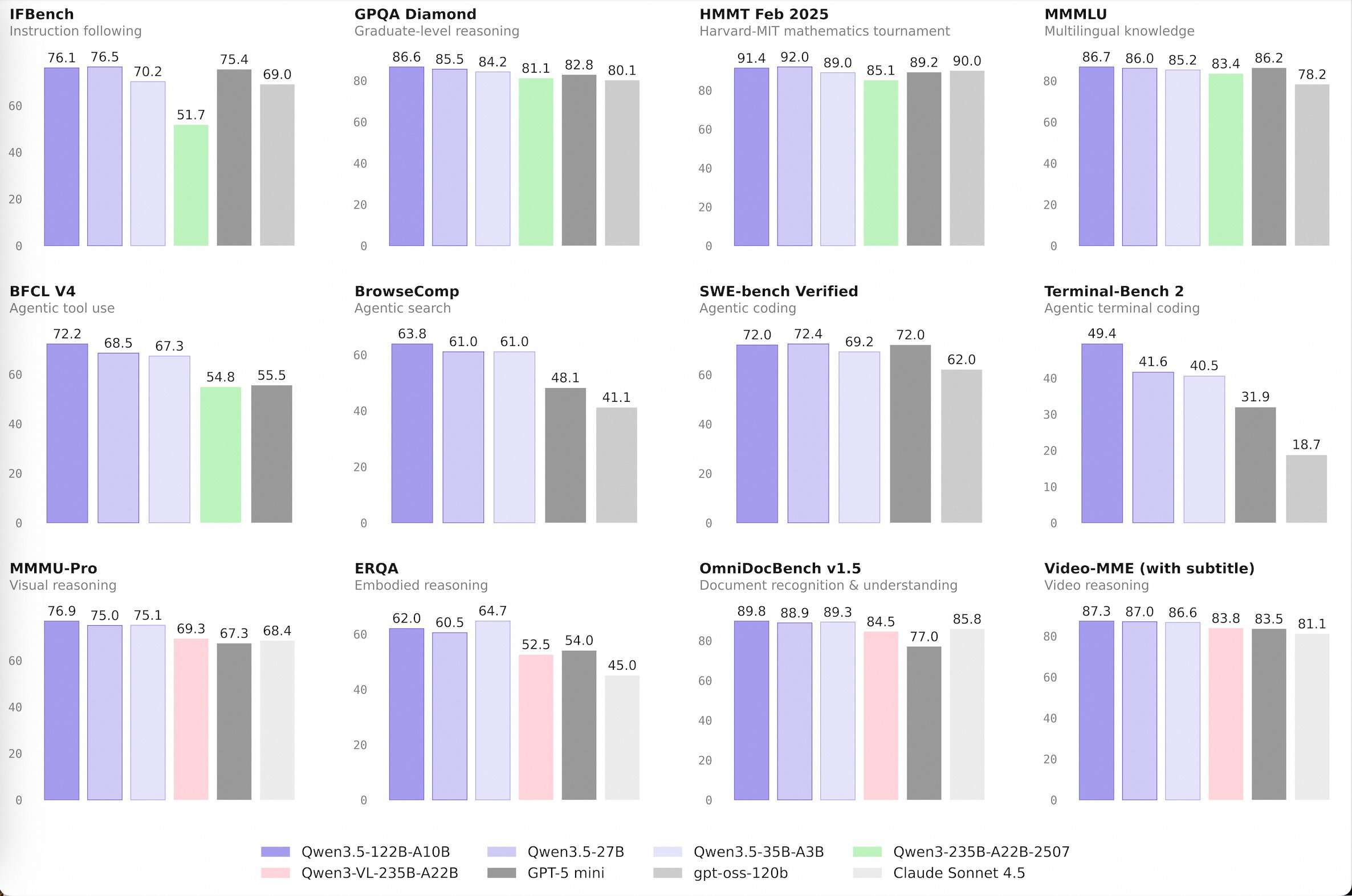

The most notable technical milestone is Qwen3.5-35B-A3B, which now outperforms the older Qwen3-235B-A22B-2507 as well as the vision-capable Qwen3-VL-235B-A22B.

The ‘A3B’ suffix is the key metric: it denotes the active parameters in the model’s Mixture-of-Experts (MoE) architecture. Although the model has 35 billion parameters in total, it activates only 3 billion for each token during inference. The fact that a model with 3B active parameters can outperform a predecessor with 22B active parameters points to a large leap in intelligence per active parameter.
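The routing idea behind the ‘A3B’ label can be illustrated with a generic top-k MoE layer. This is a minimal sketch with toy experts and hypothetical sizes (8 experts, 2 active per token); Qwen3.5’s real expert count, routing function, and any shared experts are not described in this article. The last two lines compute the active-parameter share implied by the model names.

```python
import math
import random


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(x, router_weights, experts, k):
    """Route one token through the top-k experts of a toy MoE layer.

    x: token hidden state (list of floats)
    router_weights: one router vector per expert
    experts: callables mapping a hidden state to a hidden state
    k: number of experts activated for this token
    """
    # Router logit per expert: dot product of its router vector with x.
    logits = [sum(w * xi for w, xi in zip(row, x)) for row in router_weights]
    # Select the k highest-scoring experts; the rest are never evaluated.
    top = sorted(range(len(experts)), key=lambda i: logits[i], reverse=True)[:k]
    # Gate weights renormalized over the selected experts only.
    gates = softmax([logits[i] for i in top])
    out = [0.0] * len(x)
    for g, i in zip(gates, top):
        y = experts[i](x)
        out = [o + g * yi for o, yi in zip(out, y)]
    return out, top, gates


def active_fraction(active_params, total_params):
    """Share of parameters that participate in computing one token."""
    return active_params / total_params


# Hypothetical toy sizes, not Qwen3.5's real configuration.
random.seed(0)
DIM, NUM_EXPERTS, K = 4, 8, 2
router = [[random.uniform(-1, 1) for _ in range(DIM)] for _ in range(NUM_EXPERTS)]
calls = [0] * NUM_EXPERTS  # counts how often each expert actually runs


def make_expert(idx):
    def expert(x):
        calls[idx] += 1
        return [(idx + 1) * xi for xi in x]  # toy expert: scale by idx + 1
    return expert


experts = [make_expert(i) for i in range(NUM_EXPERTS)]
out, chosen, gates = moe_forward([0.5, -0.2, 0.1, 0.9], router, experts, K)
print("experts evaluated:", sum(calls), "of", NUM_EXPERTS)  # 2 of 8
print(f"35B-A3B active share: {active_fraction(3e9, 35e9):.3f}")  # 0.086
print(f"235B-A22B active share: {active_fraction(22e9, 235e9):.3f}")  # 0.094
```

Only the selected experts do any work for a given token, which is why per-token compute tracks the active count rather than the total.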

This efficiency is driven by a hybrid architecture that combines Gated Delta Networks (a form of linear attention) with standard gated attention blocks. This design enables high-throughput decoding and a small memory footprint, making high-performance AI more accessible on standard hardware.
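To see why linear attention keeps memory flat, here is a sketch of the gated delta rule as described in the Gated DeltaNet paper (Yang et al.), for a single head with scalar gates. It is a simplified illustration under stated assumptions: it omits the chunked parallel training form, normalization, short convolutions, and output gating, and it says nothing about how Qwen3.5 actually interleaves these layers with full attention.

```python
import math


def gated_delta_step(S, q, k, v, alpha, beta):
    """One recurrent step of the gated delta rule for a single head.

    S: state matrix (d_v rows x d_k cols) as lists of floats
    q, k: query/key vectors of length d_k (k assumed L2-normalized)
    v: value vector of length d_v
    alpha: decay gate in (0, 1]; beta: write strength in (0, 1]
    Computes S_new = alpha * S (I - beta k k^T) + beta v k^T and o = S_new q.
    """
    d_v, d_k = len(S), len(S[0])
    # What the current state recalls for key k: S k.
    Sk = [sum(S[i][j] * k[j] for j in range(d_k)) for i in range(d_v)]
    # Decay old memory, erase the old association for k, then write v at k.
    S_new = [
        [alpha * (S[i][j] - beta * Sk[i] * k[j]) + beta * v[i] * k[j] for j in range(d_k)]
        for i in range(d_v)
    ]
    o = [sum(S_new[i][j] * q[j] for j in range(d_k)) for i in range(d_v)]
    return S_new, o


def l2_normalize(x):
    n = math.sqrt(sum(xi * xi for xi in x))
    return [xi / n for xi in x]


d_k, d_v = 3, 2
S = [[0.0] * d_k for _ in range(d_v)]  # fixed-size state, independent of sequence length
key = l2_normalize([1.0, 2.0, 2.0])

# Write value [1, 0] under `key`, then overwrite it with [0, 5].
S, _ = gated_delta_step(S, key, key, [1.0, 0.0], alpha=1.0, beta=1.0)
S, o = gated_delta_step(S, key, key, [0.0, 5.0], alpha=1.0, beta=1.0)
print(o)  # approximately [0, 5]: the newer value replaced the older one
print("state floats:", d_v * d_k)
```

The state S has d_v × d_k entries no matter how many tokens have been processed, whereas a full-attention KV cache grows with every token. The delta-rule erase term also lets a new value overwrite an old one: plain additive linear attention (S += v kᵀ) would return roughly [1, 5] for the same query, mixing both writes.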

Qwen3.5-Flash: Optimized for Production

Qwen3.5-Flash is the hosted production version of the 35B-A3B model. It has been built specifically for developers who need low-latency performance in agentic workflows.

- 1M context length: With a 1-million-token context window by default, Flash reduces the need for complex RAG (retrieval-augmented generation) pipelines when handling large document sets or codebases.

- Official built-in tools: The model natively supports tool use and function calling, allowing it to interface directly with APIs and databases with high precision.
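A tool-calling round trip typically looks like the following. This minimal sketch assumes the hosted Flash API accepts an OpenAI-style `tools` schema and returns `tool_calls` in that shape; the model id `qwen3.5-flash`, the `get_order_status` tool, and the mocked reply are all hypothetical, and no network request is made. Check the official API documentation for the actual endpoint and model names.

```python
import json


# Hypothetical local tool the model may ask to call.
def get_order_status(order_id: str) -> dict:
    fake_db = {"A100": "shipped", "B200": "processing"}
    return {"order_id": order_id, "status": fake_db.get(order_id, "unknown")}


TOOLS = {"get_order_status": get_order_status}


def build_request(user_msg, model="qwen3.5-flash"):  # model id is an assumption
    """Build an OpenAI-style chat request that advertises one tool."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": user_msg}],
        "tools": [{
            "type": "function",
            "function": {
                "name": "get_order_status",
                "description": "Look up the shipping status of an order.",
                "parameters": {
                    "type": "object",
                    "properties": {"order_id": {"type": "string"}},
                    "required": ["order_id"],
                },
            },
        }],
    }


def dispatch_tool_calls(message):
    """Run every tool call in an assistant message; return tool-role messages."""
    results = []
    for call in message.get("tool_calls") or []:
        fn = TOOLS[call["function"]["name"]]
        args = json.loads(call["function"]["arguments"])  # arguments arrive as a JSON string
        results.append({
            "role": "tool",
            "tool_call_id": call["id"],
            "content": json.dumps(fn(**args)),
        })
    return results


# Mocked assistant reply in the OpenAI-style shape (not a real API response).
mock_reply = {
    "role": "assistant",
    "content": None,
    "tool_calls": [{
        "id": "call_1",
        "type": "function",
        "function": {"name": "get_order_status", "arguments": '{"order_id": "A100"}'},
    }],
}

request = build_request("Where is order A100?")
tool_messages = dispatch_tool_calls(mock_reply)
print(tool_messages)
```

In a real loop, the tool messages would be appended to the conversation and sent back so the model can compose its final answer.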

Reasoning-Heavy Agent Scenarios

The Qwen3.5-122B-A10B and Qwen3.5-27B models are designed for agentic tasks: scenarios in which a model must plan, reason, and execute multi-step workflows. These models narrow the gap between open-weight options and proprietary frontier models.

The Alibaba Qwen team trained these models with a four-stage post-training pipeline that includes a long chain-of-thought (CoT) cold start and reasoning-based RL. This allows the 122B-A10B model, with only 10 billion active parameters, to maintain logical consistency on long-horizon tasks, rivaling the performance of much larger dense models.

Key Takeaways

- Architectural Efficiency (MoE): The Qwen3.5-35B-A3B model, with only 3 billion active parameters (A3B), outperforms the previous-generation 235B model. This demonstrates that Mixture-of-Experts (MoE) architectures, combined with improved data quality and reinforcement learning (RL), can deliver ‘frontier-level’ intelligence at a fraction of the compute cost.

- Production-Ready Deployment (Flash): Qwen3.5-Flash is the hosted production version aligned with the 35B model. It is optimized for high-throughput, low-latency applications, making it a ‘workhorse’ for developers moving from prototyping to enterprise-scale deployment.

- Huge context window: The series features a 1M context length by default. This enables long-context tasks such as full-repository code analysis or large-scale document retrieval without complex RAG (Retrieval-Augmented Generation) ‘chunking’ strategies, significantly simplifying developer workflows.

- Native tool use and agentic capabilities: Unlike models that require extensive prompt engineering for external interactions, Qwen3.5 includes official built-in tools. This native support for function calling and API interactions makes it highly effective in ‘agent’ scenarios where the model must plan and execute multi-step workflows.

- The ‘Medium’ sweet spot: By focusing on models ranging from 27B to 122B (A10B active), Alibaba is targeting the industry’s ‘Goldilocks’ zone. These models are small enough to run on private clouds or local infrastructure, while maintaining the complex reasoning and logical consistency typically reserved for large-scale, closed-source proprietary models.
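To make the ‘sweet spot’ claim more concrete, here is a rough back-of-envelope sizing sketch. It assumes weight memory scales with total parameters, that per-token forward compute is about 2 FLOPs per active parameter (a common rule of thumb that ignores attention cost), and that Qwen3.5-27B is dense because its name has no A-suffix. It also ignores KV cache, activations, and quantization overhead, so treat the figures as rough lower bounds rather than deployment requirements.

```python
# Assumed (total params, active params); 27B treated as dense (no A-suffix).
MODELS = {
    "Qwen3.5-27B": (27e9, 27e9),
    "Qwen3.5-35B-A3B": (35e9, 3e9),
    "Qwen3.5-122B-A10B": (122e9, 10e9),
}
BYTES_PER_PARAM = {"bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(total_params, dtype="bf16"):
    """Memory for the weights alone: every expert must be resident."""
    return total_params * BYTES_PER_PARAM[dtype] / 1e9


def forward_gflops_per_token(active_params):
    """Rule-of-thumb forward cost: ~2 FLOPs per active parameter per token."""
    return 2 * active_params / 1e9


for name, (total, active) in MODELS.items():
    print(
        f"{name:20s} bf16 weights ~{weight_memory_gb(total):6.1f} GB, "
        f"int4 ~{weight_memory_gb(total, 'int4'):5.1f} GB, "
        f"~{forward_gflops_per_token(active):4.0f} GFLOPs/token"
    )
```

The output highlights the MoE trade-off: under these assumptions the 35B-A3B model needs more weight memory than the 27B model (70 GB vs 54 GB in bf16) but roughly a ninth of its per-token compute.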
