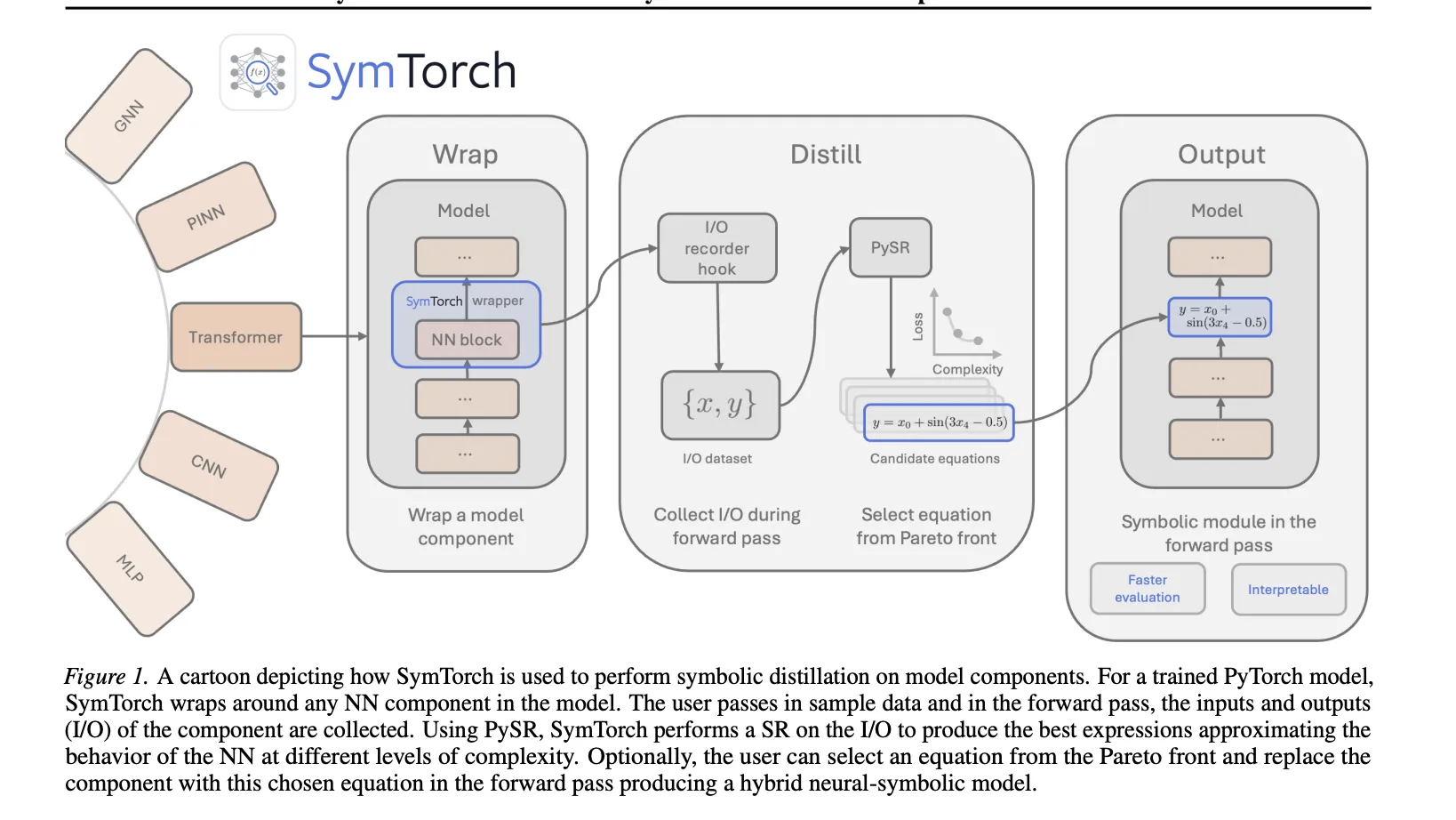

Could symbolic regression be the key to transforming opaque deep learning models into interpretable, closed-form mathematical equations? Say you have trained your deep learning model. It works. But do you know what it has actually learned? A team of researchers at the University of Cambridge has proposed ‘SymTorch’, a library designed to integrate symbolic regression (SR) into deep learning workflows. It lets researchers approximate neural network components with closed-form mathematical expressions, facilitating functional interpretation and potentially faster inference.

Core Mechanism: The Wrap-Distill-Switch Workflow

SymTorch simplifies the engineering required to extract symbolic equations from trained models by automating data movement and hook management.

- Wrap: Users apply the `SymbolicModel` wrapper to any `nn.Module` or callable function.

- Distill: The library registers forward hooks to record input and output activations during the forward pass. These are cached and transferred from the GPU to the CPU for symbolic regression via PySR.

- Switch: Once distilled, the original neural weights can be replaced in the forward pass with the discovered equation using `switch_to_symbolic`.
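The three steps can be illustrated with a framework-free sketch. This is not SymTorch's API: `RecordingWrapper`, `fit_line`, and `switch_to_surrogate` are hypothetical names, and a closed-form least-squares line fit stands in for the symbolic regression search. In PyTorch, the recording step would typically be done with forward hooks rather than inside the call itself.

```python
class RecordingWrapper:
    """Hypothetical wrapper: records a callable's inputs/outputs, then swaps in a surrogate."""

    def __init__(self, fn):
        self.fn = fn            # the original "black box" component
        self.inputs = []        # cached inputs seen during forward calls
        self.outputs = []       # cached outputs seen during forward calls
        self.surrogate = None   # closed-form replacement, set after fitting

    def __call__(self, x):
        if self.surrogate is not None:
            return self.surrogate(x)   # symbolic path, nothing is recorded
        y = self.fn(x)
        self.inputs.append(x)          # record the input/output pair
        self.outputs.append(y)
        return y

    def switch_to_surrogate(self, surrogate):
        self.surrogate = surrogate


def fit_line(xs, ys):
    """Ordinary least squares for y = a*x + b (a stand-in for symbolic regression)."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    a = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    b = my - a * mx
    return a, b


# 1. Wrap an opaque component (here a plain function that happens to be linear).
black_box = RecordingWrapper(lambda x: 3.0 * x + 1.0)

# 2. Distill: run forward passes to collect data, then fit a closed form.
for x in [0.0, 1.0, 2.0, 3.0]:
    black_box(x)
a, b = fit_line(black_box.inputs, black_box.outputs)

# 3. Switch: later calls evaluate the fitted equation instead of the original.
black_box.switch_to_surrogate(lambda x: a * x + b)
result = black_box(10.0)
```

Keeping the original callable inside the wrapper means the switch is reversible in principle: clearing the surrogate would restore the original forward path.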

The library interfaces with PySR, which uses a multi-population genetic algorithm to find equations that balance accuracy and complexity along a Pareto front. The ‘best’ equation is chosen by maximizing the fractional drop in log mean absolute error relative to the increase in complexity.
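The selection rule described above can be sketched in a few lines. The candidate list is made up for illustration, and `best_by_score` is a hypothetical helper that implements only the score as stated in the text (drop in log error per unit of added complexity); the actual selection logic in PySR may apply additional filters.

```python
import math

def best_by_score(front):
    """Pick the equation with the largest drop in log(error) per unit of added complexity.

    `front` is a list of (complexity, mean_abs_error, equation) tuples sorted by
    increasing complexity, i.e. candidates along a Pareto front.
    """
    best, best_score = None, -math.inf
    for prev, curr in zip(front, front[1:]):
        d_complexity = curr[0] - prev[0]
        # Score: how much log-error falls for each extra unit of complexity.
        score = -(math.log(curr[1]) - math.log(prev[1])) / d_complexity
        if score > best_score:
            best, best_score = curr, score
    return best, best_score

# Hypothetical candidates: (complexity, MAE, expression string).
front = [
    (1, 2.0, "c"),
    (3, 1.5, "a * x"),
    (5, 0.01, "a * x + b * x**2"),
    (9, 0.009, "a * x + b * x**2 + c * sin(x)"),
]

chosen, score = best_by_score(front)
print(chosen[2], round(score, 3))
```

In this toy front the quadratic wins: it is the point where error falls sharply, while the more complex candidate after it buys almost no extra accuracy.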

Case Study: Accelerating LLM Inference

A primary application explored in this research is replacing Multi-Layer Perceptron (MLP) layers in Transformer models with symbolic surrogates to improve throughput.

Implementation Details

Due to the high dimensionality of LLM activations, the research team employed Principal Component Analysis (PCA) to compress the inputs and outputs before running SR. For the Qwen2.5-1.5B model, they selected 32 principal components for the inputs and 8 principal components for the outputs across three target layers.
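The compress-then-fit idea can be illustrated on toy data. The sketch below is a minimal pure-Python PCA that keeps a single component (found by power iteration) of 3-D points. The data, function names, and dimensions are assumptions chosen for brevity; the study kept 32 input and 8 output components, and a real pipeline would more likely use a numerical library.

```python
import math
import random

def first_component(rows, iters=200):
    """Return (mean, unit direction) of the leading principal component via power iteration."""
    n, d = len(rows), len(rows[0])
    mean = [sum(r[j] for r in rows) / n for j in range(d)]
    centered = [[r[j] - mean[j] for j in range(d)] for r in rows]
    cov = [[sum(c[i] * c[j] for c in centered) / (n - 1) for j in range(d)]
           for i in range(d)]
    v = [1.0] * d
    for _ in range(iters):
        w = [sum(cov[i][j] * v[j] for j in range(d)) for i in range(d)]
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    return mean, v

def compress(row, mean, v):
    """Project a d-dimensional row onto the component: d numbers -> 1 number."""
    return sum((x - m) * c for x, m, c in zip(row, mean, v))

def reconstruct(z, mean, v):
    """Map the 1-D code back to the original d-dimensional space."""
    return [m + z * c for m, c in zip(mean, v)]

# Toy "activations": 3-D points lying close to the direction (1, 2, 2) / 3.
random.seed(0)
direction = [1 / 3, 2 / 3, 2 / 3]
rows = []
for _ in range(200):
    t = random.uniform(-1.0, 1.0)
    rows.append([t * c + random.uniform(-0.01, 0.01) for c in direction])

mean, v = first_component(rows)
codes = [compress(r, mean, v) for r in rows]       # a surrogate would be fit on these codes
restored = [reconstruct(z, mean, v) for z in codes]
max_err = max(abs(a - b) for r, s in zip(rows, restored) for a, b in zip(r, s))
print(f"max reconstruction error: {max_err:.4f}")
```

Whatever variance the discarded components carried is lost before any equation is fitted, so the reconstruction error sets a floor on how faithful the surrogate can be.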

Performance Trade-offs

The intervention resulted in an 8.3% increase in token throughput. However, this gain came with a non-trivial increase in perplexity, which was driven primarily by the PCA dimensionality reduction rather than by the symbolic approximation itself.

| Metric | Baseline (Qwen2.5-1.5B) | Symbolic Surrogate |
| --- | --- | --- |
| Perplexity (WikiText-2) | 10.62 | 13.76 |
| Throughput (tokens/s) | 4878.82 | 5281.42 |
| Average Latency (ms) | 209.89 | 193.89 |
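The relative changes implied by the table can be checked directly from its numbers. The short script below does only that arithmetic; the dictionary keys are just labels for the three rows.

```python
baseline = {"perplexity": 10.62, "throughput": 4878.82, "latency_ms": 209.89}
surrogate = {"perplexity": 13.76, "throughput": 5281.42, "latency_ms": 193.89}

def pct_change(old, new):
    """Relative change from old to new, in percent."""
    return 100.0 * (new - old) / old

changes = {k: round(pct_change(baseline[k], surrogate[k]), 1) for k in baseline}
print(changes)
# {'perplexity': 29.6, 'throughput': 8.3, 'latency_ms': -7.6}
```

The throughput gain of about 8.3% matches the reported figure, while perplexity rises by roughly 30% and average latency falls by about 7.6%.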

GNNs and PINNs

SymTorch was validated on its ability to recover known physical laws from latent representations in scientific models.

- Graph Neural Networks (GNNs): By training GNNs on particle dynamics, the research team used SymTorch to recover empirical force laws, such as gravity ($1/r^2$) and spring forces, directly from the edge messages.

- Physics-Informed Neural Networks (PINNs): The library successfully distilled the analytical solution to a 1-D heat equation from a trained PINN. The PINN’s inductive bias allowed it to achieve a mean squared error (MSE) of $7.40 \times 10^{-6}$.

- LLM Arithmetic Analysis: Symbolic distillation was used to inspect how models such as Llama-3.2-1B perform 3-digit addition and multiplication. The distilled equations revealed that while the models are often correct, they rely on internal heuristics that introduce systematic numerical errors.
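For the particle-dynamics case above, a much simpler stand-in for symbolic regression shows what "recovering a force law" means in practice. The sketch below recovers the exponent of an inverse-square law from synthetic samples with a log-log least-squares fit. The data, the constant 2.5, and the function name are illustrative assumptions; this is not the procedure used in the study, since symbolic regression searches over expression forms rather than fitting one fixed power law.

```python
import math

def fit_power_law(rs, forces):
    """Fit F = k * r**p by linear least squares on (log r, log F)."""
    xs = [math.log(r) for r in rs]
    ys = [math.log(f) for f in forces]
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    p = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sum((x - mx) ** 2 for x in xs)
    k = math.exp(my - p * mx)
    return k, p

# Synthetic "edge messages": force magnitudes from an inverse-square law with k = 2.5.
rs = [0.5 + 0.25 * i for i in range(20)]
forces = [2.5 / r**2 for r in rs]

k, p = fit_power_law(rs, forces)
print(f"F ~ {k:.3f} * r^{p:.3f}")
```

With noise-free data the fitted exponent lands on -2 up to floating-point error; real edge messages would be noisier and may only match the law up to a linear transformation.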

Key Takeaways

- Automated symbolic distillation: SymTorch is a library that automates the process of replacing complex neural network components with interpretable, closed-form mathematical equations by wrapping the components and collecting their input-output behavior.

- Engineering barrier removal: The library handles key engineering challenges that previously hindered the adoption of symbolic regression, including GPU-CPU data transfer, input-output caching, and seamless switching between neural and symbolic forward passes.

- LLM inference acceleration: A proof of concept demonstrated that replacing MLP layers in a Transformer model with symbolic surrogates yielded an 8.3% throughput improvement, although accompanied by some performance degradation.

- Scientific law discovery: SymTorch was successfully used to recover physical laws from Graph Neural Networks (GNNs) and the analytical solution of a 1-D heat equation from Physics-Informed Neural Networks (PINNs).

- Functional interpretation of LLMs: By distilling the end-to-end behavior of an LLM, researchers can inspect the explicit mathematical heuristics used for tasks such as arithmetic, revealing where the internal logic deviates from exact operations.


Max is an AI analyst at Silicon Valley-based MarkTechPost, actively shaping the future of technology. He teaches robotics at Brainvine, fights spam with ComplyMail, and leverages AI daily to translate complex technological advancements into clear, understandable insights.