Call center analytics plays a vital role in improving customer experience and operational efficiency. With foundation models (FMs), you can improve the quality and efficiency of call center operations and analytics. Organizations can use generative AI to assist human customer support agents and managers of contact center teams, giving them more nuanced insights that help redefine how and what questions can be asked of call center data.

While some organizations look for turnkey solutions to introduce generative AI into their operations, such as Amazon Connect Contact Lens, others build custom customer support systems using AWS services for their microservices backend. With this comes the opportunity to integrate FMs into these systems to provide AI assistance to human customer support agents and their managers.

One of the key decisions these organizations face is which model to use to power AI support and analytics in their platforms. To this end, the Generative AI Innovation Center has developed a demo application that includes a collection of use cases powered by Amazon’s latest FM family, Amazon Nova. In this post, we discuss how Amazon Nova demonstrates capabilities in conversational analytics, call classification, and other use cases often relevant to contact center solutions. We examine these capabilities for both single-call and multi-call analytics use cases.

Amazon Nova FMs for scale

Amazon Nova FMs deliver leading cost-performance, making them suitable for large-scale generative AI. These models are pre-trained on large amounts of data, enabling them to perform a wide range of language tasks accurately and efficiently while scaling to support high demand. In the context of call center analytics, Amazon Nova models can understand complex conversations, extract important information, and generate valuable insights that were previously difficult or impossible to obtain at scale. The demo application showcases the capabilities of Amazon Nova models for a variety of analytical tasks, including:

- Sentiment analysis

- Topic identification

- Vulnerable customer assessment

- Protocol adherence checking

- Interactive question answering

By using these advanced AI capabilities of Amazon Nova FMs, businesses can gain a deeper understanding of their customer interactions and make data-driven decisions to improve service quality and operational efficiency.

Solution overview

The Call Center Analytics demo application is built on a simple architecture that seamlessly integrates Amazon Bedrock and Amazon Nova to enable end-to-end call center analytics for both single-call and multi-call analytics. The following figure shows this architecture.

- Amazon Bedrock – Provides access to Amazon Nova FMs, enabling powerful natural language processing capabilities

- Amazon Athena – Used to query call data stored in a structured format, allowing efficient data retrieval and analysis

- Amazon Transcribe – Fully managed automatic speech recognition (ASR) service

- Amazon Simple Storage Service (Amazon S3) – Object storage service offering industry-leading scalability, data availability, security, and performance

- Streamlit – Powers the web-based UI, providing an intuitive and interactive experience for users

The application is divided into two main components: single call analytics and multi-call analytics. These components work together to provide a comprehensive solution that combines post-call analysis with historical data insights.

Single Call Analytics

The single call analytics functionality of the application provides detailed analysis of individual customer service calls. This feature is implemented in the Single_Call_Analytics.py script. In this section, we explore some of the key capabilities.

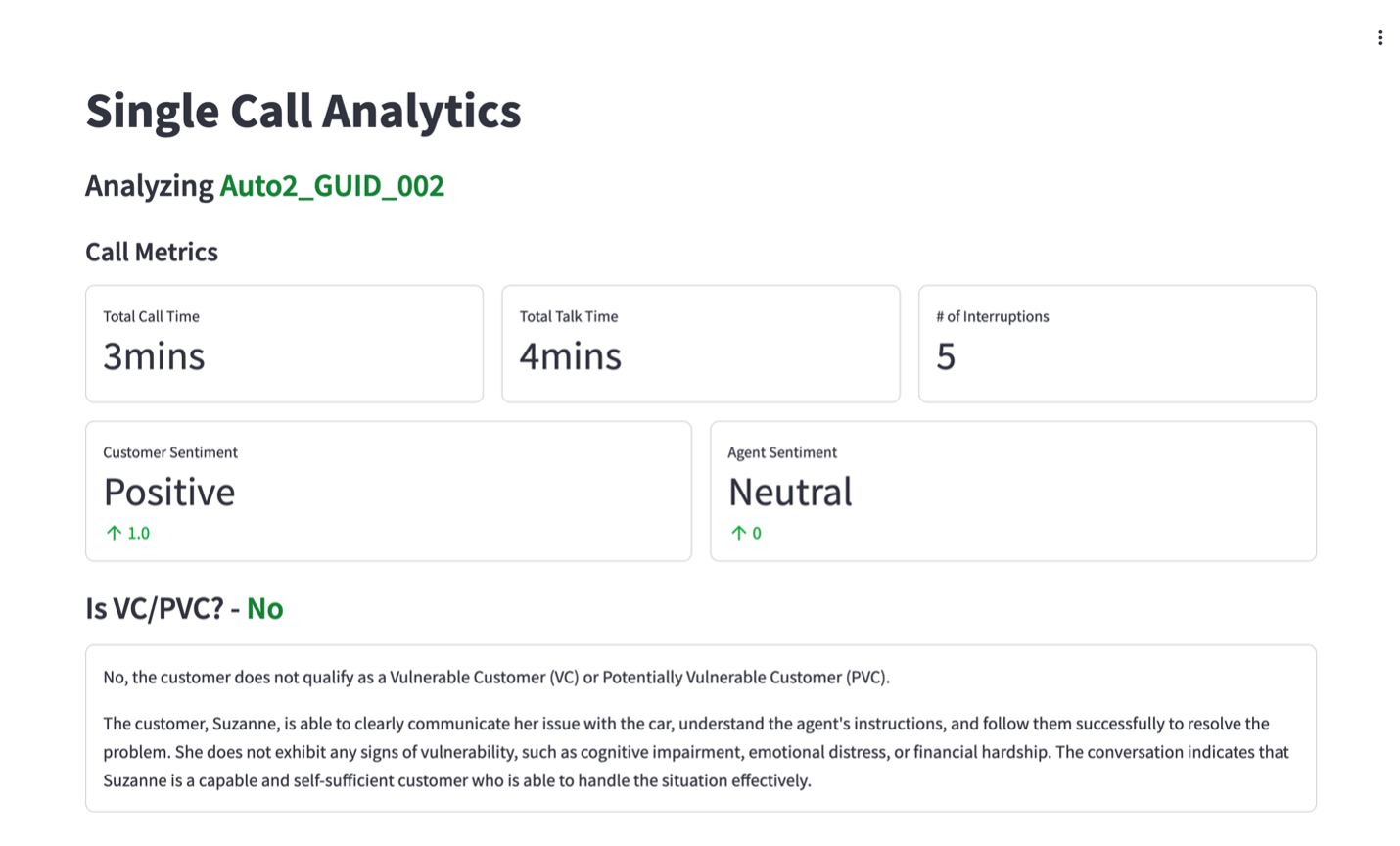

Sentiment Analysis and Vulnerable Customer Evaluation

The solution uses Amazon Nova FMs to gain insight into both customer and agent sentiment, as shown in the following screenshot.

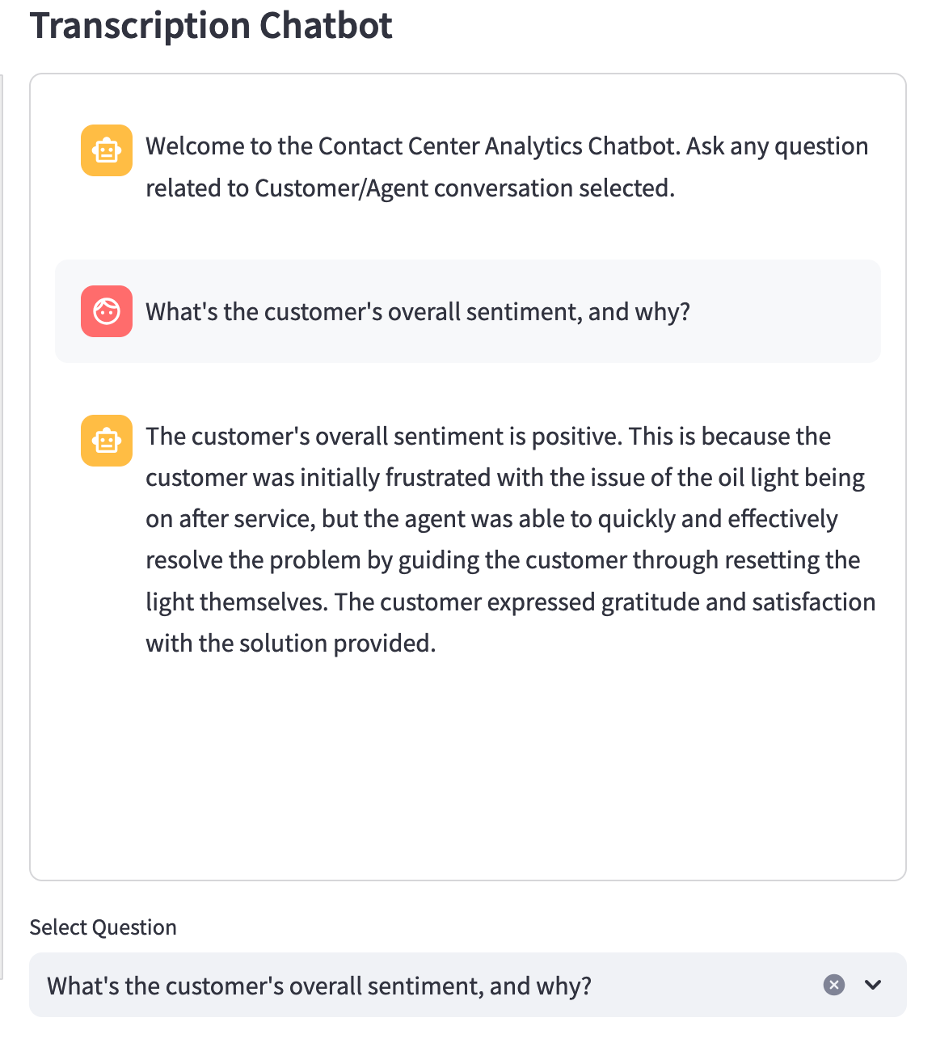

Using the chatbot feature, users can ask why the sentiment was classified that way and get supporting context from the transcript. This gives a better understanding of a sentiment category by quickly surfacing supporting phrases from the transcript itself, which can then be used for other analyses.

A vulnerable or potentially vulnerable customer is a person who, because of their personal circumstances, is particularly susceptible to financial loss or requires special consideration in financial services. The application assesses whether the caller can be considered vulnerable or potentially vulnerable by passing the transcript of the selected call to the model together with a prompt that asks for this classification.

In this prompt, the Amazon Nova FM uses a general definition of a vulnerable or potentially vulnerable customer to make the evaluation. However, if a business has its own definition of vulnerable or potentially vulnerable customers, it can supply this custom definition in the prompt for the FM to use in the classification. This feature helps call center managers identify potentially sensitive situations and ensure that vulnerable customers receive appropriate care and attention, and it provides an explanation of why the customer was identified as such.
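The prompt used by the demo application is not reproduced here. As a rough illustration only, the following sketch shows one way such an assessment could be structured. The prompt wording, the `DEFAULT_DEFINITION` text, the JSON reply format, and the function names are all assumptions made for this example, and `invoke_model` stands in for whatever function sends a prompt to an Amazon Nova model and returns its text reply.

```python
import json
from typing import Callable

# Hypothetical general-purpose definition; a business could substitute its own.
DEFAULT_DEFINITION = (
    "A vulnerable customer is someone who, due to their personal circumstances, "
    "is especially susceptible to financial loss or needs special consideration."
)


def build_vulnerability_prompt(transcript: str, definition: str = DEFAULT_DEFINITION) -> str:
    """Assemble an illustrative classification prompt (the wording is an assumption)."""
    return (
        f"Definition: {definition}\n\n"
        f"Call transcript:\n{transcript}\n\n"
        "Based on the definition, is the customer vulnerable or potentially vulnerable? "
        'Respond with JSON only: {"vulnerable": true or false, "explanation": "<one sentence>"}'
    )


def assess_vulnerability(
    transcript: str,
    invoke_model: Callable[[str], str],
    definition: str = DEFAULT_DEFINITION,
) -> dict:
    """Send the prompt through `invoke_model` and parse the JSON reply."""
    reply = invoke_model(build_vulnerability_prompt(transcript, definition))
    # Tolerate extra text around the JSON object by slicing to the outer braces.
    start, end = reply.find("{"), reply.rfind("}")
    unparsed = {"vulnerable": None, "explanation": "Model reply was not valid JSON."}
    if start == -1 or end <= start:
        return unparsed
    try:
        result = json.loads(reply[start:end + 1])
    except json.JSONDecodeError:
        return unparsed
    return {
        "vulnerable": bool(result.get("vulnerable")),
        "explanation": str(result.get("explanation", "")),
    }
```

Passing a custom `definition` argument corresponds to the case where a business prompts the FM with its own definition instead of the general one.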

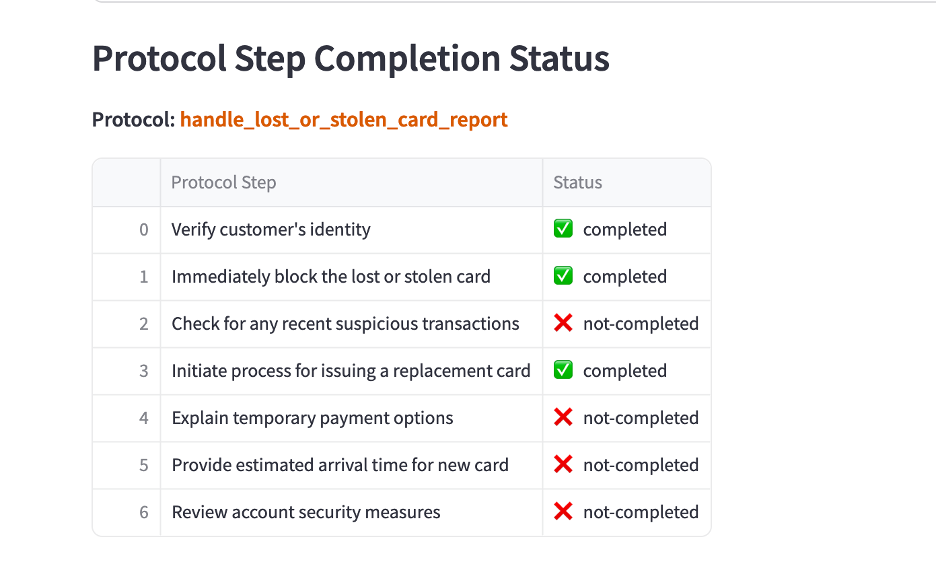

Protocol Assistance and Completion of Steps

The application uses Amazon Nova models to identify the relevant protocol for each call and check whether the agent followed the prescribed steps. The protocols are currently defined in a JSON file that is ingested locally at runtime.

The application first identifies the relevant protocol using the call transcript and the list of available protocols. Once the protocol is identified, the call transcript and the steps of that protocol are passed together to the model to check whether the agent completed each step. Results are displayed in a user-friendly format, helping managers quickly assess agent performance and adherence to guidelines.
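The application's own code is not reproduced here. The two-step flow described above could be sketched roughly as follows. The protocol JSON layout, the prompt wording, and the function names are hypothetical, and `invoke_model` is an assumed callable that sends a prompt to an Amazon Nova model and returns the reply text.

```python
import json
from typing import Callable, Optional

# Hypothetical protocol file contents; the real JSON schema is not shown in this post.
PROTOCOLS_JSON = """
{
  "Lost card": ["Verify the caller's identity", "Block the card", "Offer a replacement"],
  "Billing dispute": ["Verify the caller's identity", "Confirm the disputed charge"]
}
"""


def identify_protocol(
    transcript: str, protocols: dict, invoke_model: Callable[[str], str]
) -> Optional[str]:
    """Step 1: ask the model which protocol applies to the call."""
    names = list(protocols)
    prompt = (
        "Which one of these protocols applies to the call below? "
        f"Answer with the protocol name only.\nProtocols: {', '.join(names)}\n\n"
        f"Transcript:\n{transcript}"
    )
    reply = invoke_model(prompt).strip().lower()
    # Match the reply against known names rather than trusting free text.
    for name in names:
        if name.lower() in reply:
            return name
    return None


def check_steps(
    transcript: str, steps: list, invoke_model: Callable[[str], str]
) -> dict:
    """Step 2: ask the model whether each step of the chosen protocol was completed."""
    numbered = "\n".join(f"{i}. {step}" for i, step in enumerate(steps, 1))
    prompt = (
        "For each numbered step, state whether the agent completed it in the call below. "
        "Respond with a JSON list of true/false values, one per step, in order.\n"
        f"Steps:\n{numbered}\n\nTranscript:\n{transcript}"
    )
    reply = invoke_model(prompt)
    # json.loads raises a ValueError subclass if no well-formed list is found.
    flags = json.loads(reply[reply.find("["): reply.rfind("]") + 1])
    if len(flags) != len(steps):
        raise ValueError("Model returned a different number of results than steps")
    return {step: bool(flag) for step, flag in zip(steps, flags)}
```

Keeping identification and step checking as separate model calls mirrors the description above: the second prompt only needs the steps of the one protocol that was selected.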

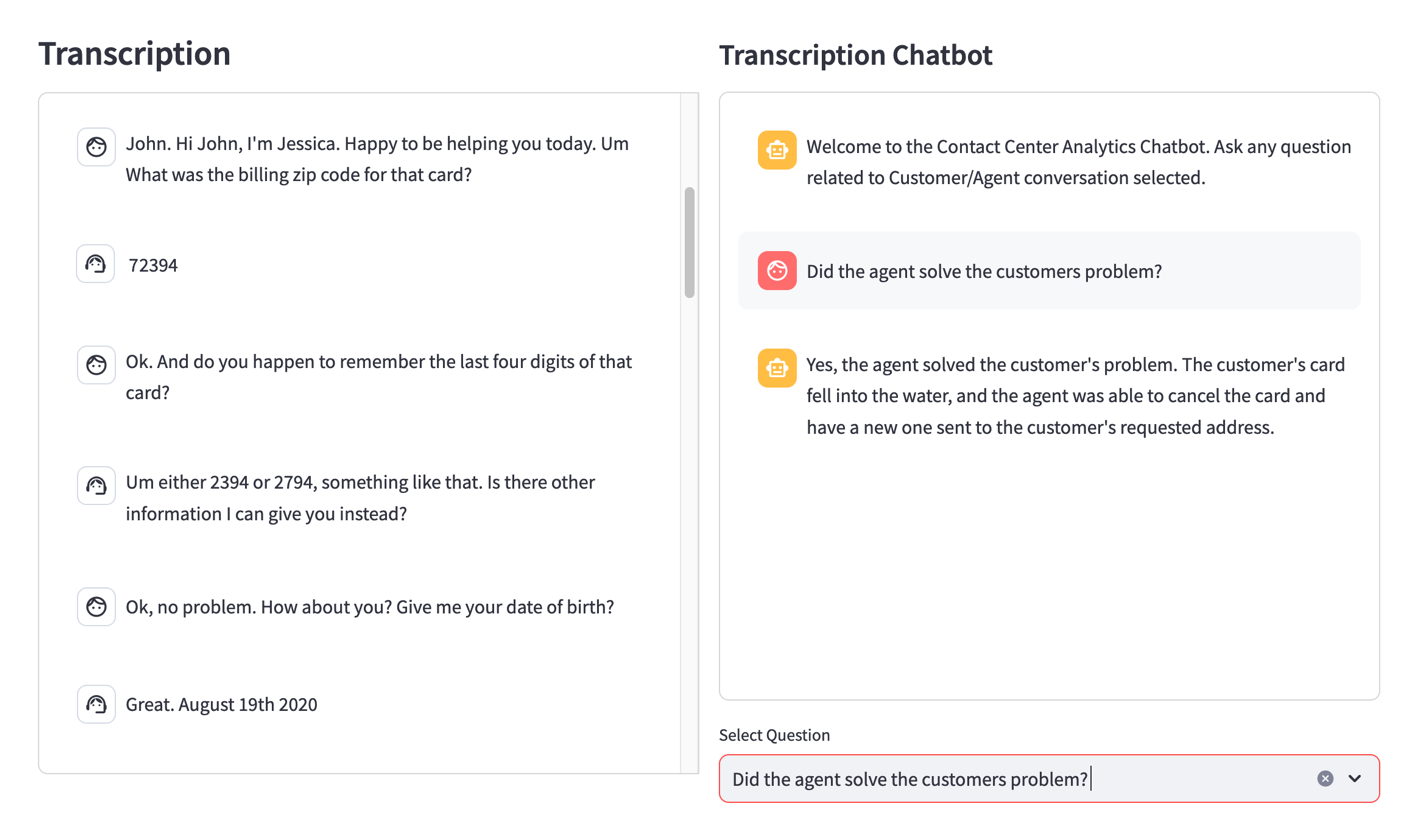

Interactive transcription visualization and AI assistant

The Single Call Analytics page provides an interactive transcript view, allowing users to read the conversation between an agent and a customer. It also includes an AI assistant feature so users can ask specific questions about the call.

This assistant functionality, powered by Amazon Nova models, helps users gain deeper insight into specific aspects of a call without having to manually search the transcript.
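As an illustration of how such an assistant could call a model, the following sketch uses the Amazon Bedrock Converse API through boto3. It is not the demo application's code: the system instructions, the function names, and the default model ID are assumptions, and depending on your Region you may need to pass an inference profile ID instead of the model ID shown.

```python
from typing import Optional

# Hypothetical system instructions for the assistant.
SYSTEM_INSTRUCTIONS = (
    "Answer questions about the call transcript below. Quote supporting phrases "
    "from the transcript, and say so when the answer is not in the transcript."
)


def build_request(transcript: str, question: str, history: Optional[list] = None):
    """Build the `system` and `messages` arguments for a Converse API call.

    `history` holds earlier turns in Converse message format, alternating
    between the "user" and "assistant" roles and ending with "assistant".
    """
    system = [{"text": f"{SYSTEM_INSTRUCTIONS}\n\nTranscript:\n{transcript}"}]
    messages = list(history or [])
    messages.append({"role": "user", "content": [{"text": question}]})
    return system, messages


def ask_about_call(
    transcript: str,
    question: str,
    model_id: str = "amazon.nova-lite-v1:0",  # assumed default; adjust as needed
    history: Optional[list] = None,
) -> str:
    """Send the question to the model and return the text of its answer."""
    import boto3  # imported lazily so build_request works without AWS credentials

    client = boto3.client("bedrock-runtime")
    system, messages = build_request(transcript, question, history)
    response = client.converse(
        modelId=model_id,
        system=system,
        messages=messages,
        inferenceConfig={"maxTokens": 512, "temperature": 0.2},
    )
    return response["output"]["message"]["content"][0]["text"]
```

Placing the transcript in the system prompt means follow-up questions only add short user turns to `messages`, so the transcript is not repeated in every turn of the conversation.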

Multi-Call Analytics

The multi-call analytics functionality, implemented in the Multi_Call_Analytics.py script, provides aggregate analytics across multiple calls and enables powerful business intelligence (BI) queries.

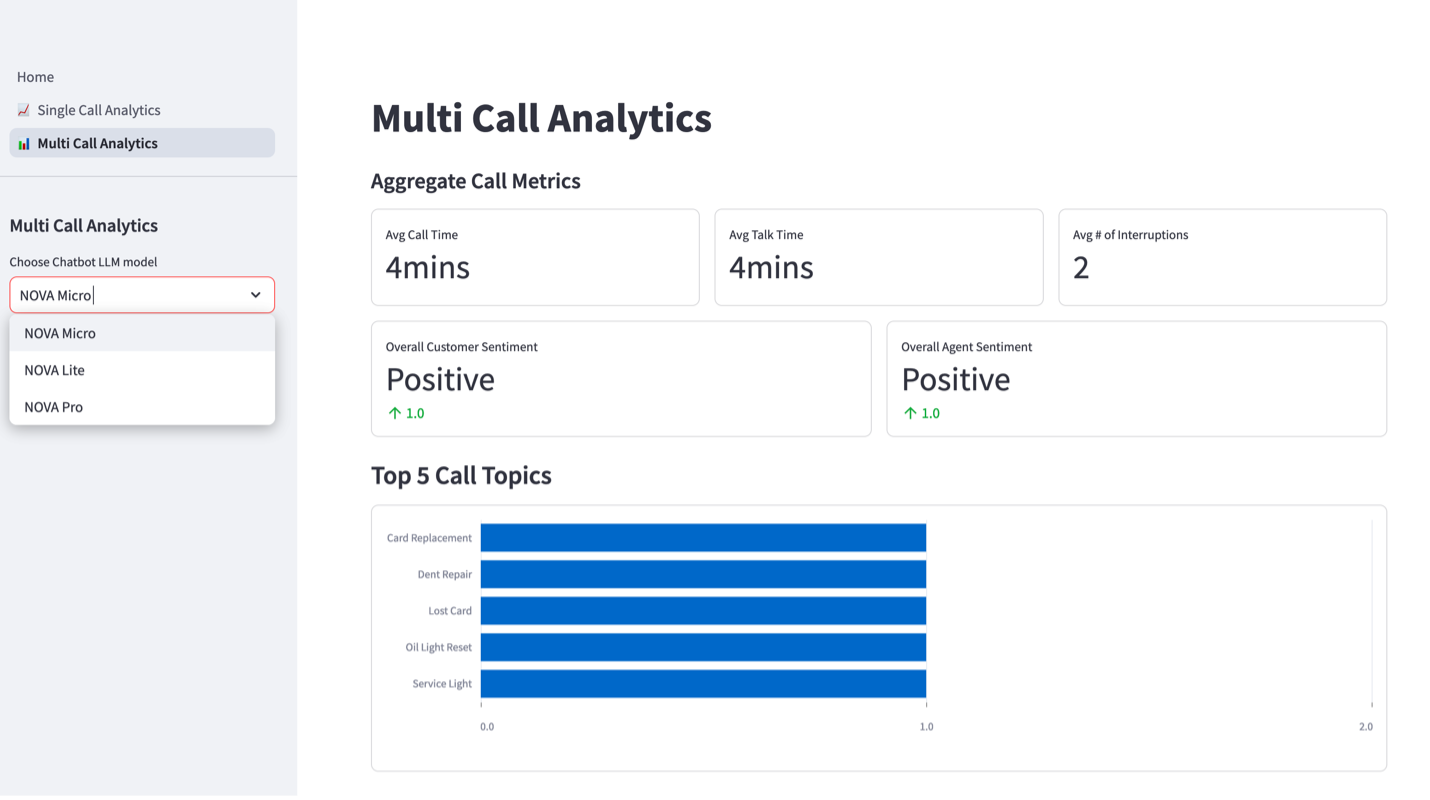

Data visualization and flexible model selection

This feature helps users quickly see trends and patterns across multiple calls, making it easier to identify areas for improvement or success.

The “Top 5 Call Topics” view in the previous screenshot is also powered by Amazon Nova models. The topic of a call is classified by passing the call transcript to the model and letting it determine the main topic of the call. This feature helps users quickly classify calls and place them into defined topic buckets to generate views. By looking at the top reasons customers call, businesses can focus on devising strategies to reduce call volume for these topic categories. Additionally, the application offers flexible model selection, allowing users to choose between different Amazon Nova models (such as Nova Pro, Nova Lite, and Nova Micro) for different analytical tasks. This flexibility means that users can select the model best suited to their specific needs and use cases.
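A minimal sketch of the topic bucketing described above might look like the following. The bucket names, the prompt wording, and the function names are hypothetical, and `invoke_model` is an assumed callable that sends a prompt to the selected Amazon Nova model and returns its reply text.

```python
from collections import Counter
from typing import Callable, Iterable, Sequence

# Hypothetical topic buckets; a real deployment would define its own.
TOPIC_BUCKETS = ["Billing", "Technical support", "Account access", "Cancellation", "Other"]


def classify_topic(
    transcript: str,
    invoke_model: Callable[[str], str],
    topics: Sequence[str] = TOPIC_BUCKETS,
) -> str:
    """Ask the model for the main topic and map its reply onto a known bucket."""
    prompt = (
        "Classify the main topic of the call below into exactly one of these "
        f"categories: {', '.join(topics)}. Answer with the category name only.\n\n"
        f"Transcript:\n{transcript}"
    )
    reply = invoke_model(prompt).strip().lower()
    for topic in topics:
        if topic.lower() in reply:
            return topic
    # Fall back to a catch-all bucket when the reply matches no known topic.
    return "Other"


def top_topics(
    transcripts: Iterable[str], invoke_model: Callable[[str], str], n: int = 5
) -> list:
    """Count classified topics across calls and return the n most common."""
    counts = Counter(classify_topic(t, invoke_model) for t in transcripts)
    return counts.most_common(n)
```

Because `invoke_model` is passed in, the same functions can be pointed at Nova Pro, Nova Lite, or Nova Micro by swapping the callable, which is one way to support the model selection described above.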

Analytical AI Assistant

One of the key features of the Multi-Call Analytics page is the Analytical AI Assistant, which can handle complex BI queries using SQL.

The application uses Amazon Nova models to generate SQL queries from natural language questions.

The assistant can understand complex queries, translate them into SQL, and even suggest appropriate chart types to visualize the results. The SQL queries are run with Athena against the processed data from Amazon Transcribe, and the results are then made available to the analytical AI assistant.
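The demo application's query-generation code is not reproduced here. As a hedged sketch of the natural-language-to-SQL step, the following example builds a prompt from a table schema and extracts a single read-only query from the model's reply. The `SCHEMA` string, the prompt wording, and the function names are assumptions for illustration, `invoke_model` is an assumed callable that returns the model's reply text, and running the query in Athena is left out.

```python
import re
from typing import Callable

# Hypothetical table schema; the real column names are not shown in this post.
SCHEMA = (
    "calls(call_id string, agent_id string, call_date date, topic string, "
    "customer_sentiment string, duration_seconds int)"
)

FENCE = "`" * 3  # Markdown code fence that models often wrap SQL in
FENCED_SQL = re.compile(FENCE + r"(?:sql)?\s*(.*?)" + FENCE, re.DOTALL | re.IGNORECASE)


def build_sql_prompt(question: str, schema: str = SCHEMA) -> str:
    """Assemble an illustrative text-to-SQL prompt (the wording is an assumption)."""
    return (
        f"You write SQL for Amazon Athena. Table schema: {schema}\n"
        "Write one SELECT statement that answers the question. "
        "Return only the SQL.\n\n"
        f"Question: {question}"
    )


def extract_sql(reply: str) -> str:
    """Pull a single read-only statement out of a model reply."""
    match = FENCED_SQL.search(reply)
    sql = (match.group(1) if match else reply).strip().rstrip(";").strip()
    # Accept only queries that start with SELECT or WITH.
    if not re.match(r"(?i)(select|with)\b", sql):
        raise ValueError("Only SELECT queries are allowed")
    # Reject replies that chain several statements together.
    if ";" in sql:
        raise ValueError("Multiple statements are not allowed")
    return sql


def question_to_sql(
    question: str, invoke_model: Callable[[str], str], schema: str = SCHEMA
) -> str:
    """Turn a natural language question into a validated SQL string."""
    return extract_sql(invoke_model(build_sql_prompt(question, schema)))
```

Validating the generated SQL before execution is a design choice of this sketch, not something the post describes. It is a basic guard and does not replace restricting the permissions of the role that runs the queries.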

Implementation

The call analytics demo application is implemented with a Streamlit UI for speed and simplicity of development. The application combines specific use cases and AI functions to provide a sample of what Amazon Nova models can do for call center operations and analytics. For more information on how this demo application is implemented, see the GitHub repo.

Conclusion

In this post, we discussed how Amazon Nova FMs power the call center analytics demo application. By using these models, businesses can gain deeper insights into their customer interactions, improve agent performance, and increase overall operational efficiency. The application’s features, including sentiment analysis, protocol adherence checks, vulnerable customer assessments, and BI capabilities, give call center managers the tools they need to make data-driven decisions and continuously improve their customer service operations.

As Amazon Nova FMs continue to develop and improve, we can expect even more powerful and sophisticated analytics capabilities in the future. This demo serves as a starting point for customers who want to explore the potential of AI-powered call center analytics and implement it in their own environment. We encourage readers to explore the Call Center Analytics demo to learn more about how Amazon Nova models are integrated into the application.

About the authors