Image by author

# Introduction

When you apply for a job at Meta (formerly Facebook), Apple, Amazon, Netflix, or Alphabet (Google), known collectively as FAANG, interviews rarely test whether you can recite textbook definitions. Instead, interviewers want to see whether you analyze data critically and whether you would catch a flawed analysis before it ships to production. Statistical traps are one of the most reliable ways to test this.

These traps replicate the decisions analysts face every day: a dashboard number that looks fine but is actually misleading, or an experiment result that seems actionable but contains a structural flaw. The interviewer already knows the answer. What they are watching is your thought process: whether you ask the right questions, notice missing information, and push back on numbers that look good at first glance. Candidates fall into these traps again and again, even those with strong mathematical backgrounds.

We’ll examine the five most common traps.

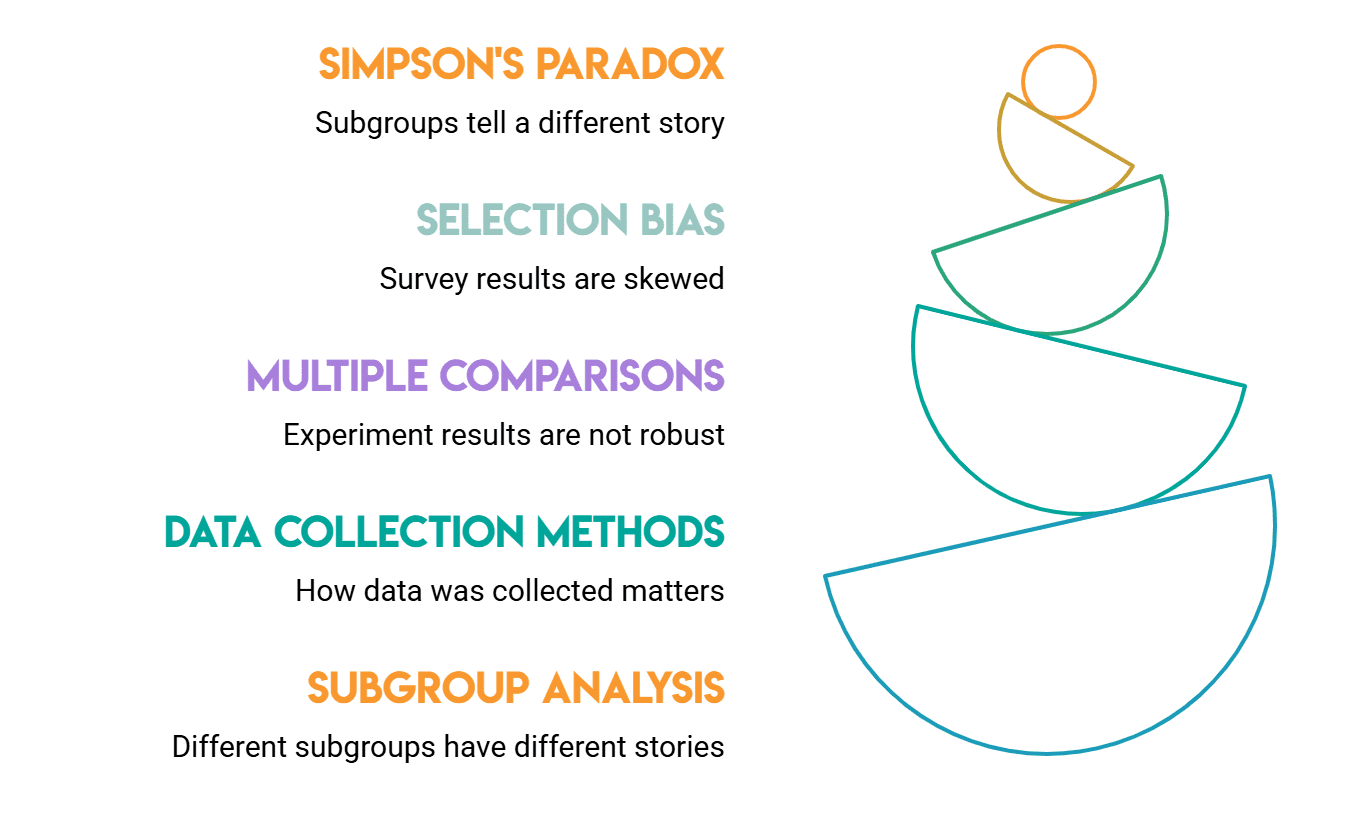

# Understanding Simpson’s Paradox

The purpose of this trap is to catch people who implicitly trust aggregated numbers.

Simpson’s paradox occurs when a trend appears in different groups of data but disappears or reverses when those groups are combined. The classic example of this is UC Berkeley’s 1973 admissions data: overall admissions rates favored men, but when broken down by department, women’s admissions rates were the same or better. The total numbers were misleading because women applied to more competitive departments.

Whenever groups have different sizes and different base rates, this kind of reversal becomes possible. Understanding why is what separates a surface-level answer from a deeper one.
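One way to see why the reversal is possible is to write each group's overall rate as a weighted average of its subgroup rates. The notation here ($r_g$ for the rate in subgroup $g$, $n_g$ for the number of observations in it, $w_g$ for its share) is introduced only for illustration:

$$
r_{\text{overall}} = \sum_g w_g \, r_g, \qquad w_g = \frac{n_g}{\sum_h n_h}
$$

Because the weights $w_g$ can differ between the two groups being compared, one group can have the higher $r_g$ in every subgroup and still have the lower $r_{\text{overall}}$ if most of its observations sit in a low-rate subgroup.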

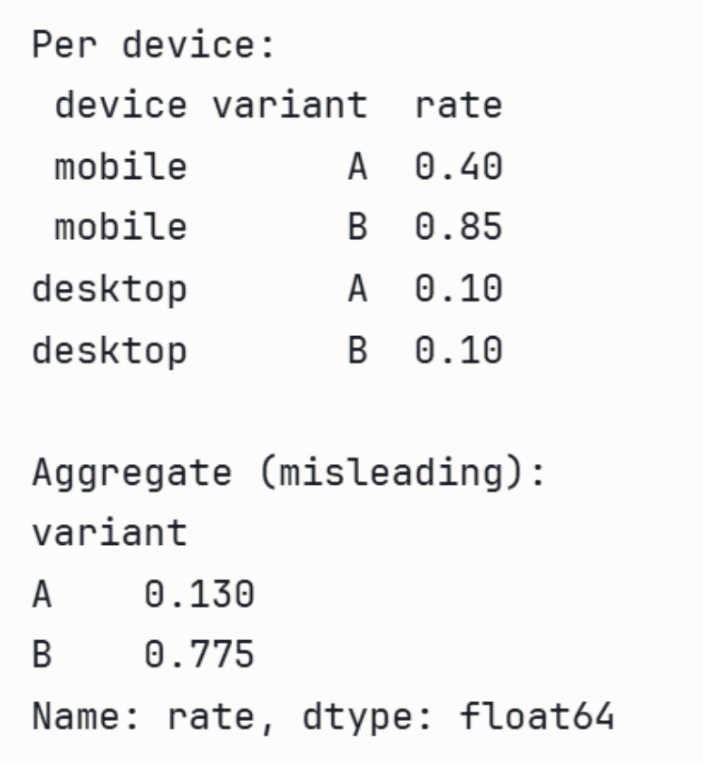

In an interview, a question might look like this: “We ran an A/B test. Overall, variant B had a higher conversion rate. However, when we broke it down by device type, variant A performed better on both mobile and desktop. What’s happening?” A strong candidate names Simpson’s paradox, explains the cause (the device mix differs between the two variants), and asks to see the breakdown rather than relying on the overall statistic.

Interviewers use this to check if you are comfortable asking about subgroup distributions. If you only report overall numbers, you lose points.

// Demonstrating with A/B test data

In the following demonstration using pandas, we can see how the aggregate rate can be misleading.

```python
import pandas as pd

# A wins on both devices individually, but B wins in aggregate
# because B gets most of its traffic from higher-converting mobile.
data = pd.DataFrame({
    'device': ['mobile', 'mobile', 'desktop', 'desktop'],
    'variant': ['A', 'B', 'A', 'B'],
    # Counts chosen so that A's rate is higher within each device.
    'converts': [90, 765, 90, 9],
    'visitors': [100, 900, 900, 100],
})
data['rate'] = data['converts'] / data['visitors']

print('Per device:')
print(data[['device', 'variant', 'rate']].to_string(index=False))

print('\nAggregate (misleading):')
agg = data.groupby('variant')[['converts', 'visitors']].sum()
agg['rate'] = agg['converts'] / agg['visitors']
print(agg['rate'])
```
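The habit of "asking for the breakdown" can also be turned into a quick check. The following is a minimal sketch, not a standard library routine: the helper name `winner` and the data values are hypothetical, chosen so that variant A wins within each device while B wins overall.

```python
import pandas as pd

# Hypothetical counts: A wins within each device, B wins overall.
df = pd.DataFrame({
    "device":   ["mobile", "mobile", "desktop", "desktop"],
    "variant":  ["A", "B", "A", "B"],
    "converts": [90, 765, 90, 9],
    "visitors": [100, 900, 900, 100],
})

def winner(frame):
    """Return the variant with the highest pooled conversion rate."""
    totals = frame.groupby("variant")[["converts", "visitors"]].sum()
    return (totals["converts"] / totals["visitors"]).idxmax()

overall_winner = winner(df)
segment_winners = {device: winner(group) for device, group in df.groupby("device")}

# Flag a reversal when every segment disagrees with the overall winner.
reversal = all(w != overall_winner for w in segment_winners.values())

print("Overall winner:", overall_winner)
print("Winner per device:", segment_winners)
print("Simpson-style reversal:", reversal)
```

A check like this only detects the reversal. Deciding which number to trust still depends on how traffic was assigned to each variant.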

# Identifying Selection Bias

This trap lets interviewers assess whether you think about where data comes from before analyzing it.

Selection bias occurs when the data you have is not representative of the population you are trying to understand. Since bias is in the data collection process rather than the analysis, it is easy to overlook.

Consider these possible interview framings:

- “We surveyed our users and found that 80% are satisfied with the product. Does this tell us that our product is good?” A solid candidate will point out that satisfied users are more likely to respond to surveys. The 80% figure probably overstates satisfaction, because unhappy users are less likely to have participated.

- “We examined customers who churned last quarter and found that their engagement scores were predominantly poor. Should we focus on engagement to reduce churn?” The problem here is that you only have engagement data for churned users. Without engagement data for retained users, you cannot know whether low engagement actually predicts churn or is simply typical of all users.
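The churn question above turns on a missing comparison group. The sketch below is an illustration under made-up assumptions: 70% of all users have low engagement, and churn is drawn independently of engagement. The rates and variable names are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 10_000

# Assumption: 70% of ALL users have low engagement, and churn (20%) is
# drawn independently of engagement, so engagement predicts nothing here.
low_engagement = rng.random(n) < 0.70
churned = rng.random(n) < 0.20

share_churned = low_engagement[churned].mean()
share_retained = low_engagement[~churned].mean()

print(f"Low engagement among churned users:  {share_churned:.1%}")
print(f"Low engagement among retained users: {share_retained:.1%}")
```

Looking at churned users alone, "most had low engagement" sounds like a finding. Next to the retained group, the two shares are nearly the same, which is why the baseline has to be requested before drawing any conclusion.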

A related variant worth knowing is survivorship bias: you only observe outcomes that made it through some filter. If you use only data from successful products to analyze why they succeeded, you are ignoring the products that failed despite having the same traits you are treating as strengths.
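Survivorship bias can be sketched the same way. In this hypothetical simulation a trait (here called `has_trait`) is present in 80% of products and has no effect on success. All numbers are assumptions chosen for illustration.

```python
import numpy as np

rng = np.random.default_rng(1)
n = 10_000

# Assumption: the trait appears in 80% of products and is unrelated to
# success; 10% of products succeed purely by chance.
has_trait = rng.random(n) < 0.80
succeeded = rng.random(n) < 0.10

share_among_winners = has_trait[succeeded].mean()
share_among_failures = has_trait[~succeeded].mean()

print(f"Trait among successful products: {share_among_winners:.1%}")
print(f"Trait among failed products:     {share_among_failures:.1%}")
```

An analysis restricted to the successful products would report that roughly four in five winners share the trait. The failed products share it at about the same rate, so the trait explains nothing.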

// Simulating survey non-response

We can simulate how non-response bias distorts results by using NumPy.

```python
import numpy as np

np.random.seed(42)

# Simulate users where satisfied users are more likely to respond
satisfaction = np.random.choice([0, 1], size=1000, p=[0.5, 0.5])

# Response probability: 80% for satisfied, 20% for unsatisfied
response_prob = np.where(satisfaction == 1, 0.8, 0.2)
responded = np.random.rand(1000) < response_prob

print(f"True satisfaction rate: {satisfaction.mean():.2%}")
print(f"Survey satisfaction rate: {satisfaction[responded].mean():.2%}")
```

Interviewers use selection bias questions to see if you separate “what the data shows” from “what is true about users.”

# Preventing P-Hacking

P-hacking (also called data dredging) is when you run many tests and report only those with p < 0.05. The problem is that a p-value is calibrated for a single test. If 20 tests are run at the 5% significance level and no real effect exists, about one false positive is expected by chance alone. Fishing for significant results inflates the false discovery rate.

An interviewer might ask: "Last quarter, we ran fifteen feature experiments. At p < 0.05, three were significant. Should we ship all three?" A weak answer says yes. A strong answer first asks what the hypotheses were before the tests were run, whether significance thresholds were set in advance, and whether the team corrected for multiple comparisons.

The follow-up often covers how you would design experiments to avoid this. Pre-registering hypotheses before data collection is the most straightforward solution, as it removes the option of deciding after the fact which tests were "real".
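To put rough numbers on the fifteen-experiment question, here is a small back-of-the-envelope calculation. It assumes the experiments are independent and that none of them has a real effect, which is a simplification.

```python
alpha = 0.05
n_experiments = 15

# Assumes independent experiments in which the null hypothesis is true.
expected_false_positives = n_experiments * alpha
prob_at_least_one = 1 - (1 - alpha) ** n_experiments

print(f"Expected false positives: {expected_false_positives:.2f}")
print(f"P(at least one false positive): {prob_at_least_one:.1%}")
```

Under these assumptions, about 0.75 false positives are expected and there is roughly a 54% chance of at least one. Three significant results is more than chance alone would typically produce, but it is not safe to assume all three are real.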

// Seeing false positives accumulate

We can see how false positives arise by chance using SciPy.

```python
import numpy as np
from scipy import stats

np.random.seed(0)

# 20 A/B tests where the null hypothesis is TRUE (no real effect)
n_tests, alpha = 20, 0.05
false_positives = 0

for _ in range(n_tests):
    a = np.random.normal(0, 1, 1000)
    b = np.random.normal(0, 1, 1000)  # identical distribution!
    if stats.ttest_ind(a, b).pvalue < alpha:
        false_positives += 1

print(f'Tests run: {n_tests}')
print(f'False positives (p<0.05): {false_positives}')
print(f'Expected by chance alone: {n_tests * alpha:.0f}')
```

Even with zero real effect, about 1 in 20 tests passes p < 0.05 by chance. If a team runs 15 experiments and reports only the significant ones, those results are likely to include noise. It is equally important to treat exploratory analysis as hypothesis generation rather than confirmation. Before acting on an exploratory result, run a confirmatory experiment.

# Managing Multiple Testing

This trap is closely related to p-hacking, but it is worth understanding in its own right.

The multiple testing problem is a formal statistical issue: when you run many hypothesis tests simultaneously, the probability of at least one false positive climbs rapidly toward 1 as the number of tests grows. Even if the treatment has no effect, if you test 100 metrics in an A/B test and declare any with p < 0.05 significant, you should expect about five false positives. The remedies are well known: the Bonferroni correction (divide alpha by the number of tests) controls the family-wise error rate, while Benjamini-Hochberg controls the false discovery rate instead.
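The family-wise error rate can be written down directly if we assume $m$ independent tests, each run at level $\alpha$, with every null hypothesis true (the symbols $m$ and $\alpha$ follow common usage and are not taken from the article):

$$
\text{FWER} = P(\text{at least one false positive}) = 1 - (1 - \alpha)^m
$$

With $\alpha = 0.05$ and $m = 100$, this gives $1 - 0.95^{100} \approx 0.994$, while the expected number of false positives is $m\alpha = 5$. Testing each hypothesis at $\alpha / m$ instead, as Bonferroni does, keeps the FWER at or below $\alpha$.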

Bonferroni is a conservative approach: for example, if you test 50 metrics, your per-test threshold drops to 0.001, making it harder to detect real effects. Benjamini–Hochberg is more appropriate when you are willing to accept some false discoveries in exchange for greater statistical power.
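The trade-off between the two corrections is easier to see on a concrete list of p-values. The functions below are a from-scratch sketch written for illustration, and the p-values are made up. In practice a library routine such as `multipletests` in statsmodels would be the more usual choice.

```python
import numpy as np

def bonferroni(pvalues, alpha=0.05):
    """Reject where p <= alpha / m (controls the family-wise error rate)."""
    p = np.asarray(pvalues, dtype=float)
    return p <= alpha / p.size

def benjamini_hochberg(pvalues, alpha=0.05):
    """Reject the k smallest p-values, where k is the largest rank with
    p_(k) <= (k / m) * alpha (controls the false discovery rate)."""
    p = np.asarray(pvalues, dtype=float)
    m = p.size
    order = np.argsort(p)
    thresholds = alpha * np.arange(1, m + 1) / m
    passed = p[order] <= thresholds
    reject = np.zeros(m, dtype=bool)
    if passed.any():
        k = np.nonzero(passed)[0].max()  # index of the largest passing rank
        reject[order[:k + 1]] = True
    return reject

# Hypothetical p-values for 10 metrics from a single experiment.
pvals = [0.001, 0.008, 0.012, 0.019, 0.041, 0.060, 0.150, 0.300, 0.550, 0.900]

n_bonferroni = int(bonferroni(pvals).sum())
n_bh = int(benjamini_hochberg(pvals).sum())

print("Bonferroni rejections:        ", n_bonferroni)
print("Benjamini-Hochberg rejections:", n_bh)
```

On this made-up list, Bonferroni keeps only the smallest p-value while Benjamini-Hochberg keeps the four smallest, which matches the power-versus-strictness trade-off described above.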

In interviews, this topic usually comes up when discussing how a company tracks metrics across experiments. A question might be: “We monitor 50 metrics per experiment. How do you decide which ones are important?” A strong answer discusses pre-specifying primary metrics before running the experiment and treating secondary metrics as exploratory, while acknowledging the multiple testing problem.

The interviewer is trying to find out whether you know that running more tests produces more noise rather than more information.

# Addressing Confounding Variables

This trap catches candidates who treat correlation as causation without asking what else might explain the relationship.

A confounding variable is one that affects both the independent and dependent variables, creating the illusion of a direct relationship where none may exist.

Classic example: ice cream sales and drowning rates are correlated, but the cause is summer heat; both go up in the warmer months. Acting on that correlation without taking the confounder into account leads to bad decisions.

Confounding is particularly dangerous in observational data. Unlike a randomized experiment, observational data do not distribute potential confounders equally between groups, so the differences you see may not be due to the variable you are studying.

A common interview framing is: “We’ve noticed that users who open our mobile app more often generate significantly higher revenue. Should we send push notifications to increase app opens?” A weak candidate says yes. A stronger one asks what type of user opens the app most frequently in the first place: presumably the most engaged, highest-value users.

Engagement drives both app opens and spending. The app opens are not generating the revenue; they are a symptom of the same underlying user quality.

Interviewers use confounding to test whether you separate correlation from causation before drawing conclusions, and whether you would insist on randomized experiments or propensity score matching before recommending action.

// Simulating a confounded relationship

```python
import numpy as np
import pandas as pd

np.random.seed(42)
n = 1000

# Confounder: user quality (0 = low, 1 = high)
user_quality = np.random.binomial(1, 0.5, n)

# App opens driven by user quality, not independent
app_opens = user_quality * 5 + np.random.normal(0, 1, n)

# Revenue also driven by user quality, not app opens
revenue = user_quality * 100 + np.random.normal(0, 10, n)

df = pd.DataFrame({
    'user_quality': user_quality,
    'app_opens': app_opens,
    'revenue': revenue
})

# Naive correlation looks strong, which is misleading
naive_corr = df['app_opens'].corr(df['revenue'])

# Within-group correlation (controlling for confounder) is near zero
low = df[df['user_quality'] == 0]
high = df[df['user_quality'] == 1]
corr_low = low['app_opens'].corr(low['revenue'])
corr_high = high['app_opens'].corr(high['revenue'])

print(f"Naive correlation (app opens vs revenue): {naive_corr:.2f}")
print("Correlation controlling for user quality:")
print(f" Low-quality users: {corr_low:.2f}")
print(f" High-quality users: {corr_high:.2f}")
```

Output:

```
Naive correlation (app opens vs revenue): 0.91
Correlation controlling for user quality:
 Low-quality users: 0.03
 High-quality users: -0.07
```

The naive number looks like a strong signal. Once you control for the confounder, it disappears completely. Interviewers who see a candidate run this kind of stratified check (rather than accepting the aggregate correlation) know they are talking to someone who will not ship a broken recommendation.
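Stratifying only works when the confounder is measured. The randomized experiment mentioned earlier sidesteps that requirement, and the sketch below shows why under a made-up data-generating process: notifications are assigned at random, they raise app opens, and they have no effect on revenue. The variable names and effect sizes are assumptions for illustration.

```python
import numpy as np

rng = np.random.default_rng(42)
n = 10_000

# Hypothetical process: user quality drives revenue; notifications raise
# app opens but have no effect on revenue.
user_quality = rng.binomial(1, 0.5, n)
notified = rng.binomial(1, 0.5, n)  # random assignment, independent of quality

app_opens = 5 * user_quality + 2 * notified + rng.normal(0, 1, n)
revenue = 100 * user_quality + rng.normal(0, 10, n)

# Difference in means between the randomized groups
opens_lift = app_opens[notified == 1].mean() - app_opens[notified == 0].mean()
revenue_lift = revenue[notified == 1].mean() - revenue[notified == 0].mean()

print(f"Lift in app opens from notifications: {opens_lift:.2f}")
print(f"Lift in revenue from notifications:   {revenue_lift:.2f}")
```

Because assignment is random, user quality is balanced across the two groups on average. The notified group opens the app more, while the revenue difference stays close to zero compared with the 100-unit gap between quality levels.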

# Wrapping Up

All five of these traps have something in common: you need to slow down and question the data before accepting what the numbers appear to show at first glance. Interviewers use these scenarios precisely because your first instinct is often wrong, and the depth of your answer beyond that first instinct is what separates a candidate who can work independently from one who needs direction on every analysis.

None of these ideas is difficult to understand; interviewers ask about them because they are typical failure modes in real data work. The candidate who recognizes Simpson’s paradox in a product metric, catches selection bias in a survey, or questions whether an experiment result survives multiple comparisons will make fewer bad decisions.

If you go into FAANG interviews in the habit of asking the following questions, you are already ahead of most candidates:

- How was this data collected?

- Are there subgroups that tell a different story?

- How many tests contributed to this result?

Besides helping in interviews, these habits can also prevent bad decisions from reaching production.

Nate Rosidi is a data scientist who also works in product strategy. He is an adjunct professor teaching analytics and the founder of StrataScratch, a platform that helps data scientists prepare for their interviews with real interview questions from top companies. Nate writes on the latest trends in the career market, gives interview advice, shares data science projects, and covers everything SQL.