The following article was originally published on Tim O’Brien’s Medium page and is reposted here with the author’s permission.

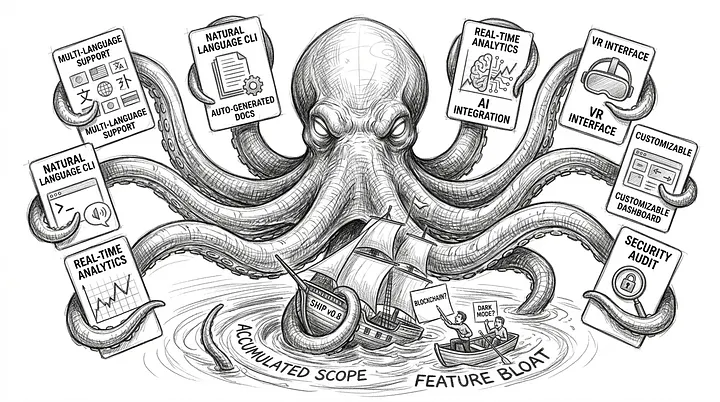

If you’ve spent any time in AI-assisted software work, you already know the moment when the Scope Creep Kraken first slips a tentacle onto the boat.

The project starts with a realistic and, usually, sensible goal. Build an internal tool. Clean up a reporting flow. Add a missing admin screen. Then someone realizes that the model can generate a Swift application in minutes to present it on an iPhone, and the mood in the room changes.

“Why not? We can present it on an iOS application, and it will only take 10 minutes. Go for it. These tools are amazing. Wow.”

That first idea is often genuinely useful. What might have taken a week now takes an hour. This is part of what makes the pattern so seductive. It doesn’t start with incompetence. It starts with tool-driven momentum.

The meeting continues: “Let’s put an entire year’s backlog into the system and see if we can get it all done in a week. Never mind the token spending limit, let’s get it done.” What was an ordinary weekly release meeting has now set the stage for a rapid expansion in scope, and that’s how the Scope Creep Kraken takes over.

Of course, scope creep is older than AI. The dreaded “while we’re at it” was haunting software teams long before anyone pasted a stack trace into a chat window. What AI changed was the growth rate. In the older version of this problem, additional scope still had to make its way past limited staff time. Someone had to build the feature, debug it, test it, and explain why it was there. That friction was often the only thing standing between a focused project and an overextended team.

AI removed that friction.

Now additional features often come with a demo attached. “Can we add multilingual support?” Forty-five seconds later, there’s a branch. “What about generated documentation?” Sure, why not? “Can the CLI accept natural language commands?” The model sounds optimistic enough to make the whole thing seem provisionally reasonable. Each addition looks manageable in isolation. This is how the Kraken works. It does not attack all at once. It wraps one small tentacle at a time around the project.

Signs that the Kraken is already on your boat

- Features that show up without a ticket

- Branches no one asked for

- Demos instead of design decisions

- “It only took the model 30 seconds.”

What I keep seeing in teams is not reckless ambition but confident improvisation. People are responding to real potential. They are not wrong to be excited that so much has suddenly become possible.

The problem starts when “We can produce it quickly” quietly stands in for “We decided it belongs in the project.” Those are not the same sentence.

For a while, the Kraken even looks helpful. Output increases. Screens appear. Branches multiply. People feel productive, and sometimes they really are productive in the narrow local sense. What’s hidden in that burst of visible progress is the integration cost. Each tentacle has to be tested against every other tentacle. Every generated feature becomes a maintenance liability. Each small addition takes the project a little further away from the problem it originally set out to solve.
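
To put a rough number on that integration cost, here is a minimal Python sketch. It assumes, as a simplification, that every feature needs one integration check against every other feature, so the count is the number of unordered pairs. The feature names are hypothetical and only echo the examples in this article. Real projects may need more or fewer checks than this.

```python
from itertools import combinations


def pairwise_checks(features):
    """Return every unordered pair of features that would need an integration check."""
    return list(combinations(features, 2))


# Hypothetical feature lists, for illustration only.
original_scope = ["reporting flow", "admin screen"]
with_tentacles = original_scope + [
    "iOS app",
    "multilingual support",
    "generated documentation",
    "natural-language CLI",
]

print(len(pairwise_checks(original_scope)))  # 1
print(len(pairwise_checks(with_tentacles)))  # 15
```

Under that assumption, tripling the feature count from two to six takes the number of pairings from 1 to 15, which is why additions that look manageable in isolation stop being manageable together.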

The product manager might say, “A mobile app? I didn’t ask for it, but I think it’s cool. We’ll see. Who will review it with the customer?”

This is usually when the team discovers that the Kraken is already on the boat. The original sponsor asked for a hammer and is now watching a Swiss Army knife unfold in real time, with multiple blades that no one asked for and at least one that doesn’t seem to open properly.

AI also makes it dangerously easy to confuse demonstrations with decisions.

The useful response is not to distrust every experiment. The first few tentacles are worth exploring. The response is to bring back the old discipline that AI has made so easy to skip. Keep a written scope. Treat additions as real decisions rather than quick side effects. Ask what impact each new feature will have on testing, documentation, support, and the team’s ability to explain the system six months from now. If no one can answer those questions, the feature is not “complete” simply because the model has produced a solid draft.
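
One way a team might make those questions concrete is a small checklist in code. This is a sketch, not a prescribed process: the `Addition` class, its field names, and the four question keys are all hypothetical, chosen to mirror the questions in the paragraph above.

```python
from dataclasses import dataclass, field

# The four impact questions from the text; the key names are hypothetical.
REQUIRED_ANSWERS = ("testing", "documentation", "support", "explainability")


@dataclass
class Addition:
    name: str
    in_written_scope: bool = False
    impact_answers: dict = field(default_factory=dict)


def unanswered(addition):
    """List the impact questions that have no non-empty answer yet."""
    return [q for q in REQUIRED_ANSWERS
            if not addition.impact_answers.get(q, "").strip()]


def is_real_decision(addition):
    """True only if the addition is in the written scope and every question is answered."""
    return addition.in_written_scope and not unanswered(addition)


ios_app = Addition("iOS app", impact_answers={"testing": "manual smoke test on one device"})
print(unanswered(ios_app))        # ['documentation', 'support', 'explainability']
print(is_real_decision(ios_app))  # False
```

The check deliberately ignores how good the generated draft is. A working demo does not change the result until someone adds the feature to the written scope and answers the questions.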

What makes the Scope Creep Kraken a good name is that teams can use it in the moment. Once people can say, “This is another tentacle,” the conversation becomes clearer. You’re no longer arguing about whether the idea is clever. You’re asking whether it’s driven by a need or by a capability.