In this article, you will learn how TurboQuant, a new algorithm suite recently launched by Google, achieves advanced compression of large language models and vector search engines without loss of accuracy.

Topics we’ll cover include:

- What is TurboQuant and why it represents a meaningful advance over prior quantification techniques.

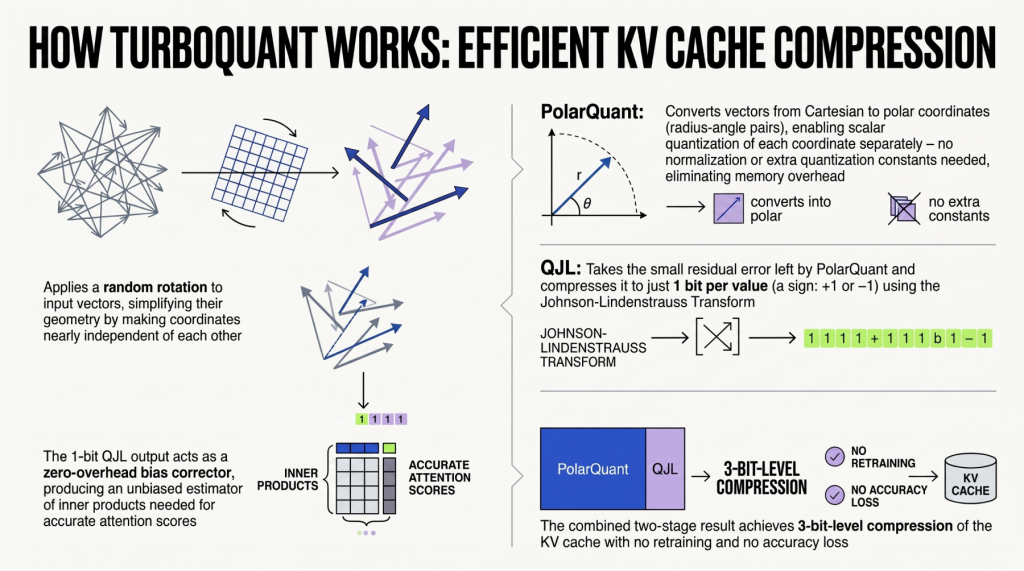

- How a two-stage compression process – PolarQuant followed by QJL – works together to eliminate memory overhead and hidden bias.

- Why TurboQuant’s approach to KV cache compression is based on strong theoretical foundations rather than purely practical engineering.

Effective KV Compression with TurboQuant

Image by editor

Introduction

TurboQuant was recently launched by Google as a novel algorithm suite and library for applying advanced quantization and compression to large language models (LLMs) and vector search engines, an essential element of retrieval-augmented generation (RAG) systems. Simply put, the goal is to drastically improve the efficiency of these massive AI systems. TurboQuant has been shown to quantize the KV cache down to just 3 bits, without having to retrain the model or compromise accuracy.

This article takes a look at the steps behind the TurboQuant algorithm for advanced compression, with a special focus on how key-value (KV) cache compression works. Recall that keys (K) and values (V) are two of the three main projections of token embeddings (the third being queries) computed inside the attention mechanism of LLMs, and they play a key role in autoregressive text generation.

TurboQuant in Brief

LLMs and vector search engines use high-dimensional vectors to process information with impressive results. However, this requires large amounts of memory, which usually causes major bottlenecks in the so-called key-value (KV) cache, a quick-access “digital cheat sheet” that stores frequently used information for real-time retrieval. Because the KV cache grows linearly with context length, long contexts can severely strain memory capacity and computing speed.
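
To make the linear growth concrete, here is a small back-of-the-envelope calculation. The model shape below (32 layers, 8 KV heads, head dimension 128) is a hypothetical configuration chosen only for illustration, and the function name `kv_cache_bytes` is not part of any library.

```python
def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len,
                   bits_per_value, batch_size=1):
    """Bytes needed to cache keys and values for every layer and token."""
    # Factor 2: one key vector and one value vector per head, per layer.
    values_per_token = 2 * num_layers * num_kv_heads * head_dim
    total_bits = values_per_token * seq_len * batch_size * bits_per_value
    return total_bits / 8


# Hypothetical model shape, used only as an example.
config = dict(num_layers=32, num_kv_heads=8, head_dim=128)

for seq_len in (8_192, 32_768, 131_072):
    fp16 = kv_cache_bytes(seq_len=seq_len, bits_per_value=16, **config)
    q3 = kv_cache_bytes(seq_len=seq_len, bits_per_value=3, **config)
    print(f"{seq_len:>7} tokens: fp16 = {fp16 / 2**30:.2f} GiB, "
          f"3-bit = {q3 / 2**30:.2f} GiB")
```

Under these assumptions, the cache for a single sequence needs 1 GiB at 16 bits per value for 8,192 tokens and 16 GiB for 131,072 tokens, while an ideal 3-bit representation needs 3/16 of that. The calculation ignores any extra storage a real quantizer might need.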

Vector quantization (VQ) techniques used in recent years with LLMs and RAG systems shrink these vectors to ease the bottleneck, but they often introduce “memory overhead” as a side effect: they need to compute and store full-precision quantization constants for each small block of data. As a result, the potential benefits of compression may be partially negated.
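
The size of this overhead is easy to estimate. The sketch below assumes a generic block-wise scheme that stores two 16-bit constants per block (for example, a scale and a zero point). These numbers are illustrative assumptions, not figures from the TurboQuant work.

```python
def effective_bits(bits_per_value, block_size,
                   constant_bits=16, constants_per_block=2):
    """Average bits per value once per-block constants are stored as well."""
    overhead = constants_per_block * constant_bits / block_size
    return bits_per_value + overhead


for block_size in (16, 32, 64, 128):
    print(f"block of {block_size:>3}: "
          f"{effective_bits(3, block_size):.2f} bits per value")
```

With blocks of 32 values, a nominal 3-bit quantizer actually costs 4 bits per value under these assumptions. Larger blocks reduce the overhead but typically fit the data less tightly.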

TurboQuant was proposed by Google, together with a Python library, as a suite of next-generation algorithms for advanced compression with zero accuracy loss. It tackles the memory overhead problem with a two-stage process built on two complementary techniques:

- PolarQuant: This is the compression technique applied in the first stage. It compresses high-dimensional data by mapping vector coordinates to the polar coordinate system. This simplifies the data geometry and removes the need to store additional quantization constants, the main cause of memory overhead.

- QJL (Quantized Johnson-Lindenstrauss): This is the second stage of the compression process. It focuses on removing potential biases introduced in the previous stage, acting as a mathematical error checker that applies minimal, 1-bit compression to remove hidden errors or residual biases left by PolarQuant.

Inside the KV Compression Process

To fully understand why TurboQuant’s KV compression is so effective, we need to take a closer look at its methodological steps. The algorithm addresses a fundamental mathematical challenge: when a quantizer is optimized for mean squared error (MSE) alone, hidden biases are naturally introduced when estimating inner products between vectors, an essential operation when, for example, computing precise attention scores inside LLMs.
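
A toy experiment shows where this bias comes from. The sketch below is not TurboQuant itself: it uses the textbook MSE-optimal 1-bit quantizer for standard normal data, whose two levels sit at ±√(2/π), and compares inner products before and after quantization. The data and the correlation between `x` and `y` are made up for the demonstration.

```python
import numpy as np

rng = np.random.default_rng(0)
d = 200_000  # many coordinates, so the averages are stable

x = rng.standard_normal(d)
# y is correlated with x, so the true inner product is far from zero.
y = 0.8 * x + 0.6 * rng.standard_normal(d)

# MSE-optimal 1-bit quantizer for a standard normal:
# keep the sign and use the level E|x| = sqrt(2/pi).
level = np.sqrt(2 / np.pi)
x_hat = level * np.sign(x)

true_ip = x @ y
quant_ip = x_hat @ y
ratio = quant_ip / true_ip

print(f"true inner product:      {true_ip:.1f}")
print(f"quantized inner product: {quant_ip:.1f}")
print(f"ratio: {ratio:.3f} (theory: 2/pi = {2 / np.pi:.3f})")
```

The quantized inner product is systematically shrunk by a factor of about 2/π. Minimizing MSE pulls the reconstruction levels toward zero, which is good for reconstruction error but leaves a consistent, not merely random, error in inner products.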

To address this challenge, the first stage of the algorithm (PolarQuant) applies a random rotation to the data vectors. This simplifies the data geometry: after rotation, each coordinate follows a concentrated Beta distribution, and in high-dimensional vectors different coordinates become almost completely independent of each other. This near-independence is the key to applying a standard scalar quantizer easily and near-optimally to each coordinate separately. Instead of using Cartesian coordinates, PolarQuant converts the vector to polar coordinates described by radius-angle pairs, so that the data is mapped onto a “spherical grid”, eliminating the need for expensive data normalization and the associated memory overhead. In short, most of the compression effort takes place in this first stage, which captures the main semantics and magnitude of the original vector.
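
The effect of the random rotation can be sketched in a few lines of NumPy. This is a simplified illustration under stated assumptions, not PolarQuant: it skips the polar-coordinate conversion entirely and uses a plain uniform grid as a stand-in for an optimized scalar quantizer. The helper names `random_rotation` and `quantize_uniform` are hypothetical.

```python
import numpy as np

def random_rotation(d, rng):
    """Random orthogonal matrix from the QR decomposition of a Gaussian matrix."""
    q, r = np.linalg.qr(rng.standard_normal((d, d)))
    return q * np.sign(np.diag(r))  # sign fix gives a uniformly random rotation

def quantize_uniform(z, bits, clip):
    """Map each coordinate to the nearest of 2**bits fixed levels in [-clip, clip]."""
    levels = 2 ** bits
    step = 2 * clip / levels
    idx = np.clip(np.floor((z + clip) / step), 0, levels - 1)
    return (idx + 0.5) * step - clip

rng = np.random.default_rng(0)
d, bits = 256, 3

# A deliberately "spiky" unit vector: all energy sits in four coordinates.
x = np.zeros(d)
x[:4] = [5.0, -3.0, 2.0, 1.0]
x /= np.linalg.norm(x)

rot = random_rotation(d, rng)
z = rot @ x  # after rotation, each coordinate has variance 1/d

# The grid is fixed in advance from d alone: no per-block constants are stored.
clip = 3.0 / np.sqrt(d)
x_hat = rot.T @ quantize_uniform(z, bits, clip)  # quantize, then rotate back

err_rotated = np.sum((x - x_hat) ** 2)
err_direct = np.sum((x - quantize_uniform(x, bits, clip)) ** 2)
print(f"squared error with rotation:    {err_rotated:.3f}")
print(f"squared error without rotation: {err_direct:.3f}")
```

Because the rotation spreads the vector's energy evenly, the range of every coordinate is known before seeing the data, so one fixed grid works for all vectors. Applying the same grid to the unrotated vector clips the large coordinates and gives a much larger error.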

The second stage (QJL) aims to remove biases and hidden errors, because the MSE-optimized first stage may leave a small residual error that can bias the attention score calculation. It applies a minimal level of compression, only 1 bit, by running the QJL algorithm directly on the residual error. The Johnson–Lindenstrauss transform shrinks high-dimensional residual data while preserving essential relationships, properties, and distances between data points. Each resulting number is then reduced to a single sign bit (+1 or -1), behaving as a zero-overhead mathematical error checker. The result is an unbiased estimator that completely removes the hidden residual biases introduced in the first stage, yielding highly accurate attention scores.
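
The idea of estimating an inner product from sign bits alone can be sketched as follows. This is a minimal illustration of a sign-based Johnson–Lindenstrauss estimator, not the library's implementation: the function names are hypothetical, the residual and query are random stand-ins, and the sketch assumes the residual's norm is kept as one extra scalar alongside the sign bits.

```python
import numpy as np

def qjl_encode(residual, proj):
    """Keep one sign bit per random projection, plus the residual's norm."""
    return np.sign(proj @ residual), np.linalg.norm(residual)

def qjl_inner_product(query, signs, norm, proj):
    """Estimate <query, residual> from the sign bits.

    For a Gaussian row s, E[(s . q) * sign(s . r)] = sqrt(2/pi) * <q, r> / ||r||,
    so rescaling by sqrt(pi/2) * ||r|| makes the average unbiased.
    """
    m = proj.shape[0]
    return np.sqrt(np.pi / 2) * norm / m * ((proj @ query) @ signs)

rng = np.random.default_rng(0)
d, m, trials = 64, 64, 2000

residual = 0.05 * rng.standard_normal(d)  # stand-in for the first-stage error
query = rng.standard_normal(d)
true_ip = query @ residual

estimates = []
for _ in range(trials):
    proj = rng.standard_normal((m, d))  # fresh Gaussian projection each trial
    signs, norm = qjl_encode(residual, proj)
    estimates.append(qjl_inner_product(query, signs, norm, proj))

mean_estimate = np.mean(estimates)
print(f"true inner product: {true_ip:.4f}")
print(f"mean of estimates:  {mean_estimate:.4f}")
```

Each individual estimate is noisy, but the average over many random projections converges to the true inner product, which is what unbiased means here. Only the residual is reduced to sign bits; the query stays in full precision.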

Final Thoughts

The methods underlying the TurboQuant algorithm for KV compression go beyond mere practical engineering solutions. They are fundamental algorithmic contributions backed by strong theoretical guarantees. TurboQuant sets a new benchmark for achievable efficiency, operating near the theoretical lower bound while maintaining higher precision than classical quantization at a remarkable 3-bit level of compression.