Author(s): Rishav Sehgal

Originally published on Towards AI.

TL;DR

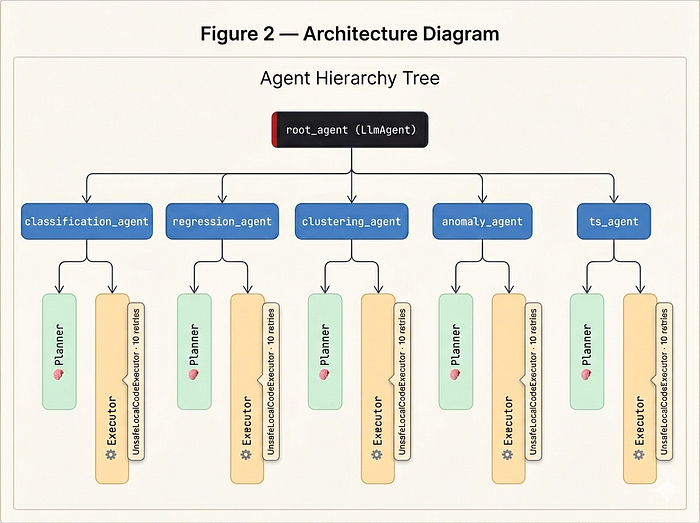

- PyCaretAgent wraps PyCaret’s AutoML engine in a Google ADK agent hierarchy

- Natural-language prompt → plan → code → execution → MLflow tracking

- Self-corrects up to 10 times on failure; isolates artifacts per session

- Covers classification, regression, clustering, anomaly detection, and time series

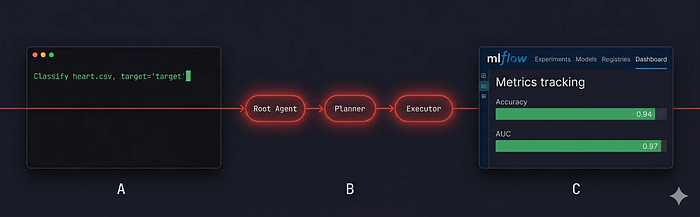

If you’ve used PyCaret, you know that it already cuts out ML boilerplate dramatically. PyCaretAgent goes further: a root agent reads your intent, a planner designs the pipeline, and an executor writes and runs the code – all without you touching a line of Python.

How it works

Three layers. A root agent validates your CSV and routes it to the right specialist. Each specialist is a SequentialAgent: a planner designs the pipeline and creates a session ID; an executor writes the code, runs it, and logs everything to MLflow.
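The three layers can be sketched in plain Python. This illustrates the control flow only; it is not the repository's code and does not use the ADK API. The function names (root_route, planner, executor, run_specialist), the TASK_KEYWORDS table, and the keyword-based routing are all assumptions made for the example, standing in for decisions the real agents delegate to an LLM.

```python
import csv
import io
from typing import Optional

# Task types named in the article. The keyword matching is a stand-in for the
# LLM-driven routing a real root agent would perform (assumption).
TASK_KEYWORDS = {
    "classification": "classif",
    "regression": "regress",
    "clustering": "cluster",
    "anomaly": "anomal",
    "time_series": "forecast",
}


def root_route(prompt: str, csv_text: str, target: Optional[str] = None) -> str:
    """Layer 1: validate the CSV header, then pick a specialist."""
    header = next(csv.reader(io.StringIO(csv_text)), None)
    if not header:
        raise ValueError("CSV is empty")
    if target is not None and target not in header:
        raise ValueError(f"target column {target!r} not found in {header}")
    for task, keyword in TASK_KEYWORDS.items():
        if keyword in prompt.lower():
            return task
    raise ValueError("could not infer a task type from the prompt")


def planner(state: dict) -> None:
    """Layer 2, step 1: write a plan into shared state (hard-coded here)."""
    state["plan"] = f"Run a PyCaret {state['task']} pipeline."


def executor(state: dict) -> None:
    """Layer 2, step 2: act on the plan (a real executor generates and runs code)."""
    state["status"] = f"executed: {state['plan']}"


# A specialist runs its steps strictly in order, sharing one state dict.
SPECIALIST_STEPS = (planner, executor)


def run_specialist(task: str) -> dict:
    state: dict = {"task": task}
    for step in SPECIALIST_STEPS:
        step(state)
    return state


task = root_route(
    "Classify heart.csv where target is 'target'",
    "age,chol,target\n63,233,1\n",
    target="target",
)
result = run_specialist(task)
```

The point of the ordering is that the executor never has to decide what to do: it only reads what the planner left in shared state.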

The smart bits

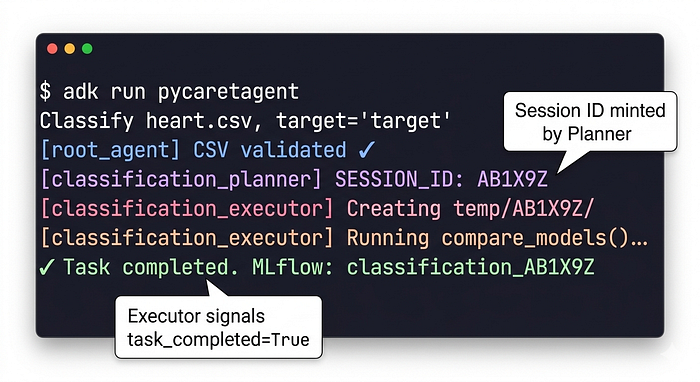

Session ID via callback. The planner outputs a free-text plan that contains a SESSION_ID: AB1X9Z token. A regex callback extracts the ID and stores it in the shared session state, so no structured output format is needed.
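A minimal sketch of that extraction step, using only the standard library. The pattern assumes the ID is six uppercase letters or digits, as in the AB1X9Z example; the function name store_session_id and the state key session_id are hypothetical, and the repository's actual callback signature may differ.

```python
import re

# Six uppercase letters/digits, matching the AB1X9Z example in the article.
# The exact ID format used by the repository is an assumption.
SESSION_ID_PATTERN = re.compile(r"SESSION_ID:\s*([A-Z0-9]{6})\b")


def store_session_id(plan_text: str, state: dict) -> bool:
    """Copy the first SESSION_ID token found in plan_text into state.

    Returns True when an ID was found; otherwise returns False and leaves
    state untouched.
    """
    match = SESSION_ID_PATTERN.search(plan_text)
    if match is None:
        return False
    state["session_id"] = match.group(1)
    return True


plan = """1. Load heart.csv and call setup() with target='target'.
2. Run compare_models() and keep the best model.
SESSION_ID: AB1X9Z"""

state: dict = {}
found = store_session_id(plan, state)
```

Because the ID is pulled out of free text, the planner prompt only has to ask for one extra line rather than a full JSON schema.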

10-retry self-correction. UnsafeLocalCodeExecutor(error_retry_attempts=10) automatically re-runs the generated code on failure, allowing the model to diagnose and fix its own bugs.
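The retry idea can be illustrated without ADK. The loop below is a sketch, not how UnsafeLocalCodeExecutor is implemented: run_with_retries and fake_model are hypothetical names, the "model" is a stub that fixes its bug once it sees a traceback, and whether ADK counts the first attempt toward the limit is not something this sketch tries to mirror.

```python
import traceback
from typing import Callable, Optional


def run_with_retries(generate_code: Callable[[Optional[str]], str],
                     max_retries: int = 10) -> dict:
    """Run generated code; on failure, regenerate with the error as feedback.

    generate_code(None) returns a first draft; generate_code(error_text)
    returns a revised draft. Makes one initial attempt plus up to
    max_retries retries.
    """
    error: Optional[str] = None
    for attempt in range(max_retries + 1):
        source = generate_code(error)
        namespace: dict = {}
        try:
            exec(source, namespace)  # runs unsandboxed, hence "unsafe"
        except Exception:
            error = traceback.format_exc()  # becomes feedback for the next draft
            continue
        return {"ok": True, "attempts": attempt + 1, "namespace": namespace}
    return {"ok": False, "attempts": max_retries + 1, "error": error}


def fake_model(error: Optional[str]) -> str:
    """Stand-in for the LLM: the first draft has a bug, the revision fixes it."""
    if error is None:
        return "result = 1 / 0"
    return "result = 42"


outcome = run_with_retries(fake_model)
```

Here the first draft raises ZeroDivisionError, the traceback is passed back, and the second draft succeeds, so the run finishes in two attempts.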

Failure short-circuit. A before_model_callback checks a check_failure_status flag and skips re-runs if the task has already succeeded, so no API calls are wasted.
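A sketch of the short-circuit in plain Python. It mimics the general shape of a pre-model hook (return a canned reply to skip the model, or None to let the call proceed) but is not the ADK callback signature. Treating check_failure_status as a boolean state key where False means "the last run succeeded" is an assumption, as are the names before_model_guard and call_model.

```python
from typing import Callable, Optional


def before_model_guard(state: dict) -> Optional[str]:
    """Return a canned reply to skip the model call, or None to proceed."""
    # Assumed convention: False means the last run succeeded.
    if state.get("check_failure_status") is False:
        return "Task already succeeded; skipping re-run."
    return None


def call_model(state: dict, model: Callable[[], str]) -> str:
    """Invoke the model only when the guard does not short-circuit."""
    canned = before_model_guard(state)
    return canned if canned is not None else model()


calls = []


def counting_model() -> str:
    """Stand-in for a paid LLM call; records each invocation."""
    calls.append(1)
    return "fresh model response"


first = call_model({"check_failure_status": True}, counting_model)
second = call_model({"check_failure_status": False}, counting_model)
```

Only the first call reaches counting_model; the second returns the canned reply without touching it.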

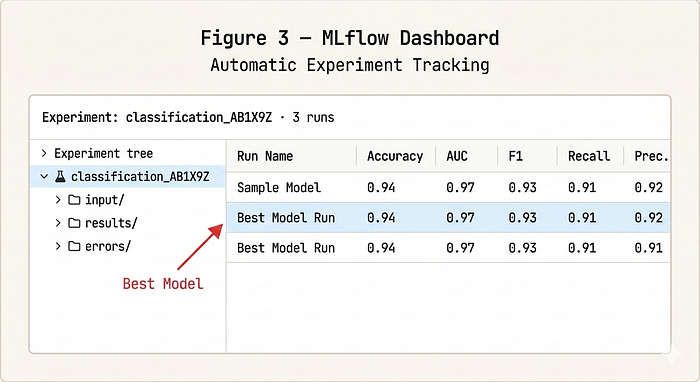

Per-session artifacts. Each run's artifacts are keyed by task type and session ID, e.g. classification_AB1X9Z, for immediate recovery.

The agent doesn’t just run your ML pipeline: it tracks, isolates, and self-corrects on every failure.
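One way to get that isolation is a directory per run named after the task and session ID. The sketch below assumes artifacts live on the local filesystem under such a directory; the article only shows the classification_AB1X9Z name, so the layout, the helper session_artifact_dir, and the model.pkl file name are hypothetical.

```python
import tempfile
from pathlib import Path


def session_artifact_dir(base: Path, task: str, session_id: str) -> Path:
    """Create and return <base>/<task>_<session_id>, e.g. classification_AB1X9Z."""
    if not session_id.isalnum():
        # Guards against IDs that would escape the base directory.
        raise ValueError(f"unexpected session id: {session_id!r}")
    path = base / f"{task}_{session_id}"
    path.mkdir(parents=True, exist_ok=True)
    return path


base = Path(tempfile.mkdtemp())
run_a = session_artifact_dir(base, "classification", "AB1X9Z")
run_b = session_artifact_dir(base, "classification", "Q7K2MD")

# Files written by one session are invisible to the other.
(run_a / "model.pkl").write_bytes(b"placeholder")
```

Two runs of the same task type never overwrite each other, and a failed run can be inspected or resumed by its ID alone.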

Run it

git clone https://github.com/Rishav1996/PyCaretAgent.git

cd PyCaretAgent && uv pip install .

uv run mlflow ui --port 5000

uv run adk run pycaretagent

Prompt: “Classify heart.csv where target is ‘target’.” That’s the entire interface. The agent validates the file, plans, writes the code, executes it, and delivers a tracked experiment.

What's next

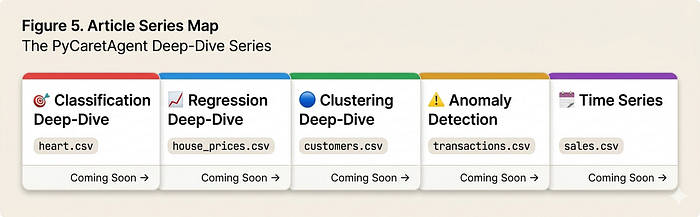

This article is the first in a series. Each subsequent piece dives deeper into one task type, walking through a real dataset from start to finish: prompt, plan, generated code, and the final MLflow result.

Classification Deep-Dive (coming soon)

Heart disease prediction with heart.csv. We trace the entire agent run, from CSV validation to compare_models(), and explain each decision taken by the planner.

Regression Deep-Dive (coming soon)

House price prediction. How the executor handles tune_model(), and why the 10-retry mechanism matters when XGBoost hits a dependency mismatch mid-run.

Clustering Deep-Dive (coming soon)

Customer segmentation without a target column. See how the root agent skips target validation altogether and goes straight to the unsupervised pipeline.

Anomaly Detection Deep-Dive (coming soon)

Fraud detection on a transaction dataset. The planner chooses Isolation Forest; we explain why, and show how anomaly scores surface as MLflow metrics.

Time Series Deep-Dive (coming soon)

Sales forecasting with seasonality detection. The most complex setup: index parsing, horizon selection, and MASE vs. MAPE in the MLflow comparison table.

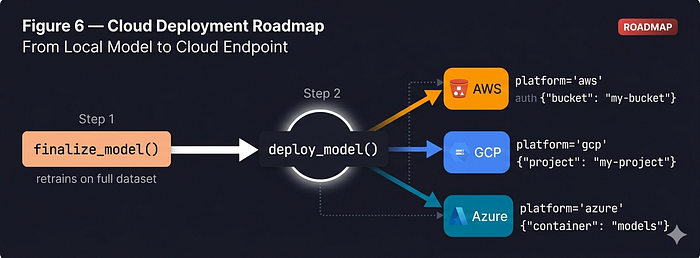

Future: Deploy directly to the cloud

The current version trains, tracks, and saves models locally. The next major milestone closes the loop: pushing the final model to cloud storage, ready for inference, using PyCaret’s built-in deploy_model(), triggered directly by the agent without any manual steps.

The target UX is one additional phrase in the user prompt: “Classify heart.csv, target=‘target’, deploy to AWS.” The root agent will parse the platform and pass it as a session state variable, and the executor will add a deploy_model() call after finalize_model(), with credentials injected from environment variables. A dedicated article in this series will cover full credential handoff patterns and multi-cloud configurations.
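Since this feature is still planned, the following is only a guess at how the handoff might look, not the project's design. It parses the platform from the prompt, stores it in a state dict, and builds the AWS authentication dict from the environment. PyCaret's deploy_model() does accept platform and authentication arguments, with AWS expecting a dict containing a bucket name; everything else (the helper names, the deploy_platform state key, and the PYCARET_AGENT_S3_BUCKET variable) is hypothetical.

```python
import os
import re
from typing import Mapping, Optional

# Platform names accepted by PyCaret's deploy_model(): 'aws', 'gcp', 'azure'.
PLATFORM_PATTERN = re.compile(r"deploy to (aws|gcp|azure)\b", re.IGNORECASE)


def parse_platform(prompt: str) -> Optional[str]:
    """Return the lowercase platform named in the prompt, or None."""
    match = PLATFORM_PATTERN.search(prompt)
    return match.group(1).lower() if match else None


def aws_authentication(env: Mapping[str, str] = os.environ) -> dict:
    """Build deploy_model()'s AWS authentication dict from the environment.

    PYCARET_AGENT_S3_BUCKET is a hypothetical variable name.
    """
    bucket = env.get("PYCARET_AGENT_S3_BUCKET")
    if not bucket:
        raise RuntimeError("PYCARET_AGENT_S3_BUCKET is not set")
    return {"bucket": bucket}


state: dict = {}
state["deploy_platform"] = parse_platform(
    "Classify heart.csv, target='target', deploy to AWS."
)
auth = aws_authentication({"PYCARET_AGENT_S3_BUCKET": "my-models"})

# The executor-generated code could then end with something like:
#   final = finalize_model(best)
#   deploy_model(final, model_name="heart_AB1X9Z",
#                platform=state["deploy_platform"], authentication=auth)
```

Keeping credentials in the environment means neither the prompt nor the generated code ever contains a secret.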

PyCaretAgent is a clean, reusable template for any agent-wrapped AutoML system. The planner/executor pattern, state handoff via callbacks, and retry-based self-correction all generalize far beyond PyCaret.

GitHub link: https://github.com/Rishav1996/PyCaretAgent

Published via Towards AI