Just when you thought you’d heard it all, AI systems designed to detect cancer have surprised researchers with a baked-in penchant for racism.

Surprising findings published in the journal Cell Reports Medicine show that four leading AI-enhanced pathology diagnostic systems vary in accuracy depending on patients’ age, gender, and race. More disturbingly, the AI is extracting this demographic data directly from pathology slides, a feat that is impossible for human doctors.

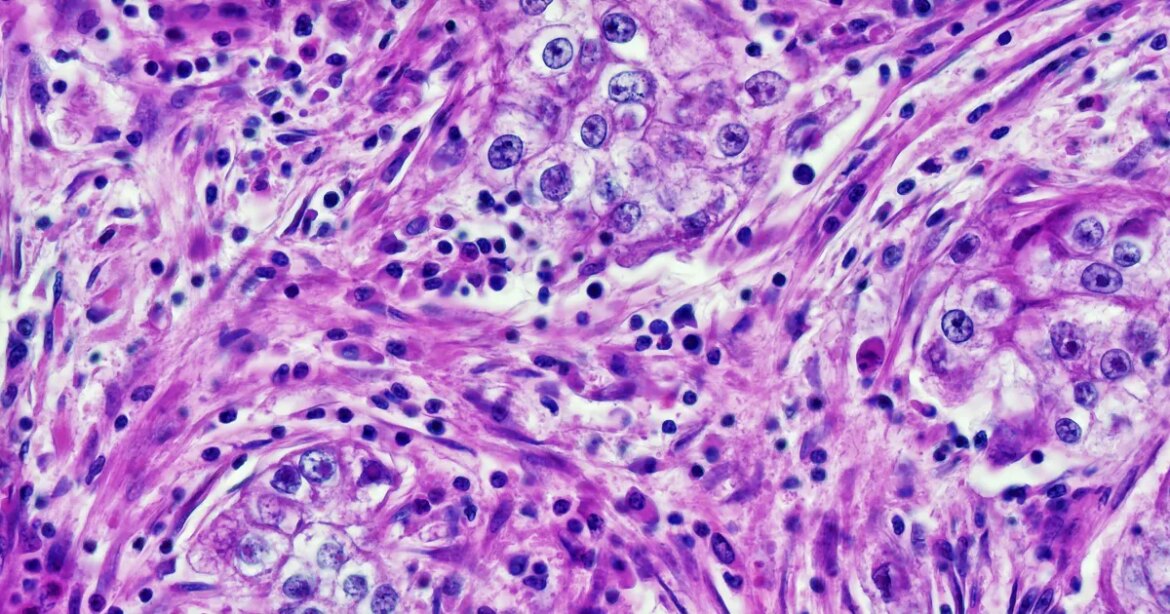

To conduct the study, Harvard University researchers combed through nearly 29,000 cancer pathology images from approximately 14,400 cancer patients. Their analysis found that the deep learning models displayed worrying biases 29.3 percent of the time, or on roughly a third of all the diagnostic tasks assigned to them.

“We found that the AI is so powerful, it can distinguish many obscure biological signals that cannot be detected by standard human assessment,” Harvard researcher Kun-Hsing Yu, a senior author of the study, said in a press release. “Reading demographics from pathology slides is considered ‘mission impossible’ for a human pathologist, so the bias in pathology AI was a surprise to us.”

Yu said these bias-driven errors result from AI models relying on patterns associated with different demographic groups when analyzing cancer tissue. In other words, once the four AI tools lock onto a person’s age, race, or gender, those factors become the backbone of the tissue analysis. The AI then goes on to replicate biases stemming from gaps in its training data.

To give a concrete example, the AI tools were able to identify samples taken from Black patients. The authors wrote that these cancer slides contained higher numbers of abnormal, neoplastic cells and fewer supportive elements than slides from white patients, allowing the AI to pick them out even though the samples were anonymized.

Then came the trouble. Once the AI pathology tools identified a person’s race, they became fixated on finding previous analyses matching that identifier. But because the models were trained mostly on data from white patients, the tools struggled with groups that were less well represented. For example, the AI models had trouble distinguishing subtypes of lung cancer in Black patients, not because they lacked lung cancer data in general, but because they lacked lung cancer data derived from Black patients.

“It was unexpected,” Yu said in the press release, “because we would expect pathology assessment to be objective. When evaluating images, we do not need to know the patient’s demographics to make a diagnosis.”

Back in June, medical researchers discovered a similar racial bias in large language models (LLMs) used as psychiatric diagnostic tools. In that case, results showed that the AI tools often proposed “inferior treatment” plans for Black patients when the patients’ race was explicitly known.

For the AI cancer-screening tools, the Harvard research team also developed a new AI-training framework called FAIR-Path. When this framework was applied to the AI tools before analysis, the researchers found that it mitigated 88.5 percent of the performance disparities.

That there is a fix is good news, although the remaining 11.5 percent is still cause for concern. And until such training frameworks are made mandatory for all AI tools in pathology, questions will remain over these systems’ inherent biases.
