Author(s): Rashmi

Originally published on Towards AI.

Adversarial NLP in 2026: When Text Attacks Text

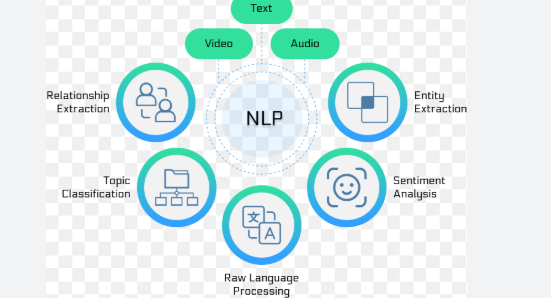

Adversarial NLP is the study and practice of crafting text inputs that cause NLP systems to misbehave: misclassifying content, leaking secrets, following malicious instructions, or taking unsafe actions. Think of it this way: the model reads language, so attackers use language itself as the exploit.
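To make the "misclassifying" case concrete, here is a minimal sketch that assumes a hypothetical keyword-based filter (`naive_filter`, `BLOCKLIST`) in place of any real production model. Swapping a few Latin letters for visually identical Cyrillic homoglyphs leaves the text readable to a human, but it changes the characters the filter actually compares against.

```python
# Hypothetical toy filter (an assumption for illustration, not a real model):
# flags text as malicious if any token matches a blocklisted keyword.
BLOCKLIST = {"password", "transfer"}


def naive_filter(text: str) -> bool:
    """Return True if the text is flagged as malicious."""
    tokens = text.lower().split()
    return any(tok.strip(".,!?") in BLOCKLIST for tok in tokens)


# Map some lowercase Latin letters to look-alike Cyrillic letters (homoglyphs).
HOMOGLYPHS = {"a": "\u0430", "o": "\u043e", "e": "\u0435"}


def homoglyph_attack(text: str) -> str:
    """Replace Latin letters with visually similar Cyrillic ones."""
    return "".join(HOMOGLYPHS.get(ch, ch) for ch in text)


original = "Please send your password now"
adversarial = homoglyph_attack(original)

print(naive_filter(original))     # True: "password" matches the blocklist
print(naive_filter(adversarial))  # False: "p\u0430ssw\u043erd" no longer matches
```

Attacks on neural classifiers typically search for such perturbations automatically rather than using a fixed substitution table, but the principle is the same: the input looks unchanged to a person while the model sees something different.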

The article discusses the growing importance of adversarial NLP, focusing on threats in which crafted text inputs are used to manipulate natural language processing (NLP) systems and exploit their vulnerabilities. It explores how the main families of adversarial attacks work and what they imply for system design and security. It also highlights the need for stronger defenses and for evaluation that treats every input as potentially adversarial, establishing language security as a core concern for NLP and generative AI systems.
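One way to read "evaluation that treats every input as potentially adversarial" is to report robust accuracy alongside clean accuracy: an example only counts as correct if the model gets it right on the clean input and on every perturbed variant. The sketch below is a hypothetical setup, not a method from the article; it assumes the same toy keyword filter, two illustrative perturbations (homoglyph substitution and zero-width-space insertion), and a hand-written `normalize` defense.

```python
from typing import Callable, List, Tuple

# Hypothetical toy classifier: flags text containing a blocklisted keyword.
BLOCKLIST = {"password", "transfer"}


def naive_filter(text: str) -> bool:
    tokens = text.lower().split()
    return any(tok.strip(".,!?") in BLOCKLIST for tok in tokens)


# Illustrative perturbations (assumptions, not an exhaustive attack set).
HOMOGLYPHS = {"a": "\u0430", "o": "\u043e", "e": "\u0435"}  # Latin -> Cyrillic


def homoglyph_attack(text: str) -> str:
    return "".join(HOMOGLYPHS.get(ch, ch) for ch in text)


def zero_width_attack(text: str) -> str:
    # Insert an invisible zero-width space (U+200B) between every character.
    return "\u200b".join(text)


# Hypothetical defense: drop zero-width characters and undo known homoglyphs.
REVERSE = {v: k for k, v in HOMOGLYPHS.items()}
ZERO_WIDTH = {"\u200b", "\u200c", "\u200d"}


def normalize(text: str) -> str:
    return "".join(REVERSE.get(ch, ch) for ch in text if ch not in ZERO_WIDTH)


def defended_filter(text: str) -> bool:
    return naive_filter(normalize(text))


def robust_accuracy(
    clf: Callable[[str], bool],
    data: List[Tuple[str, bool]],
    perturbations: List[Callable[[str], str]],
) -> Tuple[float, float]:
    """Return (clean accuracy, robust accuracy) over labeled examples."""
    clean_correct = 0
    robust_correct = 0
    for text, label in data:
        ok_clean = clf(text) == label
        clean_correct += ok_clean
        # Robust only if correct on the clean text AND on every perturbed variant.
        if ok_clean and all(clf(p(text)) == label for p in perturbations):
            robust_correct += 1
    n = len(data)
    return clean_correct / n, robust_correct / n


data = [
    ("send your password now", True),
    ("transfer the funds", True),
    ("see you at lunch", False),
]
attacks = [homoglyph_attack, zero_width_attack]

print(robust_accuracy(naive_filter, data, attacks))     # (1.0, 0.3333333333333333)
print(robust_accuracy(defended_filter, data, attacks))  # (1.0, 1.0)
```

The naive filter looks perfect on clean data yet fails on two of the three examples once perturbed. The defended filter scores perfectly here only because `normalize` was written for exactly these two perturbations; a real evaluation would need a broader confusable mapping (such as the one in Unicode Technical Standard #39) and perturbations the defender did not anticipate.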

Read the entire blog for free on Medium.

Published via Towards AI


Note: The content represents the views of the contributing authors and not those of Towards AI.