As context length grows into the tens and hundreds of thousands of tokens, the key value cache in Transformer decoders becomes the primary deployment bottleneck. The cache stores the key and value for each layer and vertex with shape (2, L, H, T, D). For vanilla transformers like Llama1-65B, the cache reaches about 335 GB at 128k tokens in bfloat16, which directly limits the batch size and increases the time to the first token.

Architectural compression leaves sequence axis untouched

Production models already compress caches along multiple axes. Grouped query attention shares keys and values across multiple queries and produces compression factors up to 4 in Llama3, 12 in GLM 4.5, and 16 in Qwen3-235B-A22B along the head axis. DeepSeek V2 compresses key and value dimensions via multi-head latent attention. Hybrid models combine attention with sliding window attention or state space layers to reduce the number of layers that maintain the full cache.

These changes are not compressed along the sequence axis. Sparse and retrieval style attention retrieves only a subset of the cache at each decoding step, but all tokens still occupy memory. Therefore rendering a practical long reference requires techniques that remove cache entries that will have negligible impact on future tokens.

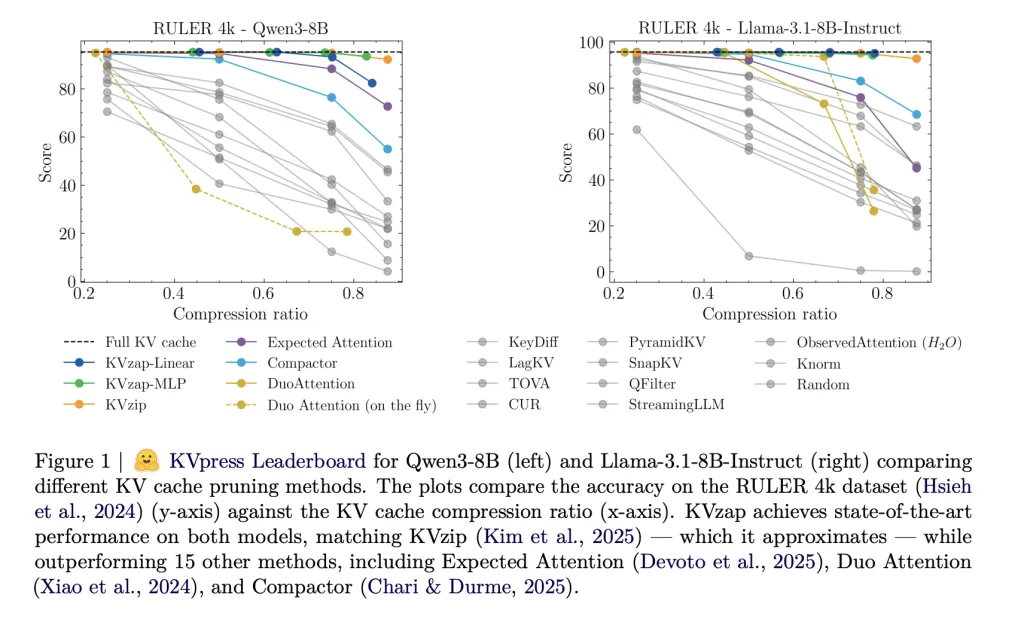

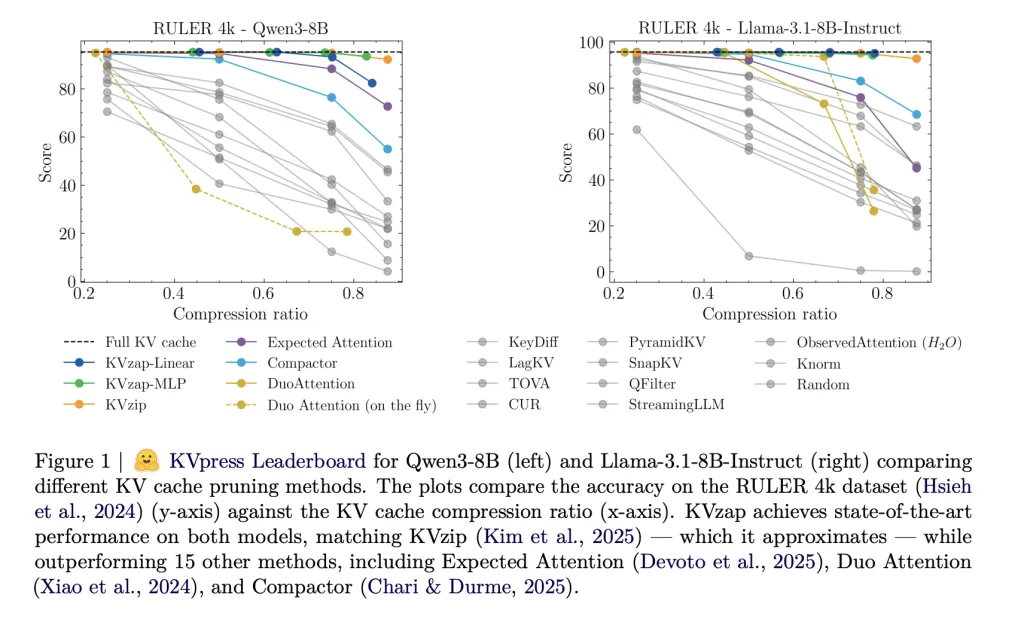

KVPress Project More than twenty such pruning methods from NVIDIA are collected into one codebase and exposed through a public leaderboard on Hugging Face. Methods such as H2O, Expected Attention, DuoAttention, Compactor, and KVzip are evaluated in a consistent manner.

KVZip and KVZip Plus as scoring oracle

KVzip is currently the strongest cache pruning baseline KVPress Leaderboard. It defines an importance score for each cache entry using the copy and paste pretext function. The model runs on an extended prompt where it is asked to exactly replicate the original context. For each token position in the original prompt, the score is the maximum attention weight that any position in the repeated clause assigns back to that token when grouped query attention is used. Entries with lower scores are excluded until the global budget is completed.

KVzip+ refines this score. It multiplies the attention weight by the value contribution criterion in the residual stream and normalizes it by the obtained hidden state criterion. This better matches the actual change that a token induces in the residual stream and improves the correlation with downstream accuracy compared to the original score.

These Oracle scores are effective but expensive. KVZip requires prefilling at the extended prompt, which doubles the reference length and makes it much slower for production. It also cannot move during decoding because the scoring process assumes a fixed signal.

KVzap, a surrogate model on hidden states

KVzap replaces oracle scoring with a small surrogate model that operates directly on the hidden states. For each Transformer layer and each sequence position t, the module obtains the hidden vector hₜ and outputs the predicted log score for each key value vertex. Two architectures are considered, a single linear layer (KVzap Linear) and a two layer MLP with GELU and hidden width equal to one eighth of the model’s hidden size (KVzap MLP).

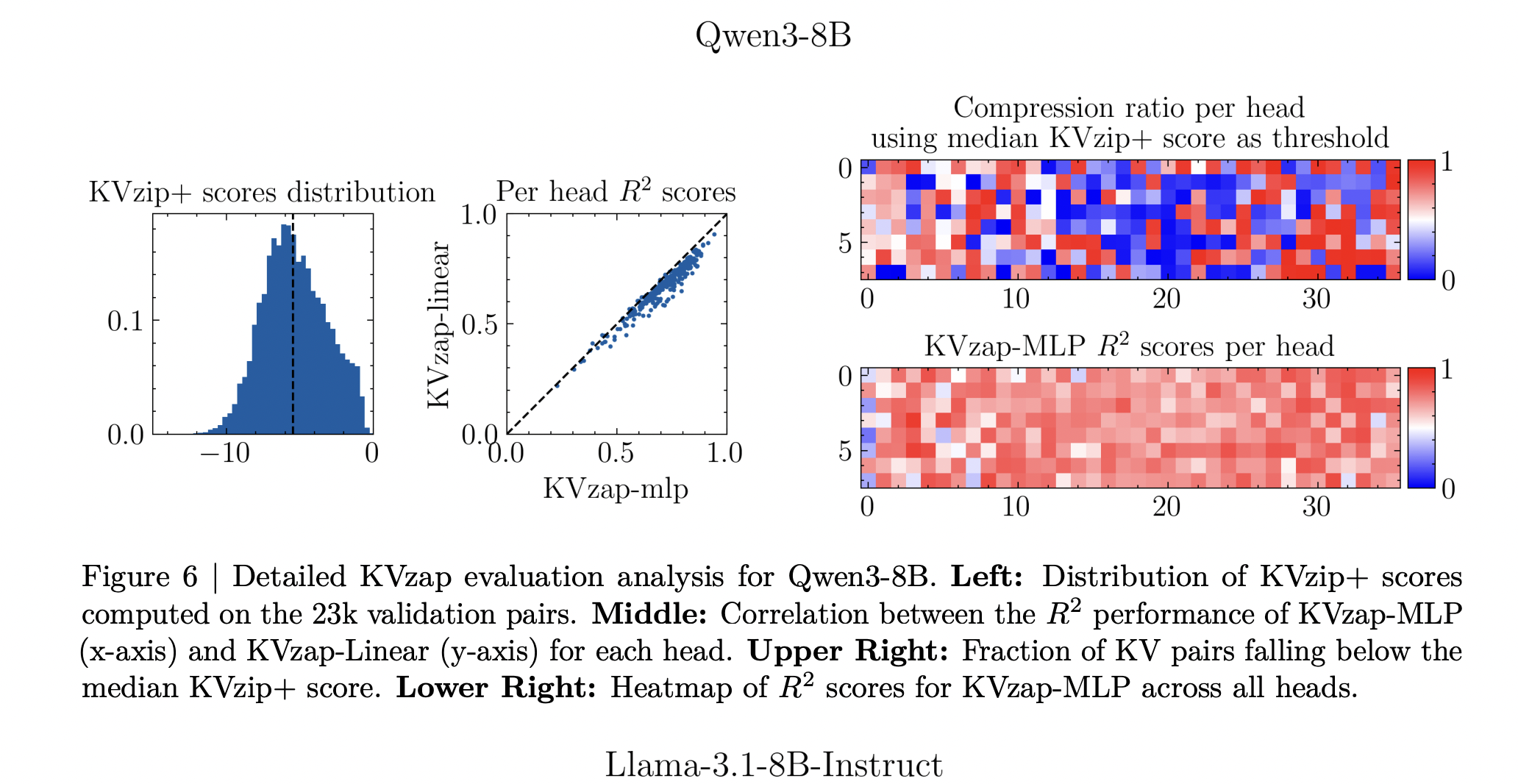

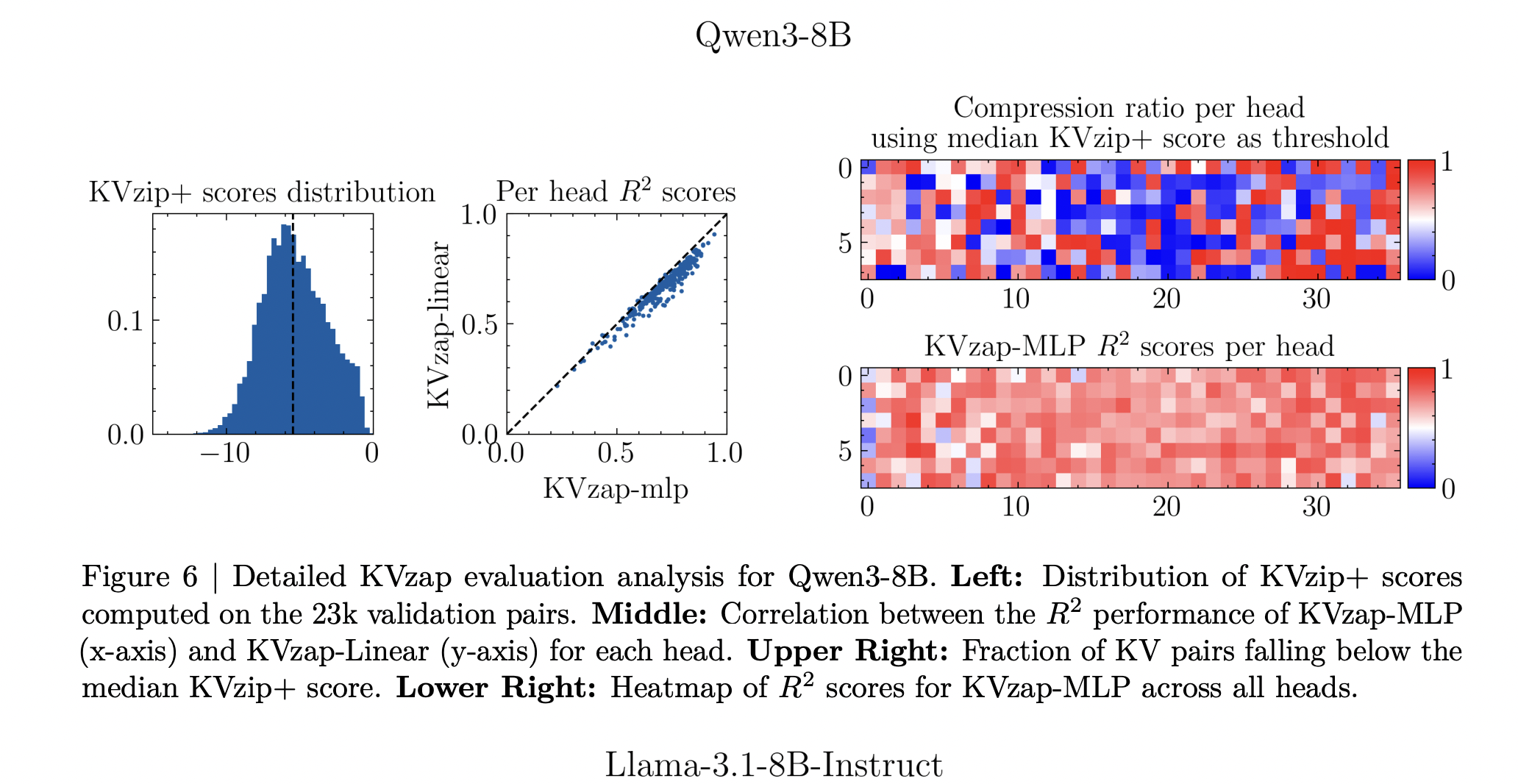

Training Nemotron uses signals from pretraining dataset samples. The research team filtered 27k signals for lengths between 750 and 1,250 tokens, sampling up to 500 signals per subgroup, and then sampling 500 token positions per signal. For each major price top they receive approximately 1.2 million training pairs and a validation set of 23k pairs. The surrogate learns to return the logged KVzip+ score from the hidden state. In all models, the squared Pearson correlation between predictors and Oracle scores reaches approximately between 0.63 and 0.77, with the MLP version consistently outperforming the linear version.

Thresholding, sliding window and negligible overhead

During inference, the KVzap model processes the hidden states and generates a score for each cache entry. Entries with scores below a certain threshold are cut off, while a sliding window of the latest 128 tokens is always kept. The research team provides a concise PyTorch-style function that invokes the model, sets the score of the local window to infinity and returns a compressed key and value tensor. In all experiments, pruning is used after the attention operation.

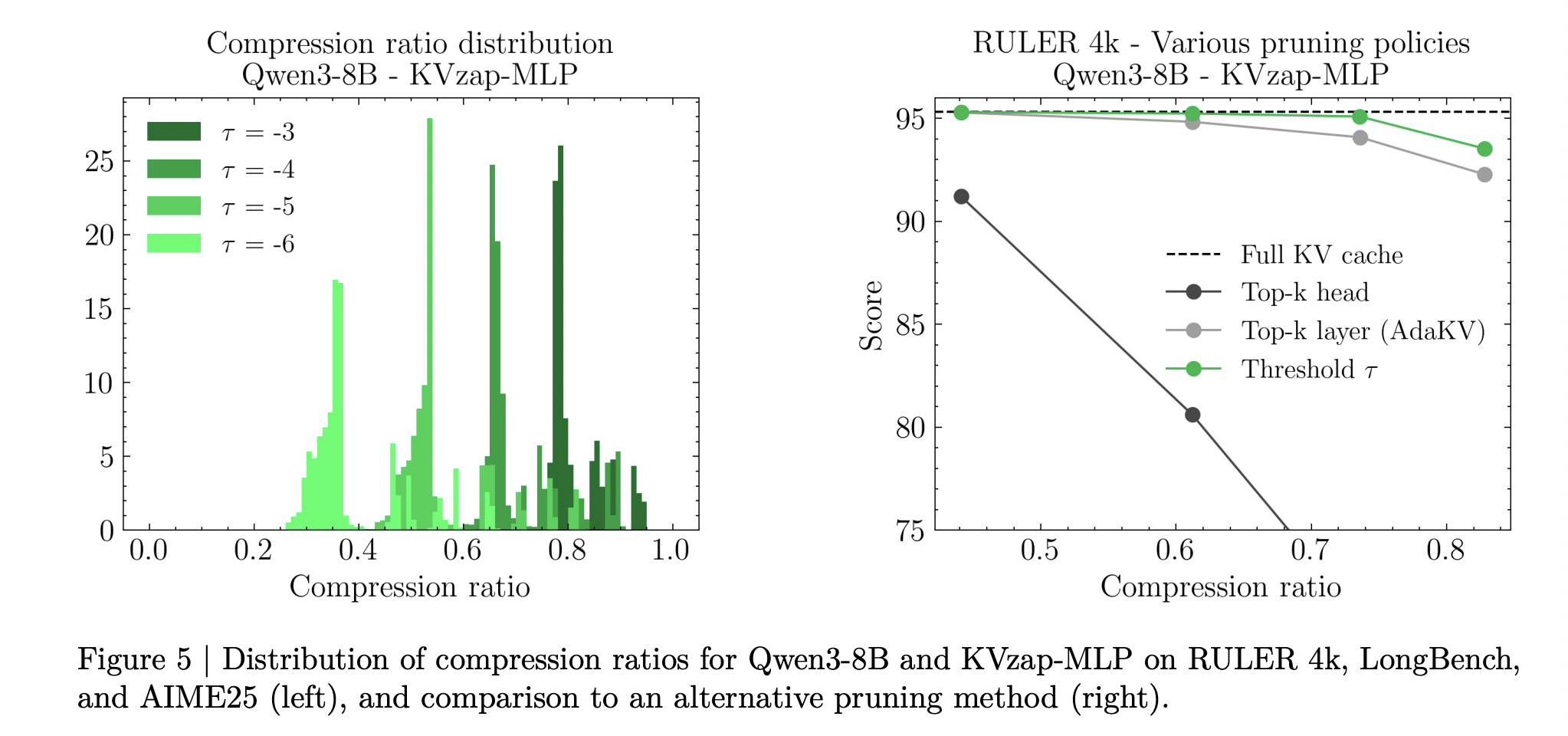

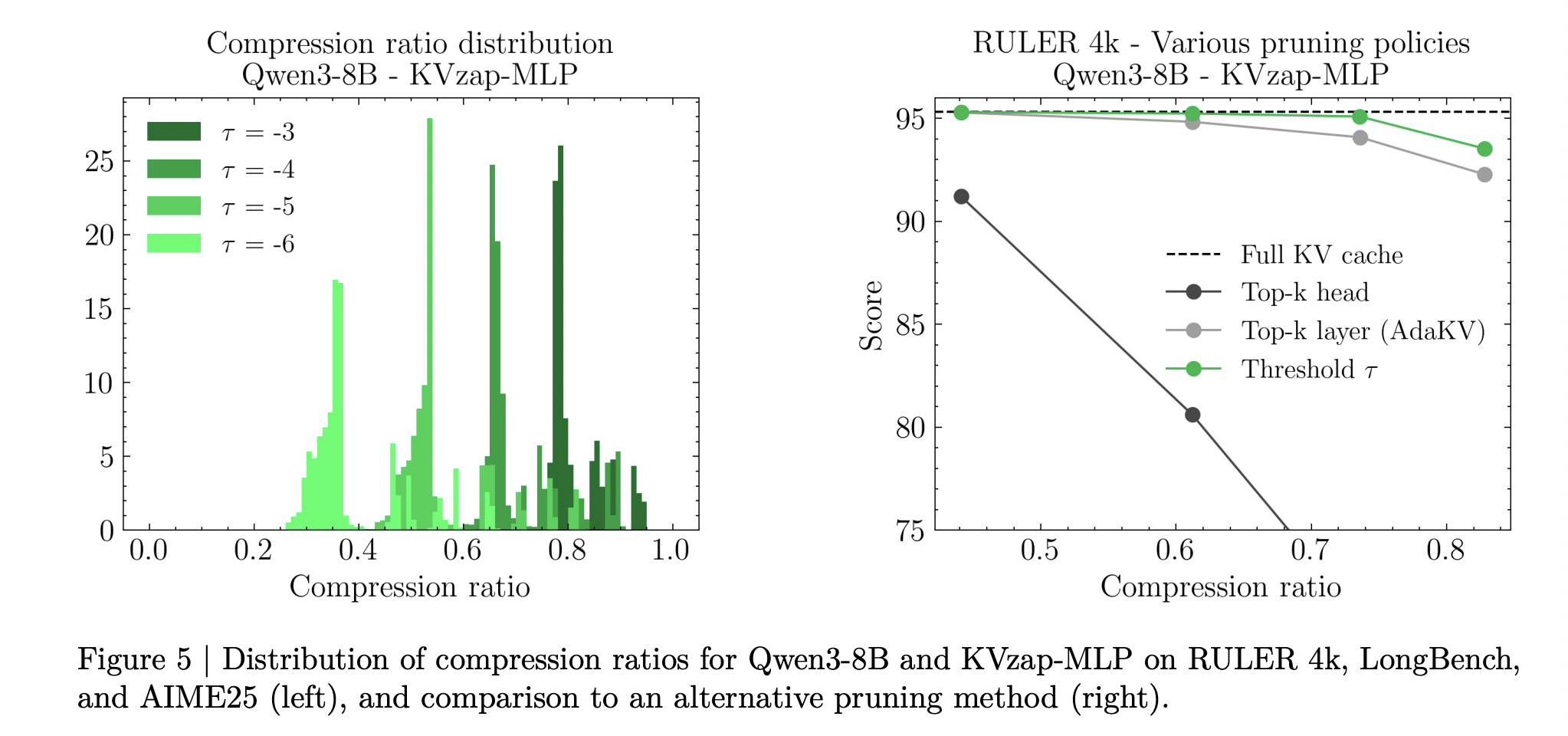

KVzap uses score thresholding instead of fixed top k selection. A single threshold produces different effective compression ratios on different benchmarks and even on signals within the same benchmark. The research team reports up to 20 percent variation in compression ratio in signals over a certain range, reflecting differences in information density.

Compute overhead is small. An analysis at the layer level shows that the additional cost of the KVZAP MLP is at most 1.1 percent of the linear projection FLOP, while the linear version adds about 0.02 percent. Relative memory overhead follows similar values. In the long context regime, the quadratic cost of attention dominates so additional FLOPs are effectively negligible.

Results on RULER, LongBench and AIME25

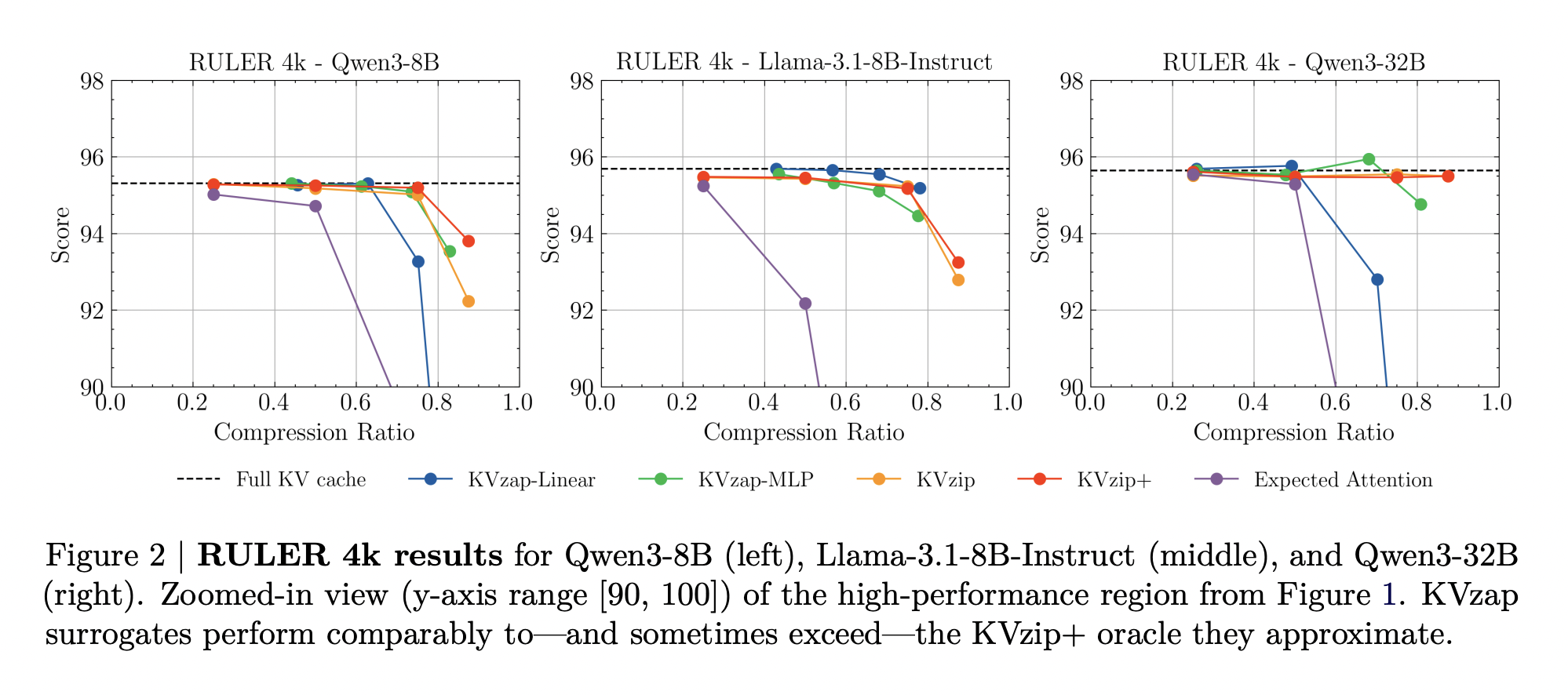

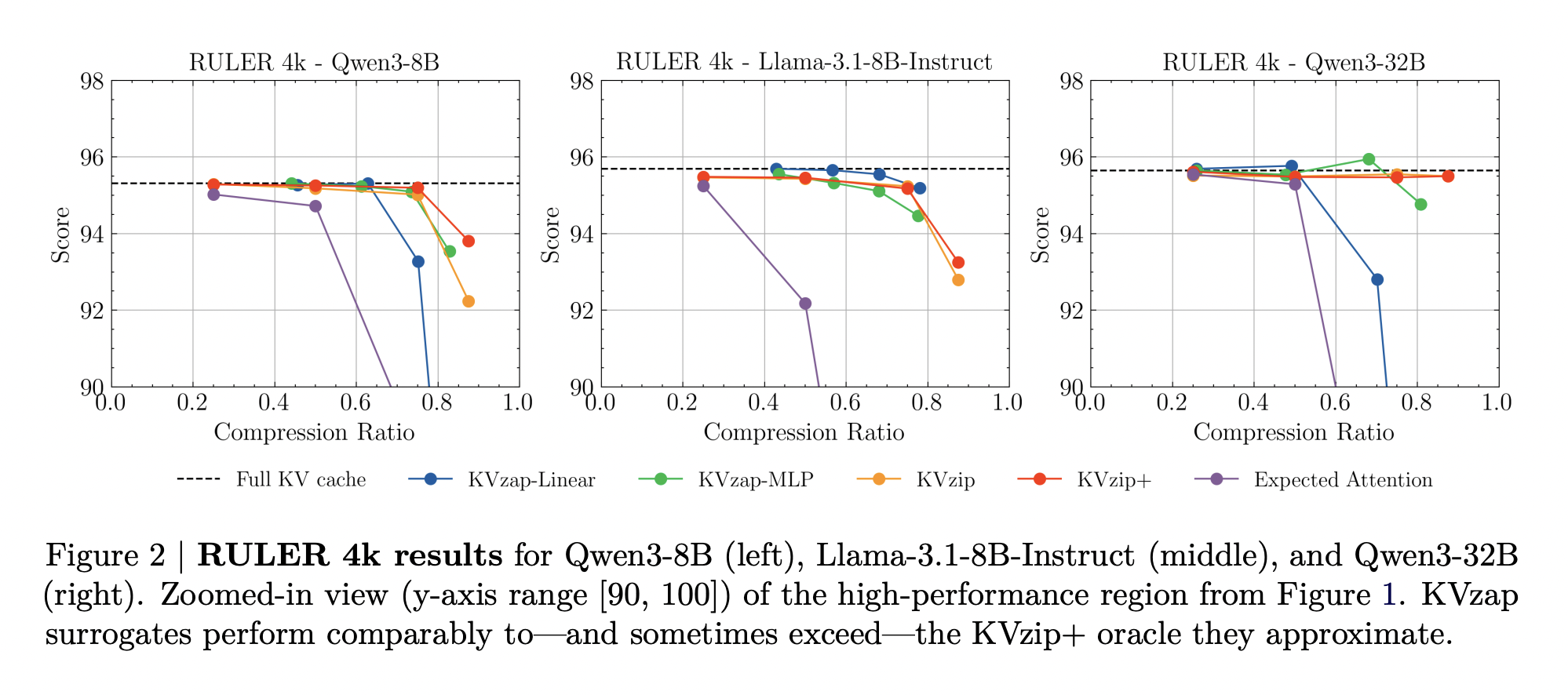

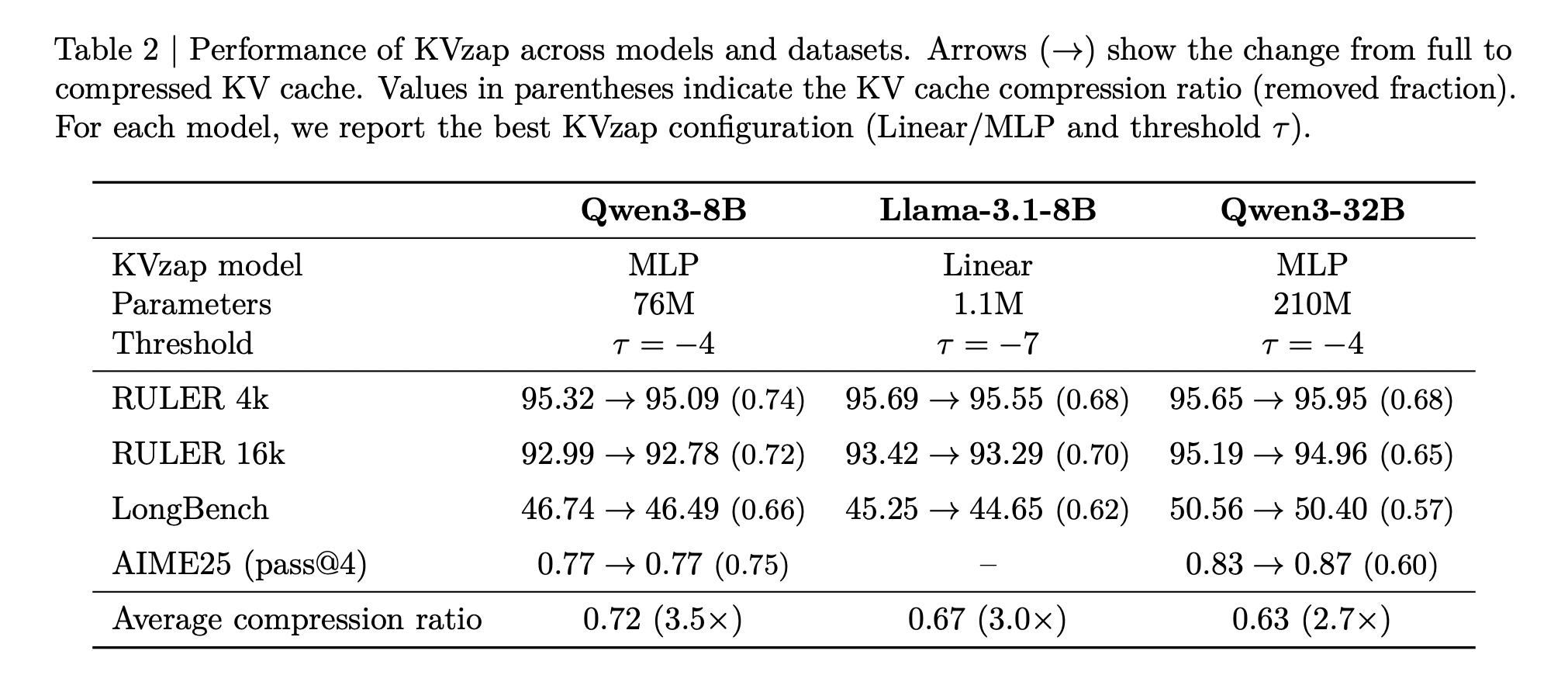

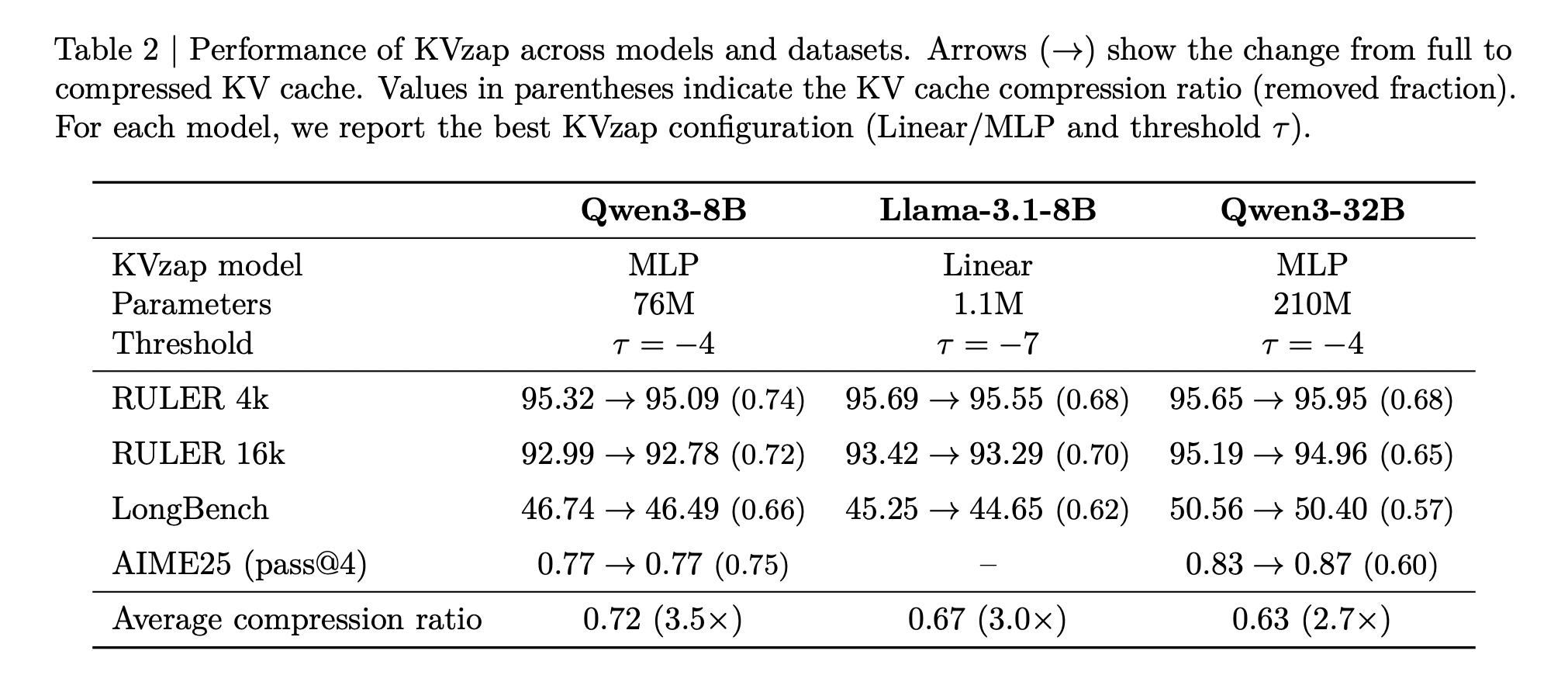

KVzap is evaluated on long context and logic benchmarks using Qwen3-8B, Llama-3.1-8B Instruct and Qwen3-32B. Long reference behavior is measured on RULER and LongBench. RULER uses synthetic tasks on sequence lengths from 4k to 128k tokens, while LongBench uses real-world documents from multiple task categories. AIME25 provides a mathematics reasoning workload with 30 Olympiad level problems which are evaluated under pass at 1 and pass at 4.

On RULER, KVzap matches the full cache baseline within a small accuracy margin while removing a large fraction of the cache. For Qwen3-8B, the best KVzap configuration RULER achieves fraction deletion above 0.7 at 4k and 16k, while keeping the average score within a few tenths of a point of the full cache. Similar behavior also occurs for Llama-3.1-8B Instruct and Qwen3-32B.

On Longbench, the same limitations lead to lower compression ratios because the documents are less repetitive. KVzap stays close to the full cache baseline up to about 2 to 3x compression, while fixed budget methods such as expected attention drop off more on many subsets as compression increases.

On AIME25, KVzap MLP maintains or slightly improves pass at 4 accuracy at about 2 times the compression and remains usable even when discarding more than half the cache. Overly aggressive settings, for example linear variants on the high threshold that remove more than 90 percent of the entries, collapse performance as expected.

Overall, the above table shows that the best KVzap configuration per model provides an average cache compression between about 2.7 and 3.5, while keeping task scores very close to the full cache baseline in RULER, LongBench and AIME25.

key takeaways

- KVzap is an input adaptive approximation of KVzip+ that learns to predict oracle KV importance scores from hidden states using small per-layer surrogate models, either a linear layer or a shallow MLP, and then prunes out KV pairs with lower scores.

- Training uses Nemotron pretraining signals where KVzip+ provides supervision, generating approximately 1.2 million examples per instance and achieving squared correlations in the 0.6 to 0.8 range between predicted and oracle scores, which is sufficient for faithful cache importance ranking.

- KVzap applies a global score threshold with a fixed sliding window of recent tokens, so compression automatically quickly adapts to information density, and the research team reports up to 20 percent variation in compression achieved across signals at the same threshold.

- Across Qwen3-8B, Llama-3.1-8B Instruct and Qwen3-32B on RULER, LongBench and AIME25, KVzap reaches approximately 2 to 4x KV cache compression while keeping accuracy very close to full cache, and it achieves state-of-the-art tradeoffs on the NVIDIA KVpress leaderboard.

- The additional computation is small, about 1.1 percent additional FLOP for the MLP variant, and KVZAP is implemented in the open source KVPress framework with ready-to-use checkpoints on the hugging face, making it practical to integrate into an existing long reference LLM serving stack.

check it out paper And GitHub repo. Also, feel free to follow us Twitter And don’t forget to join us 100k+ ml subreddit and subscribe our newsletter. wait! Are you on Telegram? Now you can also connect with us on Telegram.

Asif Razzaq Marktechpost Media Inc. Is the CEO of. As a visionary entrepreneur and engineer, Asif is committed to harnessing the potential of Artificial Intelligence for social good. Their most recent endeavor is the launch of MarketTechPost, an Artificial Intelligence media platform, known for its in-depth coverage of Machine Learning and Deep Learning news that is technically robust and easily understood by a wide audience. The platform boasts of over 2 million monthly views, which shows its popularity among the audience.