Knowledge graphs and their limitations

As AI applications have developed rapidly, knowledge graphs (KGs) have emerged as a fundamental way to represent knowledge in machine-readable form. They organize information as triples (a head entity, a relation, and a tail entity), forming a graph in which entities are nodes and relations are edges. This representation lets machines traverse and reason over connected knowledge, supporting applications such as question answering, semantic analysis, and recommendation systems.
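
To make the triple structure concrete, here is a minimal Python sketch that stores facts as (head, relation, tail) tuples and answers one-hop lookups. The `KnowledgeGraph` class and the example facts are illustrative only and are not taken from any particular KG library.

```python
from __future__ import annotations

from collections import defaultdict
from typing import NamedTuple


class Triple(NamedTuple):
    head: str
    relation: str
    tail: str


class KnowledgeGraph:
    """Illustrative store: facts as (head, relation, tail) triples indexed by head."""

    def __init__(self) -> None:
        self.triples: set[Triple] = set()
        self._by_head: dict[str, set[Triple]] = defaultdict(set)

    def add(self, head: str, relation: str, tail: str) -> None:
        t = Triple(head, relation, tail)
        self.triples.add(t)
        self._by_head[head].add(t)

    def neighbors(self, entity: str, relation: str | None = None) -> list[str]:
        """Tail entities reachable from `entity` along one edge (optionally one relation)."""
        return sorted(
            t.tail for t in self._by_head.get(entity, ())
            if relation is None or t.relation == relation
        )


kg = KnowledgeGraph()
kg.add("Marie Curie", "born_in", "Warsaw")
kg.add("Marie Curie", "field", "Physics")
kg.add("Warsaw", "capital_of", "Poland")
print(kg.neighbors("Marie Curie"))             # ['Physics', 'Warsaw']
print(kg.neighbors("Marie Curie", "born_in"))  # ['Warsaw']
```

Each fact here is a bare triple. Nothing records when it held, where, or who asserted it, which is exactly the gap discussed next.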

Despite their effectiveness, knowledge graphs have notable limitations. They often omit important contextual information, which makes it difficult to capture the complexity and richness of real-world knowledge. Many KGs also suffer from data sparsity: entities and relations are incomplete or poorly linked. This incompleteness limits the contextual cues available during inference and makes effective reasoning difficult, even when KGs are combined with large language models.

Context graphs

Context graphs (CGs) extend traditional knowledge graphs with additional information such as time, location, and source. Rather than storing knowledge as isolated facts, they capture the situation in which a fact held or a decision was made, giving a clearer and more accurate picture of real-world knowledge.
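
One common way to attach context to a fact while still storing plain triples is reification: the fact itself becomes a node (a "statement"), and time, location, and source hang off it as ordinary edges. The sketch below assumes that encoding. Property graphs could store the same qualifiers as edge properties instead. The company names, dates, and source label are hypothetical.

```python
from itertools import count
from typing import List, Tuple

Triple = Tuple[str, str, str]
_statement_ids = count(1)


def reify(head: str, relation: str, tail: str, **context: str) -> List[Triple]:
    """Turn one fact plus its context into plain triples.

    The fact gets its own statement node so that context (time, location,
    source, ...) can be attached to it as ordinary edges.
    """
    stmt = f"stmt:{next(_statement_ids)}"
    triples = [
        (stmt, "subject", head),
        (stmt, "predicate", relation),
        (stmt, "object", tail),
    ]
    # Sorted only so the output order is deterministic.
    for key, value in sorted(context.items()):
        triples.append((stmt, key, value))
    return triples


# Hypothetical fact with time, location, and source context.
graph = reify(
    "AcmeCorp", "supplies", "BetaLtd",
    valid_from="2021-03", location="EU", source="annual_report_2022",
)
for t in graph:
    print(t)
```

The original triple is still recoverable from the `subject`/`predicate`/`object` edges, so existing triple-based tooling keeps working while the extra edges carry the context.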

When used in agent-based systems, context graphs also store how decisions were made. Agents need more than rules: they need to know how those rules were applied in the past, when exceptions were allowed, who approved decisions, and how conflicts were resolved. Because agents operate at the point where decisions are made, they are well placed to record this context as it happens.

Over time, these stored decision traces form a context graph that helps agents learn from past actions. This allows the system to understand not only what happened, but also why it happened, making the agent’s behavior more consistent and reliable.
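
A decision trace can be as simple as a record of the action, the policy checked, the inputs, any exception and its approver, and the outcome. The sketch below assumes that hypothetical schema (`DecisionTrace`, `TraceStore`, and the refund policy are illustrative names, not an existing API) and shows how an agent might look up earlier exceptions under the same policy before deciding a new case.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class DecisionTrace:
    """One recorded decision and its surrounding context (hypothetical schema)."""
    decision_id: str
    action: str                  # what was decided, e.g. "refund"
    policy: str                  # rule that was checked
    inputs: dict                 # data the agent looked at
    exception: Optional[str]     # why the policy was overridden, if it was
    approved_by: Optional[str]   # who signed off on the exception
    outcome: str                 # what happened afterwards


@dataclass
class TraceStore:
    traces: List[DecisionTrace] = field(default_factory=list)

    def record(self, trace: DecisionTrace) -> None:
        self.traces.append(trace)

    def precedents(self, policy: str, exceptions_only: bool = False) -> List[DecisionTrace]:
        """Past decisions made under the same policy, optionally only the exceptions."""
        return [
            t for t in self.traces
            if t.policy == policy and (not exceptions_only or t.exception is not None)
        ]


store = TraceStore()
store.record(DecisionTrace("d1", "refund", "refund_window_30d",
                           {"days_since_purchase": 12}, None, None, "resolved"))
store.record(DecisionTrace("d2", "refund", "refund_window_30d",
                           {"days_since_purchase": 41}, "item arrived damaged",
                           "support_lead", "resolved"))

for t in store.precedents("refund_window_30d", exceptions_only=True):
    print(t.decision_id, t.exception, t.approved_by)  # d2 item arrived damaged support_lead
```

A linear scan is enough for illustration; a real store would index traces by policy and entity so that precedents can be found quickly.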

Why does contextual information matter?

Contextual information goes beyond simple entity-relationship facts and adds important layers to knowledge representation. It helps distinguish between phenomena that appear similar but occur under different conditions, such as differences in time, space, or scale. For example, two companies may be competitors in one market or time period but not in another. By capturing such context, systems can represent knowledge in more detail and avoid treating seemingly similar facts as identical.
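
The competitor example can be sketched by qualifying each fact with a market and a validity period. In this minimal version (the companies, markets, and years are hypothetical), the two facts collapse into one edge when reduced to bare triples, but the context decides whether the relationship holds for a given market and year.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class QualifiedFact:
    head: str
    relation: str
    tail: str
    market: str
    start_year: int
    end_year: Optional[int] = None  # None means the fact still holds

    def holds(self, market: str, year: int) -> bool:
        in_range = self.start_year <= year and (self.end_year is None or year <= self.end_year)
        return self.market == market and in_range


# Hypothetical facts: same triple, different context.
facts: List[QualifiedFact] = [
    QualifiedFact("CompanyA", "competes_with", "CompanyB", "cloud", 2015),
    QualifiedFact("CompanyA", "competes_with", "CompanyB", "smartphones", 2010, 2016),
]


def competitors(a: str, b: str, market: str, year: int) -> bool:
    return any(
        f.head == a and f.tail == b and f.relation == "competes_with" and f.holds(market, year)
        for f in facts
    )


# Reduced to bare triples, both facts become one indistinguishable edge.
print({(f.head, f.relation, f.tail) for f in facts})  # {('CompanyA', 'competes_with', 'CompanyB')}
print(competitors("CompanyA", "CompanyB", "cloud", 2020))        # True
print(competitors("CompanyA", "CompanyB", "smartphones", 2020))  # False
print(competitors("CompanyA", "CompanyB", "smartphones", 2012))  # True
```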

In context graphs, contextual information also plays an important role in reasoning and decision-making. This includes information such as historical decisions, applicable policies, exceptions granted, approvals involved, and related events in other systems. When agents record how a decision was made (what data was used, which rules were checked, and why an exception was allowed), that record becomes a reusable reference for future decisions. Over time, these records link entities that are not directly connected and let the system reason from past outcomes and precedents rather than relying solely on fixed rules or isolated triples.
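
To see how decision records link entities that share no direct edge, the sketch below treats each decision as a node connected to the entities it touched, then runs a breadth-first search between two customers. The decision IDs, customers, policy, and approvers are hypothetical. The point is only that the path exists through the shared policy.

```python
from collections import defaultdict, deque
from typing import Dict, List, Optional, Set

# Hypothetical decision records: each lists the entities it touched.
decisions = {
    "dec-101": ["customer:alice", "policy:late_refund", "approver:sam"],
    "dec-102": ["customer:bob", "policy:late_refund", "approver:kim"],
}

# Undirected graph with edges between each decision node and its entities.
graph: Dict[str, Set[str]] = defaultdict(set)
for dec, entities in decisions.items():
    for e in entities:
        graph[dec].add(e)
        graph[e].add(dec)


def path(start: str, goal: str) -> Optional[List[str]]:
    """Breadth-first search for the shortest chain of links between two nodes."""
    prev: Dict[str, Optional[str]] = {start: None}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if node == goal:
            # Walk back through predecessors to rebuild the path.
            out = []
            while node is not None:
                out.append(node)
                node = prev[node]
            return out[::-1]
        for nxt in sorted(graph[node]):  # sorted for deterministic output
            if nxt not in prev:
                prev[nxt] = node
                queue.append(nxt)
    return None


print(path("customer:alice", "customer:bob"))
# ['customer:alice', 'dec-101', 'policy:late_refund', 'dec-102', 'customer:bob']
```

Alice and Bob have no edge between them, but both decisions went through the same late-refund policy. An agent handling Bob's case can therefore reach Alice's earlier decision as a precedent.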

AI systems have shifted markedly from static tools to decision-making agents, a change driven largely by major industry players. Real-world decisions are rarely based on rules alone; they involve exceptions, approvals, and lessons from previous cases. Context graphs address this gap by capturing how decisions are made within a system: which policies were checked, what data was used, who approved the decision, and what outcome followed. By structuring this decision history as context, agents can reuse prior decisions instead of relearning the same edge cases repeatedly. Some examples of this change include:

Google

- Both Gmail’s Gemini features and the Gemini 3-based agent framework show a shift from simple assistance to AI that actively makes decisions, whether managing inbox preferences or running complex workflows.

- Gmail relies on conversation history and user intent, while Gemini 3 agents use memory and state to handle longer tasks. In both cases, context matters more than single signals.

- Gemini 3 serves as an orchestration layer for multi-agent systems (ADK, Agno, Letta, eAgent), just as Gemini orchestrates summarization, authoring, and prioritization inside Gmail.

- Features like AI inbox and suggested replies rely on a persistent understanding of user behavior, just as agent frameworks like Letta and Mem0 rely on stateful memory to prevent context loss and ensure consistent behavior.

- Gmail turns emails into actionable summaries and tasks, while Gemini-powered agents automate browser, workflow, and enterprise tasks. Both reflect a broader shift toward AI systems that act, not just respond.

OpenAI

- ChatGPT Health brings health data from different sources (medical records, apps, wearables, and notes) into one place. This creates a shared context that helps the system understand health patterns over time instead of answering each question in isolation, much as context graphs connect facts to their context.

- Using personal health history and past interactions, ChatGPT Health helps users make better-informed decisions, such as preparing for doctor visits or interpreting test results.

- Health runs in a separate, secure space that keeps sensitive information private and contained. This keeps the health context accurate and intact, which is essential for using context-based systems such as context graphs safely.

JPMorgan

- JPMorgan has shown a shift toward building in-house decision systems, replacing proxy advisors with its own AI tool, Proxy IQ, which collects and analyzes voting data across thousands of meetings instead of relying on third-party recommendations.

- By analyzing proxy data internally, firms can incorporate historical voting behavior, company-specific details, and firm-level policies, which aligns with the idea of context graphs that preserve decision-making patterns over time.

- Internal AI-based analytics provides JPMorgan with greater transparency, speed and consistency in proxy voting, reflecting the broader move toward context-aware, AI-powered decision making in enterprise settings.

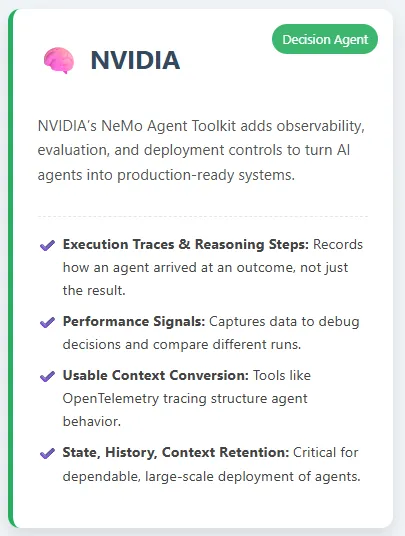

NVIDIA

- NVIDIA’s NeMo Agent Toolkit helps turn AI agents into production-ready systems by adding observability, evaluation, and deployment controls. By capturing execution traces, reasoning steps, and performance signals, it records how an agent arrived at an outcome, not just the end result, which aligns closely with the idea of context graphs.

- Tools such as OpenTelemetry tracing and structured evaluations turn agent behavior into usable context. This makes it easier to debug decisions, compare different runs, and continually improve reliability.

- Just as DLSS 4.5 integrates AI deeply into real-time graphics pipelines, the NeMo Agent Toolkit integrates AI agents into enterprise workflows. Both highlight the broader shift toward AI systems that maintain state, history, and context, which is critical for reliable, large-scale deployment.
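
The idea of capturing execution traces and comparing runs can be illustrated without any particular framework. The sketch below is plain Python and deliberately does not use the NeMo Agent Toolkit or OpenTelemetry APIs. `Run`, `Span`, `step`, and `first_divergence` are hypothetical names. Each agent step is timed and appended to a run's trace, and two runs are compared to find where their behavior first differed.

```python
from __future__ import annotations

import time
from contextlib import contextmanager
from dataclasses import dataclass, field
from typing import Iterator, List


@dataclass
class Span:
    name: str          # which step the agent took
    detail: str        # free-form context, e.g. what was retrieved
    duration_s: float  # wall-clock time spent in the step


@dataclass
class Run:
    run_id: str
    spans: List[Span] = field(default_factory=list)

    @contextmanager
    def step(self, name: str, detail: str = "") -> Iterator[None]:
        """Time one agent step and append it to this run's trace, even if it raises."""
        start = time.perf_counter()
        try:
            yield
        finally:
            self.spans.append(Span(name, detail, time.perf_counter() - start))

    def path(self) -> List[str]:
        return [s.name for s in self.spans]


def first_divergence(a: Run, b: Run) -> int | None:
    """Index of the first step where two runs differ, or None if identical."""
    for i, (x, y) in enumerate(zip(a.path(), b.path())):
        if x != y:
            return i
    if len(a.spans) != len(b.spans):
        return min(len(a.spans), len(b.spans))
    return None


run1, run2 = Run("r1"), Run("r2")
with run1.step("retrieve", "policy docs"):
    pass  # the agent's real work would go here
with run1.step("answer"):
    pass
with run2.step("retrieve", "policy docs"):
    pass
with run2.step("escalate", "low confidence"):
    pass

print(run1.path())                   # ['retrieve', 'answer']
print(first_divergence(run1, run2))  # 1
```

Because each span keeps its `detail`, the divergence index points straight at the context (here, "low confidence") that explains why the second run escalated instead of answering.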

Microsoft

- Copilot Checkout and brand agents turn shopping conversations into direct purchases. Questions, comparisons, and decisions all happen in one place, creating clear context about why the customer chose a product.

- These AI agents work exactly where purchasing decisions happen (in chat and on brand websites), allowing them to guide users and complete checkout without extra steps.

- Merchants retain control over transactions and customer data. Over time, these interactions build up useful insight into customer intent and purchasing patterns, helping future decisions become faster and more accurate.

I am a Civil Engineering graduate (2022) from Jamia Millia Islamia, New Delhi, and I have a keen interest in Data Science, especially Neural Networks and their application in various fields.