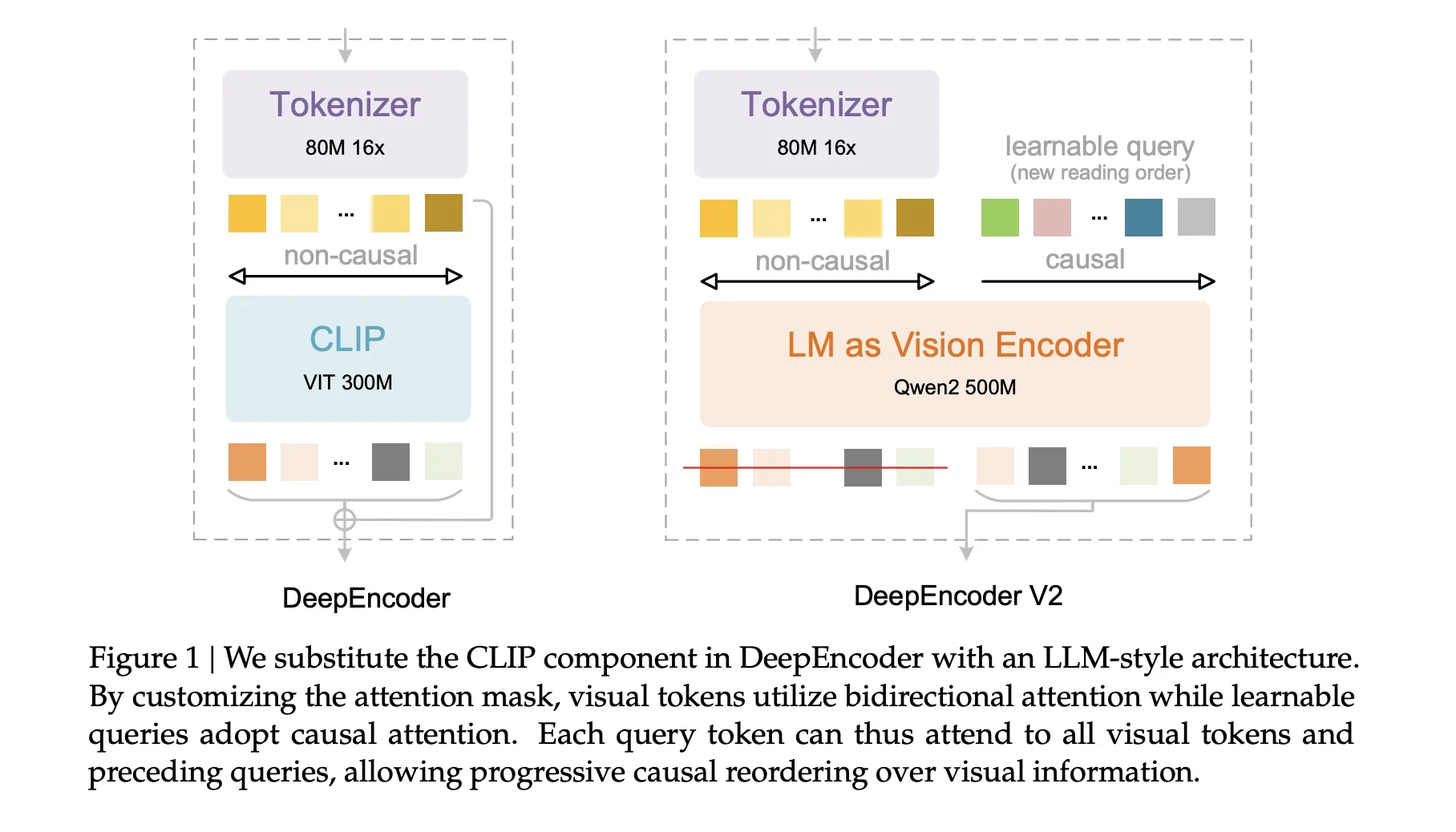

DeepSeek AI released DeepSeek-OCR 2, an open source document OCR and understanding system that reorganizes its vision encoder to read pages in a causal order that is closer to the way humans scan complex documents. The core component is DeepEncoder V2, which is a language model style transformer that converts a 2D page into a 1D sequence of visual tokens that follow a learned reading flow before text decoding even begins.

From raster sequences to causal visual flow

Most multimodal models still flatten images into a fixed raster sequence, from top left to bottom right, and apply a transformer with static positional encodings. This is a poor match for documents with multi-column layouts, nested tables, and mixed-language regions. Human readers instead follow a semantic order that jumps between regions.

DeepSeek-OCR 2 keeps the encoder-decoder structure of DeepSeek-OCR but replaces the original CLIP ViT based visual encoder with DeepEncoder V2. The decoder remains DeepSeek-3B-A500M, a MoE language model with about 3B total parameters and about 500M active parameters per token. The goal is to let the encoder perform causal reasoning over visual tokens and hand the decoder a sequence that is already aligned with a likely reading order.

Vision Tokenizer and Token Budget

The vision tokenizer is inherited from DeepSeek-OCR. It uses an 80M parameter SAM base backbone followed by 2 convolution layers. This stage downsamples the image so that the visual token count is reduced by a factor of 16, and it compresses the features to an embedding dimension of 896.

DeepSeek-OCR 2 uses a global and local multi crop strategy to cover dense pages without inflating the token count. A global view at 1024×1024 resolution produces 256 tokens. Up to 6 local crops at 768×768 resolution add 144 tokens each. As a result, the visual token count ranges from 256 to 1120 per page. This upper limit is slightly smaller than the 1156 token budget used in the Gundam mode of the original DeepSeek-OCR, and it is comparable to the budget used by Gemini-3 Pro on OmniDocBench.
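The token counts above can be reproduced with a few lines of arithmetic. The sketch below is illustrative only: the function names are hypothetical, and the 16×16 pixel patch size is an assumption (it is not stated in this article) chosen because it makes the 16x downsampling match the quoted 256 and 144 token counts.

```python
def tokens_per_view(side_px: int, patch_px: int = 16, downsample: int = 16) -> int:
    """Visual tokens for a square view.

    patch_px=16 is an assumed patch size; downsample=16 is the token
    reduction factor described for the vision tokenizer.
    """
    patches = (side_px // patch_px) ** 2
    return patches // downsample


def page_token_budget(num_local_crops: int) -> int:
    """Total visual tokens: one 1024x1024 global view plus 0 to 6 local 768x768 crops."""
    if not 0 <= num_local_crops <= 6:
        raise ValueError("the article describes between 0 and 6 local crops")
    return tokens_per_view(1024) + num_local_crops * tokens_per_view(768)


if __name__ == "__main__":
    for crops in range(7):
        print(crops, page_token_budget(crops))
```

With no local crops the budget is 256 tokens, and with all 6 crops it is 256 + 6 × 144 = 1120 tokens, matching the range quoted above.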

DeepEncoder-V2, Language Model as Vision Encoder

DeepEncoder-V2 is built by instantiating a Qwen2-0.5B style transformer as a vision encoder. The input sequence is built as follows. First, all visual tokens from the tokenizer form the prefix. Then a set of learnable query tokens, called causal flow tokens, is appended as the suffix. The number of causal flow tokens equals the number of visual tokens.

The attention pattern is asymmetric. Visual tokens use bidirectional attention and see all other visual tokens. Causal flow tokens use causal attention and can see all visual tokens but only the preceding causal flow tokens. Only the outputs at causal flow positions are passed to the decoder. In effect, the encoder learns a mapping from a 2D grid of visual tokens to a 1D causal sequence of flow tokens that encodes a proposed reading order and local context.
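The asymmetric attention pattern can be pictured as a boolean mask over a sequence made of a visual prefix and a flow suffix. The following is a minimal sketch, not the released implementation: the function names are hypothetical, and it assumes that visual tokens do not attend to flow tokens and that each flow token may attend to itself, as in standard prefix-LM and causal masking. The article does not spell out either detail.

```python
from typing import List


def build_attention_mask(num_visual: int) -> List[List[bool]]:
    """mask[q][k] is True when query position q may attend to key position k.

    Layout: positions [0, n) are visual tokens, [n, 2n) are causal flow tokens,
    since the number of flow tokens equals the number of visual tokens.
    """
    n = num_visual
    total = 2 * n
    mask = [[False] * total for _ in range(total)]
    for q in range(total):
        for k in range(total):
            if k < n:
                # Every query, visual or flow, sees the whole visual prefix.
                mask[q][k] = True
            elif q >= n:
                # Flow queries see flow keys up to and including their own position.
                mask[q][k] = k <= q
            # Visual queries never see flow keys (assumption, prefix-LM style).
    return mask


def select_flow_outputs(outputs: list, num_visual: int) -> list:
    """Keep only the outputs at flow positions, which is what the decoder receives."""
    return outputs[num_visual:]


if __name__ == "__main__":
    for row in build_attention_mask(2):
        print("".join("x" if allowed else "." for allowed in row))
```

For 2 visual tokens the mask has a fully visible 2-column visual block on the left for every row, and a lower-triangular block in the bottom-right corner for the flow tokens.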

This design decomposes the problem into 2 stages. DeepEncoder-V2 performs causal reasoning over visual structure and reading order. DeepSeek-3B-A500M then performs causal decoding of the text, conditioned on this reordered visual input.

Training Pipeline

The training data pipeline follows DeepSeek-OCR and focuses on OCR-heavy content. OCR data accounts for 80 percent of the data mix. The research team rebalances sampling across text, formulas, and tables using a 3:1:1 ratio so that the model sees enough structure-heavy examples.
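As a rough illustration of what these numbers imply, the sketch below computes per-category shares of the full mix. It assumes that the 3:1:1 ratio applies within the 80 percent OCR portion and that the remaining 20 percent is non-OCR data. The article does not state this explicitly, and the names `OCR_SHARE`, `RATIO`, and `mix_shares` are hypothetical.

```python
from fractions import Fraction
from typing import Dict

OCR_SHARE = Fraction(80, 100)                        # OCR portion of the data mix
RATIO = {"text": 3, "formulas": 1, "tables": 1}     # 3:1:1 sampling ratio


def mix_shares(ocr_share: Fraction = OCR_SHARE,
               ratio: Dict[str, int] = RATIO) -> Dict[str, Fraction]:
    """Share of the full mix per category, assuming the ratio splits the OCR portion."""
    total_weight = sum(ratio.values())
    shares = {name: ocr_share * Fraction(weight, total_weight)
              for name, weight in ratio.items()}
    shares["non_ocr"] = 1 - ocr_share
    return shares


if __name__ == "__main__":
    for name, share in mix_shares().items():
        print(f"{name}: {float(share):.0%}")
```

Under these assumptions, text makes up 48 percent of all samples, formulas and tables 16 percent each, and non-OCR data the remaining 20 percent.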

Training takes place in 3 stages:

In stage 1, encoder pretraining couples DeepEncoder-V2 with a small decoder and uses a standard language modeling objective. The model is trained at 768×768 and 1024×1024 resolutions with multi scale sampling. The vision tokenizer is initialized from the original DeepEncoder. The LLM style encoder is initialized from the Qwen2-0.5B base model. The optimizer is AdamW, with cosine learning rate decay from 1e-4 to 1e-6 over 40k iterations. Training uses approximately 160 A100 GPUs, a packed sequence length of 8k, and a large mixture of document image-text samples.
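For readers unfamiliar with cosine decay, the stage 1 schedule can be sketched as a plain function of the step index. This is a generic cosine schedule under stated assumptions, not the team's training code: the function name is hypothetical, and no warmup phase is modeled because the article does not mention one.

```python
import math


def cosine_lr(step: int, total_steps: int, lr_max: float, lr_min: float) -> float:
    """Cosine decay from lr_max at step 0 to lr_min at total_steps (no warmup assumed)."""
    # Clamp the step so values outside the schedule stay at the endpoints.
    progress = min(max(step, 0), total_steps) / total_steps
    return lr_min + 0.5 * (lr_max - lr_min) * (1.0 + math.cos(math.pi * progress))


if __name__ == "__main__":
    # Stage 1 values from the article: 1e-4 down to 1e-6 over 40k iterations.
    for step in (0, 10_000, 20_000, 30_000, 40_000):
        print(step, f"{cosine_lr(step, 40_000, 1e-4, 1e-6):.3e}")
```

The learning rate starts at the maximum, passes the midpoint between the two endpoints halfway through, and flattens out as it approaches the minimum.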

In stage 2, query enhancement attaches DeepEncoder-V2 to DeepSeek-3B-A500M and introduces multi crop views. The tokenizer is frozen. The encoder and decoder are jointly trained with 4-stage pipeline parallelism and 40 data parallel replicas. The global batch size is 1280, and the schedule runs for 15k iterations with the learning rate decaying from 5e-5 to 1e-6.

In stage 3, all encoder parameters are frozen. Only the DeepSeek decoder is trained, so that it adapts better to the reordered visual tokens. This stage uses the same batch size but a shorter schedule and a lower learning rate that decays from 1e-6 to 5e-8 over 20k iterations. Freezing the encoder at this stage more than doubles the training throughput.

Benchmark results on OmniDocBench

The main evaluation uses OmniDocBench-v1.5. This benchmark contains 1355 pages in 9 document categories in Chinese and English, including books, academic papers, forms, presentations, and newspapers. Each page is annotated with layout elements such as text spans, equations, tables and figures.

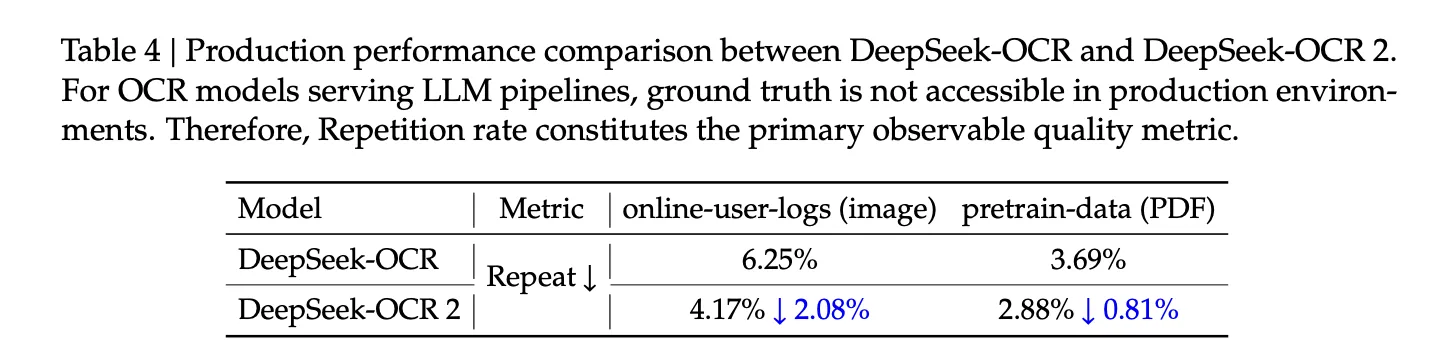

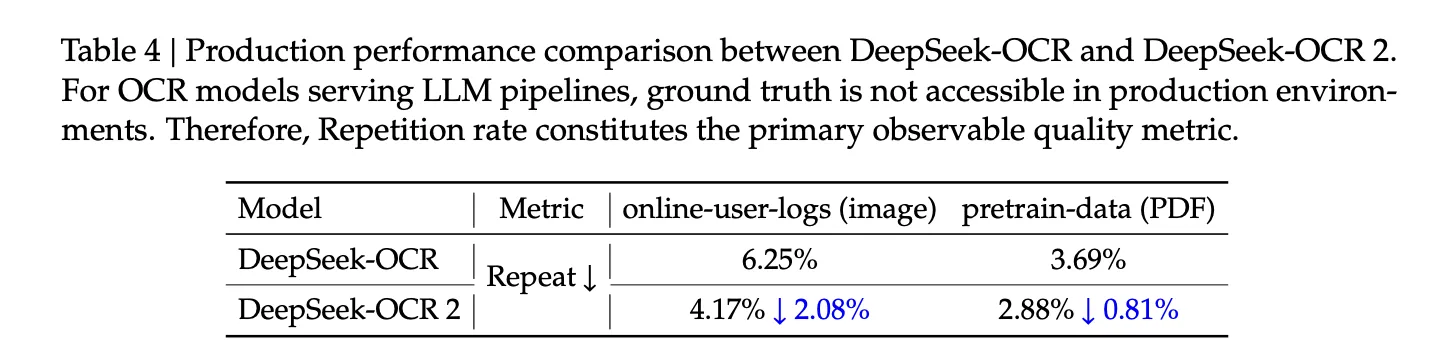

DeepSeek-OCR 2 achieves an overall OmniDocBench score of 91.09 with a maximum of 1120 visual tokens. The original DeepSeek-OCR baseline scores 87.36 with a maximum of 1156 tokens. DeepSeek-OCR 2 therefore gains 3.73 points while using a slightly smaller token budget.

The reading order (R-order) edit distance, which measures the difference between predicted and ground truth reading sequences, drops from 0.085 to 0.057. Text edit distance decreases from 0.073 to 0.048. Formula and table edit distances also decrease, indicating better parsing of math and structured regions.
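To make the metric concrete, here is a minimal sketch of a normalized edit distance that works on both strings and sequences of block identifiers. It is the generic Levenshtein formulation, normalized by the longer sequence length. OmniDocBench's exact matching and normalization rules are not described in this article, so treat the details as assumptions and the function names as hypothetical.

```python
from typing import Sequence


def levenshtein(a: Sequence, b: Sequence) -> int:
    """Minimum number of insertions, deletions, and substitutions turning a into b."""
    prev = list(range(len(b) + 1))
    for i, item_a in enumerate(a, start=1):
        curr = [i]
        for j, item_b in enumerate(b, start=1):
            cost = 0 if item_a == item_b else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]


def normalized_edit_distance(pred: Sequence, ref: Sequence) -> float:
    """Edit distance divided by the longer length; 0.0 means identical sequences."""
    longest = max(len(pred), len(ref))
    return 0.0 if longest == 0 else levenshtein(pred, ref) / longest


if __name__ == "__main__":
    # Reading order as a sequence of block ids: two blocks are swapped.
    print(normalized_edit_distance([1, 3, 2, 4], [1, 2, 3, 4]))
    # Text recognition on a short string.
    print(normalized_edit_distance("kitten", "sitting"))
```

Lower is better for every edit distance reported in this section: a perfect reading order or transcription yields 0.0.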

Viewed as a document parser, DeepSeek-OCR 2 achieves an overall element level edit distance of 0.100. The original DeepSeek-OCR reaches 0.129 and Gemini-3 Pro reaches 0.115 under the same visual token constraints. This suggests that the causal visual flow encoder improves structural fidelity without expanding the token budget.

By category, DeepSeek-OCR 2 improves text edit distance for most document types, such as academic papers and books. Performance is weaker on very dense newspapers, where text edit distance remains above 0.13. The research team attributes this to limited training data for newspapers and heavy compression at extreme text density. Reading order metrics, however, improve across all categories.

Key Takeaways

- DeepSeek-OCR 2 replaces a CLIP ViT style encoder with DeepEncoder-V2, a Qwen2-0.5B based language model encoder that converts a 2D document page into a 1D sequence of causal flow tokens aligned with a learned reading order.

- The vision tokenizer uses an 80M parameter SAM base backbone with convolutions and multi crop global and local views. It keeps the visual token budget between 256 and 1120 tokens per page, slightly below the original DeepSeek-OCR Gundam mode and comparable to the budget used by Gemini-3 Pro.

- Training follows a 3-stage pipeline: encoder pretraining, joint query enhancement with DeepSeek-3B-A500M, and decoder-only fine-tuning with the encoder frozen. The data mix is OCR-heavy, with 80 percent OCR data and a 3:1:1 sampling ratio across text, formulas, and tables.

- On OmniDocBench v1.5, with 1355 pages and 9 document categories, DeepSeek-OCR 2 reaches an overall score of 91.09 against 87.36 for DeepSeek-OCR and reduces reading order edit distance from 0.085 to 0.057. Its element level edit distance is 0.100, compared with 0.129 for DeepSeek-OCR and 0.115 for Gemini-3 Pro under the same visual token budget.

Michael Sutter is a data science professional and holds a Master of Science in Data Science from the University of Padova. With a solid foundation in statistical analysis, machine learning, and data engineering, Michael excels in transforming complex datasets into actionable insights.