Author(s): Shahidullah Kausar

Originally published on Towards AI.

Machine Learning Interview Prep Part 16

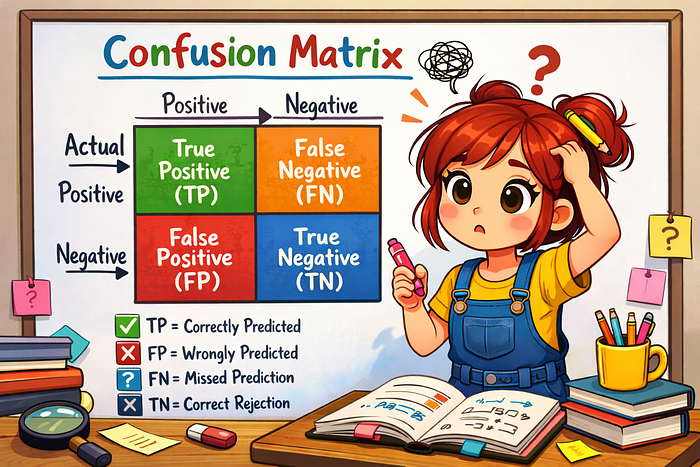

A confusion matrix is a table used to evaluate the performance of a classification model by comparing predicted labels with actual labels. For a binary classifier, it summarizes the results into four outcomes: true positive, true negative, false positive, and false negative. This structure shows not only how many predictions were correct, but also what types of errors the model made. From the confusion matrix, important metrics such as accuracy, precision, recall, and F1-score can be calculated. These metrics are particularly useful when dealing with imbalanced datasets, where accuracy alone can be misleading.
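To make the four outcomes and the derived metrics concrete, here is a minimal sketch in plain Python. The helper names (`confusion_counts`, `metrics_from_counts`) and the toy labels are hypothetical and chosen only for illustration; they are not taken from the original article. The data is deliberately imbalanced (95 negatives, 5 positives) to show how accuracy can look strong while recall stays poor.

```python
def confusion_counts(y_true, y_pred, positive=1):
    """Count the four confusion-matrix outcomes for a binary problem."""
    tp = tn = fp = fn = 0
    for actual, predicted in zip(y_true, y_pred):
        if predicted == positive:
            if actual == positive:
                tp += 1  # predicted positive, actually positive
            else:
                fp += 1  # predicted positive, actually negative
        else:
            if actual == positive:
                fn += 1  # predicted negative, actually positive
            else:
                tn += 1  # predicted negative, actually negative
    return tp, tn, fp, fn


def metrics_from_counts(tp, tn, fp, fn):
    """Derive accuracy, precision, recall, and F1 from the four counts.

    Each ratio falls back to 0.0 when its denominator is zero
    (an illustrative convention, not the only possible one).
    """
    total = tp + tn + fp + fn
    accuracy = (tp + tn) / total if total else 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}


# Made-up, imbalanced toy data: 95 negatives followed by 5 positives.
y_true = [0] * 95 + [1] * 5
# Hypothetical model output: it catches only 1 of the 5 positives.
y_pred = [0] * 95 + [1] + [0] * 4

counts = confusion_counts(y_true, y_pred)
scores = metrics_from_counts(*counts)

print("TP, TN, FP, FN =", counts)
for name, value in scores.items():
    print(f"{name}: {value:.3f}")
```

In this toy case the model is 96% accurate yet finds only one of the five positive examples (recall of 0.2), which is the kind of gap the confusion matrix exposes and accuracy alone hides.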

This article introduces the concept of a confusion matrix, details its key components, and explains how it serves as an important tool for evaluating a classification model, especially under class imbalance. It emphasizes the importance of computing metrics such as accuracy, precision, recall, and F1-score from the confusion matrix. The author also poses questions to test the reader’s understanding of these concepts, encouraging engagement through practical scenarios and thoughtful analysis of model performance evaluation methods.

Read the entire blog for free on Medium.
