Anthropic has launched Cloud Opus 4.6, its most capable model to date, focused on long-context reasoning, agentic coding, and high-value knowledge work. This model is built on Cloud Opus 4.5 and is now available on major cloud providers under claude.ai, Cloud API, and ID. claude-opus-4-6.

Model Focus: Agentic Action, Not a Single Answer

Opus 4.6 is designed for multi-step tasks where models must be planned, executed, and modified over time. According to the Anthropic team, they use it in Cloud Code and report that it focuses more on the hardest parts of a task, handles ambiguous problems with better judgment, and stays productive over long sessions.

The model thinks more deeply and revisits its reasoning before answering. This improves performance on difficult problems but may increase cost and latency on simpler problems. highlights the anthropic one /effort Parameter with 4 levels – low, medium, high (default), and maximum – so developers can clearly trade logic depth against speed and cost per endpoint or use case.

Beyond coding, Opus 4.6 targets practical knowledge-building tasks:

- run financial analysis

- Doing research with retrieval and browsing

- Using and creating documents, spreadsheets, and presentations

Inside Cowork, Anthropic’s autonomous work surface, the model can run multi-step workflows that diffuse these artifacts without constant human prompting.

Long-context capabilities and developer control

Opus 4.6 is the first Opus-class model with a 1M token reference window in beta. For more than 200k tokens in this 1M-reference mode, pricing increases to $10 per 1M input token and $37.50 per 1M output token. The model supports up to 128k output tokens, which is enough for very long reports, code reviews or structured multi-file editing in one response.

To make long running agents manageable, Anthropic ships several platform features around Opus 4.6:

- adaptive thinking: The model can decide when to use extended thinking based on task difficulty and context, rather than always running at maximum reasoning depth.

- effort control: 4 different effort levels (low, medium, high, maximum) expose a clean control surface for latency versus logic quality.

- Context Compaction (Beta): As the configurable context threshold is approached, the platform automatically summarizes and replaces older parts of the conversation, reducing the need for custom truncation logic.

- US estimates only: Workloads that must reside in US regions can run at 1.1× token pricing.

These controls target a common real-world pattern: Agentic workflows that collect hundreds of thousands of tokens as they interact with tools, documents, and code across multiple stages.

Product integration: Cloud Code, Excel and PowerPoint

Anthropic has upgraded its product stack so that Opus 4.6 can drive more realistic workflows for engineers and analysts.

In Cloud Code, a new ‘Agent Team’ mode (research preview) lets users create multiple agents that work in parallel and coordinate autonomously. It is intended for read-heavy tasks like codebase review. Each sub-agent can be taken interactively, including through tmuxWhich fits into terminal-centric engineering workflows.

The cloud in Excel now plans before acting, can ingest unstructured data and infer structure, and apply multi-step transformations in a single pass. When paired with the cloud in PowerPoint, users can go from raw data in Excel to structured, on-brand slide decks. The model reads layout, font, and slide masters so that the generated decks align with existing templates. Cloud in PowerPoint is currently in Research Preview for the Max, Team, and Enterprise plans.

Benchmark Profile: Coding, Search, Long Reference Retrieval

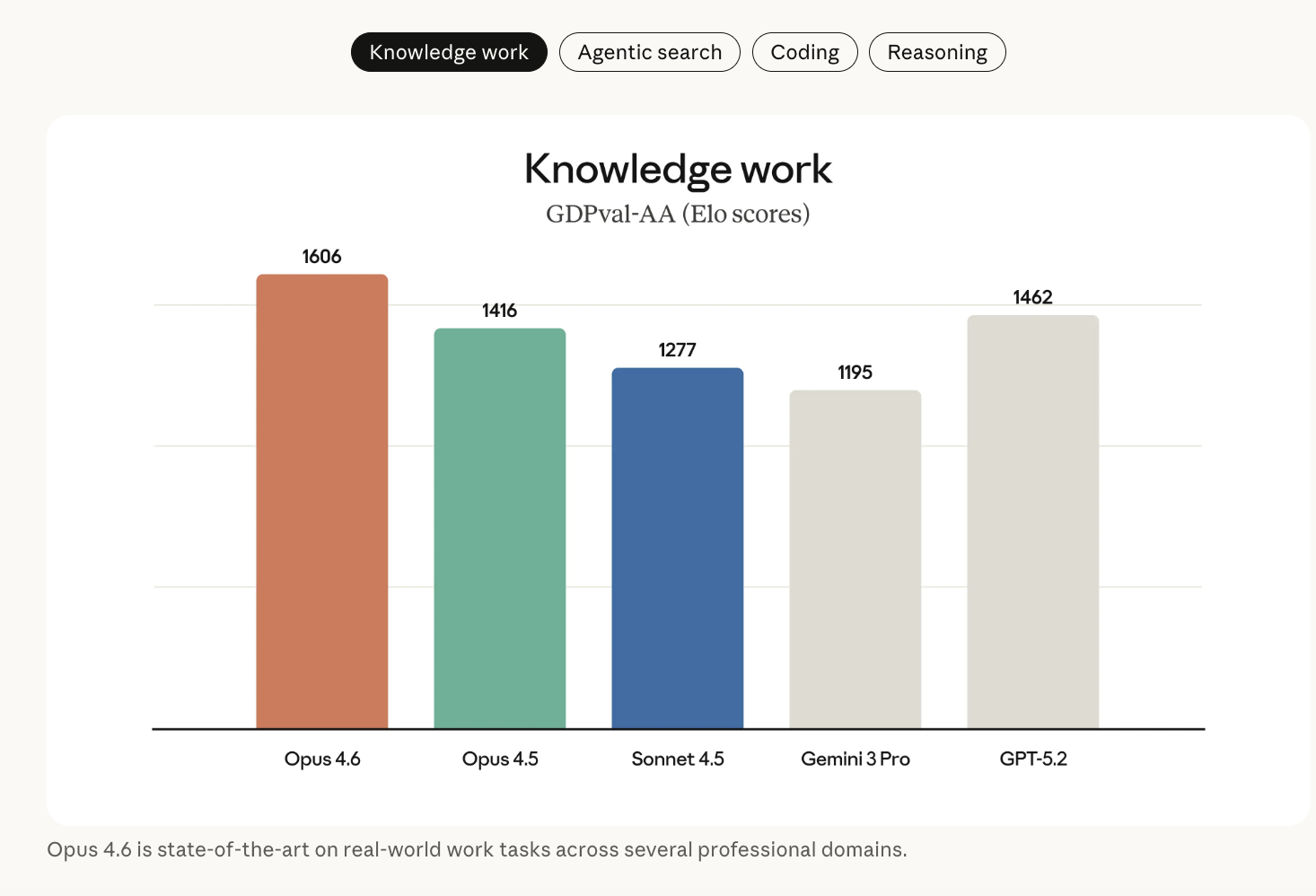

The Anthropic team positions Opus 4.6 as state-of-the-art on several external benchmarks that are important for coding agents, search agents, and professional decision support.

Key results include:

- gdpval-aa (economically valuable knowledge tasks in finance, legal, and related domains): Opus 4.6 outperforms OpenAI’s GPT-5.2 by about 144 Elo points and Cloud Opus 4.5 by 190 points. This means that, in a head-to-head comparison, Opus 4.6 beats GPT-5.2 in this evaluation about 70% of the time.

- Terminal-Bench 2.0: Opus 4.6 achieves the highest reported score on this agentive coding and systems task benchmark.

- final test of humanity: On this multidisciplinary logic test with tools (web search, code execution and others), the Opus 4.6 leads other Frontier models, including GPT-5.2 and Gemini 3 Pro configurations, under documented harnesses.

- browsecomp:Opus 4.6 performs better than any other model on this agentive search benchmark. When the cloud model is combined with a multi-agent harness, the score increases to 86.8%.

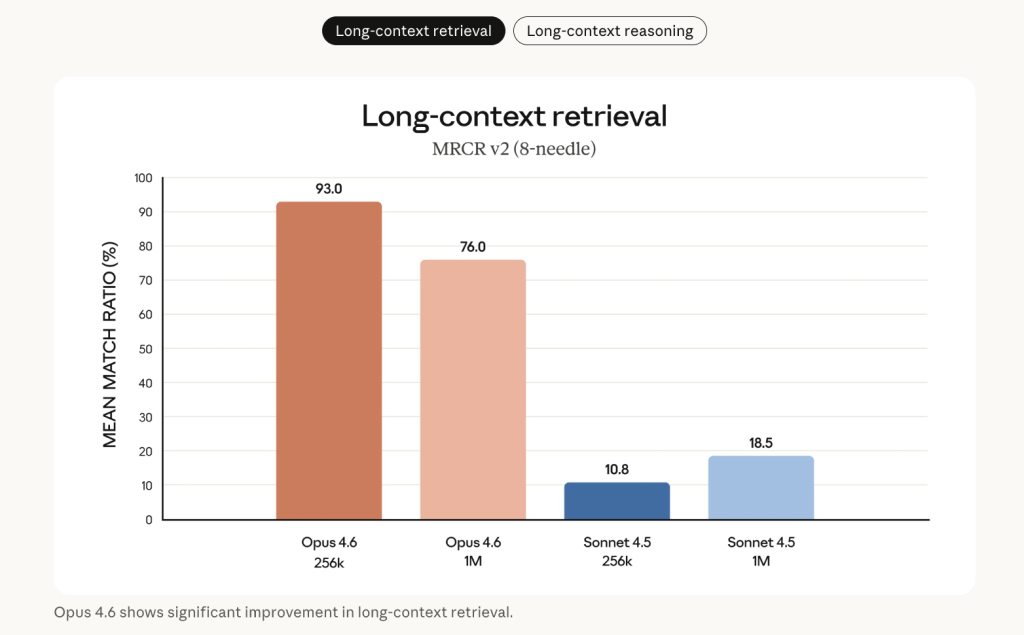

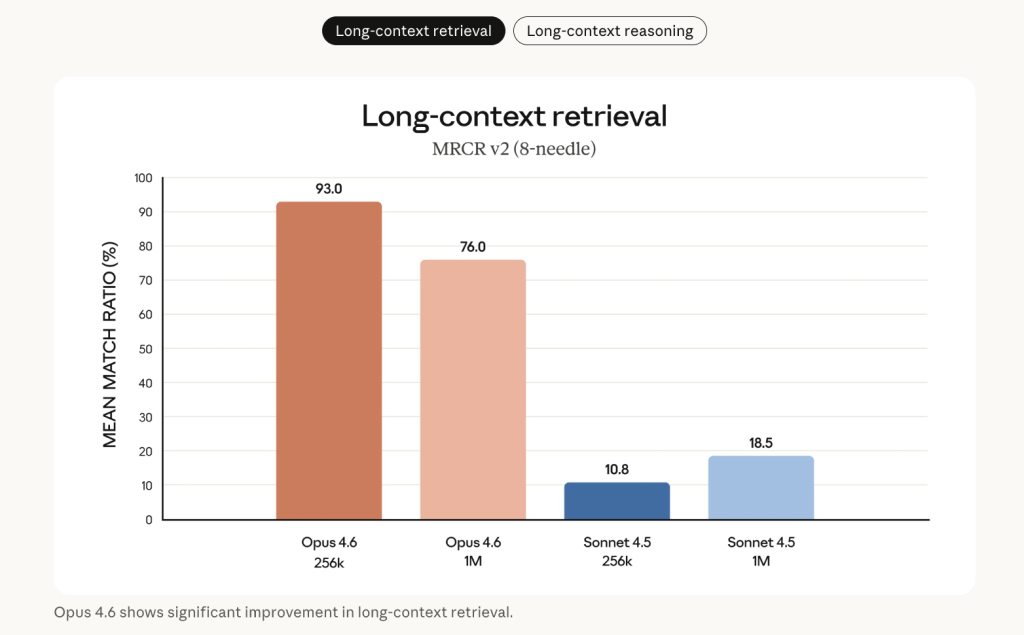

Long-context recovery is a central improvement. On the 8-needle 1M version of MRCR v2 – a ‘needle-in-a-haystack’ benchmark where facts are hidden inside 1M tokens of text – Opus 4.6 scores 76%, compared to 18.5% for Cloud Sonnet 4.5. Anthropic describes this as a qualitative change in how much context a model can actually use without context rot.

Additional performance benefits include:

- Root cause analysis on complex software failures

- multilingual coding

- long term coordination and planning

- cyber security work

- Life Sciences, where Opus 4.6 outperforms Opus 4.5 by approximately 2 times on computational biology, structural biology, organic chemistry, and phylogenetics evaluations

On Vending-Bench 2, a long-term economic performance benchmark, Opus 4.6 earns $3,050.53 more than Opus 4.5 under the reported setup.

key takeaways

- The Opus 4.6 is Anthropic’s highest-end model with a 1M-token reference (beta):Supports up to 1M input tokens and 128k output tokens, with premium pricing above 200k tokens, making it suitable for very long codebases, documents, and multi-step agentive workflows.

- Explicit control for depth and cost of reasoning through effort and adaptive thinking:Developers can tune

/effort(low, medium, high, maximum) and let ‘adaptive thinking’ decide when extended logic is needed, highlighting clear latency vs accuracy vs cost trade-offs for different routes and functions. - Strong benchmark performance on coding, search, and economic value tasks: Opus 4.6 leads on GDPval-AA, Terminal-Bench 2.0, Humanities Last Exam, BrowseComp, and MRCR v2 1M, with large gains over Cloud Opus 4.5 and GPT-class baselines in long context retrieval and tool-enhanced reasoning.

- Tight integration with Cloud Code, Excel and PowerPoint for real workloads: Agent teams in cloud code, structured Excel transformations, and template-aware PowerPoint generation position Opus 4.6 as the backbone not just for chat, but for practical engineering and analyst workflows.

check it out technical details And documentation. Also, feel free to follow us Twitter And don’t forget to join us 100k+ ml subreddit and subscribe our newsletter. wait! Are you on Telegram? Now you can also connect with us on Telegram.

Max is an AI analyst at Silicon Valley-based MarkTechPost, actively shaping the future of technology. He teaches robotics at Brainvine, fights spam with ComplyMail, and leverages AI daily to translate complex technological advancements into clear, understandable insights.