Author(s): telekinesis ai

Originally published on Towards AI.

Vision Language Action (VLA) models are the hottest topic in physical AI right now.

If you work in robotics or computer vision, your feed is probably full of massive funding rounds for companies building the “ChatGPT of robotics”, top research conferences publishing a large body of work on new models, and, best of all, laundry-folding robot demos.

The promise is absolutely magical: a single end-to-end model that autonomously learns and masters any task, giving robots true general-purpose intelligence.

The harsh reality of manufacturing

Our team visits factories regularly. These sites are often in remote areas with few signs of life around them. If you haven’t seen one, it’s easy to imagine an automotive OEM production line, with conveyor belts stretching endlessly and robots working in perfect sync.

But the reality is that fewer than 10% of factories look like this. Most, especially those run by small and medium-sized enterprises (SMEs), resemble large warehouses filled with individual workstations, oily floors and the damp, metallic smell of machine oil.

The last mile of physical AI: the huge untapped market of industrial SMEs

Small and medium-sized enterprises (SMEs) form the backbone of the global $14.8 trillion manufacturing market. These companies are currently struggling to hire workers because the work is repetitive and mind-numbing.

So the main question is: Why aren’t robots already deployed in SMEs?

The answer lies in a fundamental difference in production logic. Large OEMs operate in high-volume environments, producing the same part hundreds of thousands or even millions of times. SMEs, on the other hand, operate under High-Mix Low-Volume (HMLV) production. A single factory may produce 50 to 100 different products, each varying in geometry, size, and handling requirements. Orders arrive weekly and specify which products have to be delivered, so the product being made can change every day.

What differentiates this environment from academic benchmarks or lab demos is the zero-error requirement. Over thousands of cycles, even a 98% success rate is inadequate. If a robot misjudges a grip and drops a part into the spindle of a CNC machine, it doesn’t just “fail the job”: it breaks $200,000 of equipment and halts production for several days.
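To make the 98% figure concrete, here is a minimal back-of-the-envelope sketch. It assumes each cycle succeeds or fails independently, and the figure of 500 cycles per shift is a hypothetical number chosen only for illustration, not a measurement from any factory.

```python
def expected_failures(success_rate: float, cycles: int) -> float:
    """Expected number of failed cycles, assuming independent cycles."""
    return (1.0 - success_rate) * cycles


def prob_at_least_one_failure(success_rate: float, cycles: int) -> float:
    """Probability that at least one cycle fails, assuming independent cycles."""
    return 1.0 - success_rate ** cycles


# Hypothetical workload: 500 pick-and-place cycles in one shift.
CYCLES_PER_SHIFT = 500

failures = expected_failures(0.98, CYCLES_PER_SHIFT)
risk = prob_at_least_one_failure(0.98, CYCLES_PER_SHIFT)

print(f"Expected failed cycles per shift: {failures:.1f}")
print(f"Chance of at least one failure:   {risk:.4%}")
```

Under these assumptions, a 98% success rate means roughly ten failed cycles per shift, and at least one failure is all but guaranteed. If any single one of those failures can damage a machine, the success rate that looks impressive in a demo is nowhere near enough on the shop floor.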

The challenge of physical AI in manufacturing is therefore to deliver high precision and high generalization at the same time.

So how can we actually bring physical AI into manufacturing?

Moving beyond VLAs: The rise of agentic skills in physical AI

A dichotomy is emerging in the field of robotics: classical robotics is deterministic but brittle, while VLA models are generalizable but probabilistic.

What if we could combine the best of both worlds? The answer is right before our eyes: the history of the LLM.

If we study the evolution of LLMs from chatbots to AI agents, we quickly learn that the true value of LLMs emerges when we introduce tools. In AI agents, the LLM is responsible for orchestrating the tools. The more powerful the modular toolset, the better the agent.

We can achieve the same in robotics with agentic skills. We first need to build a large toolset, called the Skills Library, which includes robust methods for perception and robotics. An LLM/VLM, called an agent, can then be used to orchestrate the skills. In this architecture, the agent selects the right skill for the job from a robust skill library:

- Perception Skills: 6D pose estimation, point-cloud segmentation, and anomaly detection.

- Manipulation Skills: Force-sensitive insertion, compliant grasping, and high-speed trajectory following.

- VLA as a skill: Even a general-purpose VLA can be a “tool” used for creative or non-standard tasks such as “clearing the workspace of debris”.
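The architecture above can be sketched in a few lines of Python. This is a minimal illustration and not the Telekinesis API: every name here (`Skill`, `SKILL_LIBRARY`, `register`, `select_skill`, and the three example skills) is hypothetical, the skill bodies are placeholders, and the keyword matcher is only a stand-in for the LLM/VLM that would make the choice in a real system.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass(frozen=True)
class Skill:
    """One entry in the (hypothetical) skill library."""
    name: str
    category: str  # "perception", "manipulation", or "vla"
    description: str
    run: Callable[[dict], dict]  # takes a context dict, returns an updated one


SKILL_LIBRARY: Dict[str, Skill] = {}


def register(skill: Skill) -> None:
    """Add a skill to the shared library under its name."""
    SKILL_LIBRARY[skill.name] = skill


# Placeholder skills: real ones would wrap perception and control algorithms.
register(Skill("estimate_pose_6d", "perception",
               "Estimate the 6D pose of a known part",
               lambda ctx: {**ctx, "pose": "placeholder"}))
register(Skill("compliant_grasp", "manipulation",
               "Grasp a part with compliance control",
               lambda ctx: {**ctx, "grasped": True}))
register(Skill("vla_policy", "vla",
               "General-purpose fallback for non-standard tasks",
               lambda ctx: {**ctx, "vla_used": True}))


def select_skill(task: str) -> Skill:
    """Stand-in for the agent: a real system would ask an LLM/VLM to choose."""
    keywords = {"locate": "estimate_pose_6d", "pick": "compliant_grasp"}
    for word, skill_name in keywords.items():
        if word in task.lower():
            return SKILL_LIBRARY[skill_name]
    # Nothing deterministic matched, so fall back to the VLA "tool".
    return SKILL_LIBRARY["vla_policy"]


for task in ["Locate the bracket", "Pick the bracket",
             "Clear the workspace of debris"]:
    print(f"{task!r} -> {select_skill(task).name}")
```

The point of the sketch is the division of labour: the selector only decides which skill to call, while each skill stays a self-contained, testable unit that can be improved or replaced without touching the agent.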

By modularizing the physical layer, we address the zero-error requirement that end-to-end models struggle with:

- High-precision execution: the agent decides what to do, but a classical “skill” (for example, a peg-in-hole insertion algorithm) ensures that it is done with sub-millimetre accuracy.

- Explainability: If a robot fails, the system can report exactly which “skill” failed and why, rather than leaving you with a “black box” that stopped working.

- Rapid changeover: To handle a new product, you don’t need to retrain the entire model. You simply update the skill sequence or provide the agent with a prompt describing the part’s geometry.
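The explainability and changeover points can be illustrated with a small sequence runner. This is a sketch under stated assumptions: `SkillError`, `StepResult`, `run_sequence`, the individual skills, and the 15 N force limit are all hypothetical and exist only to show the idea, not to describe any real library.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple


class SkillError(Exception):
    """Raised by a skill when it cannot complete safely."""


@dataclass
class StepResult:
    skill: str
    ok: bool
    reason: Optional[str] = None


def run_sequence(steps: List[Tuple[str, Callable[[], None]]]) -> List[StepResult]:
    """Run skills in order and stop at the first failure, recording why."""
    results: List[StepResult] = []
    for name, skill in steps:
        try:
            skill()
            results.append(StepResult(name, True))
        except SkillError as err:
            results.append(StepResult(name, False, str(err)))
            break  # later skills are not attempted after a failure
    return results


# Hypothetical skills; real ones would drive sensors and the robot arm.
def locate_part() -> None: pass
def grasp_part() -> None: pass
def release_part() -> None: pass
def insert_peg() -> None:
    raise SkillError("insertion force exceeded the 15 N limit")


# Changeover is a data change: each product is just a different skill list.
PRODUCT_A = [("locate_part", locate_part), ("grasp_part", grasp_part),
             ("insert_peg", insert_peg), ("release_part", release_part)]
PRODUCT_B = [("locate_part", locate_part), ("grasp_part", grasp_part),
             ("release_part", release_part)]

for result in run_sequence(PRODUCT_A):
    status = "ok" if result.ok else f"FAILED: {result.reason}"
    print(f"{result.skill}: {status}")
```

Here the run for product A stops at `insert_peg` and the report names both the skill and the reason, which is the kind of diagnosis an end-to-end model cannot give. Switching to product B means passing a different list, with no retraining involved.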

Telekinesis Agentic Skill Library

Telekinesis documentation: docs.telekinesis.ai

The Telekinesis Agentic Skill Library is a concrete example of how this agentic architecture can be implemented in practice. It is a Python library designed to help teams build physical AI systems by combining robust robotics algorithms with LLM/VLM-based logic.

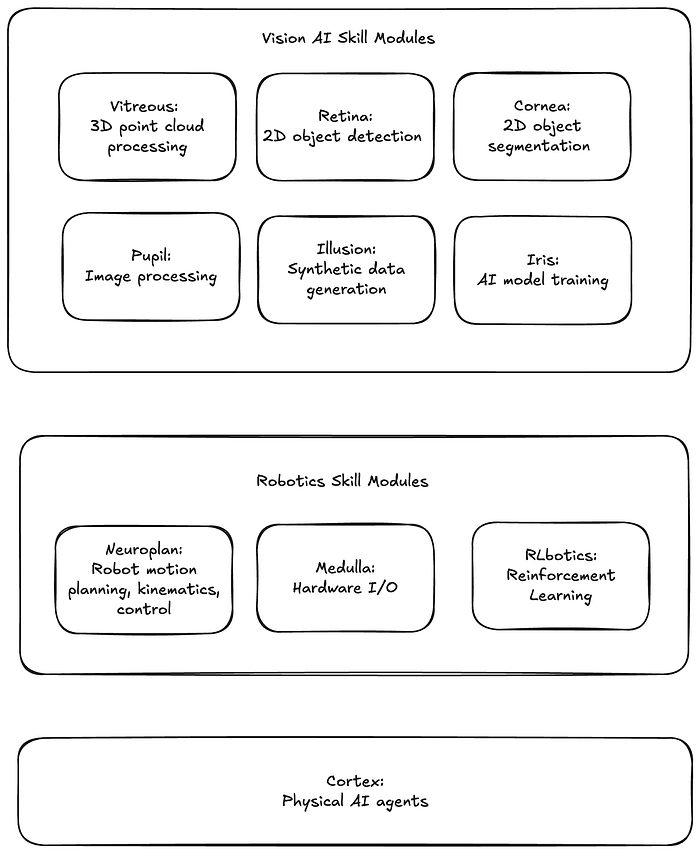

At its core, it offers two layers.

- Skills: A comprehensive set of algorithms for perception, motion planning, and control.

- Physical AI Agents: LLM/VLM agents for task planning on industrial, mobile, and humanoid robots.

The library is for robotics, computer vision and research teams.

Skills are organized into skill modules. The modules cover areas ranging from 3D perception and 2D perception to robotics and physical AI agents. Skills are hosted in the cloud and can be accessed easily from Python. The library is also designed so that developers can contribute new skills to this shared library.

Contribute a skill to the telekinesis community

Our larger vision is to create a vibrant community of contributors that helps grow the physical AI skills ecosystem.

We want you to join us. Maybe you’re a researcher who just published a paper and created some code you’re proud of. Maybe you’re a hobbyist tinkering with robots in your garage. Maybe you’re an engineer dealing with tough automation challenges every day. Whatever your background, if you have a skill, whether it’s a perception module, a motion planner, or a clever robot controller, we want to see it.

The idea is simple: release your skill, let others use it and improve it, and see it deployed in real-world systems. Your work could move from the laboratory or workshop to factories, helping robots perform tasks that were previously too dangerous, repetitive or precise for humans.

If you are curious and want to explore:
