Author(s): Neel Shah

Originally published on Towards AI.

As an AI engineer, designing systems that interact with large language models (LLMs) like Google’s Gemini is a daily challenge. LLM API calls are inherently I/O-bound (most of the time is spent waiting for responses from remote servers), but they can also involve CPU-intensive post-processing, such as parsing output or chaining responses. Understanding “concurrent” and “parallel” execution is essential for optimizing these interactions for speed, scalability, and efficiency. In this post, we will compare concurrent and parallel execution, explore their hybrid form, and connect both to LLM API calls using Gemini as our example.

We will also discuss which approach is suitable for specific scenarios, including multi-agent setups, and compare strategies such as simple API calls, AI workflows, and search/reasoning agents. Finally, we’ll talk about scaling to thousands of users and provide a practical hybrid example.

Understanding Concurrency, Parallelism and Their Hybrids

Concurrency: Managing Multiple Tasks with Interleaving

Concurrency allows your system to handle multiple tasks by switching between them, often on the same core. This is perfect for I/O waits like LLM API responses, where the CPU can handle other tasks during downtime.

Analogy: A lone barista taking orders, making coffee, and serving – switching rapidly.

In AI: Use it to batch Gemini prompts without blocking the main thread.
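To make the interleaving concrete, here is a minimal sketch that uses `asyncio.sleep` in place of a real network call. The function `fake_llm_call` and its latency value are hypothetical stand-ins, not part of the Gemini SDK; the point is only that several waits overlap on a single thread.

```python
import asyncio
import time


async def fake_llm_call(prompt: str, latency: float = 0.2) -> str:
    """Hypothetical stand-in for a remote LLM call; sleeps instead of using the network."""
    await asyncio.sleep(latency)  # while this task waits, the event loop runs the others
    return f"response to: {prompt}"


async def run_concurrently(prompts: list[str]) -> list[str]:
    # gather schedules every coroutine on one thread and returns results in input order
    return await asyncio.gather(*(fake_llm_call(p) for p in prompts))


def timed_run(prompts: list[str]) -> tuple[list[str], float]:
    start = time.perf_counter()
    results = asyncio.run(run_concurrently(prompts))
    return results, time.perf_counter() - start


if __name__ == "__main__":
    results, elapsed = timed_run(["a", "b", "c"])
    print(results, f"{elapsed:.2f}s")
```

Three calls that each wait 0.2 seconds finish in roughly the time of one, because the waits overlap rather than add up.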

Parallelism: True Simultaneous Execution

Parallelism takes advantage of multiple cores or processes to run tasks at the same time. This is ideal for CPU-bound work, such as analyzing Gemini output in parallel.

Analogy: Multiple baristas each handling one customer simultaneously.

In AI: If Gemini responses require heavy computation (for example, sentiment analysis on large texts), process them in parallel across multiple cores.
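As a rough illustration, the sketch below spreads a toy CPU-bound task across worker processes with `ProcessPoolExecutor` from the standard library. The `count_vowels` function is a hypothetical placeholder for heavier analysis, and the worker count is an arbitrary assumption.

```python
from concurrent.futures import ProcessPoolExecutor


def count_vowels(text: str) -> int:
    """Toy CPU-bound task standing in for heavier analysis of a model response."""
    return sum(1 for ch in text.lower() if ch in "aeiou")


def analyze_in_parallel(texts: list[str], workers: int = 2) -> list[int]:
    # Each worker is a separate process, so tasks can run on different cores
    with ProcessPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(count_vowels, texts))


if __name__ == "__main__":
    # The guard matters: with the "spawn" start method, workers re-import this module
    print(analyze_in_parallel(["Hello world", "Gemini output", "AI"]))
```

For a task this small, process start-up costs outweigh the gain; parallelism pays off only when each item takes real CPU time.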

Parallel Concurrent Hybrid: Combining Strengths

The hybrid blends interleaving (concurrency) with simultaneous execution (parallelism): fetch LLM responses concurrently (async I/O), then parallelize the CPU-heavy processing.

Analogy: Several baristas work in parallel, each switching between tasks.

In AI: Essential for complex pipelines where API calls are concurrent, but downstream tasks (for example, data aggregation) benefit from parallelism.

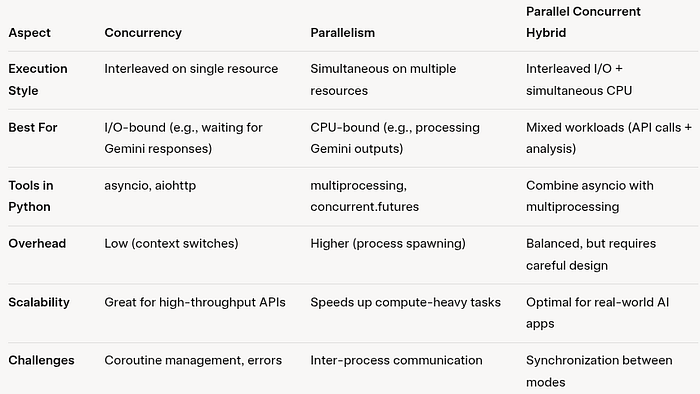

Main Differences and When to Use Each

- Best for concurrency: High-volume LLM calls where network latency dominates. For example, generating personalized responses for many users without CPU bottlenecks.

- Best for parallelism: Cases where the LLM output requires intensive processing, such as running simulations or ML inference on the responses.

- Best for hybrid: End-to-end AI pipelines, such as querying Gemini concurrently and then performing parallel evaluation or chaining.

The accompanying image from the ByteByteGo article gives a deeper look at how the two differ.

Linking to LLM API Calls: Why It Matters for Gemini

LLM APIs like Gemini (via Google’s Generative AI SDK) involve sending prompts and waiting for generated content. These calls can take a few seconds each, and with rate limits (for example, queries per minute), inefficient execution leads to bottlenecks. Concurrency cuts total waiting time by overlapping requests; parallelism speeds up post-call work. At large scale (thousands of users), poor design leads to timeouts, high costs, or denial of service. Gemini’s SDK supports both sync and async calls, making it ideal for demos.

Let’s assume you’ve installed the SDK with pip install google-generativeai and configured it with genai.configure(api_key="YOUR_API_KEY").
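Since slow calls and timeouts come up repeatedly, here is one way to bound how long a single request may take, sketched with `asyncio.wait_for`. The `slow_llm_call` coroutine is a hypothetical stand-in for a real request, and returning `None` on timeout is just one possible policy.

```python
import asyncio
from typing import Optional


async def slow_llm_call(prompt: str, latency: float) -> str:
    """Hypothetical stand-in for an LLM request that takes `latency` seconds."""
    await asyncio.sleep(latency)
    return f"response to: {prompt}"


async def call_with_timeout(prompt: str, latency: float, timeout: float) -> Optional[str]:
    try:
        # wait_for cancels the inner call if it runs longer than `timeout`
        return await asyncio.wait_for(slow_llm_call(prompt, latency), timeout=timeout)
    except asyncio.TimeoutError:
        return None  # the caller decides whether to retry, fall back, or report an error
```

A call that finishes within the limit returns its text as usual; one that exceeds it is cancelled, so a single stuck request cannot hold up the rest of the batch.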

Scenario 1: Sequential Execution (Baseline)

Call Gemini one prompt at a time – simple but slow.

    import time

    import google.generativeai as genai

    genai.configure(api_key="API_KEY")  # replace with your own key

    def generate_text(prompt):
        # Blocking call: returns only after Gemini has responded
        model = genai.GenerativeModel('gemini-1.5-flash')
        response = model.generate_content(prompt)
        return response.text

    start_time = time.time()
    prompts = [
        "Explain AI in 50 words",
        "Summarize quantum computing",
        "Write a haiku about robots",
        "Describe neural networks",
        "What is reinforcement learning?",
    ]
    # A list comprehension (not a generator) so every call runs before the timer stops
    results = [generate_text(prompt) for prompt in prompts]
    print(results)
    print(f"Sequential took {time.time() - start_time:.2f} seconds")

- Time: Total time taken to complete the entire process: 15.10 seconds.

- When to use: Prototyping, or when strict ordering is required.

Scenario 2: Concurrent Execution (Async)

Use Gemini’s async support to interleave calls.

    import asyncio
    import time

    import google.generativeai as genai

    genai.configure(api_key="YOUR_API_KEY_HERE")

    async def generate_text_async(prompt):
        # Non-blocking call: the event loop can start other requests while this one waits
        model = genai.GenerativeModel('gemini-1.5-flash')
        response = await model.generate_content_async(prompt)
        return response.text

    async def main():
        prompts = [
            "Explain AI in 50 words",
            "Summarize quantum computing",
            "Write a haiku about robots",
            "Describe neural networks",
            "What is reinforcement learning?",
        ]
        tasks = [generate_text_async(prompt) for prompt in prompts]
        # gather runs all requests concurrently and returns results in prompt order
        return await asyncio.gather(*tasks)

    if __name__ == "__main__":
        start_time = time.time()
        results = asyncio.run(main())
        print(results)
        print(f"Concurrent took {time.time() - start_time:.2f} seconds")

- Time: Total time taken to complete the entire process: 7.38 seconds.

- When to use: Handling user queries in web apps.
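In a web app, one failed request should not discard the rest of the batch. The sketch below shows one option, `asyncio.gather(..., return_exceptions=True)`, using a hypothetical `flaky_llm_call` stub instead of the real SDK; how errors are reported back to users is an assumption made for illustration.

```python
import asyncio


async def flaky_llm_call(prompt: str) -> str:
    """Hypothetical stand-in for an LLM call; fails for empty prompts."""
    await asyncio.sleep(0.01)
    if not prompt:
        raise ValueError("empty prompt")
    return prompt.upper()


async def gather_with_errors(prompts: list[str]) -> list[str]:
    # return_exceptions=True places exceptions in the result list instead of raising
    outcomes = await asyncio.gather(
        *(flaky_llm_call(p) for p in prompts), return_exceptions=True
    )
    return [
        f"error: {outcome}" if isinstance(outcome, Exception) else outcome
        for outcome in outcomes
    ]
```

Results stay aligned with the input prompts, so each user can receive either a response or an error message of their own.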

Scenario 3: Parallel Execution (Multiprocessing)

Spawn processes for simultaneous calls (useful when mixed with CPU work).

    import time
    from multiprocessing import Pool

    import google.generativeai as genai

    genai.configure(api_key="API_KEY")

    def generate_text(prompt):
        model = genai.GenerativeModel('gemini-1.5-flash')
        response = model.generate_content(prompt)
        return response.text

    # The guard stops worker processes from re-running this block when they import the module
    if __name__ == "__main__":
        start_time = time.time()
        prompts = [
            "Explain AI in 50 words",
            "Summarize quantum computing",
            "Write a haiku about robots",
            "Describe neural networks",
            "What is reinforcement learning?",
        ]
        # One process per prompt; each makes its own blocking call
        with Pool(processes=5) as pool:
            results = pool.map(generate_text, prompts)
        print(results)
        print(f"Parallel took {time.time() - start_time:.2f} seconds")

- Time: Total time taken to complete the entire process: 7.68 seconds.

- When to use: API calls + heavy local processing.

Scenario 4: Parallel Concurrent Hybrid

Receive Gemini responses concurrently (async I/O), then parallelize CPU-bound analysis (e.g., word count on output).

    import asyncio
    import time
    from multiprocessing import Pool

    import google.generativeai as genai

    genai.configure(api_key="YOUR_API_KEY_HERE")

    # Async Gemini text generation
    async def generate_text_async(prompt):
        model = genai.GenerativeModel('gemini-1.5-flash')
        response = await model.generate_content_async(prompt)
        return response.text

    # CPU-bound analysis (runs in multiprocessing pool)
    def analyze_text(text):
        # Example: word count (replace with complex CPU logic if needed)
        return len(text.split())

    async def main():
        prompts = [
            "Explain AI in 50 words",
            "Summarize quantum computing",
            "Write a haiku about robots",
            "Describe neural networks",
            "What is reinforcement learning?",
        ]
        # Run all Gemini requests concurrently (I/O-bound)
        texts = await asyncio.gather(*(generate_text_async(p) for p in prompts))
        # Use multiprocessing for CPU-bound analysis
        with Pool(processes=3) as pool:
            results = pool.map(analyze_text, texts)
        return results

    if __name__ == "__main__":
        start_time = time.time()
        results = asyncio.run(main())
        print(results)  # Example: [50, 20, 7, 30, 25]
        print(f"Hybrid took {time.time() - start_time:.2f} seconds")

- Time: Total time taken to complete the entire process: 7.61 seconds.

- The parallel phase has limited impact in this hybrid scenario because the analyze_text task is so light.

Why hybrid? Async I/O handles the waiting efficiently; parallelism speeds up the analysis.
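One variation worth considering hands each response to an executor as soon as it arrives, rather than waiting for the whole batch, by using `loop.run_in_executor`. The sketch below assumes a hypothetical `fetch_text` stub in place of the Gemini call and takes the executor as a parameter so it can be swapped; it is an illustration, not a measured improvement.

```python
import asyncio
from concurrent.futures import Executor, ProcessPoolExecutor


async def fetch_text(prompt: str) -> str:
    """Hypothetical stand-in for generate_text_async; no network call is made."""
    await asyncio.sleep(0.05)
    return f"some generated words about {prompt}"


def analyze_text(text: str) -> int:
    # Same toy analysis as above: a word count
    return len(text.split())


async def fetch_and_analyze(prompt: str, executor: Executor) -> int:
    text = await fetch_text(prompt)  # I/O phase: overlaps with other prompts
    loop = asyncio.get_running_loop()
    # CPU phase: runs in the executor while the loop keeps serving other prompts
    return await loop.run_in_executor(executor, analyze_text, text)


async def run_pipeline(prompts: list[str], executor: Executor) -> list[int]:
    return await asyncio.gather(*(fetch_and_analyze(p, executor) for p in prompts))


if __name__ == "__main__":
    with ProcessPoolExecutor(max_workers=2) as pool:
        print(asyncio.run(run_pipeline(["robots", "neural networks"], pool)))
```

Because the analysis is awaited through the executor, the event loop is never blocked by CPU work, which matters once the analysis is heavier than a word count.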

Note: The execution times reported above may vary depending on factors such as the user’s location, underlying hardware, network conditions, and other parameters. However, the relative ordering of the approaches should remain consistent.

Agent Scenarios: Mapping the Execution Model

In AI systems, “agents” are LLM-driven entities (for example, using Gemini) that reason, act, or collaborate. Here’s how concurrency/parallelism fits in:

- Multiple agents working simultaneously on different data: Parallel is best. Each agent processes independent data simultaneously (for example, agents analyzing different user queries). Use multiprocessing for isolation.

- Multiple agents working together on a single piece of data: Concurrent or hybrid. Agents collaborate by interleaving (for example, one generates an idea, another critiques it). Use async for coordination without blocking.

- Single agent acting on a single piece of data: Sequential or concurrent. Simple, with no need for parallelism; concurrency helps only if the task has I/O sub-steps.

- Single agent acting on multiple data: Concurrent. The agent processes data in batches asynchronously, such as a Gemini agent summarizing multiple documents.
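The collaborating-agents case can be sketched with two stub coroutines. Both `generator_agent` and `critic_agent` are hypothetical placeholders that would call an LLM in a real system; the sketch only shows where ordering is required and where interleaving is possible.

```python
import asyncio


async def generator_agent(topic: str) -> str:
    """Hypothetical agent stub; a real one would call an LLM."""
    await asyncio.sleep(0.01)
    return f"draft on {topic}"


async def critic_agent(draft: str) -> str:
    """Hypothetical second agent that reviews the first agent's output."""
    await asyncio.sleep(0.01)
    return f"{draft} [reviewed]"


async def collaborate(topic: str) -> str:
    # Agents sharing one piece of data take turns: the critic needs the draft first
    draft = await generator_agent(topic)
    return await critic_agent(draft)


async def handle_many(topics: list[str]) -> list[str]:
    # Independent topics do not depend on each other, so their pipelines interleave
    return await asyncio.gather(*(collaborate(t) for t in topics))
```

Within one topic the steps stay sequential, while across topics the waits overlap, which is the concurrent pattern described for agents acting on multiple data.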

Approaches: AI Workflows vs Simple API Calls vs AI Agents with Search/Reasoning

- Simple API call: A direct Gemini generate_content call. Best for one-off tasks (e.g., a chat completion). Use concurrency for batching. Scalable, but limited to simple tasks.

- AI workflow: A multi-step pipeline (for example, prompt chaining with Gemini). Hybrid execution: concurrent for calls, parallel for branches. Ideal for orchestration (for example, via LangChain).

- AI agent with search/reasoning: Autonomous agents (e.g., Gemini + ReAct patterns with tools). Use concurrency for real-time reasoning/search, and parallelism if there are multiple sub-agents. Best for dynamic tasks like research.

Choose based on needs: simple for speed, workflow for structure, agents for autonomy.
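For the workflow style, a minimal sketch of prompt chaining with concurrent branches might look like the following. The `call_llm` stub is hypothetical and simply wraps the prompt in angle brackets so the data flow is visible; a real pipeline would call Gemini or an orchestration library at each step.

```python
import asyncio


async def call_llm(prompt: str) -> str:
    """Hypothetical stub for a model call; echoes the prompt in angle brackets."""
    await asyncio.sleep(0.01)
    return f"<{prompt}>"


async def workflow(topic: str) -> str:
    # Step 1: a single call whose output feeds the next steps (chaining)
    outline = await call_llm(f"outline {topic}")
    # Step 2: two independent branches run concurrently
    summary, critique = await asyncio.gather(
        call_llm(f"summarize {outline}"),
        call_llm(f"critique {outline}"),
    )
    # Step 3: merge the branch outputs locally
    return f"{summary} | {critique}"
```

Only steps that depend on earlier output are awaited in sequence; independent branches are gathered so their latencies overlap.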

Scaling Up to Thousands of Users

At scale, LLM cost and rate limits (Gemini: ~60 QPM for free tier) dominate.

- Concurrent: Handles bursts efficiently, queuing requests without overwhelming the API. Use rate limiters (for example, async semaphores).

- Parallel: Risks hitting rate limits quickly; throttle with the pool size. Good for offline batch processing.

- Hybrid: Optimal. Concurrency minimizes API latency, and parallelism maximizes local compute throughput. Monitor with tools like Prometheus; deploy via the cloud (for example, Google Cloud Run).

- Tips: Cache responses, use cheaper models for non-critical tasks, and implement retries. For thousands of users, expect hybrid to reduce response times by 5-10x compared to sequential.
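The semaphore and retry ideas above can be combined in a short sketch. `RateLimitError` is a hypothetical exception standing in for whatever error your client raises when throttled, and the limits and delays are arbitrary assumptions.

```python
import asyncio


class RateLimitError(Exception):
    """Hypothetical error type standing in for an API's rate-limit response."""


async def call_with_retry(call, retries: int = 3, base_delay: float = 0.01):
    # Retry with exponential backoff: base_delay, then 2x, then 4x, ...
    for attempt in range(retries):
        try:
            return await call()
        except RateLimitError:
            if attempt == retries - 1:
                raise  # out of attempts: surface the error to the caller
            await asyncio.sleep(base_delay * 2 ** attempt)


async def run_limited(calls, max_in_flight: int = 2):
    semaphore = asyncio.Semaphore(max_in_flight)

    async def guarded(call):
        async with semaphore:  # at most max_in_flight calls run at once
            return await call_with_retry(call)

    return await asyncio.gather(*(guarded(c) for c in calls))
```

Note that a semaphore caps the number of requests in flight, not the number per minute; enforcing a strict QPM quota would need a time-based limiter on top of this.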

Conclusion

From an AI engineer’s perspective, mastering concurrent, parallel, and hybrid execution transforms LLM apps from sluggish to scalable. With Gemini, start with concurrency for most API-heavy work, layer in parallelism for compute, and go hybrid for production. Choose wisely, profile, and scale. What is your approach? Share below!

Citation

- https://bytebytego.com/guides/concurrency-is-not-parallelism/

- https://medium.com/@itIsMadhavan/concurrency-vs-parallelism-a-brief-review-b337c8dac350
