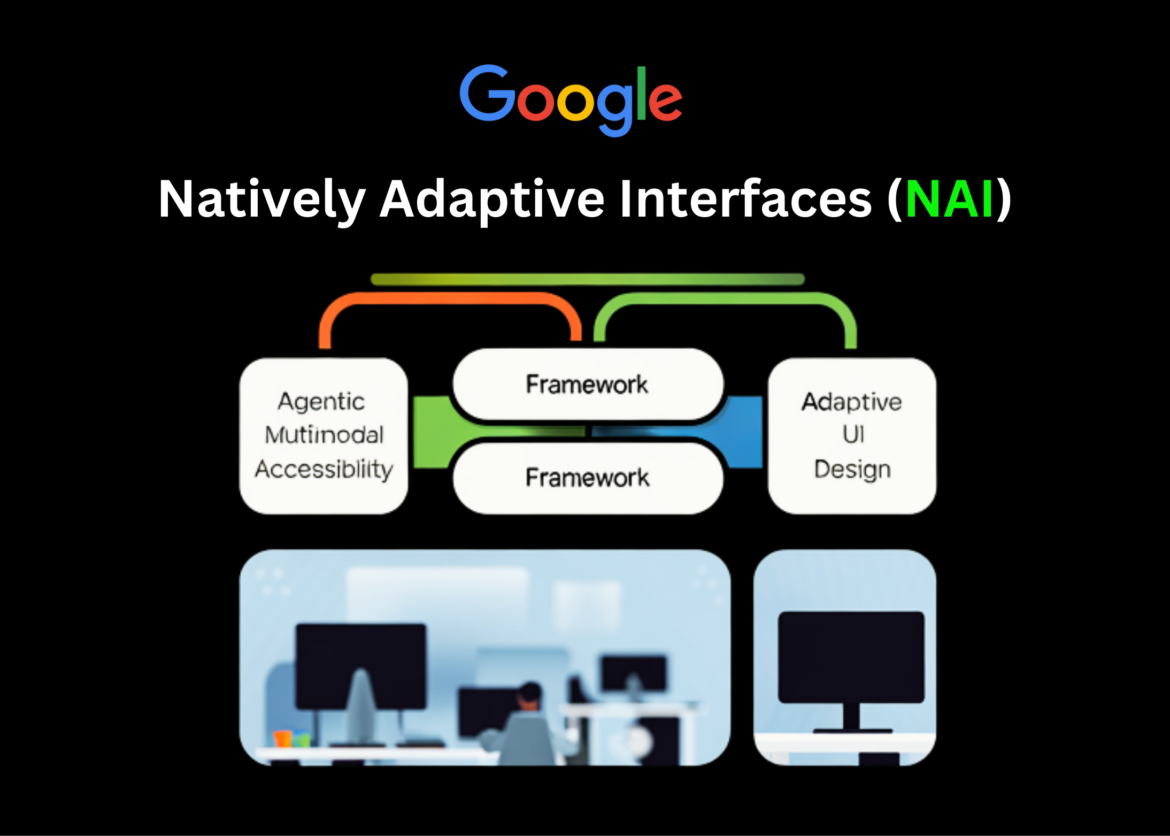

Google Research is proposing a new way of creating accessible software with Natively Adaptive Interfaces (NAI), an agentic framework where a multimodal AI agent becomes the primary user interface and adapts the application in real time to each user’s capabilities and context.

Instead of shipping a fixed UI and adding accessibility as a separate layer, NAI pushes accessibility into the core architecture. The agent observes, reasons, and then modifies the interface itself, moving from one-size-fits-all design to context-informed decisions.

What do Natively Adaptive Interfaces (NAI) change in the stack?

NAI starts from a simple premise: if an interface is mediated by a multimodal agent, accessibility can be controlled by that agent rather than static menus and settings.

Key features include:

- The multimodal AI agent is the primary UI surface. It can see text, images, and layouts, listen to speech, and output text, speech, or other modalities.

- Accessibility is integrated into this agent from the beginning, not bolted on later. The agent is responsible for customizing navigation, content density, and presentation style for each user.

- The design process is explicitly user-centered. People with disabilities are treated as edge users who define the requirements for everyone, not as an afterthought.

This framework targets what the Google team calls the ‘accessibility gap’: the lag between adding new product features and making them usable for people with disabilities. Embedding agents into the interface is meant to close this gap by letting the system adapt without waiting for custom add-ons.

Agent architecture: orchestrator and specialized tools

Under NAI, the UI is supported by a multi-agent system. The main pattern is:

- An orchestrator agent maintains shared context about the user, task, and app state.

- Specialized sub-agents implement focused capabilities, such as summarization or settings customization.

- A set of configuration patterns defines how to detect user intent, add relevant context, adjust settings, and correct flawed queries.

For example, in the NAI case study on accessible video, the Google team outlines core agent capabilities, such as:

- Understand user intent.

- Refine queries and manage context across turns.

- Engineer prompts and tool calls in a consistent way.

From a systems perspective, this replaces static navigation trees with dynamic, agent-driven modules. The ‘navigation model’ is effectively a policy about which sub-agent to run in which context, and how to present its results back to the UI.
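The orchestrator pattern described above can be sketched in a few lines. This is a minimal illustration, not Google's implementation: the class names, the `SharedContext` fields, and the two sub-agents are hypothetical, and keyword matching stands in for the model-based intent detection a real system would use.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class SharedContext:
    """State the orchestrator keeps about the user, task, and app (hypothetical fields)."""
    user_prefs: Dict[str, str] = field(default_factory=dict)
    app_state: Dict[str, str] = field(default_factory=dict)
    history: List[str] = field(default_factory=list)


# A sub-agent is modeled as a plain function: (query, context) -> response text.
SubAgent = Callable[[str, SharedContext], str]


def summarizer(query: str, ctx: SharedContext) -> str:
    # Stand-in for a model call: truncate the current page text.
    text = ctx.app_state.get("page_text", "")
    return "Summary: " + text[:40]


def settings_agent(query: str, ctx: SharedContext) -> str:
    # Adjusts a presentation setting stored in the shared context.
    if "larger" in query.lower():
        ctx.user_prefs["text_size"] = "large"
    return f"Text size is now {ctx.user_prefs.get('text_size', 'default')}."


class Orchestrator:
    """Holds shared context and routes each query to one sub-agent."""

    def __init__(self) -> None:
        self.ctx = SharedContext()
        self.routes: Dict[str, SubAgent] = {
            "summarize": summarizer,
            "settings": settings_agent,
        }

    def detect_intent(self, query: str) -> str:
        # Assumption: simple keyword rules replace model-based intent detection.
        q = query.lower()
        if "font" in q or "larger" in q or "text size" in q:
            return "settings"
        return "summarize"

    def handle(self, query: str) -> str:
        intent = self.detect_intent(query)
        self.ctx.history.append(query)  # context persists across turns
        return self.routes[intent](query, self.ctx)
```

In this sketch the routing table plays the role of the ‘navigation model’: adding a capability means registering another sub-agent rather than adding another branch to a menu tree.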

Multimodal Gemini and RAG for video and environments

NAI is explicitly built on multimodal models like Gemini and Gemma that can process voice, text, and images in the same context.

In the case of accessible video, Google describes a two-stage pipeline:

- Offline indexing

  - The system generates dense visual and semantic descriptors along the video timeline.

  - These descriptors are stored in an index keyed by time and content.

- Online retrieval-augmented generation (RAG)

  - At playback time, when a user asks a question such as “What is the character wearing right now?”, the system retrieves the relevant descriptors.

  - A multimodal model conditions on these descriptors and the question to generate a concise, descriptive answer.

This design supports interactive queries during playback, not just pre-recorded audio description tracks. The same pattern generalizes to physical navigation scenarios where the agent needs to reason over a sequence of observations and user questions.
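To make the two stages concrete, here is a rough sketch of a time-keyed index and a retrieval step. Everything in it is an assumption for illustration: the `Descriptor` type, the word-overlap ranking, and the prompt layout are hypothetical, and the final call to a multimodal model is left out because the article does not describe it.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Descriptor:
    start: float  # seconds into the video
    end: float
    text: str     # scene description produced offline


def build_index(descriptors: List[Descriptor]) -> List[Descriptor]:
    """Offline stage: store descriptors ordered by start time."""
    return sorted(descriptors, key=lambda d: d.start)


def retrieve(index: List[Descriptor], playhead: float, question: str,
             window: float = 10.0, k: int = 2) -> List[Descriptor]:
    """Online stage: keep descriptors near the playhead, then rank them
    by word overlap with the question and by distance in time."""
    q_words = set(question.lower().replace("?", "").split())
    nearby = [d for d in index
              if d.start - window <= playhead <= d.end + window]

    def score(d: Descriptor):
        overlap = len(q_words & set(d.text.lower().split()))
        if d.start <= playhead <= d.end:
            distance = 0.0
        else:
            distance = min(abs(playhead - d.start), abs(playhead - d.end))
        return (-overlap, distance)  # more overlap first, then closer in time

    return sorted(nearby, key=score)[:k]


def build_prompt(question: str, retrieved: List[Descriptor]) -> str:
    """Assemble the text that would be passed to the model alongside the question."""
    context = "\n".join(
        f"[{d.start:.0f}-{d.end:.0f}s] {d.text}" for d in retrieved
    )
    return f"Scene descriptions:\n{context}\n\nQuestion: {question}\nAnswer concisely."
```

A production system would more plausibly use embedding similarity than word overlap. The point of the sketch is only the split between work done once per video and work done per question.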

Concrete NAI prototypes

Google’s NAI research is grounded in several deployed or pilot prototypes built with partner organizations such as RIT/NTID, The Arc of the United States, RNID, and Team Gleason.

StreetReaderAI

- Designed for blind and low vision users navigating urban environments.

- Combines an AI Describer, which processes camera and geospatial data, with an AI Chat interface for natural language queries.

- Maintains a temporal model of the environment, which allows questions like ‘Where was that bus stop?’ and answers like ‘It’s behind you, about 12 meters away.’
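One way to picture the temporal model is as a log of past observations that can be queried relative to the user's current position. The sketch below is an assumption-heavy illustration, not StreetReaderAI's design: the `Observation` and `TemporalMemory` names are hypothetical, positions are local planar coordinates in meters rather than real geospatial data, and the four-way direction wording is invented for the example.

```python
import math
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Observation:
    t: float     # time the object was seen, in seconds
    label: str   # e.g. "bus stop"
    x: float     # meters east of a local origin
    y: float     # meters north of a local origin


class TemporalMemory:
    """Append-only log of what the describer has seen so far."""

    def __init__(self) -> None:
        self.log: List[Observation] = []

    def add(self, obs: Observation) -> None:
        self.log.append(obs)

    def last_seen(self, label: str) -> Optional[Observation]:
        # Scan backwards so the most recent sighting wins.
        for obs in reversed(self.log):
            if obs.label == label:
                return obs
        return None


def describe_relative(user_xy: Tuple[float, float], heading_deg: float,
                      target: Observation) -> str:
    """Turn a remembered position into a direction and distance.
    heading_deg is a compass heading: 0 = north, 90 = east."""
    dx = target.x - user_xy[0]
    dy = target.y - user_xy[1]
    distance = math.hypot(dx, dy)
    bearing = math.degrees(math.atan2(dx, dy))        # compass bearing to target
    rel = (bearing - heading_deg + 180) % 360 - 180   # normalized to [-180, 180)
    if abs(rel) <= 45:
        side = "ahead of you"
    elif abs(rel) >= 135:
        side = "behind you"
    elif rel > 0:
        side = "to your right"
    else:
        side = "to your left"
    return f"It's {side}, about {round(distance)} meters away."
```

For example, if a bus stop was logged at the origin and the user has since walked 12 meters north while still facing north, `describe_relative((0, 12), 0, stop)` yields the kind of answer quoted above.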

Multimodal Agent Video Player (MAVP)

- Focused on online video access.

- Uses the above Gemini-based RAG pipeline to provide adaptive audio description.

- Lets users control narrative density, interrupt playback with questions, and receive answers based on indexed visual content.
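The article does not say how narrative density is controlled internally. One simple possibility, sketched here purely as an assumption, is to attach an importance score to each description and let the user's density setting pick a cutoff. The setting names and threshold values are hypothetical.

```python
from typing import Dict, List, Tuple

# Hypothetical mapping from a user-facing density setting
# to the minimum importance a description needs to be narrated.
DENSITY_THRESHOLDS: Dict[str, float] = {
    "minimal": 0.8,
    "standard": 0.5,
    "detailed": 0.0,
}


def select_descriptions(scored: List[Tuple[float, str]], density: str) -> List[str]:
    """Keep only descriptions whose importance meets the chosen density level."""
    threshold = DENSITY_THRESHOLDS[density]
    return [text for importance, text in scored if importance >= threshold]
```

With this shape, switching from "detailed" to "minimal" changes what is narrated without re-indexing the video, since the filter runs over descriptions that already exist.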

Grammar Laboratory

- A bilingual (American Sign Language and English) learning platform created by RIT/NTID with support from Google.org and Google.

- Uses Gemini to generate personalized multiple-choice questions.

- Presents content through ASL video, English captions, spoken narration, and transcripts, adapting the modality and difficulty to each learner.

Design process and curb-cut effect

The NAI documentation describes a structured process: investigate, build and refine, then iterate based on feedback. In a case study on video accessibility, the team:

- Defined target users across a spectrum from completely blind to visually impaired.

- Co-designed and ran user testing sessions with approximately 20 participants.

- Went through more than 40 iterations informed by 45 feedback sessions.

The resulting interface is expected to produce a curb-cut effect. Features created for users with disabilities (such as improved navigation, voice interactions, and adaptive summaries) often improve usability for a much broader population, including non-disabled users who face time pressure, cognitive load, or environmental constraints.

Key takeaways

- The agent is the UI, not an add-on: Natively Adaptive Interfaces (NAI) treat a multimodal AI agent as the primary interaction layer, so accessibility is controlled directly by the agent in the core UI, not as a separate overlay or post-hoc feature.

- Orchestrator + sub-agent architecture: NAI uses a central orchestrator that maintains shared context and routes work to specialized sub-agents (for example, summarization or settings customization), turning static navigation trees into dynamic, agent-driven modules.

- Multimodal Gemini + RAG for adaptive experiences: Prototypes such as the Multimodal Agent Video Player build dense visual indexes and use retrieval-augmented generation with Gemini to support interactive, grounded Q&A during video playback and other rich media scenarios.

- Real systems: StreetReaderAI, MAVP, Grammar Laboratory: NAI has been instantiated in concrete prototypes: StreetReaderAI for navigation, MAVP for video accessibility, and Grammar Laboratory for ASL/English learning, all powered by multimodal agents.

- Accessibility as a key design constraint: The framework encodes accessibility in configuration patterns (detect intent, add context, adjust settings) and takes advantage of the curb-cut effect, where solutions for disabled users improve robustness and usability for a broader user base.
