Real-time mode in Apache Spark™ Structured Streaming, announced in summer 2025, unlocks sub-second latency use cases across industries. This article explores an AdTech use case and shows how real-time mode, combined with another recent Spark enhancement (TransformWithState), can be used to achieve sub-second delivery of advertising event data.

By eliminating the need for an external streaming engine in low-latency use cases, Databricks customers can now benefit from a simplified stack, unified governance, and seamless integration with other Databricks capabilities. This supports the Databricks mission to democratize data and AI: enterprises can deliver business value more quickly, with less complexity and risk.

Use case overview

From Couch to Click: The Split-Second World of Streaming Ad Attribution

Picture this: You’re streaming your favorite ad-supported show. The moment the ad break begins, a rapid series of events unfolds in the background – ad requests are triggered based on audience profiles, auctions run in real time, and winning creatives are delivered in milliseconds. But serving ads is just the beginning.

Each impression should be associated with a downstream signal, such as clicks, view completions, or purchases. Only when these events are correlated in real time can advertisers measure true performance and ensure accurate billing – an attribution challenge that demands both speed and accuracy. In the programmatic advertising space, sub-second latency is required to effectively manage ad inventory.

Advertising contracts typically mandate a specific number of impressions (for example, a shoe company asks for one million domestic views for a new commercial). As soon as this contractual obligation is fulfilled, subsequent advertising slots may be sold to a different bidder. This impression-matching operation occurs on a large scale. The top streaming platforms handle millions of ad events simultaneously, and often accumulate hundreds of terabytes of data per day, while adhering to stringent latency standards.

Ad event processing basics

Modern programmatic advertising is not just about moving data; it is about correlating independent, high-velocity event streams in real time. A typical pipeline must capture and reconcile three distinct signals:

- Ad Requests (Intent): Generated when the user starts streaming. These payloads are enriched with the correlation keys (such as device ID) and the auction metadata needed to sell slots.

- Ad Impressions (Delivery): The critical “ACK” signal, fired when the ad is successfully rendered on the viewer’s device.

- Callback Events (Outcome): Downstream interactions that drive billing and optimization, such as clicks, view completions, or conversions.

Correlation Challenge: The complexity lies in linking these disparately timed events. This is not a simple stateless join; it is a sophisticated stateful operation that must:

- Bridge Asynchronous Timelines: Match an “impression” with a “request” that arrived seconds or minutes earlier.

- Resist Network Chaos: Gracefully handle events that arrive late because of client-side latency or network partitions.

- Manage State Lifecycle: Enforce strict expiration policies (TTL or timer-based state removal) to prevent memory bloat from sessions that never close.

- Scale Elastically: Process millions of events per second during major live sports events or broadcast premieres.
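The first three requirements can be illustrated with a minimal, engine-agnostic sketch in plain Python. This is not Spark code and not the pipeline described in this article: the `AdEvent` fields, the `Correlator` class, and the 60-second TTL are all hypothetical names and values chosen for illustration. For simplicity, the sketch uses event time as its clock and ignores impressions that arrive before their request.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional, Tuple


@dataclass(frozen=True)
class AdEvent:
    kind: str        # "request" or "impression"
    request_id: str  # hypothetical correlation key shared by both event kinds
    device_id: str
    ts_ms: int       # event time in epoch milliseconds


class Correlator:
    """Holds pending requests in memory and matches impressions to them."""

    def __init__(self, ttl_ms: int) -> None:
        self.ttl_ms = ttl_ms
        self.pending: Dict[str, AdEvent] = {}

    def expire(self, now_ms: int) -> List[str]:
        """Drop requests older than the TTL so state cannot grow without bound."""
        expired = [
            key for key, req in self.pending.items()
            if now_ms - req.ts_ms > self.ttl_ms
        ]
        for key in expired:
            del self.pending[key]
        return expired

    def process(self, event: AdEvent) -> Optional[Tuple[AdEvent, AdEvent]]:
        """Return a (request, impression) pair when an impression finds its request."""
        self.expire(event.ts_ms)
        if event.kind == "request":
            self.pending[event.request_id] = event
            return None
        if event.kind == "impression":
            request = self.pending.pop(event.request_id, None)
            return (request, event) if request is not None else None
        return None


correlator = Correlator(ttl_ms=60_000)
correlator.process(AdEvent("request", "req-1", "device-42", ts_ms=1_000))
match = correlator.process(AdEvent("impression", "req-1", "device-42", ts_ms=3_500))
if match:
    request, impression = match
    print(f"matched {request.request_id} after {impression.ts_ms - request.ts_ms} ms")
```

In a distributed engine the `pending` dictionary becomes partitioned, fault-tolerant keyed state, and expiration is handled by TTLs or timers rather than a scan on every event.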

Legacy implementation

For years, engineers faced a brutal choice: Spark’s simplicity for analytics, or the speed of specialized engines for operations. You couldn’t have both. To hit sub-second SLAs, teams were forced to stitch together fragmented stacks – often maintaining massive dedicated state stores to track ad impressions.

Real-World Complexity: A major media provider currently maintains a 170-node HBase cluster to manage the ecosystem for its legacy advertising platform. Not only is this expensive, it also creates a governance nightmare in which operational data is siloed away from the analytical lake.

Real-time mode for operational workloads: a new era for Spark

Spark Structured Streaming can now address the entire spectrum of streaming use cases. With Real-Time Mode (RTM) in Apache Spark Structured Streaming, combined with the powerful TransformWithState operator, we are seeing the convergence of analytical and operational streaming capabilities within a single, unified platform. For the first time, teams can build sophisticated, low-latency ad attribution pipelines entirely within the Spark ecosystem.

What is real-time mode?

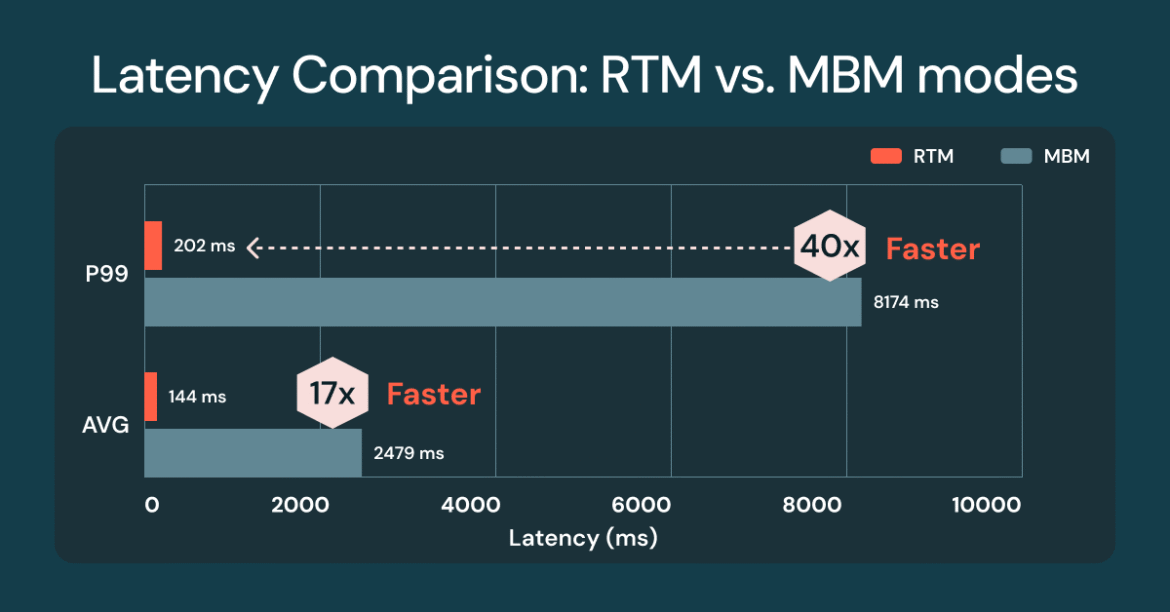

Real-time mode represents a fundamental architectural development in Spark Structured Streaming. Unlike traditional micro-batching, real-time mode processes events continuously as they arrive, achieving p99 latencies as low as single-digit milliseconds. Databricks benchmarks demonstrate notable performance characteristics:

- P99 Latencies: Single-digit milliseconds to ~300ms depending on workload complexity

- Throughput: Sustains high processing rates while keeping latency consistently low

- Stability: Reliable sub-second performance across various load patterns

This breakthrough enables Spark to compete directly with specialized streaming engines for the most demanding operational workloads, while maintaining the familiar DataFrame API and rich ecosystem integrations that make Spark so powerful for analytical use cases.

Spoiler alert: simplicity is the star of the show

Teams can enable real-time mode with a single configuration change – no rewriting or re-platforming required – while keeping the same Structured Streaming API they use today. The only change is the trigger set on the streaming query.
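A hedged sketch of what that trigger change can look like in PySpark is shown here. The helper name `start_realtime_query`, its parameters, and the "5 minutes" checkpoint interval are assumptions made for illustration, and the `realTime` trigger argument follows the form documented by Databricks for real-time mode; verify it against the documentation for your runtime version before relying on it.

```python
def start_realtime_query(events_df, brokers: str, topic: str, checkpoint: str):
    """Start a Kafka-sink streaming query that uses the real-time trigger.

    `events_df` is expected to be a streaming DataFrame that already has the
    `key`/`value` columns required by the Kafka sink.
    """
    return (
        events_df.writeStream
        .format("kafka")
        .option("kafka.bootstrap.servers", brokers)
        .option("topic", topic)
        .option("checkpointLocation", checkpoint)
        .outputMode("update")
        # Assumption: the real-time trigger takes a checkpoint interval string.
        # Everything above this line is unchanged from a micro-batch query.
        .trigger(realTime="5 minutes")
        .start()
    )
```

Because the helper only touches the writer, the same transformation logic can be switched between micro-batch and real-time execution by changing this one call.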

Benefits of real-time mode with TransformWithState

The TransformWithState operator provides the sophisticated state management capabilities needed for complex event correlation, a core requirement for the ad attribution use case. Key features of TransformWithState include object-oriented state management, composite data types, timer-driven logic, automatic TTL support, and schema evolution.

Code Example: Building a Real-Time Ad Attribution Pipeline

Pipeline Architecture Overview

A production ad attribution pipeline built on real-time mode follows this high-level architecture:

- Event Ingestion: High-throughput Kafka topics receive ad requests, impressions, and callback events from distributed ad servers and client applications.

- Stateful Correlation: TransformWithState operators maintain correlation state, matching impressions to requests based on user sessions, campaign identifiers, and temporal windows.

- Attribution Logic: Custom StatefulProcessor implementations encode the business rules for attribution modeling, handling complex scenarios such as view-through attribution and multi-touch attribution.

- Output Generation: Matched attribution events are written to downstream Kafka topics for real-time campaign optimization and offline analytical processing.

Implementation Deep-Dive

For an in-depth technical walkthrough, with example code that you can run to observe RTM performance, read this Databricks Community Blog. The source code in that blog post produces a benchmark chart comparing the two modes, and the numbers show a significant latency difference between micro-batch mode (MBM) and the new real-time mode (RTM).

State management strategy

Effective ad attribution requires sophisticated state management strategies:

- Request Tracking: Maintain state for active ad requests, keyed by user session and campaign identifiers, with automatic expiration based on the attribution window.

- Impression Correlation: Match incoming impressions to tracked requests using composite keys, handling scenarios where multiple impressions may match the same request.

- Late Event Handling: Watermarking, combined with stateful processing, enforces late-arrival policies that balance attribution accuracy, processing latency requirements, and system stability.

- State Optimization: Take advantage of TransformWithState to achieve efficient state handling, which is especially important at high event volumes.
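The late-arrival policy in the list above can be sketched in a few lines of plain Python. This is a simplified illustration, not Spark's implementation: the `WatermarkTracker` class and the 5-second allowed delay are hypothetical, and the watermark here advances on every event, whereas a real engine typically advances it at coarser intervals.

```python
from dataclasses import dataclass


@dataclass
class WatermarkTracker:
    """Derives a watermark as (max event time seen) minus (allowed delay)."""

    allowed_delay_ms: int
    max_event_ts_ms: int = 0

    @property
    def watermark_ms(self) -> int:
        return max(0, self.max_event_ts_ms - self.allowed_delay_ms)

    def admit(self, event_ts_ms: int) -> bool:
        """Return True if the event is on time or tolerably late, False if too late."""
        # Compare against the watermark as it stood before this event arrived.
        on_time = event_ts_ms >= self.watermark_ms
        if event_ts_ms > self.max_event_ts_ms:
            self.max_event_ts_ms = event_ts_ms
        return on_time


tracker = WatermarkTracker(allowed_delay_ms=5_000)
for ts in (10_000, 6_000, 4_000):
    decision = "accept" if tracker.admit(ts) else "drop"
    print(ts, decision)
```

A larger allowed delay improves attribution accuracy for slow clients but keeps state alive longer, which is the trade-off the policy has to settle.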

Production considerations

Performance and monitoring

Because real-time mode fundamentally changes the execution model from periodic micro-batches to continuous processing, engineers must adapt their observability strategy to prioritize tail latency and stability over simple averages.

- Latency Metrics: Track end-to-end processing time as a percentile distribution (for example p50, p95, and p99) instead of an average latency.

- Throughput Patterns: Observe processing rates under varying load conditions and traffic spikes.

- Error Recovery: Implement appropriate exception handling within the StatefulProcessor implementation.
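To see why percentiles matter more than averages here, consider a small plain-Python sketch. The `percentile` and `summarize` helpers and the sample latencies are hypothetical and exist only to illustrate the point; the nearest-rank method is one of several common percentile definitions.

```python
import math
from typing import Dict, Sequence


def percentile(samples: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least pct% of samples <= it."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[rank - 1]


def summarize(latencies_ms: Sequence[float]) -> Dict[str, float]:
    """Report the mean next to p50 and p99 so tail behavior is visible."""
    return {
        "mean": sum(latencies_ms) / len(latencies_ms),
        "p50": percentile(latencies_ms, 50),
        "p99": percentile(latencies_ms, 99),
    }


# 98 fast events and 2 slow ones: the mean hides the tail, p99 exposes it.
latencies = [10.0] * 98 + [2000.0] * 2
print(summarize(latencies))
```

With these made-up samples the median stays at 10 ms while p99 lands on the 2-second outliers, which is exactly the behavior a sub-second SLA needs to catch.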

Conclusion

The introduction of real-time mode in Apache Spark™ Structured Streaming is a significant moment in the evolution of stream processing. For the first time, organizations can build sophisticated, millisecond-latency operational workloads – such as real-time ad attribution – entirely within the Spark ecosystem.

This breakthrough eliminates the architectural fragmentation that has long plagued data engineering teams. You no longer have to choose between the analytical power of Spark and the operational performance of specialized engines. With real-time mode and TransformWithState, you can build unified pipelines that meet both analytical and operational needs.

The implications go beyond technical capabilities. Teams can now:

- Consolidate Infrastructure: Reduce operational overhead by eliminating specialized streaming engines.

- Accelerate Development: Leverage existing Spark expertise across all streaming use cases.

- Improve Reliability: Benefit from Spark’s mature fault tolerance and ecosystem integration.

- Enable Innovation: Focus on business logic rather than managing fragmented architectures.

Are you ready to revolutionize your real-time processing architecture? Real-time mode is now available in Public Preview on Databricks Runtime 16.4 LTS and above. We recommend using the latest DBR LTS version to take advantage of the latest platform improvements. Start building next-generation streaming applications today and discover the power of millisecond-latency processing combined with the simplicity and scale of Apache Spark™.

Frequently asked questions

What is Structured Streaming in Apache Spark™?

Structured Streaming is Apache Spark’s stream processing framework. It processes streaming data using the same DataFrame and Dataset APIs as batch workloads, with Spark managing execution and fault tolerance.

Where can I find a structured streaming programming guide for Spark 4.0.0 and later?

As of Spark 4.0.0, the guide is split into smaller pages, available in the official Apache Spark documentation.

What is the difference between Kafka Streams and Spark Structured Streaming?

Kafka Streams and Spark Structured Streaming are two different systems that serve different use cases. Kafka Streams is a lightweight stream processing library designed to build applications that process data stored in Kafka topics, typically embedded directly within Kafka client applications. Spark Structured Streaming is a distributed stream processing engine designed for large-scale analytics, stateful processing, and integration with diverse data sources and sinks beyond Kafka, including files, databases, and data lakes.

What is the difference between Spark Streaming (DStream) and Apache Spark Structured Streaming?

Spark Structured Streaming is the modern and recommended streaming engine in Apache Spark. It uses the DataFrame and Dataset APIs to provide a unified programming model for batch and streaming workloads. The older Spark Streaming API, based on DStreams, is considered legacy. Spark recommends migrating to Structured Streaming and avoiding new development with DStreams.