Voice AI agents are reshaping the way we interact with technology. From customer service and healthcare assistance to home automation and personal productivity, these intelligent virtual assistants are rapidly gaining popularity across industries. Their natural language capabilities, continuous availability, and increasing sophistication make them valuable tools for businesses seeking efficiency and individuals seeking a seamless digital experience.

Amazon Nova Sonic provides real-time, human-like voice conversations through a bidirectional streaming interface. It understands different speaking styles and produces expressive responses that adapt to both the words spoken and the way they are spoken. The model supports multiple languages and offers both masculine and feminine voices, making it ideal for customer support, marketing calls, voice assistants, and educational applications.

When compared with newer architectures like Amazon Nova Sonic – which combine speech understanding and generation in an end-to-end model – classic AI voice chat systems use cascading architectures with sequential processing. These systems process the user’s speech through a separate pipeline: The cascade model approach breaks down voice AI processing into separate components:

- Sound Activity Detection (VAD): Pre-processing VAD is required to detect when the user pauses or stops speaking.

- Speech-to-Text (STT): The user’s spoken words are converted into written text format by an automatic speech recognition (ASR) model.

- Large Language Model (LLM) Processing: The written text is then fed to the LLM or conversation manager, which analyzes the input and generates relevant text responses based on the context of the conversation.

- Text-to-speech (TTS): The AI’s text-based answer is then converted by the TTS model into natural-sounding spoken audio, which is then played for the user.

The following diagram shows the conceptual flow of how users interact with Nova Sonic for real-time voice conversations compared to a cascading voice assistant solution.

Main challenges of cascading architecture

While a cascading architecture offers benefits such as modular design, specialized components, and debuggability, cumulative latency and reduced interactivity are its drawbacks.

waterfall effect

Consider a voice assistant handling a simple weather question. In cascading pipelines, each processing step introduces latency and potential errors. Customer implementations showed how initial misinterpretations can complicate things through the pipeline, often resulting in irrelevant responses. This widespread impact complicated troubleshooting and negatively impacted the overall user experience.

timing is everything

Real conversations require natural time. Sequential processing can cause noticeable delays in response times. These interruptions in the flow of conversation can cause user friction.

integration challenge

Voice AI demands more than just speech processing – it requires natural interaction patterns. Customer feedback highlighted how organizing multiple components made it difficult to handle dynamic conversation elements such as interruptions or rapid exchanges. Engineering resources often focus more on pipeline management.

resource reality

Cascading architectures require independent computing resources, monitoring, and maintenance for each component. This architectural complexity impacts both development velocity and operational efficiency. As the volume of interactions increases, scaling challenges become acute, impacting system reliability and cost optimization.

Impact on sound assistant development

These insights drove key architectural decisions in Nova Sonic development, addressing the fundamental need for integrated speech-to-speech processing that enables natural, responsive voice experiences without the complexity of multi-component management.

comparing both viewpoints

To compare the speech-to-speech and cascade approaches to building voice AI agents, consider the following:

| think thought | Speech-to-speech (Nova Sonic) | cascade model |

| delay |

Optimized latency performance and TTFA We evaluate the latency performance of the Nova Sonic models using the Time to First Audio (TTFA 1.09) metric. TTFA measures the time elapsed from completion of the user’s spoken query to receipt of the first byte of response audio. Look Technical Report and Model Card. |

Potential additional latency and errors Cascade models can use multiple models in speech recognition, language understanding, and voice generation, but are challenged by additional latency and potential error propagation between stages. By using modern asynchronous orchestration frameworks like Pipecat and LiveKit, you can reduce latency. Use of streaming components and text-to-speech fillers helps maintain natural conversation flow and reduce lag |

| Architecture and development complexity |

simplified architecture Nova Sonic combines speech-to-text, natural language understanding and text-to-speech into one model with built-in tool usage and barge-in detection, providing an event-driven architecture for key input and output events and a bidirectional streaming API for a simplified developer experience. |

Potential complexity in architecture Developers need to select best-in-class models for each stage of the pipeline, while orchestrating additional components such as representative agents and tool usage, TTS fillers, and asynchronous pipelines for (VAD). |

| Model Selection and Optimization |

Less control over individual components Amazon Nova Sonic allows customization of voices, built-in tool usage, and integration of Amazon Bedrock Knowledge Base and Amazon Bedrock AgentCore. However, it provides less granular control over individual model components than fully modular cascade systems. |

Possible granular control at each step Cascade models provide greater control over each step by allowing individual tuning, replacement, and optimization of each model components such as STT, language understanding, and TTS independently. This includes Amazon Bedrock Marketplace, Amazon SageMaker AI, and fine-tuned models. This modularity enables choice and flexibility of models, making it ideal for complex or specialized capabilities requiring tailored performance. |

| cost structure |

Simplified cost structure through an integrated approach The Amazon Nova Sonic is priced on a token-based consumption model. |

Potential complexity in costs associated with multiple components Cascade models involve several components that need to be estimated to cost. This is especially important at large scale and high volumes. |

| Language and pronunciation support | Languages supported by Nova Sonic | Potentially broader language support through specialized models, including the ability to change language mid-conversation |

| area availability | Regions supported by Nova Sonic | Potentially broad area support due to the wide selection of models and the ability to self-host models on Amazon Elastic Kubernetes Service (Amazon EKS) or Amazon SageMaker. |

Both approaches also have some shared characteristics.

| Telephony and transportation options | Both cascade and speech-to-speech approaches support various telephony and transport protocols, such as WebRTC and WebSocket, which enable real-time, low-latency audio streaming over the Web and phone networks. These protocols facilitate the seamless, bidirectional audio exchange that is critical for natural conversational experiences, allowing voice AI systems to easily integrate with existing communications infrastructure while maintaining responsiveness and audio quality. |

| Evaluation, Observation, and Testing | Both cascade and speech-to-speech voice AI approaches can be systematically evaluated, observed, and tested for reliable comparison. It is recommended to invest in a voice AI assessment and observation system to gain confidence in production accuracy and performance. Such a system should be able to capture end-to-end metrics and conversation data to trace the entire input-to-output pipeline, comprehensively assessing the quality, latency, and robustness of conversations over time. |

| developer framework | Both cascade and speech-to-speech approaches are well supported by major open-source voice AI frameworks like Pipecat and LiveKit. These frameworks provide modular, flexible pipelines and real-time processing capabilities that developers can use to efficiently create, customize, and orchestrate voice AI models across a variety of components and interaction styles. |

When to use each approach

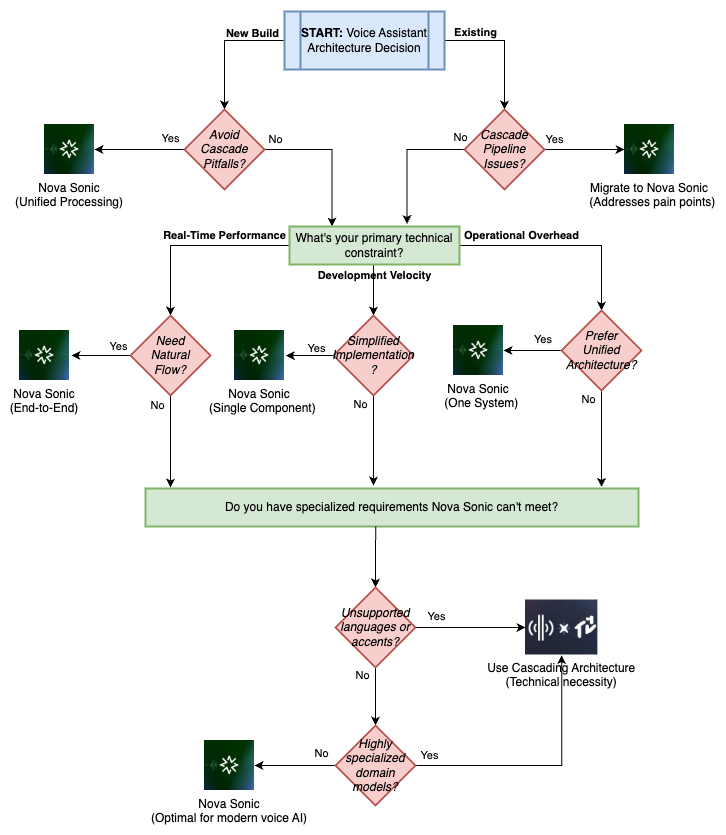

The following diagram shows a practical framework to guide your architectural decisions:

Use speech-to-speech when:

- Simplicity of implementation is important

- The use case fits within the capabilities of the Nova Sonic

- You’re looking for a real-time chat experience that feels human-like and offers low latency

Use the cascade model when:

- Customization of individual components is required

- You need to use specialized models from Amazon Bedrock Marketplace, Amazon SageMaker AI, or fine-tuned models for your specific domain

- You need support for languages or accents not covered by Nova Sonic

- Use cases requiring special processing at specific stages

conclusion

In this post, you learned how Amazon Nova Sonic is designed to solve some of the challenges faced by the cascade approach, simplifying the creation of voice AI agents and providing natural conversation capabilities. We also provided guidance on when to choose each approach to help you make informed decisions for your voice AI projects. If you’re looking to improve your Cascade Voice system, you know you have the basics in place to migrate to Nova Sonic so you can deliver seamless, real-time conversation experiences with a simplified architecture.

To learn more, check out Amazon Nova Sonic and contact your account team to learn how you can accelerate your voice AI initiatives.

resources

About the authors

Daniel Virgo A Solutions Architect at AWS, focused on AI and SaaS startups. As a former startup CTO, he enjoys collaborating with founders and engineering leaders to drive growth and innovation on AWS. Outside of work, Daniel loves traveling with a coffee in hand, appreciating nature and learning new ideas.

Daniel Virgo A Solutions Architect at AWS, focused on AI and SaaS startups. As a former startup CTO, he enjoys collaborating with founders and engineering leaders to drive growth and innovation on AWS. Outside of work, Daniel loves traveling with a coffee in hand, appreciating nature and learning new ideas.

Ravi Thakur is a Senior Solutions Architect at AWS based in Charlotte, NC. He has cross-industry experience in retail, financial services, healthcare, and energy and utilities, and is adept at solving complex business challenges using well-architected cloud patterns. His expertise spans microservices, cloud-native architecture, and generative AI. Apart from work, Ravi enjoys motorcycle riding and family vacations.

Ravi Thakur is a Senior Solutions Architect at AWS based in Charlotte, NC. He has cross-industry experience in retail, financial services, healthcare, and energy and utilities, and is adept at solving complex business challenges using well-architected cloud patterns. His expertise spans microservices, cloud-native architecture, and generative AI. Apart from work, Ravi enjoys motorcycle riding and family vacations.

Lana Zhang is a Senior Expert Solutions Architect for Generative AI at AWS within the Worldwide Expert Organization. He specializes in AI/ML with a focus on use cases such as AI voice assistants and multimodal understanding. She works closely with clients in various industries including media and entertainment, gaming, sports, advertising, financial services, and healthcare to help transform their business solutions through AI.

Lana Zhang is a Senior Expert Solutions Architect for Generative AI at AWS within the Worldwide Expert Organization. He specializes in AI/ML with a focus on use cases such as AI voice assistants and multimodal understanding. She works closely with clients in various industries including media and entertainment, gaming, sports, advertising, financial services, and healthcare to help transform their business solutions through AI.