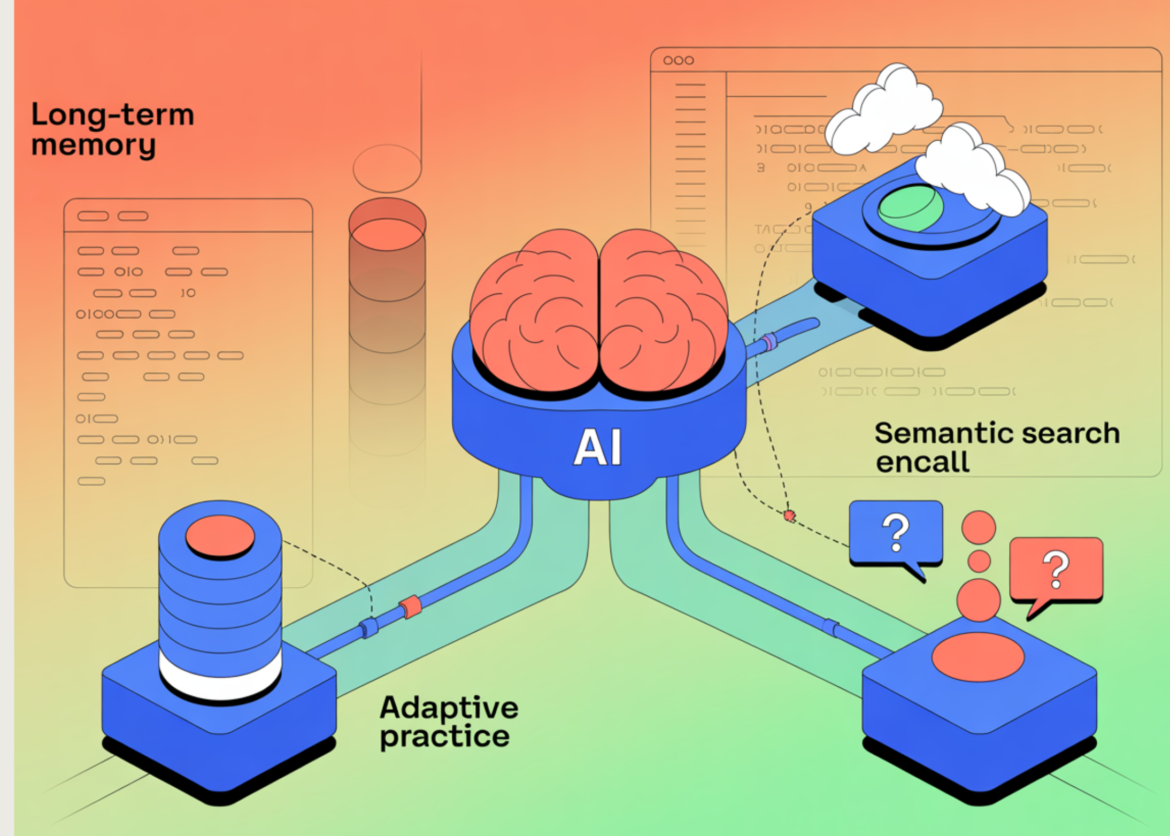

In this tutorial, we build a fully stateful personal tutor agent that moves beyond short-lived chat interactions and keeps learning about the user over time. We design the system to retain user preferences, track weak learning areas, and recall only the past context that is relevant when giving feedback. By combining durable storage, semantic retrieval, and adaptive mastery signals, we show how an agent can behave more like a long-term tutor than a stateless chatbot. We also keep the agent self-managed and context-aware, so it can improve its guidance without asking the user to repeat information.

!pip -q install "langchain>=0.2.12" "langchain-openai>=0.1.20" "sentence-transformers>=3.0.1" "faiss-cpu>=1.8.0.post1" "pydantic>=2.7.0"

import os, json, sqlite3, uuid
from datetime import datetime, timezone
from typing import List, Dict, Any

import numpy as np
import faiss
from pydantic import BaseModel, Field
from sentence_transformers import SentenceTransformer
from langchain_core.messages import SystemMessage, HumanMessage, AIMessage
from langchain_core.language_models.chat_models import BaseChatModel
from langchain_core.outputs import ChatGeneration, ChatResult

# Storage locations for the SQLite database, the FAISS index, and its metadata.
DB_PATH="/content/tutor_memory.db"
STORE_DIR="/content/tutor_store"
INDEX_PATH=f"{STORE_DIR}/mem.faiss"
META_PATH=f"{STORE_DIR}/mem_meta.json"
os.makedirs(STORE_DIR, exist_ok=True)

def now(): return datetime.now(timezone.utc).isoformat()

def db(): return sqlite3.connect(DB_PATH)

def init_db():
    # Create the three tables on first use: raw events, extracted memories, weak topics.
    c=db(); cur=c.cursor()
    cur.execute("""CREATE TABLE IF NOT EXISTS events(
        id TEXT PRIMARY KEY,user_id TEXT,session_id TEXT,role TEXT,content TEXT,ts TEXT)""")
    cur.execute("""CREATE TABLE IF NOT EXISTS memories(
        id TEXT PRIMARY KEY,user_id TEXT,kind TEXT,content TEXT,tags TEXT,importance REAL,ts TEXT)""")
    cur.execute("""CREATE TABLE IF NOT EXISTS weak_topics(
        user_id TEXT,topic TEXT,mastery REAL,last_seen TEXT,notes TEXT,PRIMARY KEY(user_id,topic))""")
    c.commit(); c.close()

We set up the execution environment and import the libraries needed to build a stateful agent. We also define the storage paths, helper functions for timestamps and database connections, and the SQLite schema for events, memories, and weak topics. This establishes the infrastructure on which the rest of the system depends.
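To make the role of the composite key in `weak_topics` concrete, here is a minimal sketch that recreates that one table in an in-memory SQLite database. The `:memory:` path and the sample rows are assumptions made for illustration; the tutorial itself writes to `DB_PATH`.

```python
import sqlite3

# In-memory database so the sketch leaves no files behind (illustrative assumption).
conn = sqlite3.connect(":memory:")
cur = conn.cursor()
cur.execute("""CREATE TABLE IF NOT EXISTS weak_topics(
    user_id TEXT, topic TEXT, mastery REAL, last_seen TEXT, notes TEXT,
    PRIMARY KEY(user_id, topic))""")

# Sample row; the values are made up for the example.
cur.execute("INSERT INTO weak_topics VALUES (?,?,?,?,?)",
            ("user_demo", "recursion", 0.415, "2024-01-01T00:00:00+00:00", "sample note"))

# A second INSERT for the same (user_id, topic) pair violates the primary key.
try:
    cur.execute("INSERT INTO weak_topics VALUES (?,?,?,?,?)",
                ("user_demo", "recursion", 0.3, "2024-01-02T00:00:00+00:00", "duplicate"))
    duplicate_rejected = False
except sqlite3.IntegrityError:
    duplicate_rejected = True

rows = cur.execute("SELECT topic, mastery FROM weak_topics WHERE user_id=?",
                   ("user_demo",)).fetchall()
conn.close()
```

Because the table holds at most one row per user and topic, any code that records a new signal for a known topic has to update the existing row rather than insert a new one, which is what `upsert_weak` does later in the tutorial.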

class MemoryItem(BaseModel):
    kind:str
    content:str
    tags:List[str]=Field(default_factory=list)
    importance:float=Field(0.5,ge=0,le=1)

class WeakTopicSignal(BaseModel):
    topic:str
    signal:str
    evidence:str
    confidence:float=Field(0.5,ge=0,le=1)

class Extracted(BaseModel):
    memories:List[MemoryItem]=Field(default_factory=list)
    weak_topics:List[WeakTopicSignal]=Field(default_factory=list)

class FallbackTutorLLM(BaseChatModel):
    # Keyword-driven stand-in used when no OpenAI API key is configured.
    @property
    def _llm_type(self)->str: return "fallback_tutor"

    def _generate(self, messages, stop=None, run_manager=None, **kwargs)->ChatResult:
        last=messages[-1].content if messages else ""
        content=self._respond(last)
        return ChatResult(generations=[ChatGeneration(message=AIMessage(content=content))])

    def _respond(self, text:str)->str:
        t=text.lower()
        if "extract_memories" in t:
            out={"memories":[],"weak_topics":[]}
            if "recursion" in t:
                out["weak_topics"].append({"topic":"recursion","signal":"struggled",
                    "evidence":"User indicates difficulty with recursion.","confidence":0.85})
            if "prefer" in t or "i like" in t:
                out["memories"].append({"kind":"preference","content":"User prefers concise explanations with examples.",
                    "tags":["style","preference"],"importance":0.55})
            return json.dumps(out)
        if "generate_practice" in t:
            return "\n".join([
                "Targeted Practice (Recursion):",
                "1) Implement factorial(n) recursively, then iteratively.",
                "2) Recursively sum a list; state the base case explicitly.",
                "3) Recursive binary search; return index or -1.",
                "4) Trace fibonacci(6) call tree; count repeated subcalls.",
                "5) Recursively reverse a string; discuss time/space.",
                "Mini-quiz: Why does missing a base case cause infinite recursion?"
            ])
        return "Tell me what you're studying and what felt hard; I’ll remember and adapt practice next time."

def get_llm():
    # Use OpenAI when a key is present; otherwise fall back to the local stub.
    key=os.environ.get("OPENAI_API_KEY","").strip()
    if key:
        from langchain_openai import ChatOpenAI
        return ChatOpenAI(model="gpt-4o-mini",temperature=0.2)
    return FallbackTutorLLM()

We define Pydantic data models that formally represent memories and weak-topic signals, along with a fallback chat model that is used when no OpenAI API key is available. The fallback returns canned, keyword-driven output, so the whole pipeline can run end to end without an external API. These models let the agent turn raw conversation into structured, actionable memory.
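The extractor contract used in the code above (memories with `kind`, `content`, `tags`, and an `importance` between 0 and 1) can be illustrated without any third-party packages. The sketch below uses a standard-library dataclass as a hypothetical stand-in for the Pydantic `MemoryItem`; the names `MemoryItemSketch` and `parse_memories` are assumptions introduced only for this example. Unlike the tutorial's own parsing, it skips invalid items one by one instead of discarding the whole payload.

```python
import json
from dataclasses import dataclass, field
from typing import List

@dataclass
class MemoryItemSketch:
    # Hypothetical stand-in for the tutorial's Pydantic MemoryItem.
    kind: str
    content: str
    tags: List[str] = field(default_factory=list)
    importance: float = 0.5

    def __post_init__(self):
        # Mirror the ge=0, le=1 constraint on importance.
        if not 0 <= self.importance <= 1:
            raise ValueError("importance must be in [0, 1]")

def parse_memories(raw: str) -> List[MemoryItemSketch]:
    """Parse extractor JSON and keep only the items that validate."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return []
    if not isinstance(data, dict):
        return []
    items = []
    for entry in data.get("memories", []):
        try:
            items.append(MemoryItemSketch(**entry))
        except (TypeError, ValueError):
            continue  # missing or unknown fields, or out-of-range importance
    return items

good = ('{"memories":[{"kind":"preference","content":"Prefers concise explanations.",'
        '"tags":["style"],"importance":0.55}]}')
bad = '{"memories":[{"kind":"preference","content":"x","importance":1.7}]}'

parsed_good = parse_memories(good)
parsed_bad = parse_memories(bad)
parsed_garbage = parse_memories("not json")
```

Whether to drop a single bad item or the whole response is a design choice; per-item skipping keeps more data when a model returns one malformed entry among several valid ones.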

EMBED_MODEL="sentence-transformers/all-MiniLM-L6-v2"
embedder=SentenceTransformer(EMBED_MODEL)

def load_meta():
    if os.path.exists(META_PATH):
        with open(META_PATH,"r") as f: return json.load(f)
    return []

def save_meta(meta):
    with open(META_PATH,"w") as f: json.dump(meta,f)

def normalize(x):
    # L2-normalize each row so that inner product equals cosine similarity.
    n=np.linalg.norm(x,axis=1,keepdims=True)+1e-12
    return x/n

def load_index(dim):
    if os.path.exists(INDEX_PATH): return faiss.read_index(INDEX_PATH)
    return faiss.IndexFlatIP(dim)

def save_index(ix): faiss.write_index(ix, INDEX_PATH)

EXTRACTOR_SYSTEM = (
    "You are a memory extractor for a stateful personal tutor.\n"
    "Return ONLY JSON with keys: memories (list of {kind,content,tags,importance}) "
    "and weak_topics (list of {topic,signal,evidence,confidence}).\n"
    "Store durable info only; do not store secrets."
)

llm=get_llm()
init_db()

# Probe the embedder once to learn the vector dimension, then load or create the index.
dim=embedder.encode(["x"],convert_to_numpy=True).shape[1]
ix=load_index(dim)
meta=load_meta()

We set up embedding-based semantic memory: a sentence-transformer embedder, a FAISS inner-product index, and a JSON metadata file saved alongside it. We also define the extractor system prompt, select the language model, and initialize the database. Because the database, index, and metadata all live on disk, the agent's memory can remain durable beyond a single run.
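The code above pairs `normalize` with `faiss.IndexFlatIP`, an index that scores by inner product. The reason this works as a similarity measure is that the inner product of two unit-length vectors equals their cosine similarity. Here is a small pure-Python sketch of that identity; the two sample vectors are arbitrary values chosen for the example.

```python
import math

def normalize(vec):
    # Scale the vector to unit length (small epsilon avoids division by zero).
    norm = math.sqrt(sum(x * x for x in vec)) + 1e-12
    return [x / norm for x in vec]

def inner_product(a, b):
    return sum(x * y for x, y in zip(a, b))

def cosine(a, b):
    # Textbook cosine similarity on the raw, unnormalized vectors.
    return inner_product(a, b) / (
        math.sqrt(inner_product(a, a)) * math.sqrt(inner_product(b, b))
    )

a = [3.0, 4.0]
b = [4.0, 3.0]

ip_normalized = inner_product(normalize(a), normalize(b))
cos_ab = cosine(a, b)  # 24 / 25 = 0.96
```

Normalizing once at write time and once at query time means the index itself only has to compute inner products.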

def log_event(user_id, session_id, role, content):
    c=db(); cur=c.cursor()
    cur.execute("INSERT INTO events VALUES (?,?,?,?,?,?)",
        (str(uuid.uuid4()),user_id,session_id,role,content,now()))
    c.commit(); c.close()

def upsert_weak(user_id, sig:WeakTopicSignal):
    c=db(); cur=c.cursor()
    cur.execute("SELECT mastery,notes FROM weak_topics WHERE user_id=? AND topic=?",(user_id,sig.topic))
    row=cur.fetchone()
    # Move mastery down for "struggled", up for "improved", scaled by confidence.
    delta=(-0.10 if sig.signal=="struggled" else 0.10 if sig.signal=="improved" else 0.0)*sig.confidence
    if row is None:
        mastery=float(np.clip(0.5+delta,0,1)); notes=sig.evidence
        cur.execute("INSERT INTO weak_topics VALUES (?,?,?,?,?)",(user_id,sig.topic,mastery,now(),notes))
    else:
        # Keep only the most recent 2000 characters of accumulated notes.
        mastery=float(np.clip(row[0]+delta,0,1)); notes=(row[1]+" | "+sig.evidence)[-2000:]
        cur.execute("UPDATE weak_topics SET mastery=?,last_seen=?,notes=? WHERE user_id=? AND topic=?",
            (mastery,now(),notes,user_id,sig.topic))
    c.commit(); c.close()

def store_memory(user_id, m:MemoryItem):
    mem_id=str(uuid.uuid4())
    c=db(); cur=c.cursor()
    cur.execute("INSERT INTO memories VALUES (?,?,?,?,?,?,?)",
        (mem_id,user_id,m.kind,m.content,json.dumps(m.tags),float(m.importance),now()))
    c.commit(); c.close()
    # Embed the memory, add it to the index, and append matching metadata at the same position.
    v=embedder.encode([m.content],convert_to_numpy=True).astype("float32")
    v=normalize(v); ix.add(v)
    meta.append({"mem_id":mem_id,"user_id":user_id,"kind":m.kind,"content":m.content,
        "tags":m.tags,"importance":m.importance,"ts":now()})
    save_index(ix); save_meta(meta)

We log every interaction, update per-topic mastery scores, and store long-term memories reliably. Each memory is written to SQLite, encoded as a normalized vector, added to the vector index, and recorded in the metadata list for later retrieval. This enables relevance-based recall instead of blindly loading all previous context.
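The mastery arithmetic inside `upsert_weak` is easier to follow in isolation. The sketch below restates the same rule as a pure function: a step of 0.10 down for `"struggled"` or up for `"improved"`, scaled by the signal's confidence and clipped to the range 0 to 1. The function name `update_mastery` and the sample inputs are assumptions for illustration.

```python
def update_mastery(mastery, signal, confidence):
    """Return the new mastery after one signal, clipped to [0, 1]."""
    step = {"struggled": -0.10, "improved": 0.10}.get(signal, 0.0)
    return min(1.0, max(0.0, mastery + step * confidence))

m0 = 0.5                                      # starting value for a new topic
m1 = update_mastery(m0, "struggled", 0.85)    # 0.5 - 0.10 * 0.85
m2 = update_mastery(m1, "improved", 0.5)      # m1 + 0.10 * 0.5
m3 = update_mastery(0.02, "struggled", 1.0)   # would go negative, so it is clipped
m4 = update_mastery(0.5, "neutral", 0.9)      # unknown signals leave mastery unchanged
```

With the fallback model's recursion signal (confidence 0.85), a new topic starts at 0.5 and drops to roughly 0.415 after the first "struggled" message.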

def extract(user_text)->Extracted:
    msg="extract_memories\n\nUser message:\n"+user_text
    r=llm.invoke([SystemMessage(content=EXTRACTOR_SYSTEM),HumanMessage(content=msg)]).content
    try:
        d=json.loads(r)
        return Extracted(
            memories=[MemoryItem(**x) for x in d.get("memories",[])],
            weak_topics=[WeakTopicSignal(**x) for x in d.get("weak_topics",[])]
        )
    except Exception:
        # Malformed or invalid extractor output: store nothing for this turn.
        return Extracted()

def recall(user_id, query, k=6):
    if ix.ntotal==0: return []
    q=embedder.encode([query],convert_to_numpy=True).astype("float32")
    q=normalize(q)
    scores, idxs = ix.search(q,k)
    out=[]
    for s,i in zip(scores[0].tolist(), idxs[0].tolist()):
        if i<0 or i>=len(meta): continue
        m=meta[i]
        # Keep only this user's memories with similarity of at least 0.25.
        if m["user_id"]!=user_id or s<0.25: continue
        out.append({**m,"score":float(s)})
    # Re-rank by similarity weighted by stored importance.
    out.sort(key=lambda r: r["score"]*(0.6+0.4*r["importance"]), reverse=True)
    return out

def weak_snapshot(user_id):
    c=db(); cur=c.cursor()
    cur.execute("SELECT topic,mastery,last_seen FROM weak_topics WHERE user_id=? ORDER BY mastery ASC LIMIT 5",(user_id,))
    rows=cur.fetchall(); c.close()
    return [{"topic":t,"mastery":float(m),"last_seen":ls} for t,m,ls in rows]

def tutor_turn(user_id, session_id, user_text):
    log_event(user_id,session_id,"user",user_text)
    ex=extract(user_text)
    for w in ex.weak_topics: upsert_weak(user_id,w)
    for m in ex.memories: store_memory(user_id,m)
    rel=recall(user_id,user_text,k=6)
    weak=weak_snapshot(user_id)
    prompt={
        "recalled_memories":[{"kind":x["kind"],"content":x["content"],"score":x["score"]} for x in rel],
        "weak_topics":weak,
        "user_message":user_text
    }
    gen = llm.invoke([SystemMessage(content="You are a personal tutor. Use recalled_memories only if relevant."),
        HumanMessage(content="generate_practice\n\n"+json.dumps(prompt))]).content
    log_event(user_id,session_id,"assistant",gen)
    return gen, rel, weak

USER_ID="user_demo"
SESSION_ID=str(uuid.uuid4())

print("✅ Ready. Example run:\n")
ans, rel, weak = tutor_turn(USER_ID, SESSION_ID, "Last week I struggled with recursion. I prefer concise explanations.")
print(ans)
print("\nRecalled:", [r["content"] for r in rel])
print("Weak topics:", weak)

We orchestrate the full tutor interaction loop, combining extraction, storage, recall, and response generation. We update mastery scores, retrieve relevant memories, and generate targeted exercises. This completes the transformation from a stateless chatbot to a long-term, adaptive tutor.
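The filtering and re-ranking step inside `recall` can be tried on its own with hand-made search hits. The sketch below applies the same three rules: keep only the current user's memories, drop hits whose similarity is below 0.25, and sort by `score * (0.6 + 0.4 * importance)`. The `rerank` helper and the sample hits are assumptions for illustration; in the tutorial the scores come from the FAISS search.

```python
def rerank(hits, user_id, min_score=0.25):
    """Filter hits by user and similarity, then sort by importance-weighted score."""
    kept = [h for h in hits if h["user_id"] == user_id and h["score"] >= min_score]
    kept.sort(key=lambda h: h["score"] * (0.6 + 0.4 * h["importance"]), reverse=True)
    return kept

# Made-up hits: (similarity score, importance) pairs for two users.
hits = [
    {"user_id": "u1", "content": "A", "score": 0.70, "importance": 0.2},  # weighted 0.476
    {"user_id": "u1", "content": "B", "score": 0.60, "importance": 1.0},  # weighted 0.600
    {"user_id": "u2", "content": "C", "score": 0.90, "importance": 0.9},  # other user
    {"user_id": "u1", "content": "D", "score": 0.10, "importance": 1.0},  # below threshold
]

ranked = [h["content"] for h in rerank(hits, "u1")]
```

Here memory B outranks A despite a lower raw similarity, because the weight ranges from 0.6 for importance 0 to 1.0 for importance 1. Importance can therefore reorder close matches, but it cannot rescue a hit that falls below the similarity threshold.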

In conclusion, we implemented a tutor agent that remembers, adapts, and carries its knowledge across sessions. We showed how structured memory extraction, long-term persistence, and relevance-based recall work together to overcome the “goldfish memory” limitation common in most agents. The resulting system keeps refining its picture of the user's weak areas and generates targeted exercises for them, providing a practical foundation for building stateful, long-horizon AI agents that improve with continued interaction.
