In the development of autonomous agents, the technical hurdle is shifting from model logic to the execution environment. While large language models (LLMs) can generate code and multi-step plans, providing a functional and isolated environment to run that code remains a significant infrastructure challenge.

The agent-infra sandbox, an open-source project, addresses this by providing an ‘all-in-one’ (AIO) execution layer. Unlike standard containerization, which often requires manual configuration for tool-chaining, the AIO Sandbox integrates a browser, a shell, and a file system into a single environment designed for AI agents.

All-in-One Architecture

The primary architectural bottleneck in agent development is environment fragmentation. Typically, an agent might need a browser to fetch data, a Python interpreter to analyze it, and a file system to store the results. Managing these as separate services introduces latency and synchronization complexity.

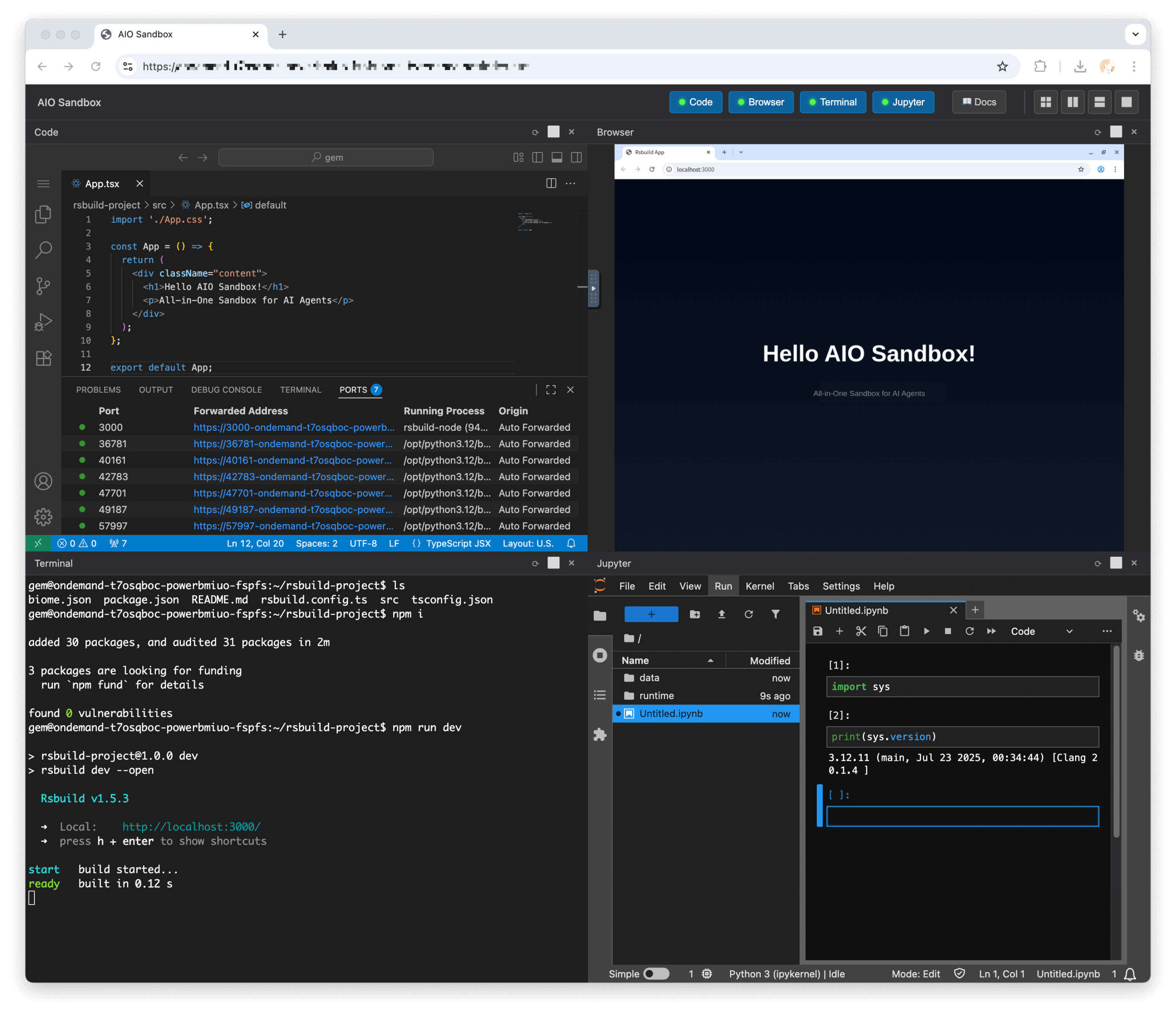

Agent-Infra consolidates these requirements into a single containerized environment. The sandbox includes:

- Computer Interaction: A Chromium browser controlled via the Chrome DevTools Protocol (CDP), with documented support for Playwright.

- Code Execution: Pre-configured runtimes for Python and Node.js.

- Standard Tooling: A bash terminal and a file system accessible to all modules.

- Development Interface: Integrated VSCode Server and Jupyter Notebook instances for monitoring and debugging.
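CDP is a JSON-based protocol: a client sends messages that carry an `id`, a `method` name, and `params`, and the browser matches each response to its request by `id`. The sketch below only builds two such messages (navigate, then read the page title); it makes no assumption about how the sandbox exposes its CDP endpoint, and the target URL is a placeholder. In practice a library such as Playwright (for example via `chromium.connect_over_cdp(...)`) would manage the WebSocket connection for you.

```python
import itertools
import json

# Monotonically increasing message ids; CDP pairs each response with its request by id.
_ids = itertools.count(1)


def cdp_command(method: str, **params) -> str:
    """Serialize one Chrome DevTools Protocol command as a JSON string."""
    return json.dumps({"id": next(_ids), "method": method, "params": params})


# Navigate the page, then evaluate a JavaScript expression in it.
navigate = cdp_command("Page.navigate", url="https://example.com")
read_title = cdp_command(
    "Runtime.evaluate", expression="document.title", returnByValue=True
)

print(navigate)
print(read_title)
```

`Page.navigate` and `Runtime.evaluate` are standard CDP methods; higher-level tools like Playwright emit messages of this shape under the hood.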

Integrated File System

One of the main technical features of the sandbox is its integrated file system. In a standard agentic workflow, an agent might download a file using a browser-based tool. In a fragmented setup, that file must then be moved programmatically to a different environment for processing.

The AIO Sandbox uses a shared storage layer, so a file downloaded through the Chromium browser is immediately visible to the Python interpreter and the Bash shell. This shared state enables seamless transitions between tasks: for example, an agent can download a CSV from a web portal and immediately run a data-cleaning script on it in Python, with no external data management.
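To make the CSV scenario concrete, the sketch below uses a single temporary directory as a stand-in for the sandbox's shared file system. The `browser_download` and `clean_csv` functions are hypothetical stand-ins for the browser and Python tools, not part of the Agent-Infra API; the point is only that the second step reads the file at the same path the first step wrote it, with no copy or volume mount in between.

```python
import csv
import tempfile
from pathlib import Path

# One directory stands in for the sandbox's shared file system (illustrative assumption).
workspace = Path(tempfile.mkdtemp())


def browser_download(dest: Path) -> Path:
    """Hypothetical browser tool: pretend to download a CSV into the workspace."""
    dest.write_text("name,score\nalice, 90\nbob,\ncarol,75\n", encoding="utf-8")
    return dest


def clean_csv(src: Path, dst: Path) -> int:
    """Hypothetical Python tool: strip whitespace and drop rows with a missing score."""
    with src.open(newline="", encoding="utf-8") as f:
        rows = [{k: v.strip() for k, v in row.items()} for row in csv.DictReader(f)]
    kept = [r for r in rows if r["score"]]
    with dst.open("w", newline="", encoding="utf-8") as f:
        writer = csv.DictWriter(f, fieldnames=["name", "score"])
        writer.writeheader()
        writer.writerows(kept)
    return len(kept)


# Step 1 writes the file; step 2 reads it from the same path right away.
raw = browser_download(workspace / "report.csv")
kept = clean_csv(raw, workspace / "report_clean.csv")
print(f"kept {kept} rows")
```

In a multi-container setup, the same two steps would need a shared volume or an explicit transfer between them.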

Model Context Protocol (MCP) Integration

The sandbox includes native support for the Model Context Protocol (MCP), an open standard that facilitates communication between AI models and tools. By providing pre-configured MCP servers, Agent-Infra allows developers to expose sandbox capabilities to LLMs through a standardized protocol.

Available MCP servers include:

- Browser: For web navigation and data extraction.

- File: For operations on the integrated file system.

- Shell: For executing system commands.

- Markitdown: For converting document formats to Markdown to optimize them for LLM consumption.
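MCP messages are JSON-RPC 2.0 requests: a client typically discovers tools with `tools/list` and invokes one with `tools/call`, passing the tool `name` and its `arguments`. The sketch below builds both requests without sending them (the stdio or HTTP transport is left out). The tool name `run_command` and its `command` argument are hypothetical; the sandbox's real tool names would come from its `tools/list` response.

```python
import json
from itertools import count
from typing import Optional

_request_ids = count(1)


def mcp_request(method: str, params: Optional[dict] = None) -> dict:
    """Build a JSON-RPC 2.0 request object of the kind MCP clients send."""
    msg = {"jsonrpc": "2.0", "id": next(_request_ids), "method": method}
    if params is not None:
        msg["params"] = params
    return msg


# 1. Ask the server which tools it exposes (standard MCP method).
list_tools = mcp_request("tools/list")

# 2. Invoke one tool. "run_command" and "command" are hypothetical names used
#    only for illustration; query tools/list for the real ones.
call_shell = mcp_request(
    "tools/call",
    {"name": "run_command", "arguments": {"command": "ls -la /tmp"}},
)

print(json.dumps(list_tools))
print(json.dumps(call_shell))
```

Because every server speaks this same request shape, an agent framework can route calls to the browser, file, shell, or Markitdown servers without tool-specific glue code.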

Isolation and Deployment

The sandbox is designed for ‘enterprise-grade Docker deployments’ with a focus on isolation and scalability. Although it provides a persistent environment for complex tasks, such as maintaining a terminal session over multiple turns, it is designed to be lightweight enough for high-density deployments.
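A persistent session means that state created in one agent turn is still available in the next. The sketch below models this with a Python namespace that outlives individual `run` calls, similar in spirit to a Jupyter kernel. `PersistentSession` is a hypothetical helper, not the sandbox's implementation, and `exec()` provides no isolation by itself; in the sandbox that role belongs to the container.

```python
import contextlib
import io


class PersistentSession:
    """Hypothetical multi-turn session: variables survive between run() calls.

    Illustrates persistence only; exec() offers no isolation, which in the
    sandbox is provided by the container instead.
    """

    def __init__(self) -> None:
        self.namespace: dict = {}

    def run(self, code: str) -> str:
        buffer = io.StringIO()
        with contextlib.redirect_stdout(buffer):
            exec(code, self.namespace)  # same dict every turn, so state persists
        return buffer.getvalue()


session = PersistentSession()
session.run("rows = [3, 1, 2]")              # turn 1: create state
session.run("rows.sort()")                   # turn 2: mutate it
print(session.run("print(sum(rows), rows)"))  # turn 3: read it back
```

A stateless executor would instead start each call with an empty namespace, forcing the agent to recreate `rows` every turn.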

Deployment and control:

- Infrastructure: The project includes Kubernetes (K8s) deployment examples, which allow teams to leverage K8s-native features like resource limits (CPU and memory) to manage the sandbox’s footprint.

- Container Isolation: By running agent activities within a dedicated container, the sandbox provides a layer of isolation between the agent’s generated code and the host system.

- Access: The environment is managed through an API and SDK, which allow developers to programmatically trigger commands, execute code, and manage environment state.
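The article does not list the API's endpoints, so the following is only a hedged illustration of the pattern: it builds (but does not send) an HTTP request asking a sandbox to run a shell command. The base URL, the `/v1/shell/exec` path, and the JSON field names are hypothetical placeholders; the project's own SDK and documentation define the real interface.

```python
import json
import urllib.request

# Hypothetical values: replace with the endpoint and schema from the project's docs.
BASE_URL = "http://localhost:8080"
EXEC_PATH = "/v1/shell/exec"


def build_exec_request(command: str, timeout_s: int = 30) -> urllib.request.Request:
    """Build a POST request asking a (hypothetical) sandbox API to run a command."""
    body = json.dumps({"command": command, "timeout": timeout_s}).encode("utf-8")
    return urllib.request.Request(
        BASE_URL + EXEC_PATH,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


req = build_exec_request("python3 --version")
print(req.get_method(), req.full_url)
# Sending it against a running sandbox would be: urllib.request.urlopen(req, timeout=35)
```

Keeping request construction separate from sending makes the call easy to inspect or log before an agent's command reaches the environment.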

Technical Comparison: Traditional Docker vs. AIO Sandbox

| Feature | Traditional Docker Approach | AIO Sandbox Approach (Agent-Infra) |
| --- | --- | --- |
| Architecture | Typically multi-container (one for the browser, one for code, one for the shell). | Integrated container: browser, shell, Python, and IDE (VSCode/Jupyter) in one runtime. |
| Data handling | Moving files between containers requires volume mounts or manual API “plumbing”. | Integrated file system: files are shared natively; browser downloads appear immediately in the shell and Python. |
| Agent integration | Custom “glue code” is required to map LLM actions to container commands. | Native MCP support: pre-configured Model Context Protocol servers for standard agent discovery. |
| User interface | CLI-based; web UIs such as VSCode or VNC require significant manual setup. | Built-in visibility: integrated VNC (for Chromium), VSCode Server, and Jupyter are ready out of the box. |
| Resource control | Managed via standard Docker/K8s cgroups and resource limits. | Depends on the underlying orchestrator (K8s/Docker) for resource throttling and limits. |
| Connectivity | Standard Docker bridge/host networking; requires manual proxy setup. | CDP-based browser control: browser interaction through the Chrome DevTools Protocol. |
| Persistence | Containers are generally long-lived or reset manually; state management is left to the developer. | Stateful session support: persistent terminals and workspace state throughout the task lifecycle. |
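Since resource throttling is delegated to the orchestrator, limits are set the usual Kubernetes way, on the container spec. The sketch below generates a minimal Pod manifest with CPU and memory requests and limits. The image name, port, and sizes are placeholder assumptions, not values from the project's published K8s examples, which should take precedence in practice.

```python
import json


def sandbox_pod(name: str, image: str, cpu: str, memory: str, port: int) -> dict:
    """Build a minimal Kubernetes Pod manifest with resource requests and limits."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name, "labels": {"app": "aio-sandbox"}},
        "spec": {
            "containers": [
                {
                    "name": "sandbox",
                    "image": image,
                    "ports": [{"containerPort": port}],
                    "resources": {
                        # Scheduling uses requests; limits are enforced via cgroups at runtime.
                        "requests": {"cpu": cpu, "memory": memory},
                        "limits": {"cpu": cpu, "memory": memory},
                    },
                }
            ]
        },
    }


# Placeholder image, port, and sizes; substitute values from the project's own examples.
manifest = sandbox_pod("agent-sandbox-1", "example.registry/aio-sandbox:latest", "2", "4Gi", 8080)
print(json.dumps(manifest, indent=2))  # kubectl apply -f accepts JSON as well as YAML
```

Setting requests equal to limits places the Pod in the Guaranteed QoS class, which makes per-sandbox footprint predictable when packing many sandboxes onto a node.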

Scaling the Agent Stack

While the core sandbox is open-source (Apache-2.0), the platform is positioned as a scalable solution for teams building complex agentic workflows. By reducing ‘agent ops’ overhead (the work required to maintain the execution environment and resolve dependency conflicts), the sandbox allows developers to focus on the agent's logic rather than the underlying runtime.

As AI agents evolve from simple chatbots into operators capable of interacting with the web and local files, the execution environment becomes a critical component of the stack. The agent-infra team is positioning the AIO Sandbox as a standardized, lightweight runtime for this transition.


Michael Sutter is a data science professional and holds a Master of Science in Data Science from the University of Padova. With a solid foundation in statistical analysis, machine learning, and data engineering, Michael excels in transforming complex datasets into actionable insights.