Ten years ago, Google DeepMind’s AI program AlphaGo shocked the world by defeating South Korean Go player Lee Sedol. And in the years since, AI has turned the game upside down. It has overturned age-old theories about the best moves and introduced entirely new theories. Players now train to replicate the AI’s moves as closely as possible rather than inventing their own, even when the machine’s thinking remains mysterious to them. Today, it is essentially impossible to compete professionally without using AI. Some say that technology has taken away creativity, while others think that there is still room for human invention. Meanwhile, AI is democratizing access to training, and more female players are climbing the ranks as a result.

For Shin Jin-seo, the world’s top-ranked Go player, AI is an invaluable training partner. Every morning, he sits down at his computer and opens a program called KataGo. Nicknamed “Shintelligence” for how closely his moves mimic those of an AI, he traces glowing “blue spots” that represent the program’s suggestion for the best next move, rearranging the stones on a digital grid to try to understand the machine’s thinking. “I constantly think about why the AI chose this step,” he says.

While training for a match, Shin spends most of his waking hours focusing on KataGo. “It’s almost like an ascetic practice,” he says. According to a study conducted in 2022 by the Korean Baduk League, Shin’s moves matched the AI’s 37.5% of the time, well above the 28.5% average found in the study among all players.

“My game has changed a lot because I have to follow instructions suggested by the AI to some extent,” says Shin. The Korea Baduk Association says it has contacted Google DeepMind in hopes of arranging a match between Shin and AlphaGo to celebrate the 10th anniversary of the program’s victory over Lee. A spokesperson for Google DeepMind said the company could not provide information at this time. But if there is a new match, Shin, who has trained on more advanced AI programs, is optimistic he will win. “AlphaGo still had some flaws, so I thought I could beat it if I targeted those weaknesses,” he says.

AI rewrites the Go playbook

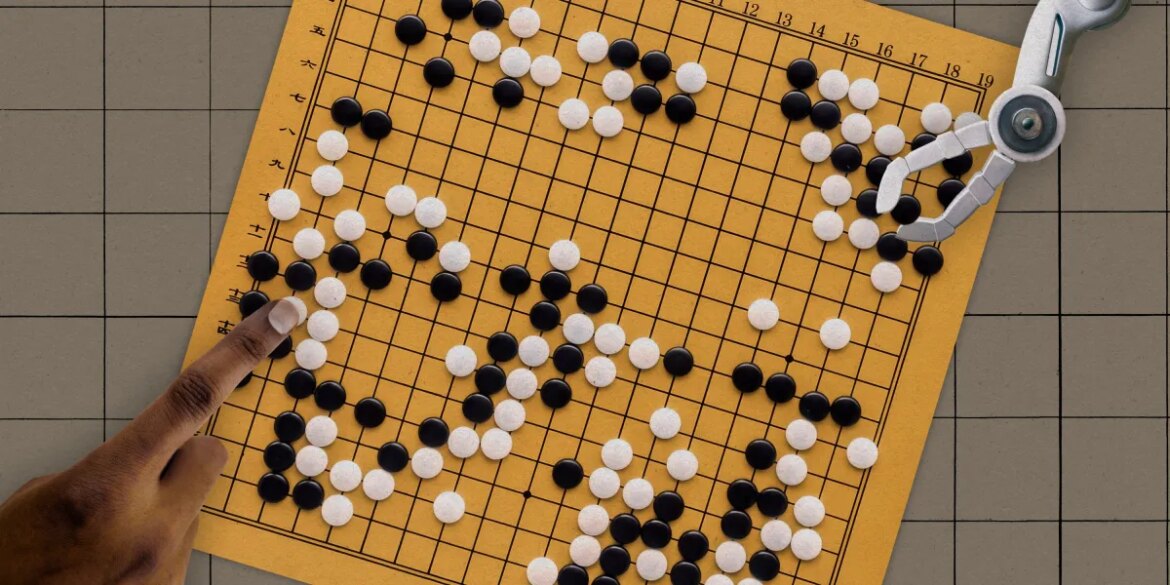

Go is an abstract strategy board game invented in China 2,500 years ago. Two players take turns placing black and white stones on a 19×19 grid, with the goal of conquering territory by surrounding their opponent’s stones. It is a game of staggering mathematical complexity. The number of possible board configurations, about 10^170, dwarfs the number of atoms in the universe. If chess is a battle, Go is a war. You suffocate your enemy in one corner while blocking attacks in the other.
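For readers who want to check the scale of that number, here is a rough back-of-the-envelope sketch. It is not the exact count of legal positions. It only computes a simple upper bound by treating each of the 361 points as empty, black, or white, which overcounts because many such arrangements are not legal. The figure of 10^80 atoms is a commonly cited estimate for the observable universe and is an assumption here, and the names in the code are illustrative.

```python
# Rough upper bound on Go board configurations: every point on the
# 19x19 grid is either empty, black, or white. This overcounts, since
# many of these arrangements are not legal positions.
BOARD_SIZE = 19
POINTS = BOARD_SIZE * BOARD_SIZE  # 361 intersections

upper_bound = 3 ** POINTS  # Python integers have arbitrary precision


def order_of_magnitude(n: int) -> int:
    """Return the power of ten of a positive integer (digits minus one)."""
    return len(str(n)) - 1


# Assumption: a commonly cited estimate of about 10^80 atoms
# in the observable universe.
ATOMS_EXPONENT = 80

bound_exponent = order_of_magnitude(upper_bound)
print(f"Points on the board: {POINTS}")
print(f"Upper bound on configurations: about 10^{bound_exponent}")
print(f"Orders of magnitude beyond the atom estimate: {bound_exponent - ATOMS_EXPONENT}")
```

The bound comes out a little above the figure quoted in the text, which is what one would expect once illegal arrangements are excluded.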

To train an AI to play Go, researchers feed a vast trove of human Go moves into a neural network, a computing system that mimics the web of neurons in the human brain. AlphaGo, later renamed AlphaGo Lee after its victory over Lee Sedol, was trained on 30 million Go moves and refined by playing millions of games against itself. In 2017, its successor, AlphaGo Zero, learned Go from scratch. Without studying any human games, it learned simply by playing against itself, guided only by the rules of the game. The blank-slate approach proved more powerful, unrestricted by the limits of human knowledge. After three days of training, it defeated AlphaGo Lee 100 games to zero.