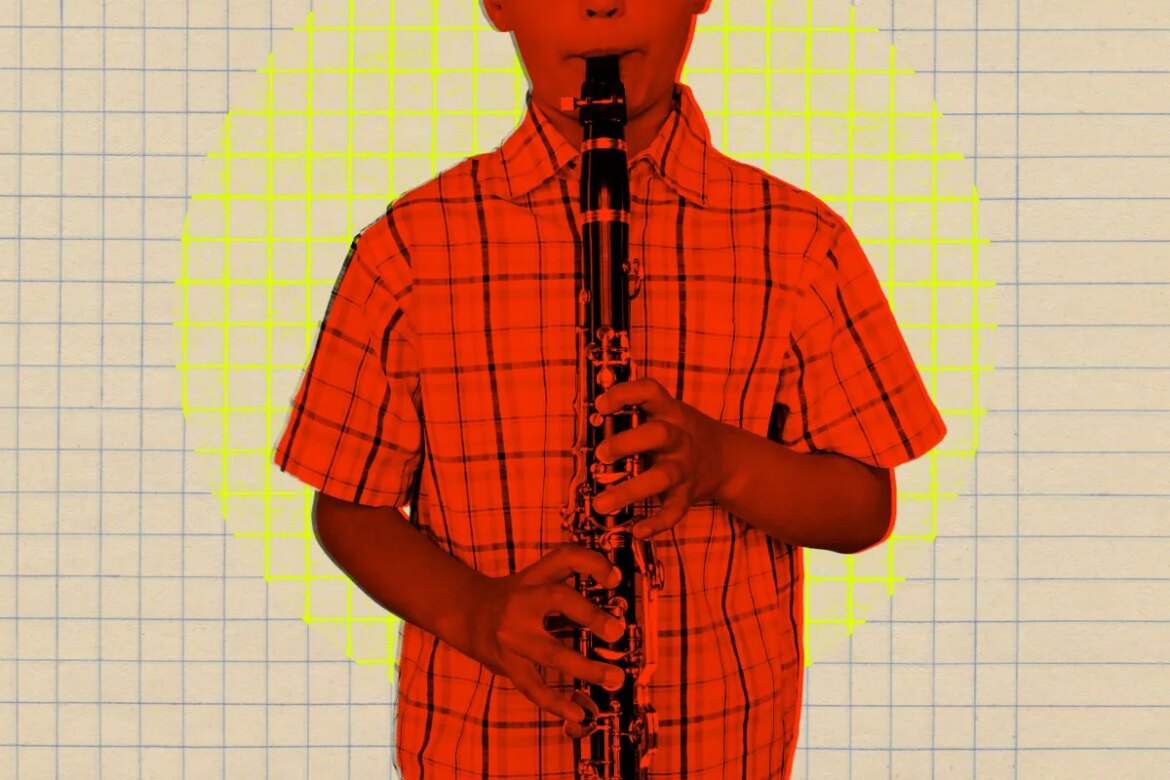

Illustration by Tag Hartman-Simkins/Futurism. Source: Getty Images

Students at Lawton Chiles Middle School in Florida were forced into lockdown last week after an AI monitoring system raised an alert that a student was carrying a gun.

There’s just one issue: the “gun” was actually a band student’s clarinet.

According to local reporting, the whole matter was resolved quickly. When the alert went off, it triggered an automatic “code red,” giving administrators no choice but to react to the AI system’s decision.

Luckily, no one was hurt, and local police soon lifted the lockdown. “The code red was a precaution and the children were never in any danger,” local police wrote in a Facebook post.

In a message to parents, school principal Dr. Melissa Laudani said the district “has multiple layers of school security, including an automated system that detects potential threats. A student was walking in the hallway, holding a musical instrument as if it were a weapon, which triggered Code Red to activate.” (It is not known what exactly constitutes a “Code Red,” as it is not mentioned in the school’s latest parent-student handbook.)

Instead of blaming the faulty AI system for the uproar – without which the fiasco would never have happened in the first place – the school blamed the young clarinet player.

“Although there was no threat to campus, I want to ask you to speak to your student about the dangers of pretending to have a weapon on school premises,” Laudani wrote.

It’s not known what specific system Lawton Chiles has keeping watch, but the fact that it can’t differentiate between a clarinet with 17 keys and a rifle with no keys is worrying.

The Lawton Chiles incident comes on the heels of a similar case in which a 16-year-old in Baltimore was violently detained at gunpoint by at least eight officers because of an AI false alarm. In that case, the school’s AI somehow mistook a small bag of Doritos for a handgun, prompting a heavily armed response from city police.

As with the Lawton Chiles story, Baltimore’s false positive could easily have been prevented if a human had been involved. Instead, both systems seem to have allowed the AI to make the call without a human check.

Omnilert, the company behind the Doritos incident, insisted its AI system “works with the aim of prioritizing safety and awareness through rapid human verification.”

All of this underlines how important it is that these AI school monitoring systems actually work before they are forced into schools – because when it comes to identifying students with guns, false positives can be just as deadly as false negatives.
