Alibaba Cloud has just updated the open-source landscape. Today, the Qwen team released Qwen3.5, the latest generation of their large language model (LLM) family. The most powerful version is Qwen3.5-397B-A17B. This model is a sparse mixture-of-experts (MoE) system. It combines strong reasoning power with high efficiency.

Qwen3.5 is a native vision-language model. It is specifically designed for AI agents. It can see, code, and reason across 201 languages.

Core Architecture: 397B Total, 17B Active

The technical specifications of Qwen3.5-397B-A17B are impressive. The model has 397B total parameters. However, it uses a sparse MoE design. This means that it activates only 17B parameters during a single forward pass.

This 17B active-parameter count is the most important number for developers. It allows the model to deliver the intelligence of a 400B-class model while running at the speed of a much smaller one. The Qwen team reports an 8.6x to 19.0x increase in decoding throughput compared to previous generations. This efficiency addresses the high cost of running AI at scale.
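To make the sparsity concrete, here is a minimal arithmetic sketch that uses only the two parameter counts quoted above. The function and variable names are illustrative and not part of any Qwen tooling.

```python
# Parameter counts as stated in the article, in billions.
TOTAL_PARAMS_B = 397
ACTIVE_PARAMS_B = 17


def active_fraction(total_b: float, active_b: float) -> float:
    """Share of the model's parameters used in a single forward pass."""
    return active_b / total_b


fraction = active_fraction(TOTAL_PARAMS_B, ACTIVE_PARAMS_B)
ratio = TOTAL_PARAMS_B / ACTIVE_PARAMS_B

print(f"Active share per token: {fraction:.1%}")   # 4.3%
print(f"Total-to-active ratio: {ratio:.1f}x")      # 23.4x
```

In other words, roughly one parameter in 23 takes part in computing any given token.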

Efficient Hybrid Architecture: Gated Delta Network

Qwen3.5 does not use a standard transformer design. It uses an ‘Efficient Hybrid Architecture’. Most LLMs rely only on attention mechanisms, which can be slow with long text. Qwen3.5 combines Gated Delta Networks (linear attention) with Mixture of Experts (MoE).

The model has 60 layers. The hidden dimension size is 4,096. These layers follow a specific ‘hidden layout’. The layout groups layers into sets of 4.

- 3 blocks use Gated DeltaNet plus MoE.

- 1 block uses Gated Attention plus MoE.

- This pattern repeats 15 times to reach 60 layers.
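The repeating layout can be written out as a short sketch. The layer counts come from the article; the string labels and the `build_layout` helper are hypothetical names chosen for illustration, not identifiers from the Qwen codebase.

```python
# Layer counts from the article; the labels below are illustrative only.
NUM_LAYERS = 60
GROUP = ["gated_deltanet_moe"] * 3 + ["gated_attention_moe"]


def build_layout(num_layers: int, group: list[str]) -> list[str]:
    """Repeat one group of blocks until the requested depth is reached."""
    if num_layers % len(group) != 0:
        raise ValueError("num_layers must be a multiple of the group size")
    return group * (num_layers // len(group))


layout = build_layout(NUM_LAYERS, GROUP)

print(len(layout))                          # 60
print(layout.count("gated_deltanet_moe"))   # 45
print(layout.count("gated_attention_moe"))  # 15
```

Only every fourth layer, 15 of the 60, uses gated attention. The other 45 use the linear-attention Gated DeltaNet block.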

Technical details include:

- Gated DeltaNet: It uses 64 linear attention heads for values (V) and 16 heads for queries and keys (QK).

- MoE structure: The model has 512 total experts. Each token activates 10 routed experts and 1 shared expert, for 11 active experts per token.

- Vocabulary: The model uses a padded vocabulary of 248,320 tokens.
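The expert counts above can be illustrated with a generic top-k routing sketch. This is an assumption-laden toy: the softmax over the selected logits is a common MoE routing scheme, not a confirmed description of Qwen3.5's router, and the random logits stand in for a real router's output.

```python
import math
import random

NUM_EXPERTS = 512  # total experts, per the article
TOP_K = 10         # routed experts activated per token
NUM_SHARED = 1     # shared expert that is always active


def route_token(router_logits: list[float], top_k: int = TOP_K) -> dict[int, float]:
    """Pick the top_k experts and softmax-normalize their logits into weights.

    ASSUMPTION: generic top-k routing, not Qwen3.5's actual router.
    """
    ranked = sorted(range(len(router_logits)),
                    key=lambda i: router_logits[i], reverse=True)
    chosen = ranked[:top_k]
    peak = max(router_logits[i] for i in chosen)  # subtract max for stability
    exps = {i: math.exp(router_logits[i] - peak) for i in chosen}
    total = sum(exps.values())
    return {i: e / total for i, e in exps.items()}


rng = random.Random(0)
logits = [rng.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]  # stand-in scores
weights = route_token(logits)
active_experts = len(weights) + NUM_SHARED

print(active_experts)  # 11
```

Each token's output would then be a weighted sum over the 10 routed experts plus the shared expert's contribution.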

Native Multimodal Training: Early Fusion

Qwen3.5 is a native vision-language model. Many other models added vision capabilities later. Qwen3.5 used ‘Early Fusion’ training. This means that the model learned from images and text at the same time.

Trillions of multimodal tokens were used in training. This makes Qwen3.5 better at visual reasoning than the earlier Qwen3-VL versions. It is highly capable at ‘agentic’ tasks. For example, it can look at UI screenshots and generate accurate HTML and CSS code. It can also analyze long videos with second-level accuracy.

The model supports the Model Context Protocol (MCP). It also handles complex function calling. These features are important for building agents that control apps or browse the web. On the IFBench test, it scored 76.5. This score beats many proprietary models.

Solving the Memory Wall: 1M Context Length

Long-context processing is a core feature of Qwen3.5. The base model has a native context window of 262,144 (256K) tokens. The hosted Qwen3.5-Plus version goes even further. It supports 1M tokens.

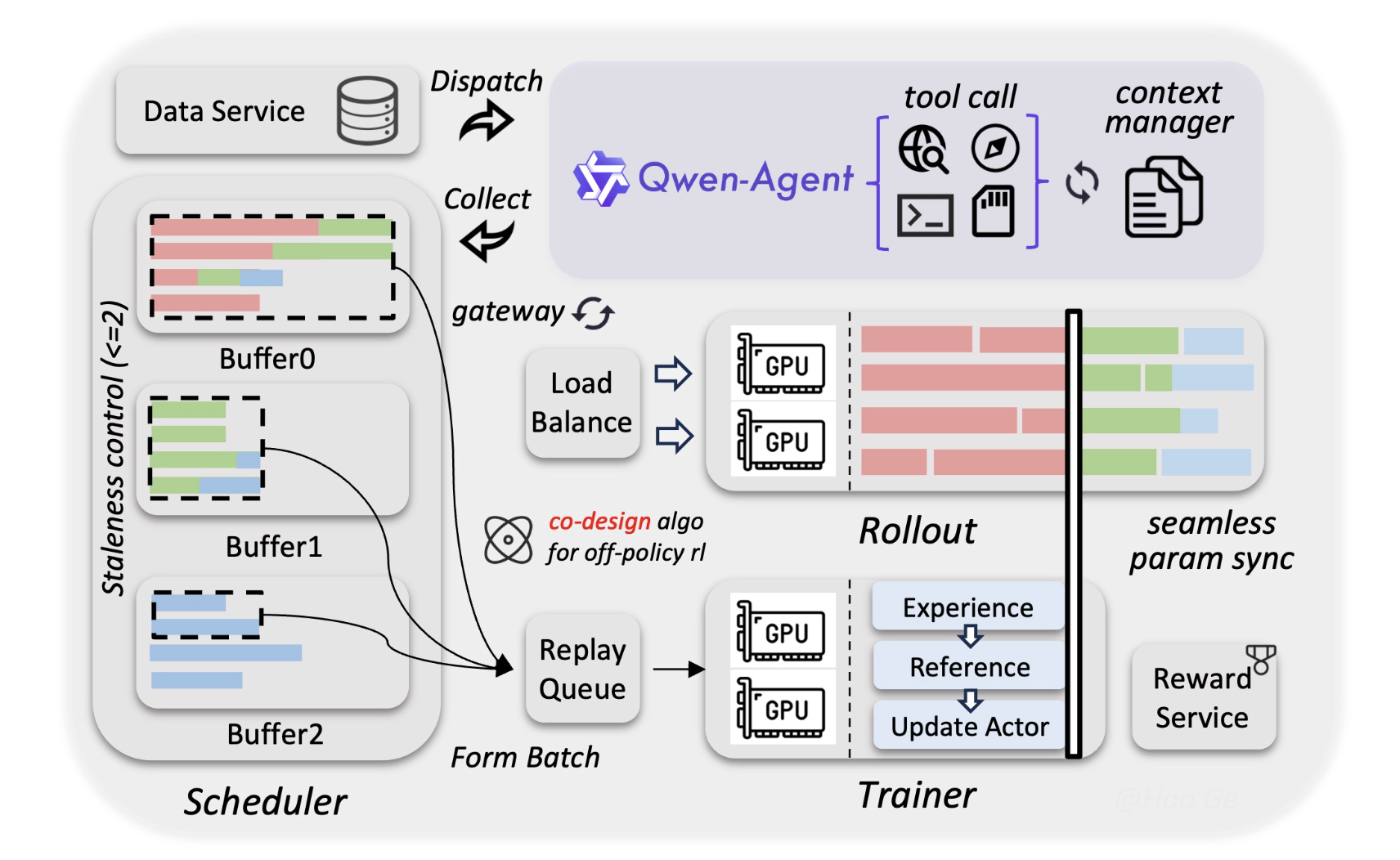

The Alibaba Qwen team used a new asynchronous reinforcement learning (RL) framework for this. This ensures that the model remains accurate even at the end of a 1M-token document. For devs, this means you can feed an entire codebase into one prompt. You don’t always need a complex retrieval-augmented generation (RAG) system.
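Before dropping a whole codebase into one prompt, it helps to estimate whether it fits. The sketch below is a rough budget check under stated assumptions: the 4-characters-per-token ratio is a generic heuristic rather than a figure for the Qwen tokenizer, ‘1M’ is taken as 1,000,000 tokens, and the `reserve` value for the model's output is arbitrary.

```python
NATIVE_CONTEXT = 262_144    # base model window, per the article
HOSTED_CONTEXT = 1_000_000  # hosted Qwen3.5-Plus window, reading "1M" literally
CHARS_PER_TOKEN = 4         # ASSUMPTION: rough heuristic, not a tokenizer fact


def estimate_tokens(texts: list[str], chars_per_token: int = CHARS_PER_TOKEN) -> int:
    """Approximate token count from character count (ceiling division)."""
    total_chars = sum(len(t) for t in texts)
    return -(-total_chars // chars_per_token)


def fits(token_count: int, window: int, reserve: int = 8_192) -> bool:
    """True if the prompt plus space reserved for the reply fits the window."""
    return token_count + reserve <= window


# Stand-in for source files read from disk: 1.5 million characters in total.
files = ["x" * 600_000, "y" * 900_000]
tokens = estimate_tokens(files)

print(tokens)                        # 375000
print(fits(tokens, NATIVE_CONTEXT))  # False
print(fits(tokens, HOSTED_CONTEXT))  # True
```

For a real decision, count tokens with the model's own tokenizer instead of a character heuristic.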

Performance and Benchmarks

The model excels in technical areas. It scored highly on Humanity’s Last Exam (HLE-Verified). This is a difficult benchmark for AI knowledge.

- Coding: It shows parity with top-tier closed-source models.

- Math: The model uses ‘adaptive tool use’. It can write Python code to solve math problems. It then runs the code to verify the answer.

- Languages: It supports 201 different languages and dialects. That’s a big leap from the 119 languages in the previous version.
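The write-then-run loop described in the math item can be sketched as follows. This is a hypothetical harness, not Qwen's actual tool-use mechanism: `claimed_answer` and `generated_code` stand in for model output, and a separate interpreter process is not a security sandbox.

```python
import subprocess
import sys


def run_snippet(code: str, timeout_s: float = 5.0) -> str:
    """Run a Python snippet in a separate interpreter and return its stdout."""
    result = subprocess.run(
        [sys.executable, "-c", code],
        capture_output=True,
        text=True,
        timeout=timeout_s,
        check=True,  # raise if the snippet exits with an error
    )
    return result.stdout.strip()


def verify_answer(claimed: str, code: str) -> bool:
    """Compare the claimed answer with what the generated code prints."""
    return run_snippet(code) == claimed.strip()


# Hypothetical model output: a claimed answer plus code meant to check it.
claimed_answer = "5050"
generated_code = "print(sum(range(1, 101)))"

print(verify_answer(claimed_answer, generated_code))  # True
```

If the check fails, an agent loop would typically feed the mismatch back to the model and ask for a revised solution.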

Key Takeaways

- Hybrid Efficiency (MoE + Gated Delta Network): Qwen3.5 uses a 3:1 ratio of Gated Delta Network (linear attention) blocks to standard gated attention blocks across its 60 layers. This hybrid design delivers an 8.6x to 19.0x increase in decoding throughput compared to previous generations.

- Huge Scale, Small Footprint: Qwen3.5-397B-A17B has 397B total parameters but activates only 17B per token. You get 400B-class intelligence with the inference speed and per-token compute of a much smaller model.

- Native Multimodal Foundation: Unlike ‘bolted-on’ vision models, Qwen3.5 was trained through early fusion on trillions of text and image tokens simultaneously. This makes it a top-tier visual agent, scoring 76.5 on IFBench for following complex instructions in visual contexts.

- 1M Token Context: While the base model supports a native 256K-token context, the hosted Qwen3.5-Plus handles up to 1M tokens. This huge window allows developers to process entire codebases or 2-hour videos without the need for complex RAG pipelines.
