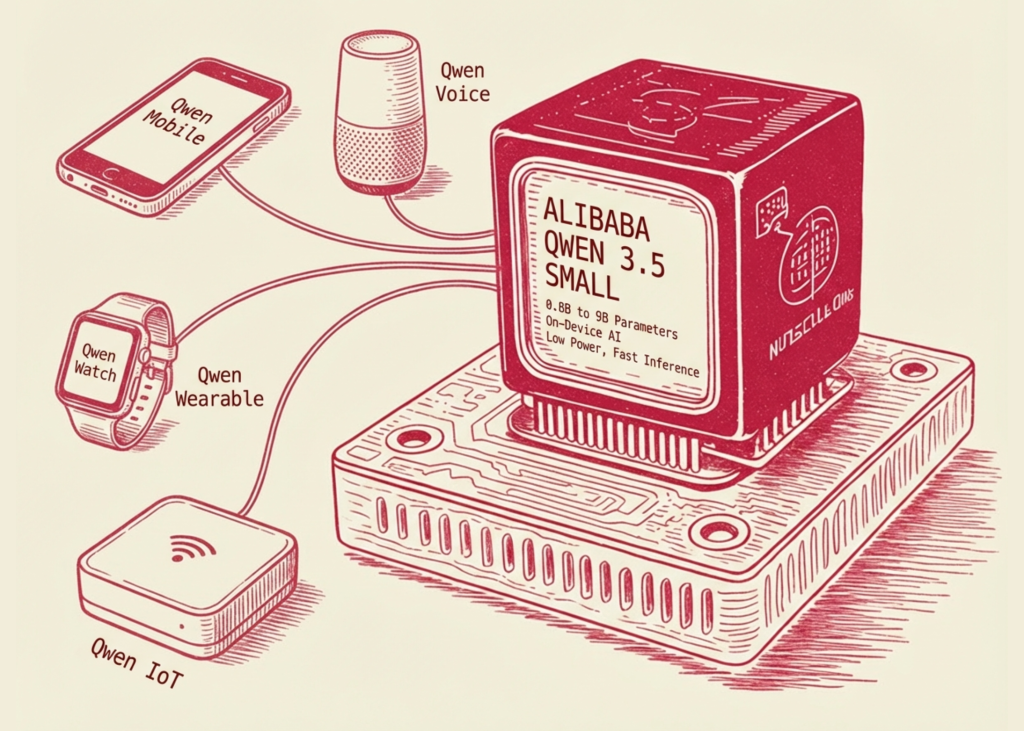

Alibaba’s Qwen team has released the Qwen3.5 Small Model Series, a collection of large language models (LLMs) ranging from 0.8B to 9B parameters. While the industry trend has historically favored increasing parameter counts to achieve ‘frontier’ performance, this release focuses on ‘More Intelligence, Less Compute.’ These models represent a shift toward deploying capable AI on consumer hardware and edge devices without the traditional trade-offs in reasoning or multimodality.

The series is currently available on Hugging Face and ModelScope, and includes both Instruct and Base versions.

Model Hierarchy: Optimization by Scale

The Qwen3.5 small series comes in four sizes, each optimized for specific hardware constraints and latency requirements:

- Qwen3.5-0.8B and Qwen3.5-2B: These models are designed for high-throughput, low-latency applications on edge devices. By optimizing the dense token training process, they offer a small VRAM footprint, making them compatible with mobile chipsets and IoT hardware.

- Qwen3.5-4B: This model serves as a multimodal base for lightweight agents. It bridges the gap between pure text models and complex vision-language models (VLMs), enabling agentic workflows that require visual understanding, such as UI navigation or document analysis, while remaining small enough for local deployment.

- Qwen3.5-9B: The flagship of the small series, the 9B variant focuses on logic and reasoning. It is specifically designed to narrow the performance gap with significantly larger models (e.g., 30B+ parameter variants) through advanced training techniques.
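To make the hardware tiers above concrete, the following is a back-of-the-envelope sketch of weight-only memory for each model size. It assumes the parameter counts implied by the model names and standard storage costs per precision; the helper names (`weight_memory_gb`, `fits_in_budget`) are illustrative, and the estimate ignores the KV cache, activations, and runtime overhead, so real usage will be higher.

```python
# Weight-only memory estimate: parameters x bytes per parameter.
# Ignores KV cache, activations, and runtime overhead (assumption: weights dominate).

# Storage cost per parameter for common precisions.
BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}

# Parameter counts in billions, read off the model names in the article.
MODEL_PARAMS_B = {
    "Qwen3.5-0.8B": 0.8,
    "Qwen3.5-2B": 2.0,
    "Qwen3.5-4B": 4.0,
    "Qwen3.5-9B": 9.0,
}


def weight_memory_gb(params_billion: float, precision: str) -> float:
    """Approximate weight storage in GB (1 GB = 1e9 bytes)."""
    # (params_billion * 1e9 params) * bytes_per_param / 1e9 bytes per GB
    return params_billion * BYTES_PER_PARAM[precision]


def fits_in_budget(params_billion: float, precision: str, budget_gb: float) -> bool:
    """True if the weights alone fit within a (hypothetical) memory budget."""
    return weight_memory_gb(params_billion, precision) <= budget_gb


if __name__ == "__main__":
    for name, size in MODEL_PARAMS_B.items():
        row = ", ".join(
            f"{p}: {weight_memory_gb(size, p):.1f} GB" for p in BYTES_PER_PARAM
        )
        print(f"{name} -> {row}")
```

Under these assumptions, the 9B model needs roughly 18 GB for fp16 weights and about 4.5 GB at 4-bit precision, while the 0.8B model stays under 2 GB even in fp16.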

Native Multimodality vs Visual Adapter

One of the most significant technical shifts in Qwen3.5-4B and above is native multimodal capability. In earlier iterations of small models, multimodality was often achieved through ‘adapters’ or ‘bridges’ that connected a pre-trained vision encoder (such as CLIP) to a language model.

In contrast, Qwen3.5 incorporates multimodality directly into the architecture. This native approach allows the model to process visual and textual tokens within the same latent space from the early stages of training. This results in better spatial reasoning, improved OCR accuracy, and more coherent visually grounded responses compared to adapter-based systems.

Scaled RL: Enhancing Reasoning in Compact Models

The performance of Qwen3.5-9B is largely attributed to its use of Scaled Reinforcement Learning (RL). Unlike standard supervised fine-tuning (SFT), which teaches a model to mimic high-quality text, scaled RL uses reward signals to optimize for correct reasoning paths.
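The contrast between imitation and reward-driven optimization can be illustrated with a toy example. The sketch below is not Qwen's training recipe; it assumes a hypothetical policy that chooses among three candidate "reasoning paths," only one of which earns a reward, and applies the exact policy-gradient update for a softmax policy so the run is deterministic.

```python
import math


def softmax(logits):
    """Convert logits to probabilities (numerically stable)."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def policy_gradient_step(logits, rewards, lr):
    """One ascent step on expected reward J = sum_i p_i * r_i.

    For a softmax policy, dJ/dlogit_i = p_i * (r_i - J).
    """
    probs = softmax(logits)
    expected_reward = sum(p * r for p, r in zip(probs, rewards))
    return [
        theta + lr * p * (r - expected_reward)
        for theta, p, r in zip(logits, probs, rewards)
    ]


def train(rewards, steps=200, lr=1.0):
    """Start from a uniform policy and follow the reward signal."""
    logits = [0.0] * len(rewards)
    for _ in range(steps):
        logits = policy_gradient_step(logits, rewards, lr)
    return softmax(logits)


if __name__ == "__main__":
    # Hypothetical rewards: only the third path reaches the correct answer.
    rewards = [0.0, 0.0, 1.0]
    print([round(p, 3) for p in train(rewards)])
```

No reference text is imitated here: the policy shifts probability toward the rewarded path purely because that path scores higher, which is the basic mechanism the paragraph above describes.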

The benefits of scaled RL in the 9B model include:

- Better instruction following: The model is more likely to adhere to complex, multi-step system prompts.

- Reduction in hallucinations: By reinforcing logical consistency during training, the model exhibits higher reliability in fact retrieval and mathematical reasoning.

- Efficiency in inference: The 9B parameter count allows faster token generation (higher tokens per second) than 70B-class models, while maintaining competitive reasoning scores on benchmarks such as MMLU and GSM8K.
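The speed advantage in the last point can be approximated with a common rule of thumb: single-stream decoding of a dense model is often memory-bandwidth-bound, so every generated token requires reading all weights once. The sketch below assumes fp16 weights, batch size 1, a hypothetical 1000 GB/s of memory bandwidth, and a generic dense 70B model for comparison; the function names are illustrative, and the result is an upper bound rather than a measured figure.

```python
def weight_size_gb(params_billion: float, bytes_per_param: float = 2.0) -> float:
    """Weight storage in GB (fp16 by default: 2 bytes per parameter)."""
    return params_billion * bytes_per_param


def max_decode_tokens_per_s(
    params_billion: float, bandwidth_gb_s: float, bytes_per_param: float = 2.0
) -> float:
    """Upper bound on tokens/s when each token requires one full weight read."""
    return bandwidth_gb_s / weight_size_gb(params_billion, bytes_per_param)


if __name__ == "__main__":
    bandwidth = 1000.0  # GB/s, hypothetical accelerator memory bandwidth
    small = max_decode_tokens_per_s(9.0, bandwidth)
    large = max_decode_tokens_per_s(70.0, bandwidth)
    print(f"9B:  {small:.1f} tokens/s (upper bound)")
    print(f"70B: {large:.1f} tokens/s (upper bound)")
    print(f"ratio: {small / large:.2f}x")
```

Under this model the ratio depends only on the parameter counts (70 / 9, or roughly 7.8x), regardless of the bandwidth value chosen.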

Summary Table: Qwen3.5 Small Series Specifications

| Model size | Primary use case | Key technical characteristics |
| --- | --- | --- |
| 0.8B / 2B | Edge devices / IoT | Low VRAM, high-speed inference |
| 4B | Lightweight agents | Native multimodal integration |
| 9B | Logic and reasoning | Scaled RL to close the gap with larger models |

By focusing on architectural efficiency and advanced training paradigms such as scaled RL and native multimodality, the Qwen3.5 series provides a viable path for developers to build sophisticated AI applications without the overhead of large-scale, cloud-dependent models.

Key Takeaways

- More Intelligence, Less Compute: The series (0.8B to 9B parameters) prioritizes architectural efficiency over raw parameter scale, enabling high-performance AI on consumer-grade hardware and edge devices.

- Native Multimodal Integration (4B Model): Unlike models that use ‘bolted-on’ vision towers, the 4B version features a native architecture where text and visual data are processed in a unified latent space, significantly improving spatial reasoning and OCR accuracy.

- Frontier-Level Reasoning via Scaled RL: The 9B model leverages Scaled Reinforcement Learning, effectively closing the performance gap with models 5x to 10x its size by optimizing logical reasoning paths rather than just next-token prediction.

- Optimized for Edge and IoT: The 0.8B and 2B models are designed for ultra-low latency and a minimal VRAM footprint, making them ideal for local-first applications, mobile deployments, and privacy-sensitive environments.
