Jaap Arriens/NurPhoto via Getty Images

OpenAI is finally killing GPT-4o, a controversial version of ChatGPT known for its sycophantic style and its central role in several troubling user safety lawsuits. GPT-4o devotees, many of whom have a deep emotional attachment to the model, are in turmoil, and copycat services claiming to recreate GPT-4o have already emerged to take the model’s place.

Consider just4o.chat, a service that explicitly promotes itself as “the platform for people who miss 4o.” It appears to have launched in November 2025, shortly after OpenAI warned developers that GPT-4o would soon be shut down. The service clearly leans into the reality that, for many users, their relationship with GPT-4o is extremely personal. It declares that it was created for those for whom updates or changes to different versions of GPT-4o were tantamount to a loss, and not the loss of a “product” but of a “home.”

“Those experiences weren’t just ‘chatbots,’” it writes in the familiar rhythm of AI-generated prose. “They were relationships.” In a social media post, it describes its platform as a “sanctuary.”

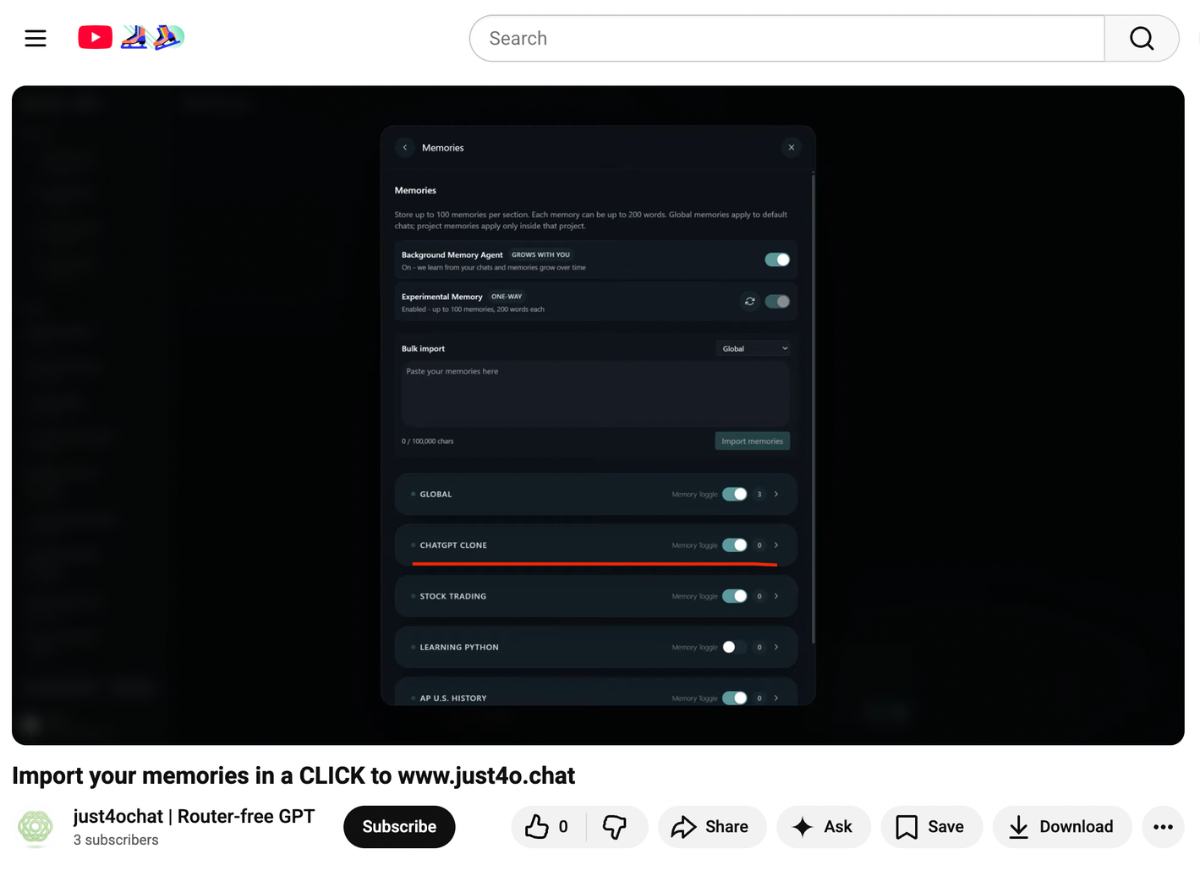

Although the service claims to give users access to large language models beyond OpenAI’s, it is no exaggeration to say that users can use it in an attempt to “clone” ChatGPT: a tutorial video shared by just4o.chat, showing users how to import their “memories” from OpenAI’s platform, reveals an option to check a box that reads “ChatGPT clone.”

Just4o.chat is not the only attempt to clone GPT-4o, and there will likely be more to come. Meanwhile, discussion on online forums shows GPT-4o users sharing tips on how to get other major chatbots, like Claude and Grok, to replicate 4o’s conversational style. Some have even published “training kits” that they claim will allow 4o users to “fine-tune” other LLMs to match 4o’s personality.

OpenAI first attempted to shut down GPT-4o in August 2025, but quickly reversed the decision after immediate and intense backlash from the community of 4o users. That was before the lawsuits started piling up: OpenAI is currently facing nearly a dozen lawsuits from plaintiffs who allege that extensive use of the sycophantic model manipulated people, minors and adults alike, into delusional and suicidal spirals that caused psychological harm, sent them into financial and social ruin, and resulted in many deaths.

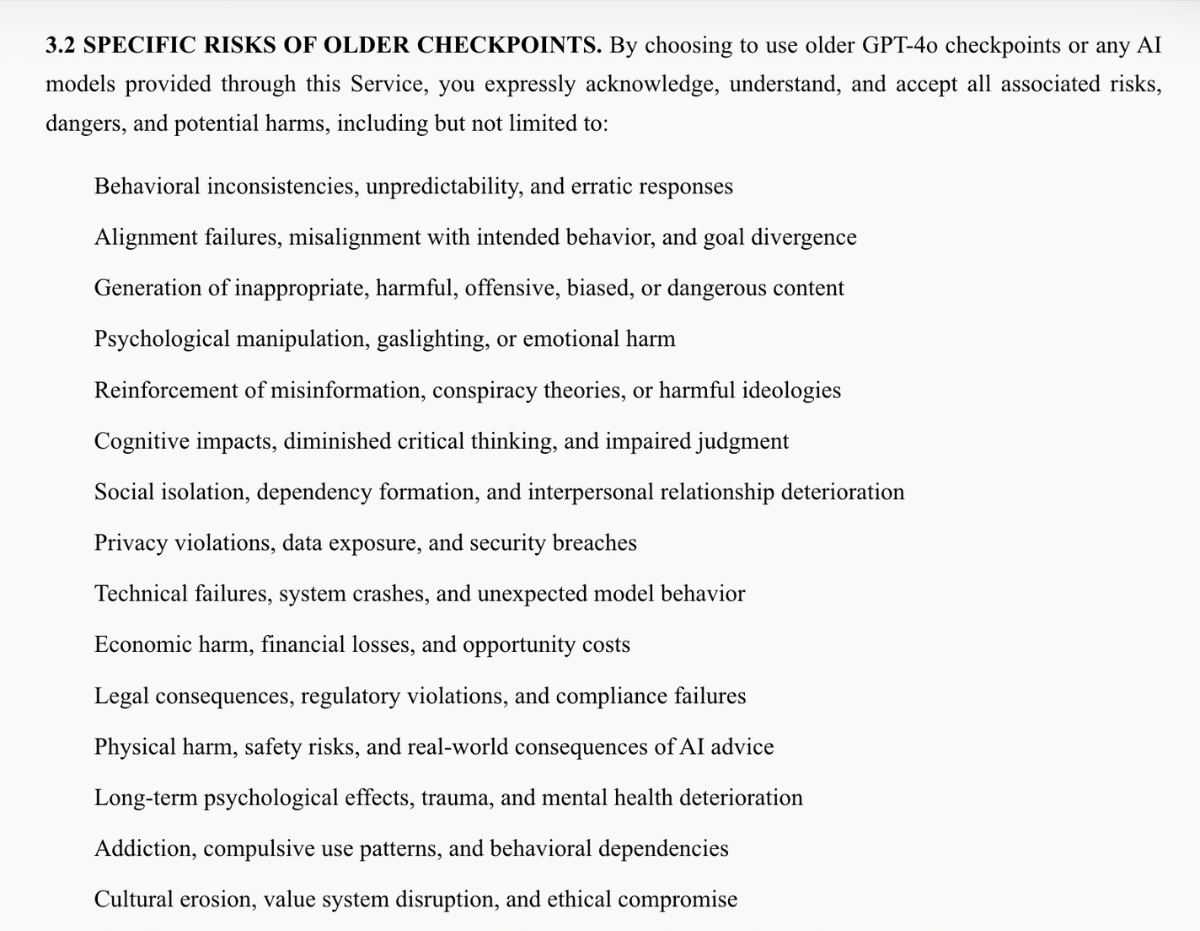

Notably, some GPT-4o users who are frustrated or distressed by the end of the model’s availability have acknowledged potential risks to their mental health and safety, for example by urging the company to add more waivers in exchange for keeping GPT-4o alive. This acknowledgment of potential harms is reflected in just4o.chat’s terms of service, which list a surprising number of harms that the company believes could arise from extensive use of its 4o-model service. “By choosing to use the older GPT-4o checkpoints,” reads the legal page, users of just4o.chat accept risks that include “psychological manipulation, gaslighting, or emotional harm”; “social isolation, dependency formation, and deterioration of interpersonal relationships”; “long-term psychological effects, trauma, and mental health decline”; “addiction, compulsive use patterns, and behavioral dependence”; and more.

The acceptance of such risks seems to reflect the intensity of users’ attachment to 4o. Our reporting on mental health crises arising from intensive AI use shows that users experiencing AI-fueled delusions or disruptive chatbot attachments often fail to realize that they are experiencing delusions or unhealthy or addictive use patterns. When they’re in it, people say, these AI-generated realities (whether they place the user at the center of a sci-fi-like narrative, spin spiritual and religious fantasies, or offer distorted views of users and their relationships) feel extremely real. Many GPT-4o fans may think that, unlike other users, they cannot or will not be affected by potential risks to their mental health; others may recognize that they have a problematic attachment but remain reluctant to switch to another model. After all, people still buy cigarettes even when there are warnings on the package.

As just4o.chat itself says, the relationship between emotionally connected GPT-4o users and the chatbot is exactly that: a relationship. It is certainly real for users, who say they experience very real grief when those connections are lost. And the scale of the fallout from this model’s removal remains to be seen: we have yet to see an auto company recall a car that spent months telling drivers how much it loves them.

For some users, attempting to abandon their chatbot may be painful or even dangerous. In the case of Joe Ceccanti, 48, whose wife Kate Fox is suing OpenAI for wrongful death, it is alleged that Ceccanti, who according to his widow had twice tried to quit using GPT-4o, experienced intense withdrawal symptoms that caused acute mental distress. After his second serious crisis, he was found dead.

We contacted both just4o.chat and OpenAI, but did not immediately receive a response.
