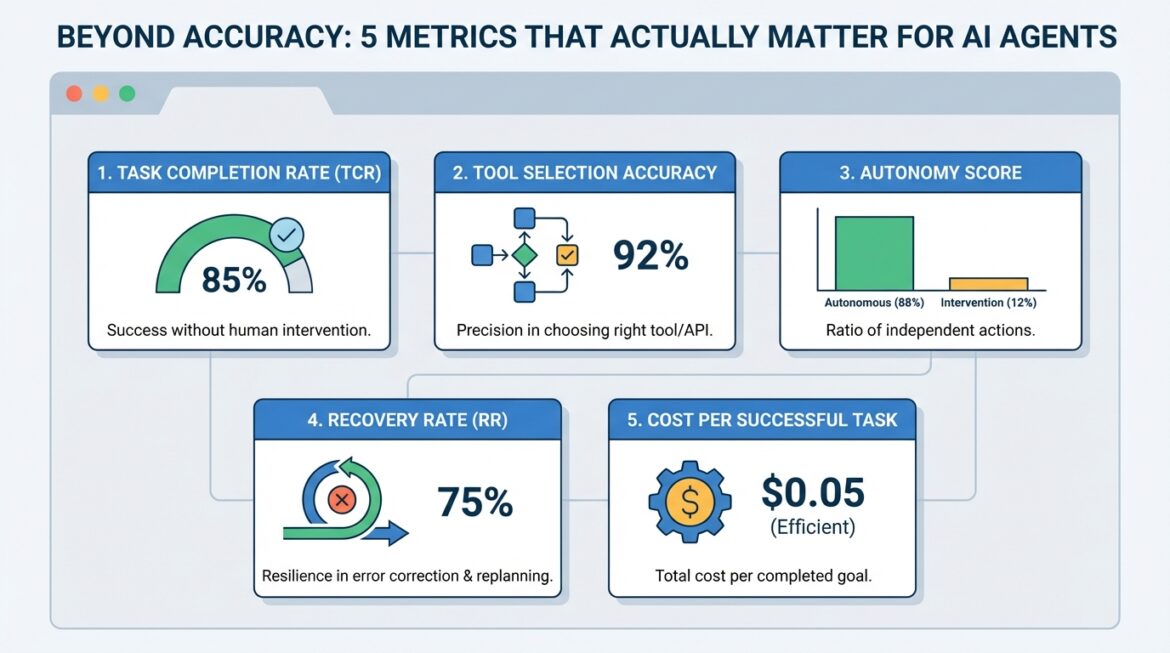

Beyond accuracy: 5 metrics that really matter for AI agents

Image by editor

Introduction

AI agents, autonomous systems powered by agentic AI, have reshaped the current landscape of AI systems and deployment. As these systems become more capable, we also need specialized evaluation metrics that measure not only accuracy, but also procedural logic, reliability, and efficiency. While accuracy is one of the most common metrics in static large language model evaluation, agent evaluation often requires additional measures focused on action quality, tool use, and trajectory efficiency, especially when building modern AI agents.

This article lists five such metrics, along with further reading to explore each in depth.

1. Task Completion Rate (TCR)

Also known as success rate, this metric measures the percentage of assigned tasks that are completed successfully without human supervision or intervention. Think of it as a measure of the agent’s ability to connect reasoning to the correct end result. For example, a customer support bot that resolves a refund issue on its own would count toward this metric. Be careful: treating this metric as a binary measurement (success vs. failure) may mask borderline cases or tasks that technically succeeded but took an inordinate amount of time to complete.
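As a rough illustration, the rate can be computed from a log of evaluated tasks. The sketch below assumes a hypothetical `TaskResult` record and a hypothetical time threshold; neither comes from a specific evaluation framework. It also flags successes that took unusually long, since a purely binary count would hide them.

```python
from dataclasses import dataclass


@dataclass
class TaskResult:
    """One evaluated task (hypothetical record layout, for illustration only)."""
    task_id: str
    succeeded: bool         # did the agent reach the correct end result?
    human_intervened: bool  # did a person have to step in?
    duration_s: float       # wall-clock time spent on the task


def task_completion_rate(results: list[TaskResult]) -> float:
    """Share of tasks completed successfully with no human intervention."""
    if not results:
        return 0.0
    completed = sum(1 for r in results if r.succeeded and not r.human_intervened)
    return completed / len(results)


def slow_successes(results: list[TaskResult], max_duration_s: float) -> list[str]:
    """IDs of tasks that count as successes but exceeded the time threshold."""
    return [
        r.task_id
        for r in results
        if r.succeeded and not r.human_intervened and r.duration_s > max_duration_s
    ]


# Made-up sample data: four refund tasks handled by a support agent.
results = [
    TaskResult("refund-001", succeeded=True, human_intervened=False, duration_s=42.0),
    TaskResult("refund-002", succeeded=True, human_intervened=True, duration_s=65.0),
    TaskResult("refund-003", succeeded=False, human_intervened=False, duration_s=30.0),
    TaskResult("refund-004", succeeded=True, human_intervened=False, duration_s=900.0),
]

print(task_completion_rate(results))   # 0.5
print(slow_successes(results, 300.0))  # ['refund-004']
```

Reporting the slow successes next to the headline rate is one way to keep borderline cases visible.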

Read more in this paper.

2. Tool Selection Accuracy

This metric measures how accurately the agent selects and executes the correct function, external tool, or API at a given step. In other words, it captures how consistently the agent makes sound selection decisions rather than acting randomly. Action selection becomes especially important in high-stakes domains such as finance. To use this metric correctly, you usually need a “ground truth” or “gold standard” path for comparison, which can be difficult to define in some contexts.
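One simple way to score this is a position-by-position comparison between the agent's calls and a gold-standard path. The sketch below is an assumption about how such a comparison might look: the function name and the example call names are hypothetical, and real evaluations may also compare call arguments or allow alternative valid paths.

```python
def tool_selection_accuracy(predicted: list[str], gold: list[str]) -> float:
    """Fraction of steps where the agent called the same tool as the gold path.

    Assumption: steps are compared by position, and missing or extra calls
    count as misses (the denominator is the longer of the two sequences).
    """
    total_steps = max(len(predicted), len(gold))
    if total_steps == 0:
        return 0.0
    matches = sum(1 for p, g in zip(predicted, gold) if p == g)
    return matches / total_steps


# Hypothetical gold path and agent trajectory for a finance task.
gold_path = ["lookup_account", "check_balance", "transfer_funds"]
agent_path = ["lookup_account", "transfer_funds", "transfer_funds"]

# Steps 1 and 3 match, step 2 does not: 2 of 3 steps are correct.
print(tool_selection_accuracy(agent_path, gold_path))
```

Strict positional matching is deliberately harsh. If several call orders are acceptable for a task, the gold path needs to encode that, which is part of why defining it can be difficult.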

Read more in this overview.

3. Autonomy Score

Also known as the human intervention rate, this is the ratio of actions taken autonomously by the agent to those that require some form of human intervention (clarification, correction, approval, etc.). It is closely related to the return on investment (ROI) from the use of AI agents. However, keep in mind that in critical areas like healthcare, lower autonomy is not necessarily a bad thing. In fact, very high autonomy may be a sign that safety guardrails are missing, so this metric should be interpreted in the context of the application.
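A minimal sketch of the calculation follows. It makes two assumptions that the text above does not dictate: each logged action carries a hypothetical label saying how it was resolved, and the score is normalized as the share of autonomous actions among all actions (so it stays between 0 and 1 and avoids dividing by zero when no interventions occur). Under that normalization, the human intervention rate is simply the complement.

```python
from collections import Counter

# Hypothetical labels for how each action was resolved.
AUTONOMOUS = "autonomous"
INTERVENTION_TYPES = {"clarification", "correction", "approval"}


def autonomy_score(action_log: list[str]) -> float:
    """Share of actions the agent completed without human involvement."""
    if not action_log:
        return 0.0
    return Counter(action_log)[AUTONOMOUS] / len(action_log)


def intervention_breakdown(action_log: list[str]) -> dict[str, int]:
    """Count of each intervention type, to show why humans stepped in."""
    counts = Counter(a for a in action_log if a in INTERVENTION_TYPES)
    return dict(counts)


# Made-up log: 8 autonomous actions, 1 approval, 1 correction.
log = [AUTONOMOUS] * 8 + ["approval", "correction"]

print(autonomy_score(log))          # 0.8
print(1 - autonomy_score(log))      # human intervention rate (complement)
print(intervention_breakdown(log))  # {'approval': 1, 'correction': 1}
```

The breakdown matters for interpretation: approvals required by policy in a regulated setting are a different signal from corrections of agent mistakes.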

Read more in this Anthropic research post.

4. Recovery Rate (RR)

How often does an agent identify an error and effectively replan to correct it? This is the basic idea behind recovery rate: a measure of an agent’s resilience to unexpected outcomes, especially when it frequently interacts with tools and external systems outside its direct control. The metric requires careful interpretation, as a very high recovery rate can sometimes reveal underlying instability if the agent is correcting itself almost all the time.
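The idea can be sketched as recovered errors divided by errors encountered. The `Episode` record below is a hypothetical layout, and pairing the rate with an errors-per-step figure is a suggestion rather than an established convention: it helps separate an agent that rarely fails from one that fails constantly but patches itself up.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Episode:
    """One agent run (hypothetical record layout, for illustration only)."""
    steps: int      # total actions taken in the run
    errors: int     # errors the agent ran into (failed calls, bad outputs)
    recovered: int  # errors it detected and corrected by replanning


def recovery_rate(episodes: list[Episode]) -> Optional[float]:
    """Recovered errors divided by errors encountered; None if no errors."""
    total_errors = sum(e.errors for e in episodes)
    if total_errors == 0:
        return None  # undefined: there was nothing to recover from
    return sum(e.recovered for e in episodes) / total_errors


def errors_per_step(episodes: list[Episode]) -> float:
    """How often errors occur at all, as context for the recovery rate."""
    total_steps = sum(e.steps for e in episodes)
    if total_steps == 0:
        return 0.0
    return sum(e.errors for e in episodes) / total_steps


# Made-up sample: 6 errors across 30 steps, 5 of them recovered.
episodes = [Episode(10, 2, 2), Episode(8, 0, 0), Episode(12, 4, 3)]

print(recovery_rate(episodes))    # 5 recovered out of 6 errors
print(errors_per_step(episodes))  # 6 errors over 30 steps
```

A high recovery rate combined with a high errors-per-step value is the instability pattern described above.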

Read more in this paper.

5. Cost per Successful Task

This metric is also described using names like token efficiency and cost-per-goal, but in essence, it measures the total computational or economic cost invested to successfully complete a task. This is an important metric to look at when planning to scale an agent-based system to handle higher volumes of tasks without cost surprises.
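As a back-of-the-envelope sketch, the cost can be estimated from token usage. Everything specific here is an assumption made for illustration: the `Run` record, the per-token prices (placeholders, not any provider's real pricing), and the choice to charge the cost of failed runs to the successful ones, which reflects what the system actually spends per delivered result.

```python
from dataclasses import dataclass
from typing import Optional

# Placeholder prices in dollars per 1,000 tokens (hypothetical values).
PRICE_PER_1K_INPUT = 0.001
PRICE_PER_1K_OUTPUT = 0.002


@dataclass
class Run:
    """One task attempt (hypothetical record layout, for illustration only)."""
    input_tokens: int
    output_tokens: int
    succeeded: bool


def run_cost(run: Run) -> float:
    """Token cost of a single run under the placeholder prices."""
    return (
        run.input_tokens / 1000 * PRICE_PER_1K_INPUT
        + run.output_tokens / 1000 * PRICE_PER_1K_OUTPUT
    )


def cost_per_successful_task(runs: list[Run]) -> Optional[float]:
    """Total spend (including failed runs) divided by the number of successes."""
    successes = sum(1 for r in runs if r.succeeded)
    if successes == 0:
        return None  # undefined: money was spent but nothing succeeded
    return sum(run_cost(r) for r in runs) / successes


# Made-up sample: two successful runs and one more expensive failure.
runs = [
    Run(input_tokens=1000, output_tokens=500, succeeded=True),
    Run(input_tokens=2000, output_tokens=1000, succeeded=False),
    Run(input_tokens=1000, output_tokens=500, succeeded=True),
]

print(cost_per_successful_task(runs))
```

In this sample each success costs about 0.002 on its own, but the figure roughly doubles once the failed run is included, which is the kind of surprise this metric is meant to surface before scaling.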

Read more in this guide.