Author(s): Kushal Banda

Originally published on Towards AI.

Standard RAG pipelines have a fatal flaw: they retrieve once and hope for the best. When the retrieved documents do not match the user’s intent, the system generates confident nonsense. No feedback loop. No self-correction. No second chance.

Agentic RAG changes that. Instead of blindly generating answers from whatever documents are returned, an agent first evaluates relevance. If the retrieved content doesn’t cut it, the system rewrites the query and tries again. This creates a self-healing retrieval pipeline that handles edge cases gracefully.

This article walks you through building a production-grade agentic RAG system using LangGraph for orchestration and Redis as a vector store. We’ll cover the architecture, decision logic, and state machine wiring that make it all work.

The problem with “retrieve and pray”

Picture this: Your knowledge base includes a detailed document titled “Parameter-Efficient Training Methods for Large Language Models”. A user asks, “What is the best way to improve an LLM?”

There is some semantic similarity, but it is not strong enough. Your retriever instead pulls back chunks that are only tangentially relevant, such as passages about model architecture. The LLM, with no way of knowing that the context is wrong, produces a plausible-sounding but incorrect answer.

The user loses trust. Your RAG system looks broken.

Traditional RAG has no mechanism to detect this failure mode. It treats retrieval as a one-shot operation: query in, documents out, answer generated. Done.

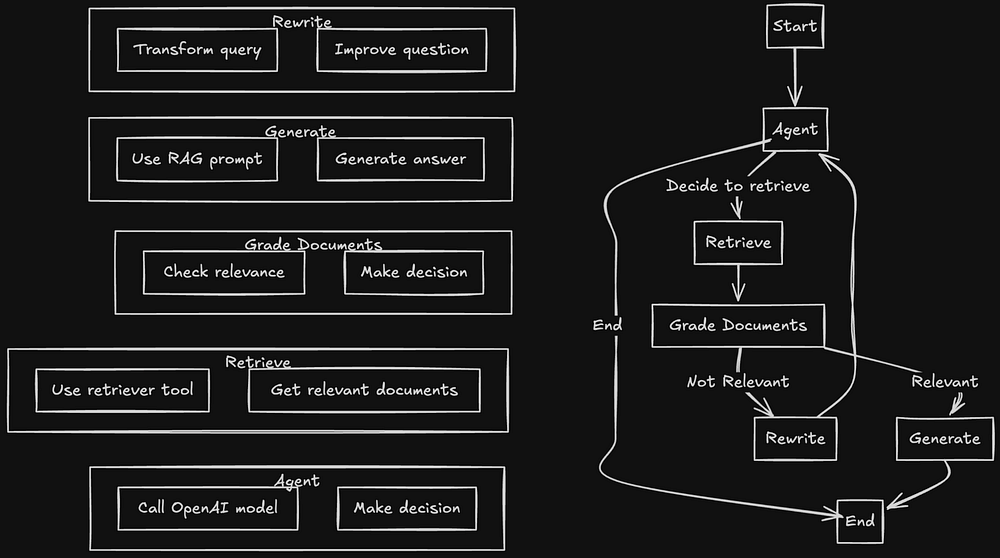

Agentic RAG introduces checkpoints. An agent decides whether to retrieve at all. A grading step evaluates whether the retrieved documents are relevant. A rewrite step reformulates failed queries. The system keeps looping until it finds relevant context or its retry budget is exhausted.

Architectural flow

The system breaks down into six distinct components, each with a single responsibility:

Configuration layer: Handles environment variables and API client setup. Redis connection string, OpenAI key, model name: all centralized in one place.

Retriever setup: Downloads the source documents (in this case, Lilian Weng’s blog posts on agents), splits them into chunks, embeds them with OpenAI’s embedding model, and stores everything in Redis through RedisVectorStore. The retriever is then wrapped as a tool that the agent can call.

Agent node: Receives the user’s question and makes the first decision: should I call the retriever tool, or can I answer directly? If the question requires external knowledge, the agent invokes retrieval.

Grade edge: Evaluates whether the retrieved documents are relevant to the original question. This is the critical checkpoint. Relevant documents pass through to generation. Irrelevant documents trigger a rewrite.

Rewrite node: Transforms the original question into a better search query. Perhaps the user’s wording was too colloquial, or key terms were missing. The rewriter produces a reformulated query and sends it back to the agent for another retrieval attempt.

Generate node: Takes the relevant documents and produces the final answer. It only runs once the grading step confirms that the context is appropriate.

Decision flow

Here’s how a query moves through the system:

       User Question
             ↓
           Agent ←─────────────────────────────┐
             ↓                                 │
    (Calls retriever tool)                     │
             ↓                                 │
     Retrieve documents                        │
             ↓                                 │
      Grade documents                          │
             ↓                                 │
    ┌─────────────────┐                        │
    │    Relevant?    │                        │
    └────────┬────────┘                        │
             │                                 │
       Yes   │   No                            │
        │    │                                 │
        ↓    └────→ Rewrite query ─────────────┘
     Generate
        ↓
      Answer

The feedback loop from “rewrite” back to “agent” is what makes the system agentic. It does not fail silently; it adapts and tries again.

Project structure
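The loop in the diagram can be sketched in plain Python before any framework is involved. This is a minimal illustration, not the article's implementation: the function name `answer_with_retries`, the `max_rewrites` parameter, and the toy stand-ins for retrieval, grading, rewriting, and generation are all assumptions made for the example.

```python
from typing import Callable, List, Optional

def answer_with_retries(
    question: str,
    retrieve: Callable[[str], List[str]],
    is_relevant: Callable[[str, List[str]], bool],
    rewrite: Callable[[str], str],
    generate: Callable[[str, List[str]], str],
    max_rewrites: int = 2,  # hypothetical retry budget
) -> Optional[str]:
    """Retrieve, grade, then either generate or rewrite the query and retry."""
    query = question
    for attempt in range(max_rewrites + 1):
        docs = retrieve(query)
        # Grade against the ORIGINAL question, not the rewritten query.
        if is_relevant(question, docs):
            return generate(question, docs)
        if attempt < max_rewrites:
            query = rewrite(query)
    return None  # Budget exhausted; the caller decides on a fallback.

# Toy stand-ins so the loop runs without an LLM or Redis.
CORPUS = {"fine-tuning": ["LoRA adapts a small set of weights."]}

def toy_retrieve(query: str) -> List[str]:
    return [doc for topic, docs in CORPUS.items() if topic in query for doc in docs]

def toy_is_relevant(question: str, docs: List[str]) -> bool:
    return bool(docs)

def toy_rewrite(query: str) -> str:
    return query + " fine-tuning"

def toy_generate(question: str, docs: List[str]) -> str:
    return f"Answer grounded in {len(docs)} document(s)"

result = answer_with_retries(
    "How do I improve an LLM?", toy_retrieve, toy_is_relevant, toy_rewrite, toy_generate
)
print(result)  # Answer grounded in 1 document(s)
```

The first retrieval finds nothing, the rewrite adds the missing term, and the second attempt succeeds. Returning `None` when the budget runs out keeps the failure explicit instead of generating from bad context.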

The codebase follows a clear separation of concerns:

    src/
    ├── config/
    │   ├── settings.py    # Environment variables
    │   └── openai.py      # Model names and API clients
    ├── retriever.py       # Document ingestion and Redis vector store
    ├── agents/
    │   ├── nodes.py       # Agent, rewrite, and generate functions
    │   ├── edges.py       # Document grading logic
    │   └── graph.py       # LangGraph state machine
    └── main.py            # Entry point

Each file has one job. Configuration lives in config/, agent logic resides in agents/, and the retriever handles all vector store operations. This simplifies testing and debugging.

Configuration: centralizing secrets and clients

The configuration layer serves two purposes: loading environment variables and providing consistent API clients across the codebase.

settings.py loads the Redis connection string, the OpenAI API key, and the index name from environment variables. All configuration lives here, not scattered across files.

openai.py creates the embedding model and the LLM client instance. When you need to switch from gpt-4o-mini to a different model, you change one file. When you need to adjust the embedding dimensions, you change one file. No hunting through the codebase.

This pattern matters more than it looks. Production systems evolve. Models are deprecated. API keys rotate. Centralizing the configuration means that these changes happen in one place.
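A settings module along these lines might look like the sketch below. The environment variable names (`REDIS_URL`, `OPENAI_API_KEY`, `INDEX_NAME`, `CHAT_MODEL`), the default index name, and the `load_settings` helper are assumptions for illustration; only the gpt-4o-mini default comes from the text above.

```python
import os
from dataclasses import dataclass
from typing import Mapping, Optional

@dataclass(frozen=True)
class Settings:
    redis_url: str
    openai_api_key: str
    index_name: str
    chat_model: str

def load_settings(env: Optional[Mapping[str, str]] = None) -> Settings:
    """Read all configuration in one place and fail fast if a secret is missing."""
    env = os.environ if env is None else env
    required = ("REDIS_URL", "OPENAI_API_KEY")  # hypothetical variable names
    missing = [name for name in required if not env.get(name)]
    if missing:
        raise RuntimeError(f"Missing required environment variables: {', '.join(missing)}")
    return Settings(
        redis_url=env["REDIS_URL"],
        openai_api_key=env["OPENAI_API_KEY"],
        index_name=env.get("INDEX_NAME", "agentic-rag"),  # assumed default
        chat_model=env.get("CHAT_MODEL", "gpt-4o-mini"),
    )

# Passing a mapping instead of os.environ keeps the loader easy to test.
settings = load_settings({"REDIS_URL": "redis://localhost:6379", "OPENAI_API_KEY": "sk-test"})
print(settings.chat_model)  # gpt-4o-mini
```

Making the dataclass frozen means no other module can quietly mutate configuration at runtime, so swapping a model stays a one-line change.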

Retriever: building a knowledge base with Redis

The retriever handles the ingestion pipeline: fetching documents, splitting them into chunks, generating embeddings, and storing everything in Redis for fast similarity search.

The sources are Lilian Weng’s blog posts on agents and prompt engineering. They are loaded through WebBaseLoader, split into manageable chunks using RecursiveCharacterTextSplitter, and embedded with OpenAI’s embedding model.

The vectors are stored in Redis via RedisVectorStore. The retriever is then wrapped as a LangChain tool using create_retriever_tool. This wrapping is important: it lets the agent call retrieval as a tool, which means the agent can decide whether to retrieve at all.

Why Redis? Speed and simplicity. Redis handles vector similarity search without the operational overhead of a dedicated vector database. For systems that are already running Redis, it adds RAG capabilities without new infrastructure.
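To make the split, embed, and search steps concrete, here is a standard-library stand-in. It is deliberately not the LangChain or Redis API: `split_text` is a plain fixed-size splitter (simpler than RecursiveCharacterTextSplitter), `embed` is a toy word-count vector rather than an OpenAI embedding, and `top_k` is a brute-force cosine ranking rather than a Redis vector query. All names are hypothetical.

```python
import math
from collections import Counter
from typing import List

def split_text(text: str, chunk_size: int = 200, overlap: int = 50) -> List[str]:
    """Fixed-size character chunks; consecutive chunks share `overlap` characters."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be >= 0 and smaller than chunk_size")
    if not text:
        return []
    step = chunk_size - overlap
    stop = max(len(text) - overlap, 1)  # exclusive bound on chunk start positions
    return [text[i:i + chunk_size] for i in range(0, stop, step)]

def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts (a real system calls an embedding model)."""
    return Counter(text.lower().split())

def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def top_k(query: str, chunks: List[str], k: int = 2) -> List[str]:
    """Rank chunks by cosine similarity to the query and keep the best k."""
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]

chunks = split_text("abcdefghij", chunk_size=4, overlap=2)
print(chunks)  # ['abcd', 'cdef', 'efgh', 'ghij']

best = top_k("which store holds vectors", ["redis stores vectors", "agents call tools"], k=1)
print(best)  # ['redis stores vectors']
```

The overlap keeps a sentence that straddles a chunk boundary retrievable from at least one chunk, which is the same reason the real splitter is usually configured with one.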

Agent Nodes: Decision Makers

Three functions in nodes.py handle the core logic:

Agent function: Receives the current state (including the user’s query and any message history) and decides what to do next. It has access to tools, including the retriever. If the question requires external knowledge, the agent calls the retriever tool. If not, it answers directly.

Rewrite function: Takes a question that failed retrieval grading and rephrases it. The rewriter prompts the LLM to generate a better search query, one that is more likely to pull back relevant documents. The reformulated query is sent back to the agent for a second attempt.

Generate function: Produces the final answer. It receives the original question and the retrieved documents (now confirmed relevant by the grading step) and generates a response grounded in that context.

Every function is stateless. State flows through the graph, not through function internals. This makes it easier to test and debug the system.

Edge Logic: Grading Document Relevance

The grade_documents function in edges.py is the checkpoint that makes this system agentic.

After retrieval, this function evaluates each document against the original query. Is this document relevant? Does it contain information that will help answer the question?

The grading logic uses an LLM call with structured output. The prompt asks the model to evaluate relevance and return a binary decision: relevant or not relevant.

If the documents pass grading, the function returns "generate", routing the flow to answer generation. If the documents fail, it returns "rewrite", triggering query reformulation.

This evaluation step catches the failure modes that plague standard RAG systems. Instead of generating from irrelevant context, the system gets another chance to find better documents.
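The routing decision can be sketched as a small pure function. This is an illustrative stand-in, not the repository's grade_documents: the grader is injected as a callable that returns "yes" or "no" (in the real system that would be an LLM call), `keyword_grader` is a toy replacement for it, and the policy of routing to "generate" when at least one document passes is an assumption.

```python
from typing import Callable, List

Grader = Callable[[str, str], str]  # (question, document) -> "yes" or "no"

def parse_binary_score(raw: str) -> bool:
    """Treat anything other than a clear 'yes' as not relevant."""
    return raw.strip().lower() == "yes"

def grade_documents(question: str, documents: List[str], grader: Grader) -> str:
    """Return the name of the next node: 'generate' or 'rewrite'."""
    relevant = [doc for doc in documents if parse_binary_score(grader(question, doc))]
    # Assumed policy: one relevant document is enough to attempt an answer.
    return "generate" if relevant else "rewrite"

def keyword_grader(question: str, document: str) -> str:
    """Toy grader: 'yes' if the question and document share any word."""
    shared = set(question.lower().split()) & set(document.lower().split())
    return "yes" if shared else "no"

print(grade_documents("what is lora", ["lora is a fine-tuning method"], keyword_grader))  # generate
print(grade_documents("what is lora", ["transformers use attention"], keyword_grader))    # rewrite
```

Parsing the score strictly means a malformed grader response falls through to "rewrite", which costs an extra retrieval but never lets ungraded context reach generation.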

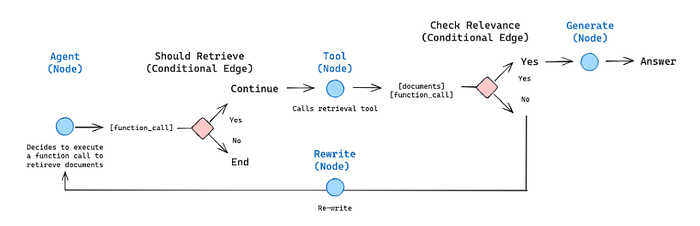

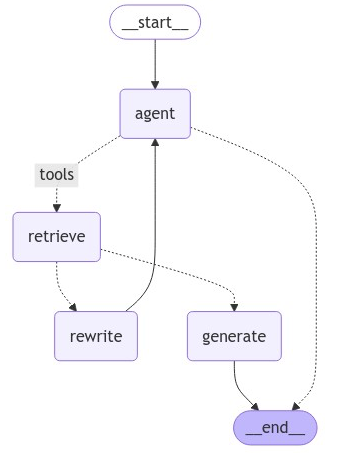

Graph Wiring: LangGraph State Machine

graph.py connects everything together using LangGraph’s state machine primitives.

The graph defines nodes (agent, retrieve, generate, rewrite) and edges (connections between them, including conditional routing based on grading results).

The wiring looks like this:

- Start → Agent: Every query starts at the agent node

- Agent → Retrieve: If the agent calls the retriever tool, the flow moves to retrieval

- Retrieve → Grade: After retrieval, documents are graded

- Grade → Generate (if relevant): Relevant documents flow through to generation

- Grade → Rewrite (if not relevant): Irrelevant documents trigger a rewrite

- Rewrite → Agent: The reformulated query goes back to the agent

- Generate → End: The answer is returned

LangGraph handles state management. Each node receives the current state and returns updates. The graph engine routes messages based on the conditional edge logic.
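The wiring above can be mimicked with a dictionary of nodes and a dictionary of edges. The sketch below is a plain-Python stand-in and not LangGraph's API: `run_graph`, the `END` marker, the `max_steps` guard, and the toy node functions are all hypothetical. It only illustrates the contract described here, where each node takes the state, returns an update, and a conditional edge picks the next node.

```python
from typing import Callable, Dict, Iterator, Tuple, Union

State = Dict[str, object]
Node = Callable[[State], State]
Edge = Union[str, Callable[[State], str]]  # fixed target or conditional router
END = "__end__"

def run_graph(nodes: Dict[str, Node], edges: Dict[str, Edge], start: str,
              state: State, max_steps: int = 20) -> Iterator[Tuple[str, State]]:
    """Run nodes until END, yielding (node_name, update) after each step."""
    state = dict(state)  # work on a copy of the caller's state
    current, steps = start, 0
    while current != END:
        if steps >= max_steps:
            raise RuntimeError("step limit reached; possible endless rewrite loop")
        update = nodes[current](state)
        state.update(update)
        yield current, update
        target = edges[current]
        current = target(state) if callable(target) else target
        steps += 1

# Toy nodes: retrieval only succeeds once the query mentions "fine-tuning".
nodes: Dict[str, Node] = {
    "agent": lambda s: {"tool_call": True},
    "retrieve": lambda s: {"documents": ["LoRA is a fine-tuning method"]
                           if "fine-tuning" in str(s["query"]) else []},
    "rewrite": lambda s: {"query": str(s["query"]) + " fine-tuning"},
    "generate": lambda s: {"answer": "Based on retrieved context"},
}
edges: Dict[str, Edge] = {
    "agent": lambda s: "retrieve" if s["tool_call"] else END,
    "retrieve": lambda s: "generate" if s["documents"] else "rewrite",  # grading edge
    "rewrite": "agent",
    "generate": END,
}

trace = [name for name, _ in run_graph(nodes, edges, "agent", {"query": "improve an LLM"})]
print(trace)  # ['agent', 'retrieve', 'rewrite', 'agent', 'retrieve', 'generate']
```

Because the runner is a generator, a caller can print each step as it happens, which is the same idea as streaming node outputs to the console. The step limit plays the role of a retry budget.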

Runtime: what happens when you run main.py

The entry point builds the graph, takes the user’s query, and streams the results.

build_graph() constructs the LangGraph state machine and initializes the retriever tool. This happens once at startup.

When a question comes in, the flow goes like this:

- The agent receives the question and decides to call retrieval

- Documents come back from Redis

- The grading step evaluates relevance

- If relevant, generation produces the answer

- If not relevant, rewrite reformulates the query and the loop continues

The main.py script streams node outputs to the console, so you can watch the decision-making in real time. You see when retrieval occurs, when grading passes or fails, and when rewriting kicks in.

Why does this architecture matter?

Three properties make agentic RAG superior to standard RAG:

Self-correction: The system detects and corrects bad retrieval. No silent failures. No confident wrong answers from irrelevant context.

Transparency: The state machine makes decision points explicit. You can log every routing decision. You can audit why the system chose to rewrite. Debugging becomes straightforward.

Modularity: Each component has a single responsibility. Swap Redis for Pinecone by replacing the retriever. Swap OpenAI for Anthropic by changing the configuration. The architecture doesn’t care.

When to use this pattern

Agentic RAG makes sense when:

- Your queries vary in phrasing and your users don’t write like your documents

- You need to explain why the system retrieved what it did

- You are willing to trade latency for accuracy (rewrites add LLM calls)

- A wrong answer costs you more than a slow answer

It is overkill when:

- Your queries are predictable and consistent

- Latency requirements are strict and cannot tolerate retries

- Your retrieval quality is already high enough

Wrapping up

Standard RAG treats retrieval as a black box: query goes in, documents come out, hope they’re relevant. Agentic RAG opens that box and adds checkpoints.

The combination of LangGraph and Redis gives you a production-ready foundation. LangGraph handles state machine complexity. Redis handles fast vector search. The grading and rewriting logic handles the edge cases that break simple systems.

The code for this implementation is available in the GitHub repo. Clone it, run it, and adapt it to your use case.

Your RAG system doesn’t have to fail silently. Give it the ability to try again.
