Image by editor

# Introduction

The rise of large language models (LLMs) like GPT-4, Llama, and Claude has changed the world of artificial intelligence. These models can write code, answer questions, and summarize documents with remarkable efficiency. For data scientists, this new era is exciting, but it also presents a unique challenge: the performance of these powerful models is fundamentally tied to the quality of the data that powers them.

While most of the public discussion focuses on the models themselves, their neural networks and the mathematics of attention, the overlooked hero of the LLM era is data engineering. The old rules of data management are not being replaced; they are being upgraded.

In this article, we’ll look at how the role of data is changing, the critical pipelines needed to support both training and inference, and new architectures, such as RAG, that are defining how we build applications. If you are a beginner data scientist looking to understand where your work fits into this new paradigm, this article is for you.

# Moving from BI to AI-ready data

Traditionally, data engineering focused primarily on business intelligence (BI). The goal was to move data from operational databases, such as transaction records, into a data warehouse. This data was highly structured, clean, and organized into rows and columns to answer questions like, “What were the sales last quarter?”

The LLM era demands a broader approach. We now need to support artificial intelligence (AI). This includes dealing with unstructured data such as text in PDFs, transcripts of customer calls, and code in GitHub repositories. The goal is no longer just to collect this data, but to transform it so that a model can understand it and reason about it.

This shift requires a new kind of data pipeline that handles different data types and prepares them for three different stages of the LLM lifecycle:

- Pre-training and fine-tuning: Teaching the model, or giving it expertise for a specific task.

- Inference and Reasoning: Helping the model access new information when asked questions.

- Evaluation and Observability: Ensuring that the model operates accurately, safely, and without bias.

Let’s analyze the data engineering challenges at each of these stages.

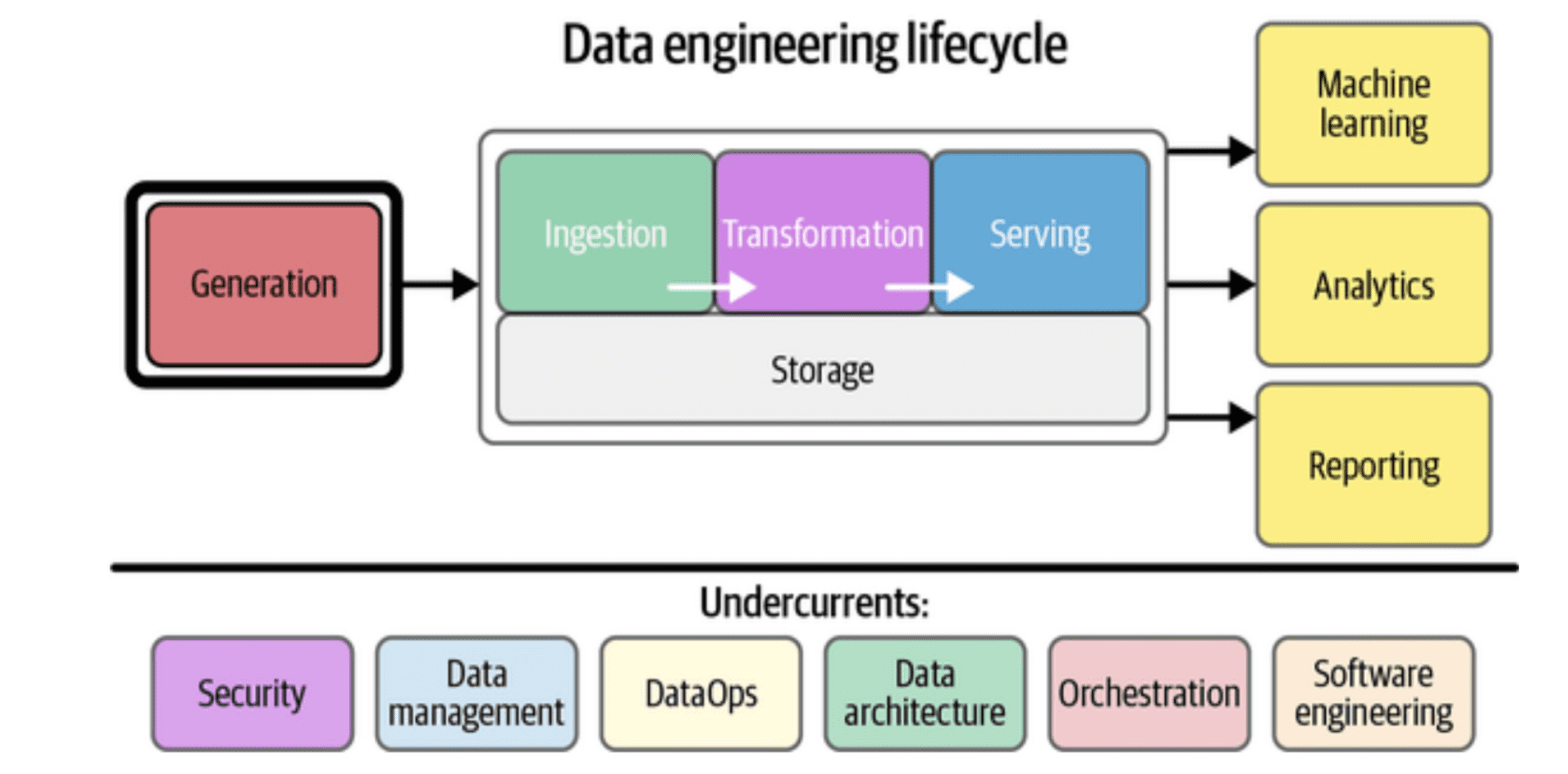

Figure 1: Data Engineering Lifecycle

# Step 1: Engineering Data for LLM Training

Before a model can be helpful, it must be trained. This phase is data engineering on a large scale. The goal is to assemble a high-quality dataset of text that represents a significant portion of the world’s knowledge. Let’s take a look at the pillars of training data.

// Understanding the three pillars of training data

When creating datasets for pre-training or fine-tuning an LLM, data engineers should pay attention to three important aspects:

- Volume: LLMs learn by statistical pattern recognition. To grasp nuance, grammar, and logic, they need to be exposed to trillions of tokens (pieces of words). This means consuming petabytes of data from sources such as Common Crawl, GitHub, scientific papers, and web archives. Volumes this large require distributed processing frameworks such as Apache Spark to handle the data load.

- Variety: A model trained only on legal documents will be poor at writing poetry. A diverse dataset is important for generalization. Data engineers must build pipelines that pull from thousands of different domains to create a balanced dataset.

- Quality: This is the most important factor, and it is where the real work begins. The Internet is full of noise, spam, boilerplate text (like navigation menus), and false information. A now famous paper from Databricks, “The Secret Sauce Behind the 1,000x LLM Training Speedup”, highlights that data quality is usually more important than model architecture. Two practices matter most here:

  - Pipelines must remove low-quality material. This includes deduplication (removing nearly identical sentences or paragraphs), filtering out text that is not in the target language, and removing unsafe or harmful content.

  - You should know where your data comes from. If a model behaves unexpectedly, you need to trace its behavior back to the source data. This is the practice of data lineage, and it becomes an important compliance and debugging tool.
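As a rough illustration of the cleaning and lineage ideas above, here is a minimal sketch in plain Python. The `(source, text)` record layout, the `min_words` threshold, and the function names are assumptions made for this example, not part of any particular framework. It only catches duplicates that become identical after normalization; catching true near-duplicates at scale usually calls for fuzzier techniques such as MinHash, typically run on a distributed engine.

```python
import hashlib
import re


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivial variants hash identically."""
    return re.sub(r"\s+", " ", text.lower()).strip()


def clean_corpus(records, min_words=5):
    """Drop short and duplicate documents, keeping each survivor's source.

    `records` is an iterable of (source, text) pairs; both the pair layout
    and the `min_words` threshold are assumptions made for this sketch.
    """
    seen = set()
    kept = []
    for source, text in records:
        norm = normalize(text)
        if len(norm.split()) < min_words:
            continue  # too short to be useful (e.g. a navigation menu)
        digest = hashlib.sha256(norm.encode("utf-8")).hexdigest()
        if digest in seen:
            continue  # a copy of a document we already kept
        seen.add(digest)
        # Carrying the source along is the simplest form of lineage.
        kept.append({"source": source, "text": text, "sha256": digest})
    return kept


raw = [
    ("site-a/page1", "Data quality matters more than most people expect."),
    ("site-b/page9", "Data  quality matters more than most people   expect."),
    ("site-c/nav", "Home | About"),
]
cleaned = clean_corpus(raw)
print([r["source"] for r in cleaned])
```

Only the first record survives: the second is a whitespace variant of it, and the third is too short. Because every kept record still carries its `source`, a surprising model behavior can later be traced back to the documents that may have caused it.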

For a data scientist, understanding that a model is only as good as its training data is the first step toward building reliable systems.

# Step 2: Adopting the RAG Architecture

Although training a foundation model is a big task, most companies do not need to build one from scratch. Instead, they take an existing model and connect it to their private data. This is where Retrieval-Augmented Generation (RAG) has become the dominant architecture.

RAG addresses a major limitation of LLMs: their knowledge stops at the training cutoff. If you ask a model trained in 2022 about a news event from 2023, it will fail. RAG gives the model a way to “see” up-to-date information at query time.

A typical LLM data pipeline for RAG looks like this:

- You have internal documents (PDFs, Confluence pages, Slack archives). A data engineer creates a pipeline to ingest these documents.

- LLMs have a limited “context window” (the amount of text they can process at once). You can’t hand a 500-page manual to a model in one go. Therefore, the pipeline should intelligently break documents into small, digestible chunks (for example, a few paragraphs each).

- Each chunk is passed through another model (an embedding model) that converts the text into a numerical vector, a long list of numbers that represents the meaning of the text.

- These vectors are then stored in a special database designed for fast similarity search: a vector database.
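The chunking step is the easiest one to picture in code. The sketch below splits text into fixed-size word windows that overlap slightly, so a sentence cut at a boundary still appears whole in one of the chunks. The function name and the `chunk_size` and `overlap` defaults are illustrative assumptions; real pipelines often split on paragraphs, headings, or token counts instead.

```python
def chunk_words(text: str, chunk_size: int = 200, overlap: int = 40):
    """Split text into word windows of `chunk_size`, sharing `overlap` words."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be non-negative and smaller than chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # the last window already reached the end of the text
    return chunks


# A ten-word stand-in for a long manual.
manual = " ".join(f"w{i}" for i in range(10))
for chunk in chunk_words(manual, chunk_size=4, overlap=1):
    print(chunk)
```

With these toy settings, each chunk repeats the last word of the previous one. Larger overlaps preserve more context across boundaries at the cost of storing and embedding more text.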

When a user asks a question, the process is reversed:

- The user’s query is converted into a vector using the same embedding model.

- The vector database performs a similarity search, finding the chunks of text that are semantically most similar to the user’s question.

- Those relevant chunks are sent to the LLM along with the original question, with an instruction such as, “Answer the question based on the following context only.”
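The query-time flow can be sketched end to end with a toy setup. Everything here is a simplified stand-in: `embed` is a bag-of-words counter rather than a real embedding model (so it matches shared words, not meaning), and `TinyVectorStore` is a hypothetical in-memory class rather than an actual vector database. The shape of the flow is the point: embed the query, rank stored chunks by cosine similarity, and assemble the prompt.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class TinyVectorStore:
    """In-memory list of (chunk, vector) pairs searched by brute force."""

    def __init__(self):
        self.items = []

    def add(self, chunk: str) -> None:
        self.items.append((chunk, embed(chunk)))

    def search(self, query: str, k: int = 2):
        q = embed(query)  # same embedding function as at ingestion time
        ranked = sorted(self.items, key=lambda item: cosine(q, item[1]), reverse=True)
        return [chunk for chunk, _ in ranked[:k]]


def build_prompt(question: str, context_chunks) -> str:
    context = "\n".join(f"- {c}" for c in context_chunks)
    return (
        "Answer the question based on the following context only.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )


store = TinyVectorStore()
store.add("Refunds are issued within 14 days of a return request.")
store.add("The office is closed on public holidays.")
store.add("Support tickets are answered within one business day.")

question = "How many days does a refund take?"
top = store.search(question, k=1)
print(build_prompt(question, top))
```

The refund chunk ranks first only because it shares the words "days" and "a" with the question; note that "refund" and "Refunds" do not match at all. That gap is exactly what a learned embedding model is meant to close.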

// Tackling the data engineering challenge

The success of RAG depends almost entirely on the quality of the ingestion pipeline. If the chunking strategy is poor, the context will be fragmented. If the embedding model does not suit your data, retrieval will surface irrelevant information. Data engineers are responsible for tuning these parameters and building reliable pipelines that make RAG applications work.

# Step 3: Building a Modern Data Stack for LLMs

The process for building these pipelines is changing. As a data scientist, you will be faced with a new “stack” of technologies designed to handle vector search and LLM orchestration.

- Vector databases: These are the core of the RAG stack. Unlike traditional databases that search for exact keyword matches, vector databases search based on meaning.

- Orchestration frameworks: These tools help you tie together prompts, LLM calls, and data retrieval into one cohesive application.

- Data Processing: Good old fashioned ETL (Extract, Transform, Load) is still important. Tools like Spark are used to clean and prepare the huge datasets required for fine-tuning.

The key point is that the modern data stack is not a replacement for the old data stack; it is an extension. You still need your data warehouse (like Snowflake or BigQuery) for structured analysis, but now you need a vector store alongside it to power AI features.

Figure 2: Modern Data Stack for LLMs

# Step 4: Evaluation and Observability

The final piece of the puzzle is evaluation. In traditional machine learning, you can measure model performance with simple metrics like accuracy (was this an image of a cat or a dog?). With generative AI, evaluation is more nuanced. If the model writes a paragraph, is it accurate? Is it clear? Is it safe?

Data engineering plays a role here through LLM observability. We need to track the data flowing through our systems to debug failures.

Consider a RAG application that returns poor answers. Why did it fail?

- Was the relevant document missing from the vector database? (data ingestion failure)

- Was the document in the database, but the search failed to retrieve it? (retrieval failure)

- Was the document retrieved, but the LLM ignored it and made up an answer? (generation failure)

To answer these questions, data engineers create pipelines that log entire interactions. They store the user query, the retrieved context, and the final LLM response. By analyzing this data, teams can identify bottlenecks, filter out poor retrievals, and build datasets to improve models for better performance in the future. This closes the loop, turning your application into a continuous learning system.
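A minimal sketch of such logging, and of the triage it enables, might look like the following. The JSON-lines schema, the function names, and the `answer_used_context` flag (which would come from a human reviewer or a separate evaluation step) are all assumptions for illustration.

```python
import io
import json
import time


def log_interaction(log_file, query, retrieved, response):
    """Append one JSON line per interaction (an assumed, minimal log schema)."""
    record = {
        "ts": time.time(),
        "query": query,
        "retrieved": retrieved,  # ids of the chunks returned by the search
        "response": response,
    }
    log_file.write(json.dumps(record) + "\n")
    return record


def diagnose(record, expected_doc, indexed_docs, answer_used_context):
    """Map a poor answer onto the three failure modes listed above."""
    if expected_doc not in indexed_docs:
        return "ingestion failure"
    if expected_doc not in record["retrieved"]:
        return "retrieval failure"
    if not answer_used_context:
        return "generation failure"
    return "ok"


# An in-memory buffer stands in for a real log file or table.
buffer = io.StringIO()
rec = log_interaction(buffer, "What is the refund window?", ["doc-7"], "30 days")
print(diagnose(rec, "doc-2", {"doc-2", "doc-7"}, answer_used_context=True))
```

Here the document that should have answered the question (`doc-2`) was indexed but never retrieved, so the sketch reports a retrieval failure. Aggregating these labels over many logged interactions shows which stage of the pipeline deserves attention first.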

# Concluding Remarks

We are entering a phase where AI is becoming the primary interface through which we interact with data. For data scientists, this represents a huge opportunity. The skills required to clean, structure and manage data are more valuable than ever.

However, the context has changed. You must now treat unstructured data with the same care that you previously applied to structured tables. You must understand how training data shapes model behavior. You should learn to design LLM data pipelines that support retrieval-augmented generation.

Data engineering is the foundation on which reliable, accurate, and secure AI systems are built. By mastering these concepts, you are not only keeping up with the trend; you are building the infrastructure for the future.

Shittu Olumide is a software engineer and technical writer who is passionate about leveraging cutting-edge technologies to craft compelling narratives, with a keen eye for detail and the ability to simplify complex concepts. You can also find Shittu on Twitter.