Transformers use attention and Mixture-of-Experts (MoE) to scale computation, but they still lack a native way to perform knowledge lookup. They recompute the same local patterns over and over, wasting depth and FLOPs. DeepSeek’s new Engram module targets exactly this gap by adding a conditional memory axis that works alongside MoE rather than replacing it.

At a high level, Engram modernizes classic n-gram embeddings and turns them into a scalable, O(1) lookup memory that plugs directly into the Transformer backbone. The result is a parametric memory that stores static patterns such as common phrases and entities, while the backbone focuses on harder reasoning and long-range dependencies.

How does Engram fit into a DeepSeek Transformer?

The proposed approach uses the DeepSeek V3 tokenizer with a 128k vocabulary and pre-trains on 262B tokens. The backbone is a 30-block Transformer with hidden size 2560. Each block uses Multi-head Latent Attention with 32 heads and connects to the feed-forward network through manifold-constrained hyper-connections with expansion rate 4. Optimization uses the Muon optimizer.

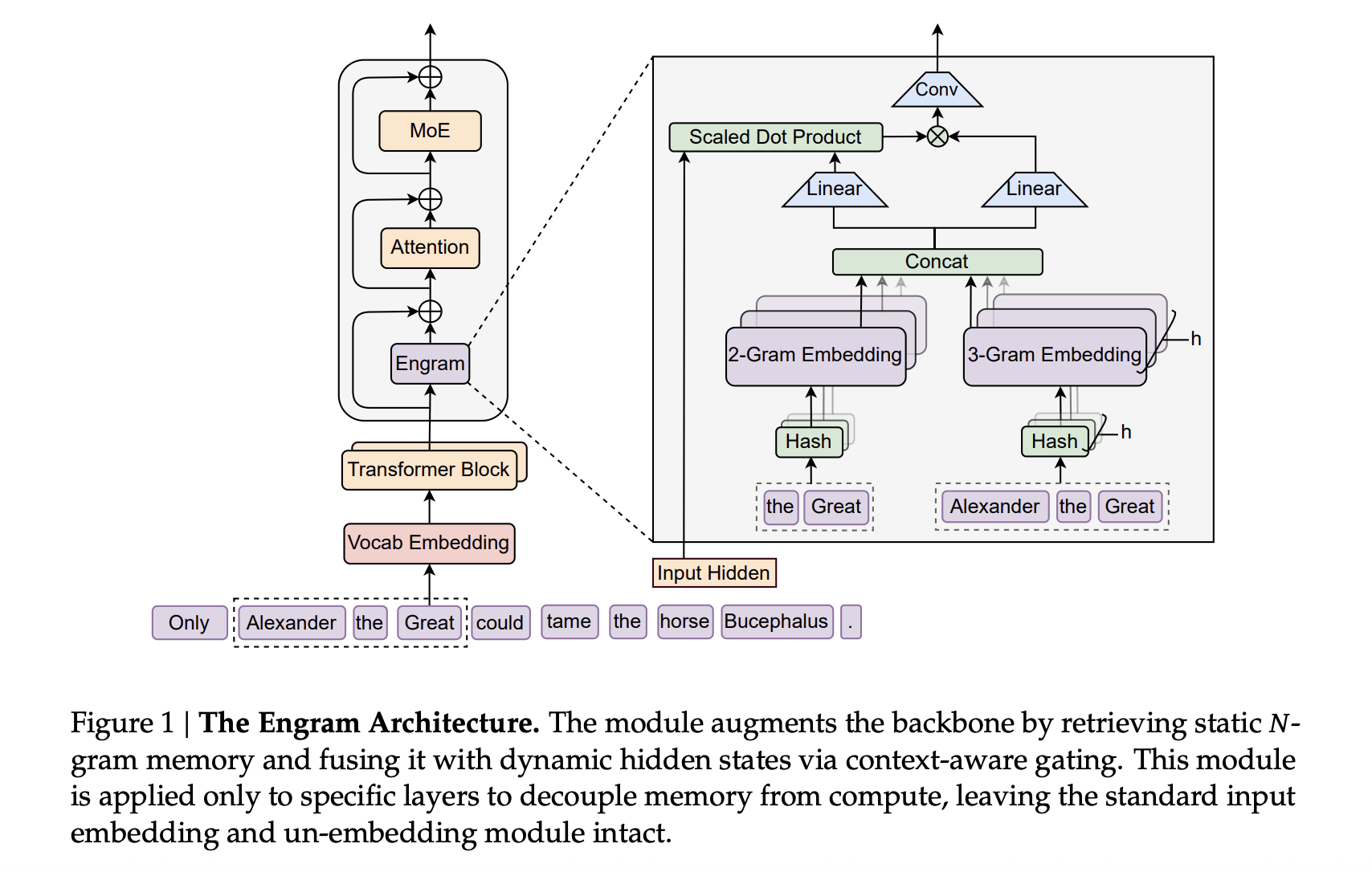

Engram attaches to this backbone as a sparse embedding module. It is built from hashed n-gram tables, with multi-head hashing into prime-sized buckets, a shallow convolution over the n-gram context, and a context-aware gating scalar in the range 0 to 1 that controls how much of the retrieved embedding is injected into each branch.
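The lookup-and-gate path described above can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions, not DeepSeek's implementation: the table sizes, the multipliers, the polynomial hash, and the sigmoid dot-product gate are all hypothetical choices, and the shallow convolution is omitted.

```python
import math
import random

# Hypothetical sizes, chosen only to keep the sketch small.
PRIMES = [101, 103, 107, 109]      # one prime-sized table per hash head
MULTIPLIERS = [31, 37, 41, 43]     # arbitrary per-head hash multipliers (assumption)
HEAD_DIM = 4                       # embedding width contributed by each head

rng = random.Random(0)
# TABLES[head][bucket] is a small embedding row; in a real model these are learned.
TABLES = [
    [[rng.gauss(0.0, 0.02) for _ in range(HEAD_DIM)] for _ in range(p)]
    for p in PRIMES
]


def hash_ngram(ngram, head):
    """Polynomial hash of a token-id tuple, reduced modulo this head's prime."""
    h = 0
    for tok in ngram:
        h = (h * MULTIPLIERS[head] + tok + 1) % PRIMES[head]
    return h


def lookup(ngram):
    """Constant-time retrieval: one row per head, concatenated into one vector."""
    out = []
    for head in range(len(PRIMES)):
        out.extend(TABLES[head][hash_ngram(ngram, head)])
    return out


def gate(hidden, memory):
    """Context-aware scalar in (0, 1): sigmoid of a scaled dot product (assumed form)."""
    score = sum(h * m for h, m in zip(hidden, memory)) / math.sqrt(len(hidden))
    return 1.0 / (1.0 + math.exp(-score))


def inject(hidden, token_ids, n=2):
    """Add the gated memory vector for the trailing n-gram to the hidden state."""
    memory = lookup(tuple(token_ids[-n:]))
    g = gate(hidden, memory)
    return [h + g * m for h, m in zip(hidden, memory)], g


hidden_state = [0.1] * (len(PRIMES) * HEAD_DIM)
updated_state, gate_value = inject(hidden_state, [5, 17, 42])
```

Using several heads with different primes means two n-grams that collide in one table are unlikely to collide in all of them, which is the usual motivation for multi-head hashing.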

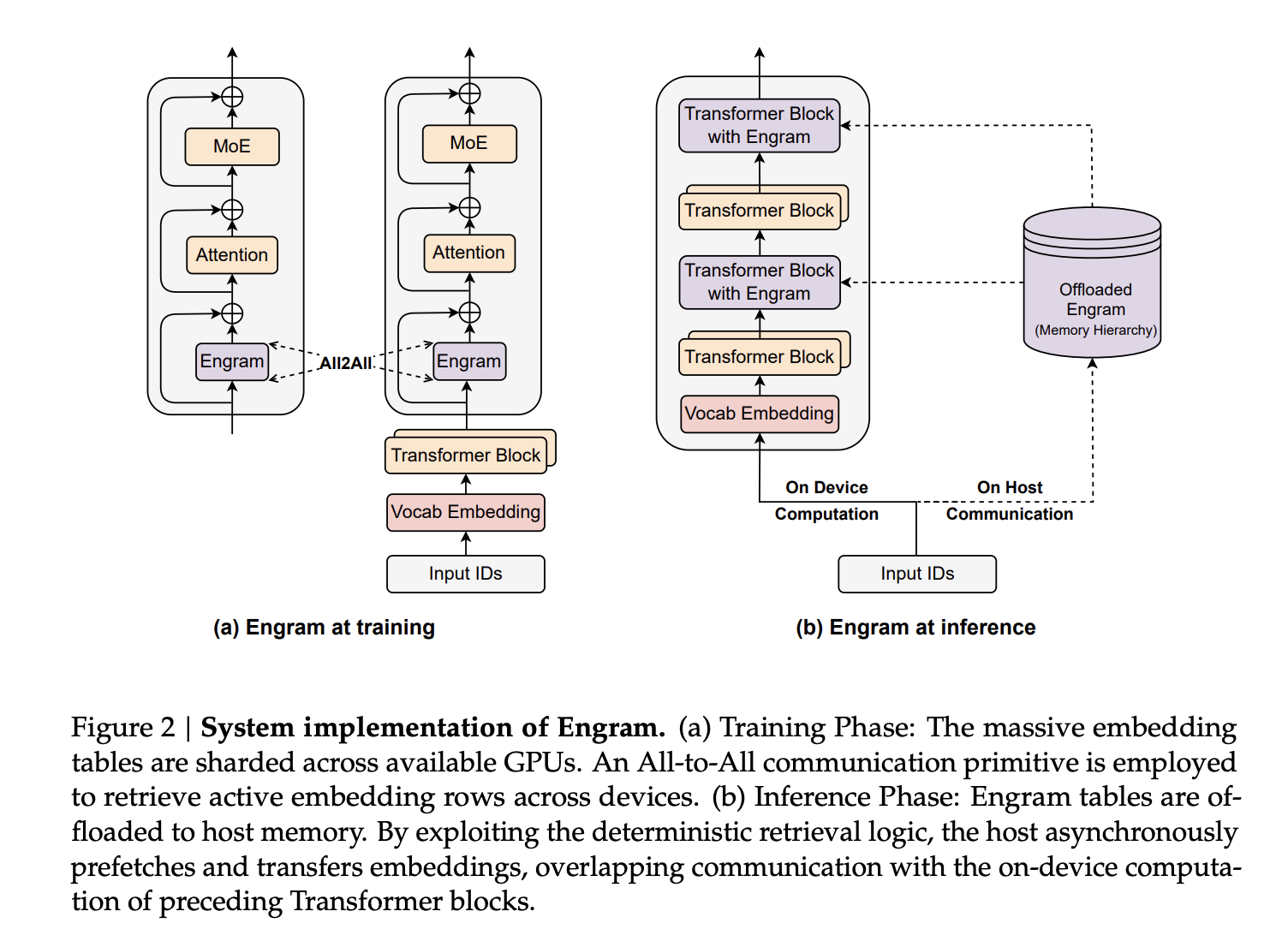

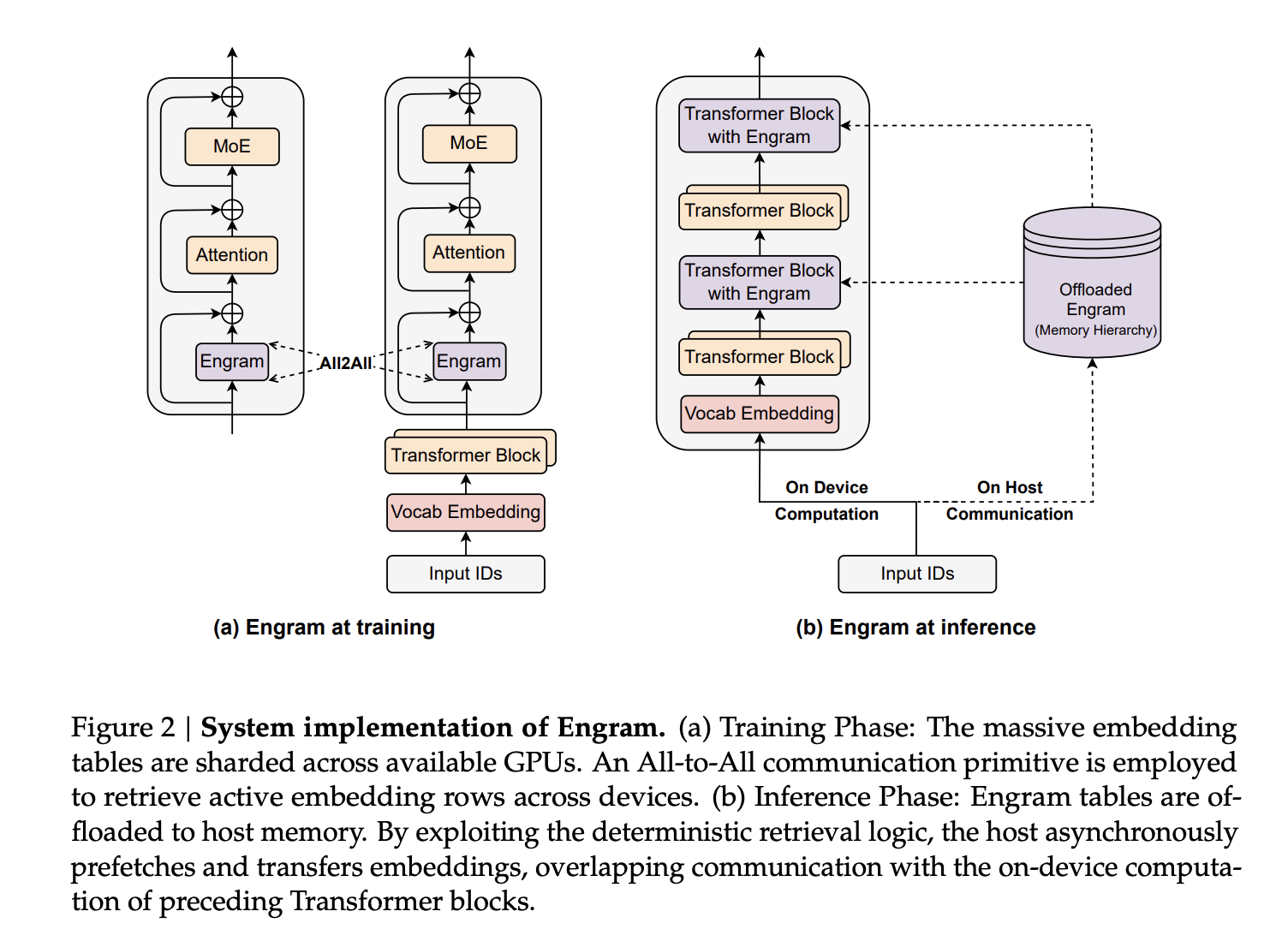

At larger scale, Engram-27B and Engram-40B share the same Transformer backbone as MoE-27B. MoE-27B replaces the dense feed-forward layers with DeepSeekMoE, using 72 routed experts and 2 shared experts. Engram-27B reduces the routed experts from 72 to 55 and reallocates those parameters into 5.7B of Engram memory, keeping total parameters at 26.7B. The Engram module uses N = {2, 3}, 8 Engram heads, dimension 1280, and is inserted at layers 2 and 15. Engram-40B expands the Engram memory to 18.5B parameters while keeping active parameters constant.
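To keep the configuration numbers in one place, the setup described so far can be written as a small set of dataclasses. The class and field names below are hypothetical and do not come from any DeepSeek codebase; the values are the ones quoted in this article, and the shared-expert count for Engram-27B is assumed to match MoE-27B.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class BackboneConfig:
    vocab: str = "128k"                  # exact vocabulary size is not stated here
    num_blocks: int = 30
    hidden_size: int = 2560
    attention_heads: int = 32            # Multi-head Latent Attention
    hyper_connection_expansion: int = 4
    optimizer: str = "muon"


@dataclass(frozen=True)
class EngramConfig:
    ngram_orders: Tuple[int, ...] = (2, 3)
    num_heads: int = 8
    dim: int = 1280
    insert_layers: Tuple[int, ...] = (2, 15)
    memory_params_b: float = 5.7         # billions of parameters


@dataclass(frozen=True)
class ModelConfig:
    name: str
    routed_experts: int
    shared_experts: int
    total_params_b: float
    backbone: BackboneConfig = BackboneConfig()
    engram: Optional[EngramConfig] = None


MOE_27B = ModelConfig("MoE-27B", routed_experts=72, shared_experts=2,
                      total_params_b=26.7)
# Assumption: Engram-27B keeps the same 2 shared experts as MoE-27B.
ENGRAM_27B = ModelConfig("Engram-27B", routed_experts=55, shared_experts=2,
                         total_params_b=26.7, engram=EngramConfig())

experts_removed = MOE_27B.routed_experts - ENGRAM_27B.routed_experts
```

Here `experts_removed` is the number of routed experts whose parameters are moved into Engram memory while the total parameter count stays the same.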

Sparsity allocation, a second scaling knob next to MoE

The main design question is how to divide the sparse parameter budget between routed experts and conditional memory. The research team formalizes this as a sparsity allocation problem, in which the allocation ratio ρ is the fraction of inactive parameters assigned to MoE experts. In a pure MoE model, ρ equals 1. Reducing ρ moves parameters from experts into Engram slots.

For the mid-scale 5.7B and 9.9B models, sweeping ρ yields a clear U-shaped curve of validation loss versus allocation ratio. The Engram models match the pure MoE baseline even when ρ drops to about 0.25, which corresponds to roughly half as many routed experts. The optimum appears when about 20 to 25 percent of the sparse budget is devoted to Engram. This optimum is stable across both compute regimes, suggesting a robust split between conditional computation and conditional memory under fixed sparsity.
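As a rough sanity check, the allocation ratio can be estimated for Engram-27B from the figures quoted in this article (26.7B total, 3.8B active, 5.7B of Engram memory). The sketch below assumes the inactive budget is simply total minus active parameters, which is an approximation and may not match the paper's exact accounting; the function name is hypothetical.

```python
def allocation_ratio(sparse_budget_b, engram_params_b):
    """Return rho: the share of the sparse (inactive) budget left to MoE experts."""
    if not 0.0 <= engram_params_b <= sparse_budget_b:
        raise ValueError("Engram memory must fit inside the sparse budget")
    return 1.0 - engram_params_b / sparse_budget_b


total_b, active_b, engram_b = 26.7, 3.8, 5.7

# Assumption: inactive parameters are approximated as total minus active.
sparse_b = total_b - active_b
rho = allocation_ratio(sparse_b, engram_b)
engram_share = 1.0 - rho

print(f"rho = {rho:.3f}, Engram share of sparse budget = {engram_share:.1%}")
```

Under this approximation, Engram takes just under a quarter of the sparse budget, which sits at the upper end of the 20 to 25 percent range reported for the mid-scale sweep.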

The research team also studied the infinite-memory regime on a fixed 3B MoE backbone trained for 100B tokens. They scale the Engram table from roughly 2.58e5 to 1e7 slots. Validation loss follows an almost perfect power law in log space, meaning more conditional memory continues to pay off without additional computation. Engram also outperforms OverEncoding, another n-gram embedding method that averages n-gram embeddings into the vocabulary embedding, under the same memory budget.
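A power law shows up as a straight line when both axes are logarithmic, so checking for one amounts to a linear fit on log-transformed values. The sketch below assumes the form loss = a · slots^(−b) and uses synthetic losses generated from made-up constants, purely to show the fitting procedure; only the two endpoint slot counts come from the article, and none of the loss values are results from the paper.

```python
import math


def fit_power_law(xs, ys):
    """Least-squares fit of log(y) = log(a) - b * log(x); returns (a, b)."""
    lx = [math.log(x) for x in xs]
    ly = [math.log(y) for y in ys]
    n = len(xs)
    mean_x, mean_y = sum(lx) / n, sum(ly) / n
    slope = (sum((u - mean_x) * (v - mean_y) for u, v in zip(lx, ly))
             / sum((u - mean_x) ** 2 for u in lx))
    intercept = mean_y - slope * mean_x
    return math.exp(intercept), -slope


# Slot counts: endpoints from the article, intermediate points are illustrative.
slots = [2.58e5, 1e6, 3e6, 1e7]

# SYNTHETIC losses from made-up constants, not measurements.
TRUE_A, TRUE_B = 3.0, 0.02
losses = [TRUE_A * s ** (-TRUE_B) for s in slots]

a_hat, b_hat = fit_power_law(slots, losses)
```

With real measurements, the residuals of this fit would indicate how closely the curve follows a power law.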

Large-scale pre-training results

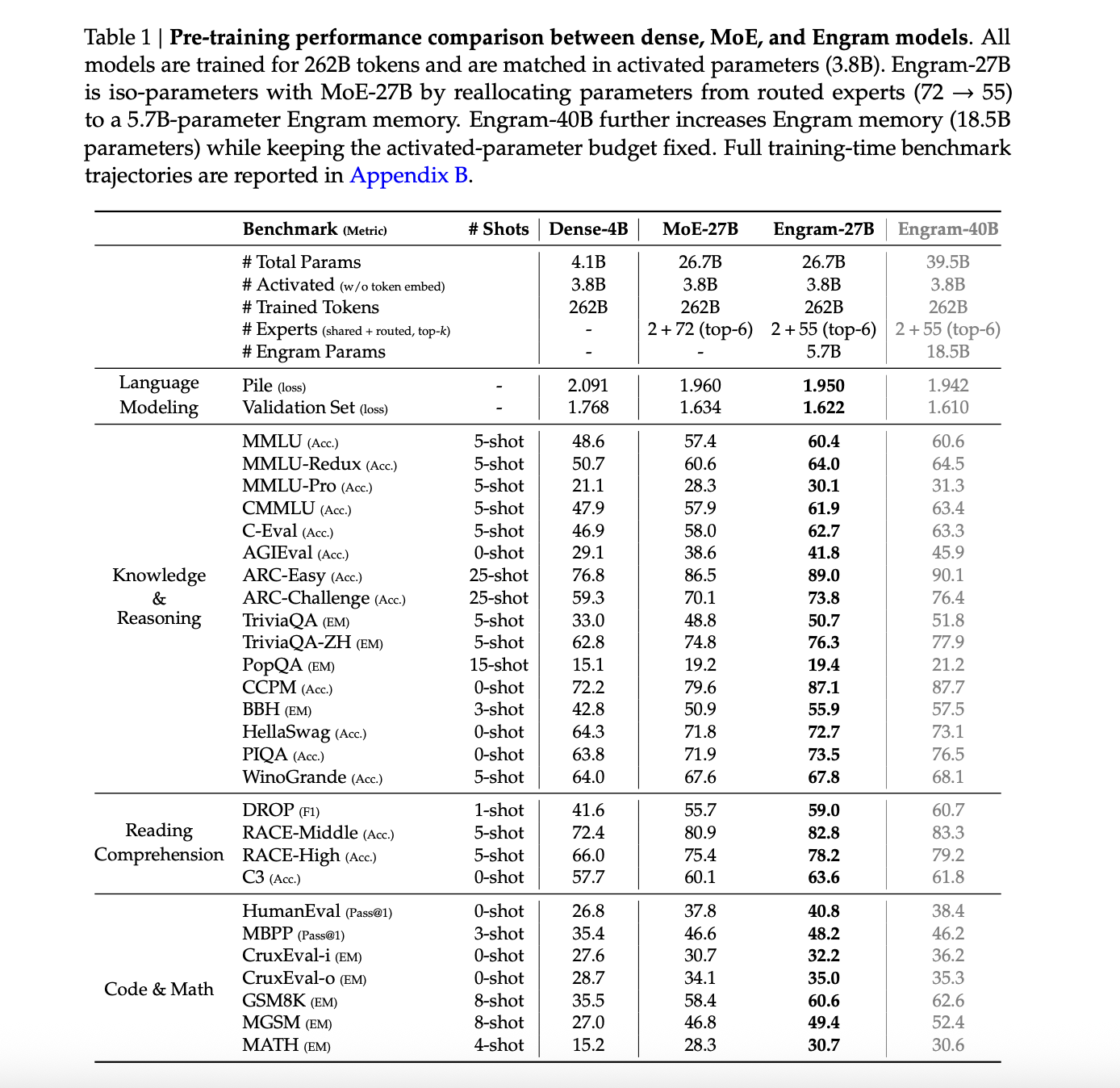

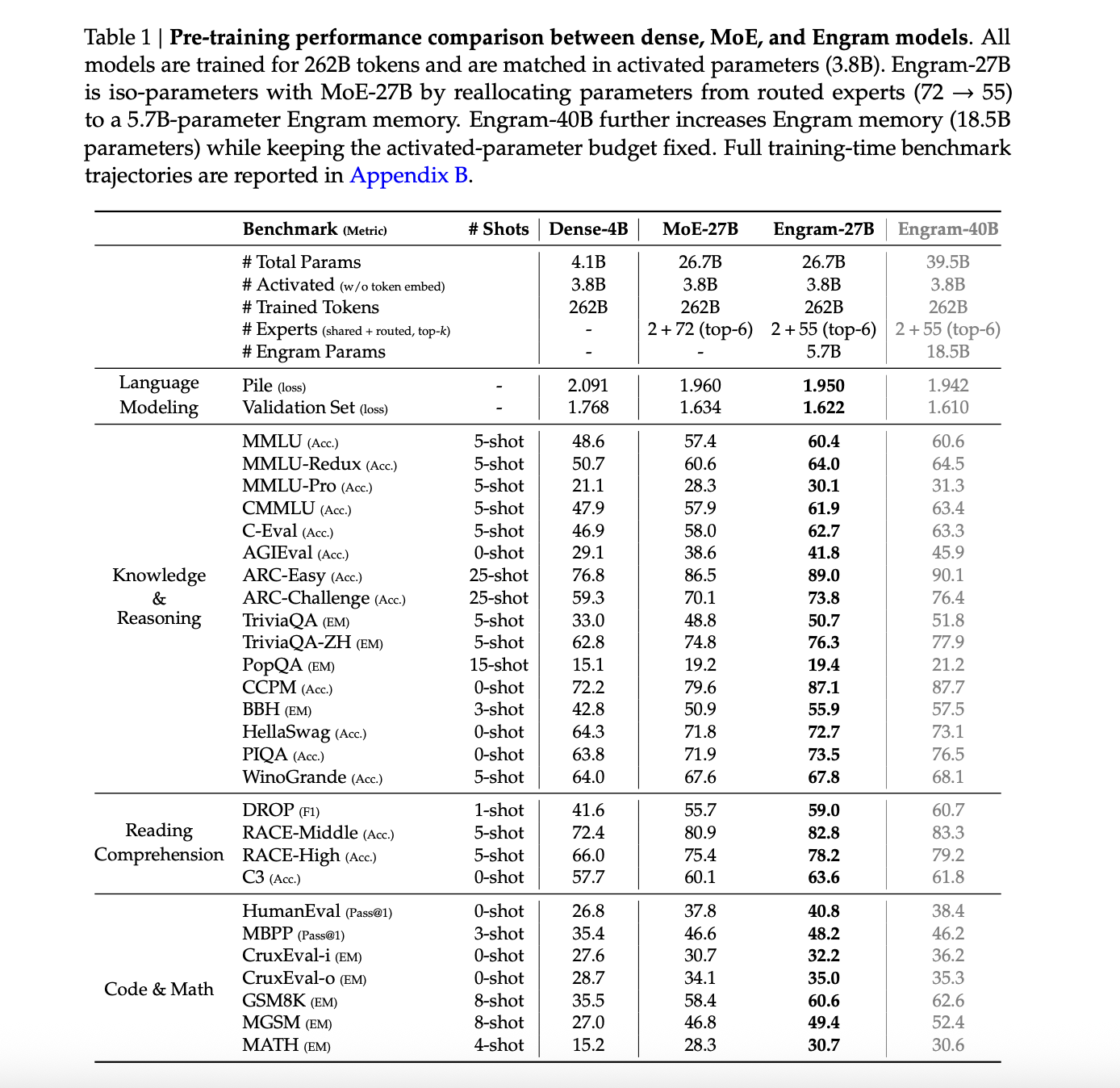

The main comparison involves four models trained on the same 262B-token curriculum, with 3.8B active parameters in all cases. These are Dense-4B with 4.1B total parameters, MoE-27B and Engram-27B at 26.7B total parameters, and Engram-40B at 39.5B total parameters.

On The Pile test set, the language modeling loss is 2.091 for MoE-27B, 1.960 for Engram-27B, 1.950 for an Engram-27B variant, and 1.942 for Engram-40B. The Pile loss for Dense-4B is not reported. Validation loss on the internal held-out set drops from 1.768 for MoE-27B to 1.634 for Engram-27B, and to 1.622 and 1.610 for the Engram variants.

Across knowledge and reasoning benchmarks, Engram-27B consistently outperforms MoE-27B. MMLU rises from 57.4 to 60.4, CMMLU from 57.9 to 61.9, and C-Eval from 58.0 to 62.7. ARC Challenge rises from 70.1 to 73.8, BBH from 50.9 to 55.9, and DROP F1 from 55.7 to 59.0. Code and math tasks also improve, for example HumanEval from 37.8 to 40.8 and GSM8K from 58.4 to 60.6.
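The size of each gain is easier to compare when the deltas are computed side by side. The short script below does only that arithmetic, using the scores quoted in the paragraph above; the variable names are arbitrary.

```python
# (MoE-27B score, Engram-27B score) as quoted in this article.
scores = {
    "MMLU": (57.4, 60.4),
    "CMMLU": (57.9, 61.9),
    "C-Eval": (58.0, 62.7),
    "ARC Challenge": (70.1, 73.8),
    "BBH": (50.9, 55.9),
    "DROP F1": (55.7, 59.0),
    "HumanEval": (37.8, 40.8),
    "GSM8K": (58.4, 60.6),
}

# Round to one decimal to avoid floating-point noise in the differences.
deltas = {name: round(engram - moe, 1) for name, (moe, engram) in scores.items()}

for name, delta in sorted(deltas.items(), key=lambda item: -item[1]):
    print(f"{name:<14} +{delta:.1f}")
```

On these figures, the largest gains are on BBH and C-Eval, and the smallest is on GSM8K.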

Engram-40B generally pushes these numbers further, even though the authors note that it is likely under-trained at 262B tokens, because its training loss is still diverging from the baselines near the end of pre-training.

Long-context behavior and mechanistic effects

After pre-training, the research team extends the context window to 32,768 tokens with YaRN, training for 5,000 steps on 30B high-quality long-context tokens. They compare MoE-27B and Engram-27B at checkpoints corresponding to 41k, 46k, and 50k pre-training steps.

On LongPPL and RULER at 32k context, Engram-27B matches or exceeds MoE-27B under three conditions. With about 82 percent of the pre-training FLOPs, Engram-27B at 41k steps matches MoE-27B on LongPPL while improving RULER accuracy, for example Multi-Query NIAH 99.6 versus 73.0 and QA 44.0 versus 34.5. Under iso-loss at 46k steps and iso-FLOPs at 50k steps, Engram-27B improves both perplexity and all RULER categories, including VT and QA.

Mechanistic analysis uses LogitLens and Centered Kernel Alignment (CKA). Engram variants show lower layer-wise KL divergence between the intermediate logits and the final prediction, especially in the early blocks, meaning that representations become prediction-ready sooner. CKA similarity maps show that shallow Engram layers align best with deeper MoE layers. For example, layer 5 in Engram-27B roughly aligns with layer 12 in the MoE baseline. Overall, this supports the view that Engram effectively increases model depth by offloading static reconstruction to memory.
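Both diagnostics reduce to short formulas. The sketch below shows a LogitLens-style KL divergence between two logit vectors and the standard linear form of CKA, in plain Python on toy inputs. The direction of the KL term and the choice of linear (rather than kernel) CKA are assumptions here, and the toy matrices are not model activations.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of logits."""
    peak = max(logits)
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def kl_divergence(final_logits, intermediate_logits):
    """KL(final || intermediate) between the two softmax distributions."""
    p, q = softmax(final_logits), softmax(intermediate_logits)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0.0)


def center_columns(X):
    """Subtract each column's mean; X is a list of rows (examples x features)."""
    n, d = len(X), len(X[0])
    means = [sum(row[j] for row in X) / n for j in range(d)]
    return [[row[j] - means[j] for j in range(d)] for row in X]


def cross_frobenius_sq(X, Y):
    """Squared Frobenius norm of X^T Y for matrices with the same number of rows."""
    total = 0.0
    for i in range(len(X[0])):
        for j in range(len(Y[0])):
            s = sum(X[k][i] * Y[k][j] for k in range(len(X)))
            total += s * s
    return total


def linear_cka(X, Y):
    """Linear CKA: ||Y^T X||_F^2 / (||X^T X||_F * ||Y^T Y||_F) on centered inputs."""
    Xc, Yc = center_columns(X), center_columns(Y)
    denom = math.sqrt(cross_frobenius_sq(Xc, Xc) * cross_frobenius_sq(Yc, Yc))
    return cross_frobenius_sq(Xc, Yc) / denom


# Toy activations: 4 examples x 2 features.
acts = [[1.0, 2.0], [3.0, 1.0], [0.0, 4.0], [2.0, 2.0]]
rotated = [[-b, a] for a, b in acts]   # same representation, rotated by 90 degrees

same_score = linear_cka(acts, acts)
rotated_score = linear_cka(acts, rotated)
kl_example = kl_divergence([2.0, 0.5, -1.0], [1.0, 1.0, 0.0])
```

Linear CKA is invariant to rotations and uniform scaling of the features, which is why it is suited to comparing layers from two different models whose hidden dimensions are not aligned.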

Ablation studies use a 12-layer 3B MoE model with 0.56B active parameters. The reference configuration adds 1.6B of Engram memory, uses N = {2, 3}, and places Engram at layers 2 and 6. Sweeping a single Engram layer across depth shows that early insertion at layer 2 is optimal. Component ablations highlight three key pieces: multi-branch integration, context-aware gating, and tokenizer compression.

Sensitivity analysis shows that factual knowledge depends heavily on Engram: TriviaQA falls to about 29 percent of its original score when Engram outputs are suppressed at inference, while reading comprehension tasks retain about 81 to 93 percent of their performance, for example C3 at 93 percent.

Key takeaways

- Engram adds a conditional memory axis to sparse LLMs so that common n-gram patterns and entities can be retrieved via O(1) hashed lookups, while the Transformer backbone and MoE experts focus on dynamic reasoning and long-range dependencies.

- Under a fixed parameter and FLOP budget, reallocating about 20 to 25 percent of the sparse capacity from MoE experts to Engram memory reduces validation loss, indicating that conditional memory and conditional computation are complementary rather than competing.

- In large-scale pre-training on 262B tokens, Engram-27B and Engram-40B outperform the MoE-27B baseline on language modeling, knowledge, reasoning, code, and math benchmarks with the same 3.8B active parameters, while keeping the Transformer backbone architecture unchanged.

- Long-context extension to 32,768 tokens using YaRN shows that Engram-27B matches or improves on LongPPL and clearly improves RULER scores, especially on Multi-Query Needle-in-a-Haystack and variable tracking, even when trained with less or equal compute compared to MoE-27B.


Asif Razzaq is the CEO of Marktechpost Media Inc. As a visionary entrepreneur and engineer, Asif is committed to harnessing the potential of Artificial Intelligence for social good. His most recent endeavor is the launch of Marktechpost, an Artificial Intelligence media platform known for in-depth coverage of Machine Learning and Deep Learning news that is both technically sound and easily understood by a wide audience. The platform has over 2 million monthly views.