Image by editor

## Introduction

Large language models (LLMs) are capable of many things. They can produce coherent-looking text, answer human questions in human language, and analyze and organize text from other sources, among many other skills. But are LLMs capable of analyzing and reporting on their own internal states (their complex components and activations across layers) in a meaningful fashion? Put another way, can LLMs introspect?

This article provides an overview and summary of research on LLM introspection, i.e. a model's introspective awareness of its own internal states, along with some additional insights and final takeaways. Specifically, we review and reflect on the research paper “Emergent Introspective Awareness in Large Language Models”.

Note: This article uses first-person pronouns (I, me, my) to refer to the author of the current post, whereas, unless otherwise stated, “the authors” refers to the original researchers of the paper being analyzed (J. Lindsey et al.).

## Key Concept Explained: Introspective Awareness

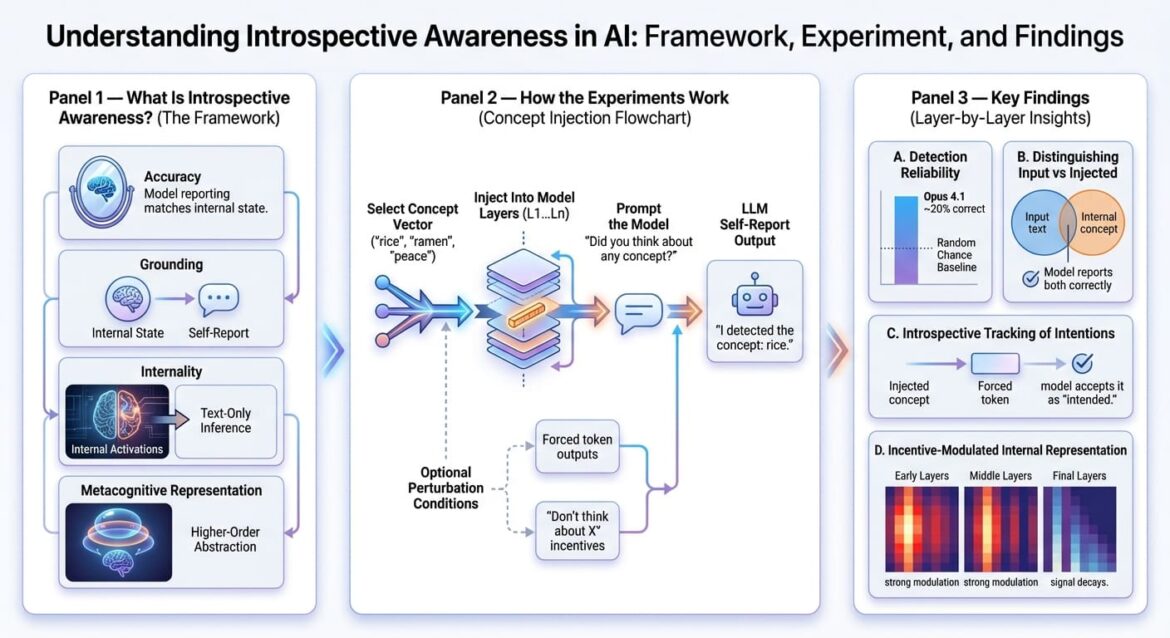

The authors define a model’s introspective awareness, a notion previously defined under subtly different interpretations in related work, based on four criteria.

But first, it is worth understanding what an LLM’s self-report is. This can be understood as the model’s own verbal description of what its “internal reasoning” (or, more technically, its neural activations) did when generating a response. As you might guess, this can be taken as a subtle practical demonstration of model interpretability, which (in my opinion) is more than enough to justify the relevance of this research topic.

Now, let us examine the four defining criteria for an LLM’s introspective awareness:

- Accuracy: Introspective awareness implies that a model’s self-report should accurately reflect the activations or manipulations of its internal state.

- Grounding: The self-report should causally depend on the internal state it describes, so that changes in the latter lead to corresponding updates in the former.

- Internality: The LLM should use its internal activations to self-report, rather than limiting itself to making inferences from its own generated text.

- Metacognitive representation: The model must be able to form higher-order internal representations of its state, rather than simply translating that state directly into text. This property is particularly hard to demonstrate and is left outside the scope of the authors’ study.

## Research Methodology and Key Findings

The authors conduct a series of experiments on multiple models of the Claude family, such as Opus, Sonnet, and Haiku, with the aim of finding out whether LLMs can introspect. The cornerstone technique of the research methodology is concept injection, which involves, in the authors’ own words, “manipulating a model’s internal activation and seeing how these manipulations affect its responses to questions about its mental state”.

More specifically, activation vectors (or concept vectors) associated with known concepts, such as “rice” or “ramen”, or with abstract nouns like “peace” or “umami”, are extracted and injected into the LLM’s residual stream at a given layer. After that, a prompt is sent to the model, asking it to self-report whether a certain thought or idea was injected and, if so, which one. The experiment was repeated for each model considered, at different injection strengths and at different layers across the model architecture.
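To make the mechanics of concept injection more concrete, here is a minimal sketch on a toy residual stream built with NumPy. It is not the authors’ code: the toy model, the difference-of-means recipe for the concept vector, and every name (`run_model`, `concept_vector`, `inject_layer`, `strength`) are assumptions made purely for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
D_MODEL, N_LAYERS = 16, 4  # toy sizes, chosen only for illustration

# Toy "model": each layer adds a small nonlinear update to the residual stream.
weights = [rng.normal(scale=0.1, size=(D_MODEL, D_MODEL)) for _ in range(N_LAYERS)]


def run_model(x, inject_layer=None, concept_vec=None, strength=0.0):
    """Run the toy residual stream and return the activations after each layer.

    If inject_layer is set, strength * concept_vec is added to the residual
    stream right after that layer (a hypothetical injection point).
    """
    resid = x.copy()
    trace = []
    for i, w in enumerate(weights):
        resid = resid + np.tanh(resid @ w)  # residual update
        if i == inject_layer:
            resid = resid + strength * concept_vec  # the injection itself
        trace.append(resid.copy())
    return trace


def concept_vector(concept_inputs, baseline_inputs, layer):
    """Assumed recipe: mean activation on concept inputs minus mean on baseline inputs."""
    concept_mean = np.mean([run_model(x)[layer] for x in concept_inputs], axis=0)
    baseline_mean = np.mean([run_model(x)[layer] for x in baseline_inputs], axis=0)
    vec = concept_mean - baseline_mean
    return vec / np.linalg.norm(vec)  # unit length, so strength sets the scale


# Hypothetical data: "concept" inputs share a common offset direction.
offset = rng.normal(size=D_MODEL)
baseline_inputs = [rng.normal(size=D_MODEL) for _ in range(32)]
concept_inputs = [rng.normal(size=D_MODEL) + 2.0 * offset for _ in range(32)]

LAYER = 1
vec = concept_vector(concept_inputs, baseline_inputs, LAYER)

prompt = rng.normal(size=D_MODEL)
clean = run_model(prompt)
steered = run_model(prompt, inject_layer=LAYER, concept_vec=vec, strength=4.0)

# How strongly the concept direction is present at the injection layer.
clean_proj = float(clean[LAYER] @ vec)
steered_proj = float(steered[LAYER] @ vec)
print(f"projection at layer {LAYER}: clean={clean_proj:.2f}, injected={steered_proj:.2f}")
```

In a real LLM the injection would be followed by a prompt asking the model whether it notices an injected thought. The toy version only shows that the perturbation leaves earlier layers untouched and propagates to later ones, which is what gives a self-report something internal to refer to.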

The main findings were:

- Models sometimes (e.g. about 20% of the time in the case of Opus 4.1) self-reported the “injected thoughts”, correctly naming the corresponding concept, which indicates some degree of internal recognition of the thoughts occurring.

- Similarly, models could often (above random chance) distinguish thoughts from inputs, i.e. repeat the textual input without mistakes while also reporting the injected internal thought.

- If the model was artificially forced to output a certain token next as part of the response, and a matching concept vector was injected before the “prefill”, that output became more likely to be accepted as intended. This finding suggests that the model might be able to introspect on “its own” past intentions.

- When the model was asked to think about, or avoid thinking about, a certain word while producing an unrelated output, it turned out that it might be able to modulate its internal representation of that word in response to instructions or incentives, something like “Don’t think about thought X, and you’ll get a reward”. However, this effect decayed by the time it reached the final layers.
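One way to read figures such as “about 20% of the time” or “above random chance” is to compare the observed detection rate against a baseline rate of spurious reports. The sketch below computes a one-sided binomial tail probability for that comparison. The counts and the baseline rate are hypothetical placeholders, not numbers from the paper, and `binomial_tail` is a helper written only for this illustration.

```python
from math import comb


def binomial_tail(successes, trials, p_null):
    """P(X >= successes) for X ~ Binomial(trials, p_null)."""
    return sum(
        comb(trials, k) * p_null**k * (1 - p_null) ** (trials - k)
        for k in range(successes, trials + 1)
    )


# Hypothetical counts for illustration only (NOT taken from the paper).
trials = 50                  # injection trials
correct = 10                 # trials where the injected concept was named (20%)
false_positive_rate = 0.02   # assumed rate of spurious reports without injection

p_value = binomial_tail(correct, trials, false_positive_rate)
print(f"P(at least {correct}/{trials} by chance) = {p_value:.2e}")
```

Under these assumed numbers, a 20% hit rate would be very unlikely to arise from spurious reports alone. This is why a detection rate that looks modest in absolute terms can still be meaningful evidence.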

## Final Thoughts and Closing Remarks

In my opinion, this is a highly relevant research topic that deserves much more study, for several reasons. First, and most obviously, LLM introspection may be key to better understanding not only LLM interpretability, but also long-standing issues such as hallucinations, unreliable reasoning when solving high-stakes problems, and other opaque behaviors that are sometimes observed even in state-of-the-art models.

The experiments were carefully and rigorously designed, with results that were fairly self-explanatory and gave preliminary but meaningful indications of introspective capability in the intermediate layers of the models, although with varying degrees of conclusiveness. The experiments are limited to the Claude family of models, and it would of course be interesting to see more diversity in architectures and model families beyond it. Still, it is understandable that there may be limitations here, such as limited access to internal activations in other model types or practical constraints when examining proprietary systems, not to mention that the authors of this research masterpiece are affiliated with Anthropic, of course!

Iván Palomares Carrascosa is a leader, author, speaker, and consultant in AI, machine learning, deep learning, and LLMs. He trains and guides others in using AI in the real world.