Author(s): um

Originally published on Towards AI.

As software architects and service owners, we often focus on “Day 1” of our services: design, tech stack, clean code. But the engineering reality is that 90% of a service's lifecycle is “Day 2”: operation, maintenance, debugging, and fighting fires.

We create microservices, and then we become their slaves. We wake up to alerts, we manually grep logs for the same recurring errors, and we context-switch from high-value work to perform mundane triage.

The industry’s answer has been “copilots” – AI assistants that wait for you to ask questions. But senior engineers don’t just want a smarter CLI. They need a partner.

We are in a ‘power loom’ moment for software engineering. Centuries ago, the introduction of mechanized looms did not eliminate the need for weavers; it transformed them from manual laborers into system supervisors. They went from throwing shuttles by hand to managing batteries of machines that produced ten times the output with higher precision.

The same thing is happening to us.

> We are transforming from ‘hand-weavers of code’ to ‘architects of agency’.

Our role is expanding from manually grepping logs and triaging exceptions to designing Service Guardians that perform those tasks on our behalf. The software engineer is not being replaced; their leverage is being multiplied.

This article introduces a different architectural pattern: the Service Guardian approach, an autonomous agent that sits alongside your service, understands its internal logic, and has the agency to investigate, report, and even fix issues without human intervention.

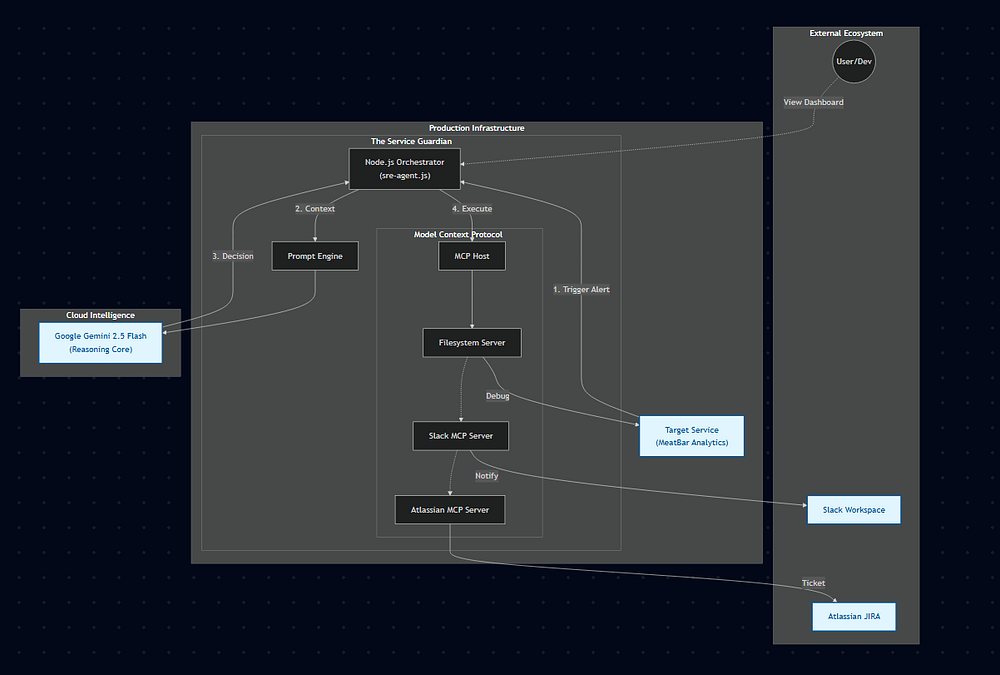

Here’s how to build a Service Guardian using Node.js, Google’s Gemini 2.5 Flash, and Model Context Protocol (MCP), and why every service owner should create one.

See it in action

I deployed Service Guardian to a live environment and introduced a breaking schema change. The following video shows the agent detecting crashes, analyzing SQL mismatches, and filing JIRA tickets – completely autonomously.

Operational Note: While this demo uses manual triggers for clarity, the architecture is designed for seamless integration with production telemetry (e.g., CloudWatch, Prometheus webhooks) for fully automated event ingestion.
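As one illustration of the webhook route mentioned in the note, here is a minimal sketch that normalizes a Prometheus Alertmanager webhook body into flat events an agent could consume. The input field names (`alerts`, `status`, `labels`, `annotations`, `startsAt`) follow Alertmanager's webhook payload; the `alertsToEvents` helper, the `service` label, and the output shape are assumptions made for this example, not part of the demo repository.

```javascript
// Hypothetical helper: convert an Alertmanager webhook body into agent events.
function alertsToEvents(payload) {
  const alerts = Array.isArray(payload && payload.alerts) ? payload.alerts : [];
  return alerts
    .filter((alert) => alert.status === "firing") // skip resolved alerts
    .map((alert) => ({
      name: (alert.labels && alert.labels.alertname) || "unknown",
      service: (alert.labels && alert.labels.service) || "unknown", // assumed label
      summary: (alert.annotations && alert.annotations.summary) || "",
      startedAt: alert.startsAt || null,
    }));
}

// Made-up sample payload, trimmed to the fields used above.
const samplePayload = {
  status: "firing",
  alerts: [
    {
      status: "firing",
      labels: { alertname: "AnalyticsCrashLoop", service: "analytics" },
      annotations: { summary: "Process restarted 5 times in 10 minutes" },
      startsAt: "2025-01-01T00:00:00Z",
    },
  ],
};

console.log(alertsToEvents(samplePayload));
```

In a real deployment this function would sit behind an HTTP endpoint that the alerting system posts to, and each returned event would start one run of the agent.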

Paradigm Shift: Ownership vs. Management

Traditional operational tooling is passive: dashboards, log aggregators, alert thresholds. Users have to act on them. A Service Guardian is active: it acts on the user's behalf.

Imagine a specialized agent that knows your codebase. When an exception is thrown:

- It doesn’t just page the on-call engineer.

- It spawns a process.

- It pulls the stack trace.

- It reads the relevant source code (leveraging direct file access).

- It recognizes that a `db.all` wrapper was missing in the new commit.

- It drafts a JIRA ticket with the exact fix and Slacks you the link.
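The step from "pull the stack trace" to "read the relevant source code" can be sketched as a small parser that finds the first application frame in a Node.js stack trace, so the agent knows which file to open. This is a sketch under assumptions: `firstAppFrame`, the sample paths, and the sample error message are invented for illustration, and the regular expression only covers the common V8 frame formats.

```javascript
// Matches "at fn (/path/file.js:10:5)" and "at /path/file.js:10:5".
const FRAME_PATTERN = /^\s*at (?:(.+?) \()?(.+?):(\d+):(\d+)\)?$/;

// Hypothetical helper: return the first frame that belongs to application code.
function firstAppFrame(stack) {
  for (const line of stack.split("\n")) {
    const match = FRAME_PATTERN.exec(line);
    if (!match) continue; // the error message line, or a frame we cannot parse
    const [, fn, file, lineNo, column] = match;
    // Skip Node.js internals and third-party dependencies.
    if (file.startsWith("node:") || file.includes("node_modules")) continue;
    return { fn: fn || "<anonymous>", file, line: Number(lineNo), column: Number(column) };
  }
  return null;
}

// Made-up stack trace for illustration.
const sampleStack = [
  "TypeError: db.all is not a function",
  "    at getStats (/app/src/routes/stats.js:42:13)",
  "    at Layer.handle (/app/node_modules/express/lib/router/layer.js:95:5)",
  "    at node:internal/process/task_queues:95:5",
].join("\n");

console.log(firstAppFrame(sampleStack));
```

The returned file and line number are what the agent would pass to its file-reading tool before reasoning about the failure.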

This is not science fiction. This is a pattern that can be built today with standardized open protocols.

Architecture

1. Arms: Model Context Protocol (MCP)

The biggest hurdle in building custom agents used to be “tool fatigue”. Connecting an LLM to a specific Postgres DB, JIRA instance, and Slack channel meant writing glue code for weeks.

MCP solves this. It treats tools like microservices.

- Does the agent need access to the database? Spin up a Postgres MCP server.

- Does it need access to the internal wiki? Spin up a Confluence MCP server.

In this implementation, effectively zero API integration code was written. The system simply uses atlassian-mcp-server and instructs the agent: “Here are the tools. Use them.”
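To make "here are the tools, use them" concrete: MCP messages are JSON-RPC 2.0, a client discovers tools with the `tools/list` method and invokes one with `tools/call`. The sketch below only builds those request objects; it does not open a transport. The tool name `create_issue` and its arguments are hypothetical, since a real server advertises its own tool names and input schemas in its `tools/list` response.

```javascript
// Build the JSON-RPC 2.0 request that asks an MCP server for its tools.
function buildToolsList(id) {
  return { jsonrpc: "2.0", id, method: "tools/list" };
}

// Build the JSON-RPC 2.0 request that invokes one tool by name.
function buildToolCall(id, toolName, args) {
  return {
    jsonrpc: "2.0",
    id,
    method: "tools/call",
    params: { name: toolName, arguments: args },
  };
}

// Hypothetical tool name and arguments, for illustration only.
const request = buildToolCall(2, "create_issue", {
  project: "OPS",
  summary: "Analytics service crash after schema change",
});

console.log(JSON.stringify(buildToolsList(1)));
console.log(JSON.stringify(request, null, 2));
```

In practice an MCP client library handles this framing, which is why the integration code on the agent side can stay close to zero.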

2. Brain: Gemini 2.5 Flash

For a Service Guardian, speed is a feature. You can’t wait 30 seconds for an LLM to ponder the existential implications of a NullPointerException.

Gemini 2.5 Flash was chosen for its balance of a huge context window and sub-second latency. This allows the agent to ingest huge chunks of log and code files in a single pass (“YOLO mode”) and reason over them quickly.
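A single-pass prompt of this kind can be sketched as plain string assembly: take the tail of the log, then append whole source files until a size budget is reached. Everything here is an assumption for illustration: the `buildIncidentPrompt` helper, the section headings, and the use of a character budget as a rough stand-in for the model's token limit. The call to the model itself is left out.

```javascript
// Hypothetical helper: pack recent logs and source files into one prompt.
// maxChars is a rough proxy for the context window, not a token count.
function buildIncidentPrompt(logText, files, maxChars = 400000) {
  const logBudget = Math.max(1, Math.floor(maxChars / 4));
  // Keep only the tail of the log, where the failure usually is.
  const sections = ["## Recent logs\n" + logText.slice(-logBudget)];
  let used = sections[0].length;
  const included = [];
  for (const file of files) {
    const section = `## File: ${file.path}\n${file.content}`;
    if (used + section.length > maxChars) continue; // skip files that do not fit
    sections.push(section);
    used += section.length;
    included.push(file.path);
  }
  // Separators add a few characters, so the budget is approximate.
  return { prompt: sections.join("\n\n"), included };
}

const { prompt, included } = buildIncidentPrompt(
  "ERROR query failed",
  [{ path: "src/db.js", content: "module.exports = {};" }],
  1000
);
console.log(included);
console.log(prompt.length);
```

With a large context window the budget is rarely hit, which is the point of the single-pass approach: no retrieval step, no chunking, one request.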

3. Nervous System: Event Loop

The agent is effectively a Node.js process wrapping the LLM interaction. This creates a “run loop” that reflects how a senior engineer thinks:

    // The "Service Owner" Mental Model
    while (goal !== COMPLETE) {
      // 1. Observe (Read Log / Webhook)
      // 2. Orient (Search Codebase / Check Docs)
      // 3. Decide (Plan Fix)
      // 4. Act (Execute Tool)
    }
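The mental model above can be turned into a small runnable loop. This is a minimal sketch, not the repository's implementation: `runGuardianLoop`, the four injected step functions, the `done` flag, and the step cap are all assumptions, and the stubs stand in for the log reader, the LLM call, and the MCP tool call. It is kept synchronous for brevity; a real agent would await I/O at each step.

```javascript
// Hypothetical loop: each step is injected so it can be stubbed or swapped.
function runGuardianLoop({ observe, orient, decide, act }, maxSteps = 5) {
  const history = [];
  for (let step = 0; step < maxSteps; step++) {
    const event = observe();             // 1. Observe (read log / webhook)
    if (!event) break;                   // nothing left to handle
    const context = orient(event);       // 2. Orient (search codebase / docs)
    const plan = decide(event, context); // 3. Decide (plan fix)
    const result = act(plan);            // 4. Act (execute tool)
    history.push({ event, plan, result });
    if (result.done) break;              // goal reached
  }
  return history;
}

// Stubbed run with a made-up event.
const queue = [{ type: "crash", message: "query failed after schema change" }];
const history = runGuardianLoop({
  observe: () => queue.shift(),
  orient: () => ({ suspectFile: "src/routes/stats.js" }),
  decide: (event, context) => ({
    tool: "file_ticket",
    summary: `${event.type} in ${context.suspectFile}`,
  }),
  act: (plan) => ({ done: true, ticket: plan.summary }),
});

console.log(history[0].result.ticket);
```

The step cap is a deliberate design choice: an autonomous loop that can call tools should have a hard upper bound on how many actions it takes per incident.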

This loop runs inside your infrastructure, behind the firewall, so sensitive data never leaves your control boundary except as the tokens sent to the model for inference.

Why does this matter to architects?

We generally define “architecture” as the structure of software – classes, interfaces, databases.

The argument here is that automated operations should become part of the definition of software architecture. If you design a service, you must also design the agent that maintains it.

- Self-healing: Agents can roll back a deployment if metrics deviate.

- Self-documentation: Agents can update the README when code changes.

- Knowledge retention: When a senior developer leaves, the agent retains the “tribal knowledge” of how to debug the system because it has access to the runbooks and history.
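As a rough illustration of the self-healing bullet, the "metrics deviate" check can be as simple as comparing the post-deploy error rate against a baseline. The `shouldRollBack` function, the 2x ratio, and the 1% floor are hypothetical values chosen for this sketch; the actual rollback would be one more tool the agent calls.

```javascript
// Hypothetical rule: roll back when the error rate is both meaningful
// (above `floor`) and well above the pre-deploy baseline (more than `ratio` times).
function shouldRollBack(baselineErrorRate, currentErrorRate, { ratio = 2, floor = 0.01 } = {}) {
  if (currentErrorRate < floor) return false; // too small to act on
  return currentErrorRate > baselineErrorRate * ratio;
}

console.log(shouldRollBack(0.01, 0.05));  // error rate jumped fivefold
console.log(shouldRollBack(0.01, 0.015)); // within normal variation
```

The floor prevents a rollback when a near-zero baseline makes any small blip look like a large relative increase.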

Result

A demo “Service Guardian” was deployed for a Node.js analytics service. When a breaking schema change was introduced:

- Without the agent: Detection can take more than 45 minutes.

- With Guardian: Triggered by the alert, the agent caught the crash, analyzed the new SQL query against the old schema, identified the mismatch, and filed a JIRA ticket with the corrected SQL – all in 45 seconds.

Conclusion

The future of software engineering is not limited to just writing code. It’s about designing systems that can take care of themselves.

By adopting the Service Guardian pattern and leveraging standards like MCP, we can stop being “on-call martyrs” and start becoming true architects – building systems that are robust, autonomous, and resilient by design.

GitHub: https://github.com/utkarshmehta/serviceGuardian
