Author(s): Neel Shah

Originally published on Towards AI.

As an AI engineer who has spent countless hours tuning retrieval systems and grappling with hallucinations in large language models (LLMs), I have seen firsthand how retrieval-augmented generation (RAG) has evolved from a straightforward tool into something much more dynamic. Today, I want to look at the differences between traditional “simple” RAG and its more advanced counterpart, agentic RAG, especially when it comes to keyword-based vs. semantic/contextual search mechanisms. I’ll also explain what actually makes an AI system an “agent”, and offer some key insights on the challenges, benefits, and trade-offs I’ve encountered in real-world implementations.

If you’re building AI applications, understanding this shift isn’t just academic; it is essential for creating systems that are accurate, adaptable, and scalable. Let’s break it down step by step.

What makes an AI agent?

Before we compare RAG variants, let’s clarify what elevates a system from a mere tool to an “agent”. In my experience, an AI agent is not just a passive responder; it is an autonomous entity capable of sensing its environment, making decisions, and taking actions to achieve goals. Here’s what defines one:

- Autonomy: Agents act independently, often without constant human intervention. They can break complex tasks into subtasks and execute them sequentially or in parallel.

- Perception and reasoning: They use sensors (like APIs or retrieval tools) to gather data, then reason, plan, or even reflect on it by learning from feedback.

- Action-oriented: Unlike static models, agents interact with the world by querying databases, calling external tools, or iterating over their own outputs.

- Adaptability: They handle uncertainty by refining their approach, such as re-routing based on new information or recovering from errors.

- Goal-directed behavior: Every action serves a purpose, whether it’s answering a question accurately or optimizing a process.

In the context of RAG, this agentic quality turns a simple query-response loop into a sophisticated workflow. Think of it as giving your AI a “brain” that not only remembers facts but actively hunts down, validates, and synthesizes them.

Simple RAG vs. Agentic RAG: Main Differences

At its core, RAG addresses the limitations of standalone LLMs by injecting external knowledge during generation. But the devil is in the details: specifically, how retrieval occurs and whether it happens once or is embedded in an iterative loop.

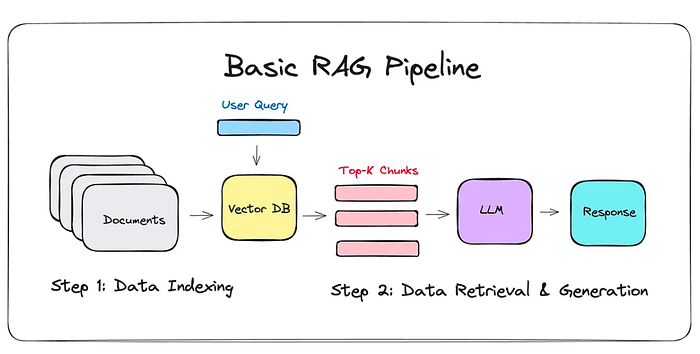

Simple RAG: The One-Shot Approach

Simple RAG is like a quick library lookup: you embed the user’s query, retrieve relevant documents from the vector store (using semantic similarity or keyword matching), and feed them into the LLM to generate a response. This is effective but limited.

- Workflow: Query → embed (if semantic) → retrieve (top-k matches) → generate response.

- Contextual/semantic search: Uses embeddings to capture meaning (for example, through models like BERT or OpenAI’s text-embedding-ada-002). The query is vectorized, and cosine similarity finds the most similar chunks. It handles nuance better than keyword matching but can return noisy results if the embeddings are not well tuned for the domain.

- No loop: It’s linear, with one retrieval and one generation. If the data is out of date or contradictory, you’re stuck with hallucinations or incomplete answers.

- Pros: Low latency, cheap to run, easy to implement.

- Cons: Static; cannot handle multi-hop queries (for example, “What effect does X have on Y?”) or real-time updates.
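The one-shot workflow above can be sketched in a few lines of Python. This is a minimal illustration, not a production pipeline: the `embed` function is a bag-of-words stand-in for a real embedding model, `DOCS` is a made-up corpus, and `generate` only assembles the prompt that would be sent to an LLM. All of these names are assumptions introduced for the example.

```python
import math
import re
from collections import Counter

# Toy corpus; in a real system these would be chunks stored in a vector store.
DOCS = [
    "RAG injects retrieved documents into the prompt of a language model.",
    "Cosine similarity compares the angle between two embedding vectors.",
    "Hiking boots should be waterproof and well cushioned.",
]

def embed(text):
    """Stand-in embedding: a bag-of-words count vector, NOT a semantic model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def retrieve(query, k=2):
    """Single retrieval pass: rank every document and keep the top k."""
    q = embed(query)
    ranked = sorted(DOCS, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:k]

def generate(query, context):
    """Placeholder for the LLM call: returns the prompt that would be sent."""
    joined = "\n".join(f"- {c}" for c in context)
    return f"Context:\n{joined}\n\nQuestion: {query}"

def simple_rag(query, k=2):
    """Query -> embed -> retrieve top-k -> generate, with no feedback loop."""
    return generate(query, retrieve(query, k))

print(simple_rag("How does cosine similarity compare embedding vectors?", k=1))
```

Note that nothing checks whether the retrieved context actually answers the question; whatever comes back from the single retrieval pass is what the model sees.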

In my projects, simple RAG shines for basic Q&A bots but collapses under complex, evolving queries – like stock analysis where market data changes hourly.

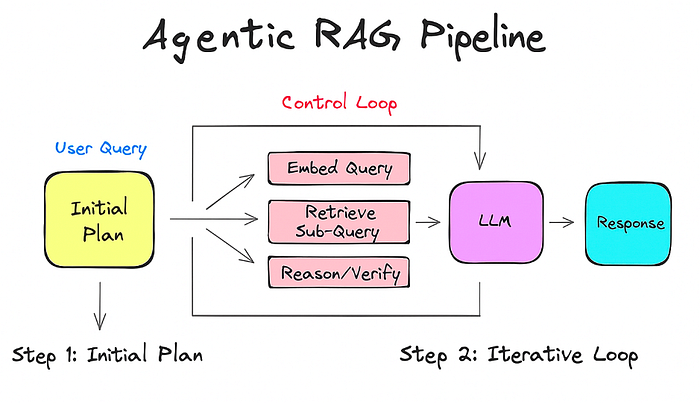

Agentic RAG: Iterative Loop with Embeddings

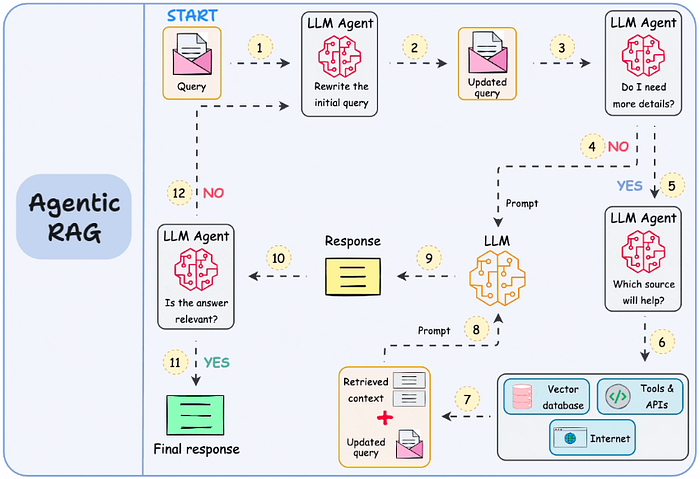

Agentic RAG takes this to the next level by introducing a loop, making the system more “agent-like”. Here, retrieval is not a one-off; it is part of a feedback loop where the AI critiques its own outputs, refines its queries, and iterates.

- Workflow: Query → initial planning → loop (embed query/sub-query → retrieve → reason/verify → refine if necessary) → final synthesis and generation.

- Loop with embeddings: This is the secret sauce. Embeddings are not just for the initial retrieval; they are reapplied in each iteration. For example:

  - Start with a broad embedding-based search.

  - Analyze the results and generate sub-queries (for example, “Verify fact X from source Y”).

  - Re-embed those sub-queries, retrieve more targeted information, and loop until confidence is high enough.

  - This may include hybrid search: combining keywords for precision with semantic embeddings for relevance.

- Contextual/semantic search: Drives the core loop, allowing the agent to dynamically explore related concepts. Frameworks like LangChain or LlamaIndex make this easier to build.

- Agentic element: The loop enables decision making (for example, “Is this data reliable? If not, re-route to another source”) and optimization (for example, switching from text to multimodal retrieval if images are needed).

- Pros: Handles complexity and reduces errors through validation.

- Cons: More compute-intensive and slower due to the iterations.
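The loop described above can be sketched as follows. This is a toy under stated assumptions: token overlap stands in for embedding similarity, "confidence" is simply the fraction of query terms covered by the gathered evidence, and the refinement step just re-queries with the uncovered terms. A real agent would use an LLM for verification and sub-query generation; `DOCS`, `STOPWORDS`, and all function names are hypothetical.

```python
import re

DOCS = [
    "Interest rate hikes raise borrowing costs for companies.",
    "Higher borrowing costs tend to reduce corporate investment.",
    "Merino wool socks keep feet dry while running.",
]
STOPWORDS = {"how", "do", "does", "the", "a", "of", "for", "to", "what", "and", "on", "in"}

def tokens(text):
    """Lowercased content words of a text."""
    return {t for t in re.findall(r"[a-z]+", text.lower()) if t not in STOPWORDS}

def retrieve(query, k=1, exclude=()):
    """Toy retriever: rank unseen docs by token overlap (stand-in for embeddings)."""
    q = tokens(query)
    pool = [d for d in DOCS if d not in exclude]
    ranked = sorted(pool, key=lambda d: len(q & tokens(d)), reverse=True)
    return [d for d in ranked[:k] if q & tokens(d)]

def covered_terms(evidence):
    """All content words that appear somewhere in the evidence."""
    out = set()
    for e in evidence:
        out |= tokens(e)
    return out

def confidence(query, evidence):
    """Crude verification: fraction of query terms supported by the evidence."""
    q = tokens(query)
    return len(q & covered_terms(evidence)) / len(q) if q else 1.0

def agentic_rag(query, threshold=0.8, max_iters=3):
    """Retrieve, verify, and refine until confidence reaches the threshold."""
    evidence, sub_query = [], query
    for step in range(max_iters):
        evidence += retrieve(sub_query, exclude=evidence)
        if confidence(query, evidence) >= threshold:
            break
        # Refine: build a sub-query from the terms not yet covered.
        missing = tokens(query) - covered_terms(evidence)
        sub_query = " ".join(sorted(missing))
    return evidence, step + 1

evidence, iterations = agentic_rag("How do interest rate hikes affect corporate investment?")
print(iterations, evidence)
```

With this toy corpus, the first pass finds only the document about borrowing costs, so the loop issues a second, narrower query to pick up the document that links borrowing costs to investment. That second hop is exactly what a one-shot pipeline would miss.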

The difference comes down to interactivity: simple RAG is a straight shot, while agentic RAG is a conversation with the data, looping through embeddings to build a stronger understanding.

The Strategic Power of Hybrid Search: Why Keyword Search Matters After Embedding

One of the most powerful patterns I have discovered in production systems is the strategic use of BM25 or keyword search as a secondary filter after initial embedding-based retrieval. This hybrid approach is not just theoretical – it solves real problems that pure semantic search struggles with, especially in e-commerce and product search scenarios.

The Embedding Blind Spot Problem

Although embeddings excel at capturing semantic meaning, they may miss important exact-match requirements expected by users. Consider an e-commerce scenario where a customer searches for “nike air max shoes size 10”.

- Initial embedding retrieval: Vector search may return semantically similar items such as “Adidas running sneakers,” “athletic footwear,” or even “Nike apparel” because these share conceptual space in the embedding model. While semantically related, these results miss the specific brand and product requirements.

- Keyword refinement stage: After the initial embedding-based retrieval pulls, say, 100 potentially relevant products, a BM25/keyword search serves as a fine-grained filter:

  - Filter for exact matches: “Nike” and “Air Max”

  - Ensure size availability: “size 10”

  - Eliminate false positives that the embeddings pulled in because of broad semantic similarity

Category accuracy matters too. In e-commerce, when a customer asks for shoes, we cannot serve socks. Keyword filtering prevents unrelated items like socks from appearing in a search for shoes, despite their semantic overlap with footwear.
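A minimal sketch of the second stage follows, assuming the vector search has already returned a candidate list. The `candidates` titles are invented for illustration, `bm25_scores` is a hand-rolled implementation of a common Okapi BM25 variant (with the usual default-style parameters `k1=1.5`, `b=0.75`) rather than any particular library's API, and `keyword_refine` is a hypothetical helper that combines a hard required-term filter with BM25 ranking.

```python
import math
import re
from collections import Counter

def tokenize(text):
    return re.findall(r"[a-z0-9]+", text.lower())

def bm25_scores(query, docs, k1=1.5, b=0.75):
    """Okapi BM25 score of each doc for the query, with statistics from `docs` only."""
    if not docs:
        return []
    tokenized = [tokenize(d) for d in docs]
    n = len(tokenized)
    avgdl = sum(len(t) for t in tokenized) / n
    # Document frequency: in how many candidates each term appears.
    df = Counter(term for t in tokenized for term in set(t))
    scores = []
    for toks in tokenized:
        tf = Counter(toks)
        score = 0.0
        for term in tokenize(query):
            if term not in tf:
                continue
            idf = math.log((n - df[term] + 0.5) / (df[term] + 0.5) + 1)
            length_norm = k1 * (1 - b + b * len(toks) / avgdl)
            score += idf * tf[term] * (k1 + 1) / (tf[term] + length_norm)
        scores.append(score)
    return scores

def keyword_refine(query, candidates, required_terms):
    """Keep candidates containing every required term, ranked by BM25 score."""
    scores = bm25_scores(query, candidates)
    kept = [
        (s, c) for s, c in zip(scores, candidates)
        if all(term in tokenize(c) for term in required_terms)
    ]
    return [c for s, c in sorted(kept, key=lambda x: x[0], reverse=True)]

# Hypothetical stage-1 (vector search) output for "nike air max shoes size 10".
candidates = [
    "Nike Air Max 270 shoes size 10",
    "Adidas running sneakers size 10",
    "Nike Dri-FIT apparel t-shirt",
    "Nike Air Max 90 shoes size 9",
]
results = keyword_refine("nike air max shoes size 10", candidates, ["nike", "air", "max", "10"])
print(results)
```

Because BM25 runs only over the candidate set, its term statistics are local to that set. That is fine for filtering and re-ranking a shortlist, but the scores are not comparable with corpus-wide BM25 scores.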

Real-World E-Commerce Examples

Scenario 1: Find Shoes

User query: “Waterproof hiking boots under $200”

Step 1 (Embedding): Retrieves 100 items including:

- Hiking boots (good)

- Rain boots (semantically related to “waterproof”)

- Expensive mountaineering boots (hiking related)

- Waterproof jacket (shares the “waterproof” reference)

Step 2 (Keyword Filter): Applies BM25 scoring and filters for:

- “Boots” (removes the jacket)

- Price filter: under $200 (removes expensive items)

- “Waterproof” as an exact feature match

- Result: 15 highly relevant, affordable waterproof hiking boots
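Scenario 1 mixes a text match with structured constraints, which might look like the sketch below. The `Product` fields, the sample catalogue, and the `refine` helper are all hypothetical. The price check is a plain metadata filter rather than a BM25 term, and the sketch also requires “hiking” in the title, which the steps above leave implicit.

```python
from dataclasses import dataclass

@dataclass
class Product:
    title: str
    category: str
    price: float
    features: tuple

# Hypothetical stage-1 candidates for "waterproof hiking boots under $200".
candidates = [
    Product("Trail hiking boots", "footwear", 149.0, ("waterproof",)),
    Product("Rubber rain boots", "footwear", 59.0, ("waterproof",)),
    Product("Alpine mountaineering boots", "footwear", 420.0, ("waterproof", "insulated")),
    Product("Waterproof shell jacket", "outerwear", 180.0, ("waterproof",)),
    Product("Mesh hiking boots", "footwear", 110.0, ("breathable",)),
]

def refine(items, category, max_price, feature, title_term):
    """Apply category, price, feature, and title-keyword filters in one pass."""
    return [
        p for p in items
        if p.category == category            # removes the jacket
        and p.price < max_price              # removes expensive items
        and feature in p.features            # exact feature match
        and title_term in p.title.lower()    # assumed: keeps the hiking intent
    ]

matches = refine(candidates, "footwear", 200.0, "waterproof", "hiking")
print([p.title for p in matches])
```

Keeping price and category as structured fields avoids relying on text matching for values that a catalogue already stores exactly.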

Scenario 2: Find socks

User query: “Merino wool socks for running”

Step 1 (Embedding): Retrieves items like:

- Merino wool base layers (material match)

- Running shoes (activity match)

- Cotton athletic socks (activity + category match)

- Woolen sweater (material match)

Step 2 (Keyword Filter):

- Exact match: “socks” (eliminates base layers, shoes, sweaters)

- Material specification: “merino” or “wool”

- Activity reference: “running” or “athletic”

- Result: Precise merino wool socks designed for athletic use
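The keyword step in Scenario 2 is effectively an AND of OR-groups: the title must contain “socks”, and at least one material term, and at least one activity term. A minimal sketch of that matching logic, with an invented candidate list and a hypothetical `matches` helper:

```python
import re

def matches(title, term_groups):
    """True if the title contains at least one term from every group."""
    toks = set(re.findall(r"[a-z]+", title.lower()))
    return all(toks & set(group) for group in term_groups)

# Stage-1 candidates from the scenario, plus one hypothetical exact match.
candidates = [
    "Merino wool base layer",
    "Lightweight running shoes",
    "Cotton athletic socks",
    "Woolen sweater",
    "Merino wool running socks",
]

groups = [
    ("socks",),                # category: exact match
    ("merino", "wool"),        # material: either term
    ("running", "athletic"),   # activity: either term
]

kept = [c for c in candidates if matches(c, groups)]
print(kept)
```

This uses exact token matching, so a variant such as “woolen” would not satisfy the “wool” term. In practice a stemmer or the search engine's analyzer would normally handle such variants.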

Why does this hybrid approach work?

- Precision without losing recall: Embeddings cast a wide net so that we do not miss relevant items due to vocabulary mismatch, while keywords provide the surgical precision needed to eliminate noise.

- Handling user intent: E-commerce users often have conceptual requirements as well as specific ones (brand, size, material). The hybrid approach respects both the implicit and explicit aspects of their query.

- Computational efficiency: Instead of re-embedding many refined queries, we perform one expensive embedding lookup and then run cheaper text-based filtering on the candidate set.

- Category-Specific Optimization: In fashion and apparel, characteristics such as color, shape, and material are often more important than semantic similarity. A customer searching for “red socks” specifically wants red socks, not “burgundy stockings” which might be closer semantically.

Implementation in Agentic RAG

In an agentic system, this hybrid search becomes even more powerful because the agent can dynamically decide when to emphasize embeddings versus keywords based on query analysis:

- Broad exploratory queries: Rely more on embeddings

- Specific product searches: Apply aggressive keyword filtering

- Multi-constraint queries: Use embeddings to find categories, then use keywords to satisfy constraints
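A rule-based sketch of that routing decision is shown below. In a real agentic system an LLM would likely make this call; here a few hand-written heuristics stand in for it. `KNOWN_BRANDS`, the strategy labels, and the digit-based constraint check are all assumptions made for illustration.

```python
import re

# Hypothetical catalogue vocabulary; a real system might pull this from product data.
KNOWN_BRANDS = {"nike", "adidas"}

def choose_strategy(query):
    """Pick a retrieval strategy from simple surface features of the query."""
    toks = re.findall(r"[a-z0-9]+", query.lower())
    has_brand = any(t in KNOWN_BRANDS for t in toks)
    # Treat any numeric token (size, price) as an explicit constraint.
    has_constraint = any(t.isdigit() for t in toks)
    if has_brand:
        return "keyword_filter"            # specific product: filter aggressively
    if has_constraint:
        return "embeddings_then_keywords"  # find the category, then enforce constraints
    return "embeddings"                    # broad exploratory query

for q in [
    "gift ideas for someone who loves the outdoors",
    "nike air max shoes size 10",
    "waterproof hiking boots under $200",
]:
    print(q, "->", choose_strategy(q))
```

The value of making this an explicit step is that the agent can revisit it: if aggressive keyword filtering returns nothing, it can fall back to a more embedding-heavy strategy on the next iteration.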

This strategic layer transforms RAG from a simple retrieval mechanism into an intelligent search orchestrator that balances semantic understanding with exact-match precision, which is what modern applications demand.

Conclusion

Both simple RAG and agentic RAG have distinct strengths, making them suitable for different use cases depending on the needs of the application. Simple RAG excels where speed and simplicity are paramount, such as real-time chatbots or voice-to-voice inquiries. In customer-facing applications where low latency is critical to avoid frustrating users, its one-shot approach provides quick, straightforward responses with minimal computational overhead. Agentic RAG, on the other hand, shines in domains where recall and accuracy cannot be compromised, such as enterprise or government applications. Its iterative loops and hybrid search capabilities help produce accurate, verified output, making it well suited to complex queries that require deep reasoning or dynamic data synthesis. By understanding the trade-offs between speed and depth, and between simplicity and precision, AI engineers can choose the right approach for systems that meet specific demands while pushing the boundaries of intelligent automation. What do you think? Leave a comment if you’ve experimented with these methods!

Credits for some of the images used:

- https://ai.plainenglish.io/building-agentic-rag-with-langgraph-mastering-adaptive-rag-for-production-c2c4578c836a

- https://medium.com/@drjulija/what-is-retrieval-augmented-nation-rag-938e4f6e03d1
