Image by author

# Introduction

AI has moved beyond simply chatting with large language models (LLMs); we now give them the hands and feet they need to act in the digital world. These are often called Python AI agents: autonomous software programs powered by LLMs that can sense their environment, make decisions, use external tools (such as APIs or code execution), and take actions to achieve specific goals without constant human intervention.

If you want to experiment with creating your own AI agent but feel overwhelmed by complex frameworks, you are in the right place. Today we are going to look at smolagents, a powerful yet remarkably simple library developed by Hugging Face.

By the end of this article, you will understand what makes smolagents unique and, more importantly, you will have a working code agent that can fetch live data from the internet. Let's explore the implementation.

# Understanding Code Agents

Before we start coding, let’s understand the concept. An agent is essentially an LLM equipped with tools. You give the model a goal (like “get the current weather in London”), and it decides which tools to use to achieve that goal.

What makes the agents in Hugging Face's smolagents library special is their approach to reasoning. Unlike many frameworks, which generate JSON or text to decide which tool to use, smolagents centers on code agents: they write Python code snippets to chain their tools and logic together.

This is powerful because code is precise. It is the most natural way to express complex instructions such as loops, conditionals, and data manipulation. Instead of guessing how to connect tools, the LLM simply writes a Python script to do it. As an open-source agent framework, smolagents is transparent, lightweight, and perfect for learning the basics.
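To make the contrast concrete, here is a toy sketch in plain Python. It is not how smolagents works internally: `get_weather` is a hypothetical stub that returns canned text, and `dispatch_json_action` and `run_code_action` are illustrative names. A JSON-style agent emits one tool call per step, while a code agent can cover both cities in a single snippet with an ordinary loop.

```python
import json


def get_weather(city: str) -> str:
    """Hypothetical stub standing in for a real weather tool."""
    return f"{city}: sunny"


TOOLS = {"get_weather": get_weather}


def dispatch_json_action(action_json: str) -> str:
    """JSON-style action: the LLM names exactly one tool and its arguments per step."""
    action = json.loads(action_json)
    return TOOLS[action["name"]](**action["arguments"])


def run_code_action(code: str) -> dict:
    """Code-style action: the LLM writes Python that can call tools, loop, and branch.
    Exposing only the tools in the namespace is NOT a security sandbox."""
    namespace = dict(TOOLS)
    exec(code, namespace)
    return namespace


# JSON agent: two cities means two separate tool-call steps
step1 = dispatch_json_action('{"name": "get_weather", "arguments": {"city": "Paris"}}')
step2 = dispatch_json_action('{"name": "get_weather", "arguments": {"city": "Tokyo"}}')

# Code agent: one snippet handles both cities with a plain loop
result = run_code_action(
    "reports = [get_weather(c) for c in ['Paris', 'Tokyo']]\n"
    "summary = '; '.join(reports)"
)
print(step1, "|", step2)
print(result["summary"])
```

Handing `exec` a namespace that contains only the tools limits what the snippet sees by name, but it is not a security boundary; real frameworks add their own safeguards around generated code.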

// Prerequisites

To follow along, you will need:

- Python knowledge. You should be comfortable with variables, functions, and `pip install`.

- A Hugging Face token. Since we are using the Hugging Face ecosystem, we will use Hugging Face's Inference API, which has a free tier. You can get a token by signing up on huggingface.co and going to your settings.

- A Google account (optional). If you don't want to install anything locally, you can run this code in a Google Colab notebook.

# Setting Up Your Environment

Let's prepare our workspace. Open your terminal (or a new Colab notebook) and create a project folder:

```bash
mkdir demo-project
cd demo-project
```

Next, let's set up our security token. It is best to store it as an environment variable. If you're using Google Colab, you can use the Secrets tab in the left panel to add `HF_TOKEN` and then access it through `userdata.get('HF_TOKEN')`.
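If you want one script that works both in Colab and locally, a small helper can pick the right source for the token. This is a sketch: `load_hf_token` is a hypothetical name, and it assumes the secret is called `HF_TOKEN` in both places. Outside Colab, the `google.colab` import fails, so the helper falls back to an ordinary environment variable (exported in your shell or loaded from a `.env` file).

```python
import os
from typing import Optional


def load_hf_token() -> Optional[str]:
    """Return HF_TOKEN from Colab Secrets inside Colab, otherwise from the environment."""
    try:
        # This import only succeeds inside a Google Colab runtime
        from google.colab import userdata
    except ImportError:
        # Local run: expect HF_TOKEN to be exported or loaded from a .env file
        return os.environ.get("HF_TOKEN")
    return userdata.get("HF_TOKEN")


if __name__ == "__main__":
    token = load_hf_token()
    print("HF_TOKEN found" if token else "HF_TOKEN is not set")
```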

# Creating Your First Agent: The Weather Fetcher

For our first project, we will create an agent that can fetch weather data for a given city. To do this, the agent needs a tool. A tool is just a function that an LLM can call. We will use a free, public weather service called wttr.in, which can return weather data as plain text or JSON.
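wttr.in's one-line report is controlled by format codes in the query string: `%C` is the textual weather condition and `%t` is the temperature, with `+` acting as a space between fields. The sketch below builds such a URL explicitly without making any network call. `build_wttr_url` is a hypothetical helper, and percent-encoding the city with `urllib.parse.quote` is our assumption about how to pass names containing spaces or accents; how the service resolves each encoded name is up to wttr.in.

```python
from urllib.parse import quote

WTTR_BASE = "https://wttr.in"


def build_wttr_url(city: str, fmt: str = "%C+%t") -> str:
    """Build a wttr.in URL for a one-line report (hypothetical helper).

    %C = textual weather condition, %t = temperature, '+' = space between fields.
    The city name is percent-encoded so spaces and non-ASCII characters survive.
    """
    return f"{WTTR_BASE}/{quote(city)}?format={fmt}"


print(build_wttr_url("Paris"))
print(build_wttr_url("New York"))
```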

// Install and setup

Create a virtual environment:

`python -m venv env`

A virtual environment isolates your project's dependencies from your system-wide Python installation. Now, let's activate the virtual environment.

Windows: `env\Scripts\activate`

macOS/Linux: `source env/bin/activate`

You will see `(env)` in your terminal prompt once it is activated.

Install required packages:

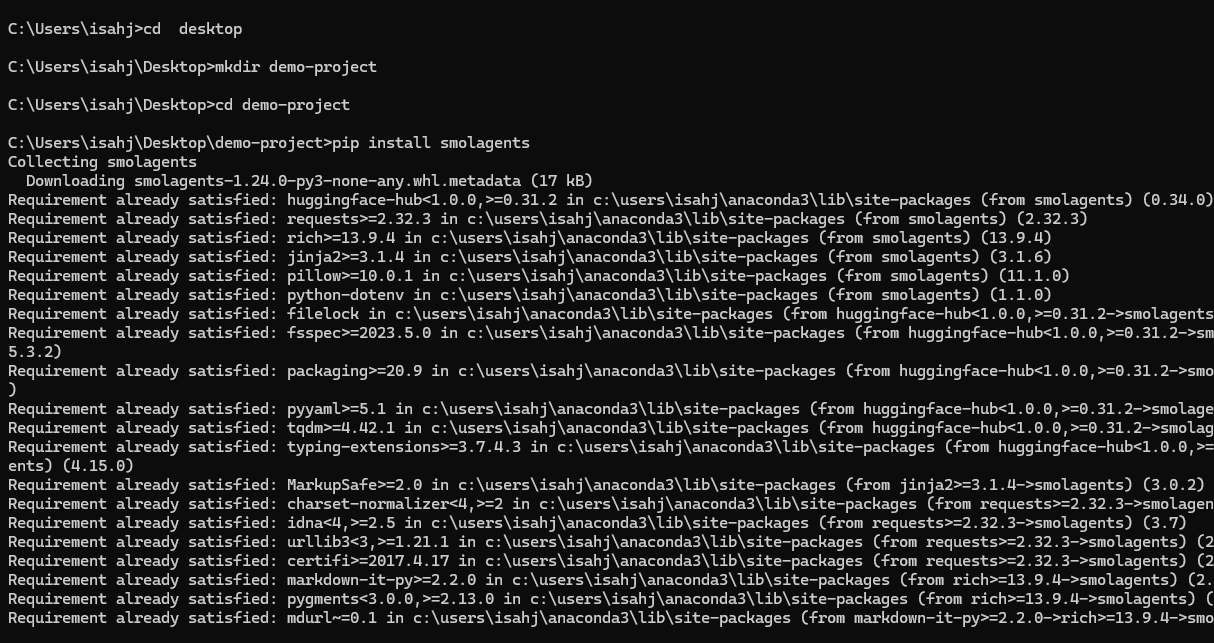

```bash
pip install smolagents requests python-dotenv
```

We're installing smolagents, Hugging Face's lightweight framework for building AI agents with tool-use capabilities; requests, an HTTP library for making API calls; and python-dotenv, which loads environment variables from a `.env` file.

That's it: everything installs with a single command. This simplicity is a core part of the smolagents philosophy.

Figure 1: Installing smolagents
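To confirm the installation worked, you can ask Python which versions are installed. This small standard-library sketch uses `importlib.metadata`; `installed_versions` is a hypothetical helper name. Checking the smolagents version is worthwhile because class names have changed between releases (older versions exposed `HfApiModel`, while newer ones use `InferenceClientModel`).

```python
from importlib import metadata
from typing import Dict, Iterable, Optional


def installed_versions(packages: Iterable[str]) -> Dict[str, Optional[str]]:
    """Return the installed version of each distribution, or None if it is missing."""
    versions: Dict[str, Optional[str]] = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None
    return versions


print(installed_versions(["smolagents", "requests", "python-dotenv"]))
```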

// Setting up your API token

create a .env File in your project root and paste this code. Please replace the placeholder with your actual token:

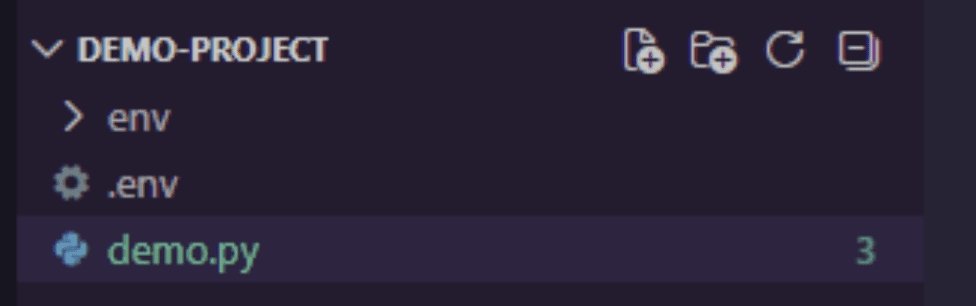

HF_TOKEN=your_huggingface_token_hereGet your token from Huggingface.co/settings/tokens. Your project structure should look like this:

Figure 2: Project Structure
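If you're curious what python-dotenv does with this file, the sketch below is a deliberately minimal stand-in, not a replacement. `load_env_file` is a hypothetical helper that handles only `KEY=VALUE` lines, `#` comments, and simple surrounding quotes, whereas python-dotenv also supports features such as `export` prefixes and variable expansion. Like python-dotenv's default behavior, it does not overwrite variables that are already set.

```python
import os
from pathlib import Path
from typing import Dict


def load_env_file(path: str = ".env") -> Dict[str, str]:
    """Minimal .env reader: KEY=VALUE lines; blank lines and '#' comments are skipped."""
    loaded: Dict[str, str] = {}
    for raw in Path(path).read_text(encoding="utf-8").splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, value = line.split("=", 1)
        # Drop whitespace and one style of surrounding quotes
        key, value = key.strip(), value.strip().strip('"').strip("'")
        loaded[key] = value
        # Keep any value already present in the environment
        os.environ.setdefault(key, value)
    return loaded
```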

// Importing the libraries

Open your `demo.py` file and paste in the following code:

```python
import os

import requests
from dotenv import load_dotenv
from smolagents import tool, CodeAgent, InferenceClientModel

# Read HF_TOKEN from the .env file into the process environment
load_dotenv()
```

- `requests`: makes HTTP calls to the weather API.
- `os`: reads environment variables safely.
- `dotenv.load_dotenv`: loads the variables defined in your `.env` file so that `os.getenv` can see them.
- `smolagents`: Hugging Face's lightweight agent framework, which provides:
  - `@tool`: a decorator for defining agent-callable functions.
  - `CodeAgent`: an agent that writes and executes Python code.
  - `InferenceClientModel`: connects to LLMs hosted through Hugging Face's inference service.

In smolagents, defining a tool is straightforward. We will create a function that takes a city name as input and returns the current weather conditions. Add the following code to your `demo.py` file:

```python
@tool
def get_weather(city: str) -> str:
    """
    Returns the current weather forecast for a specified city.

    Args:
        city: The name of the city to get the weather for.
    """
    # wttr.in is a free weather service; %C is the condition and %t the temperature
    response = requests.get(f"https://wttr.in/{city}?format=%C+%t", timeout=10)

    if response.status_code == 200:
        # The response is plain text like "Partly cloudy +15°C"
        return f"The weather in {city} is: {response.text.strip()}"
    else:
        return "Sorry, I couldn't fetch the weather data."
```

Let's break it down:

- We import the `tool` decorator from smolagents. This decorator turns a regular Python function into a tool that the agent can understand and use.
- The docstring (`""" ... """`) in the `get_weather` function is important. The agent reads this description to understand what the tool does and how to use it.
- Inside the function, we make a simple HTTP request to wttr.in, a free weather service that returns forecasts in plain text.
- The type hints (`city: str`) tell the agent what input to provide.

This tool is a perfect example of tool calling in action: we're giving the agent a new capability.
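To see why the docstring and type hints matter, here is a rough, standard-library-only sketch of the kind of description a tool decorator can derive from a function. `describe_tool` is a hypothetical helper and the output shape is our own; smolagents' real `@tool` performs its own parsing and validation, so treat this as an approximation of the idea rather than the library's behavior. The `get_weather` here is an offline stub without the decorator.

```python
import inspect


def describe_tool(func) -> dict:
    """Build a simplified tool description from a function's signature and docstring."""
    sig = inspect.signature(func)
    doc = inspect.getdoc(func) or ""
    summary, _, args_section = doc.partition("Args:")
    # Map each "name: description" line in the Args section to its text
    arg_docs = {}
    for line in args_section.splitlines():
        name, sep, text = line.strip().partition(":")
        if sep:
            arg_docs[name.strip()] = text.strip()
    inputs = {
        name: {"type": param.annotation.__name__, "description": arg_docs.get(name, "")}
        for name, param in sig.parameters.items()
    }
    return {
        "name": func.__name__,
        "description": summary.strip(),
        "inputs": inputs,
        "output_type": sig.return_annotation.__name__,
    }


def get_weather(city: str) -> str:
    """
    Returns the current weather forecast for a specified city.

    Args:
        city: The name of the city to get the weather for.
    """
    return f"The weather in {city} is: sunny"  # offline stub, no HTTP call


print(describe_tool(get_weather))
```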

// Configuring the LLM

```python
hf_token = os.getenv("HF_TOKEN")
if hf_token is None:
    raise ValueError("Please set the HF_TOKEN environment variable")

model = InferenceClientModel(
    model_id="Qwen/Qwen2.5-Coder-32B-Instruct",
    token=hf_token,
)
```

The agent needs a brain: a large language model (LLM) that can reason about actions. Here we use:

- `Qwen2.5-Coder-32B-Instruct`: a powerful, code-focused model hosted on Hugging Face.
- `HF_TOKEN`: your Hugging Face API token, kept in a `.env` file so it stays out of your source code.

Now, we need to create the agent itself.

```python
agent = CodeAgent(
    tools=[get_weather],
    model=model,
    add_base_tools=False,
)
```

`CodeAgent` is a special agent type that:

- Writes Python code to solve problems

- Executes that code in a restricted Python interpreter

- Can chain multiple tool calls together

Here, we instantiate a `CodeAgent`, passing it a list containing our `get_weather` tool, along with the model object. Setting `add_base_tools=False` means no default tools are included, keeping our agent simple for now.

// Running the agent

This is the exciting part. Let's give our agent a task by running it with a specific prompt:

response = agent.run(

"Can you tell me the weather in Paris and also in Tokyo?"

)

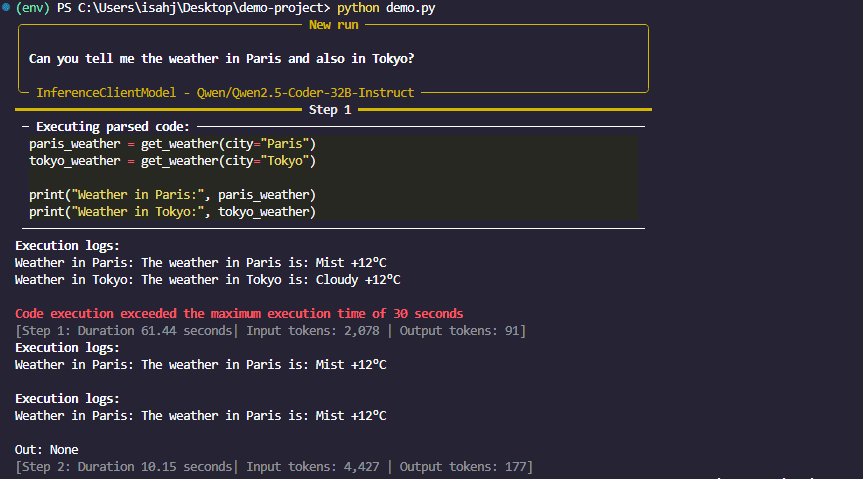

print(response)when you call agent.run()Agent:

- Your sign reads.

- Because of what equipment it requires.

- caller generates code

get_weather("Paris")Andget_weather("Tokyo"). - Executes the code and returns the result.

Figure 3: Smallagent reaction

When you run this code, you will see a Hugging Face agent in action. The agent receives your request and sees that it has a tool called `get_weather`. It then uses the LLM, its "brain", to write a small Python script that looks something like this. This is code the agent generates, not code you write:

```python
weather_paris = get_weather(city="Paris")
weather_tokyo = get_weather(city="Tokyo")
final_answer(f"Here is the weather: {weather_paris} and {weather_tokyo}")
```

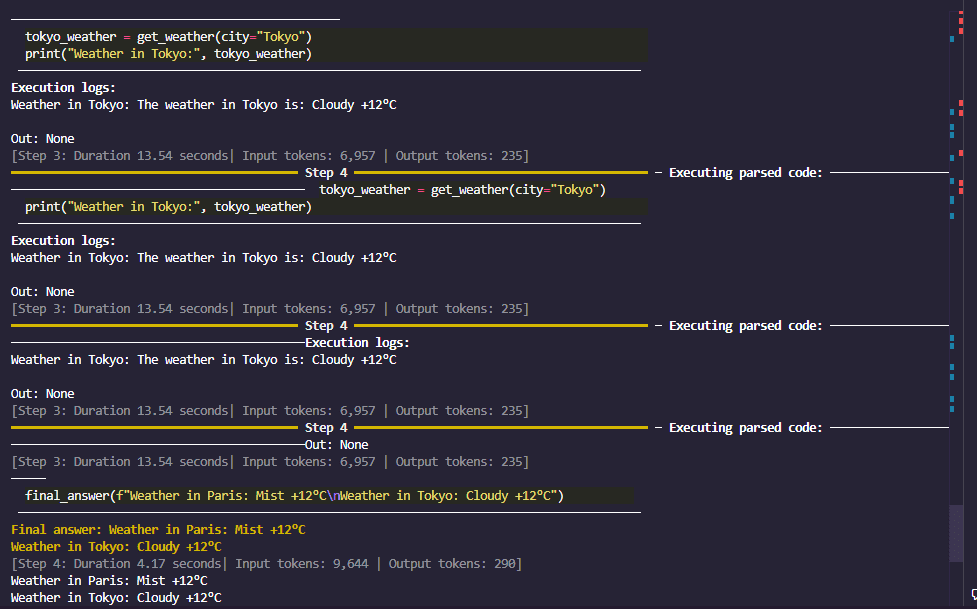

Figure 4: smolagents final response

It executes this code, fetches the data, and returns a friendly reply. You have just created a code agent that can fetch live data from the web through an API.
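The loop described above can be sketched in a few lines of plain Python. This is a toy under clear assumptions, not smolagents' implementation: `scripted_llm` is a hypothetical stand-in for the model, `get_weather` is an offline stub, and the bare `exec` call provides no sandboxing at all. It does show the core idea: the model's output is code, the code's printed output becomes the next observation, and calling `final_answer` ends the run.

```python
import contextlib
import io
from typing import Callable, Dict, List


class FinalAnswer(Exception):
    """Raised by final_answer() to stop the loop and carry the result out."""

    def __init__(self, value):
        super().__init__(value)
        self.value = value


def final_answer(value):
    raise FinalAnswer(value)


def run_toy_agent(task: str, llm: Callable[[str], str],
                  tools: Dict[str, Callable], max_steps: int = 5):
    """Toy loop: the 'LLM' writes code, we run it, printed output becomes the next observation."""
    memory: List[str] = [f"Task: {task}"]
    for _ in range(max_steps):
        code = llm("\n".join(memory))                  # 1. model writes a Python snippet
        namespace = {**tools, "final_answer": final_answer}
        buffer = io.StringIO()
        try:
            with contextlib.redirect_stdout(buffer):
                exec(code, namespace)                  # 2. run it with tools in scope (no sandbox!)
        except FinalAnswer as done:
            return done.value                          # 3a. the snippet produced an answer
        memory.append(f"Observation: {buffer.getvalue().strip()}")  # 3b. feed results back
    raise RuntimeError("No final answer within max_steps")


# Hypothetical stand-ins so the sketch runs offline
def get_weather(city: str) -> str:
    return f"{city}: sunny"


def scripted_llm(prompt: str) -> str:
    """Fake LLM: gather data first, then answer once an observation exists."""
    if "Observation:" not in prompt:
        return 'print(get_weather(city="Paris"))\nprint(get_weather(city="Tokyo"))'
    return 'final_answer("Paris and Tokyo are both sunny")'


answer = run_toy_agent("What is the weather in Paris and Tokyo?", scripted_llm,
                       {"get_weather": get_weather})
print(answer)
```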

// How it works behind the scenes

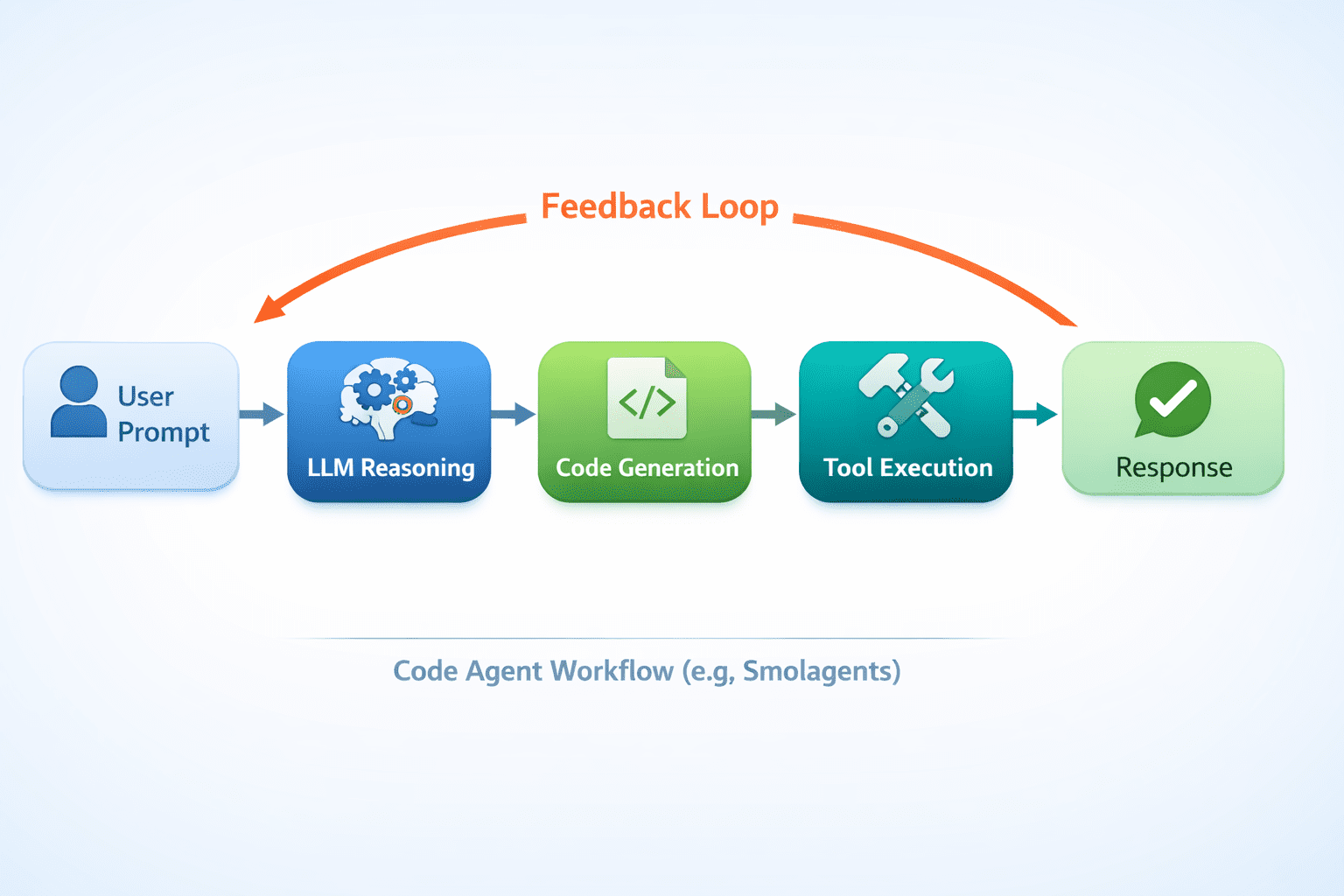

Figure 5: Internal workings of an AI code agent

// Taking it further: adding more tools

An agent's power grows with its toolkit. What if we want to save the weather report to a file? We can create another tool.

```python
@tool
def save_to_file(content: str, filename: str = "weather_report.txt") -> str:
    """
    Saves the provided text content to a file.

    Args:
        content: The text content to save.
        filename: The name of the file to save to (default: weather_report.txt).
    """
    # utf-8 so characters such as "°" are written the same way on every OS
    with open(filename, "w", encoding="utf-8") as f:
        f.write(content)
    return f"Content successfully saved to {filename}"


# Re-initialize the agent with both tools
agent = CodeAgent(
    tools=[get_weather, save_to_file],
    model=model,
)

agent.run("Get the weather for London and save the report to a file called london_weather.txt")
```

Now your agent can fetch data and interact with your local file system. This combination of skills is what makes Python AI agents so versatile.
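Because the LLM chooses the filename, it can be worth confining writes to a single folder. The sketch below is a hypothetical hardened variant, not part of smolagents: `save_to_file_safely` and the default `reports` folder are our own names, and it is shown undecorated so it runs without the library. To expose it to the agent, you would add `@tool` and document every argument under `Args:` as `save_to_file` does.

```python
from pathlib import Path


def save_to_file_safely(content: str, filename: str, base_dir: str = "reports") -> str:
    """Write content to filename, but only inside base_dir (hypothetical hardened variant)."""
    base = Path(base_dir).resolve()
    target = (base / filename).resolve()
    # Reject names like '../escape.txt' or absolute paths that resolve outside base_dir
    if not target.is_relative_to(base):  # Path.is_relative_to needs Python 3.9+
        raise ValueError(f"Refusing to write outside {base}: {filename}")
    target.parent.mkdir(parents=True, exist_ok=True)
    target.write_text(content, encoding="utf-8")
    return f"Content successfully saved to {target}"
```

With this in place, a request such as `save_to_file_safely("notes", "../notes.txt")` raises `ValueError` instead of writing outside the folder.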

# Conclusion

In just a few minutes and with fewer than 20 lines of core logic, you have created a functional AI agent. We've seen how smolagents simplifies the process of creating code agents that write and execute Python to solve problems.

The beauty of this open-source agent framework is that it removes the boilerplate, so you can focus on the fun part: building tools and defining tasks. You're no longer just chatting with an AI; you're collaborating with one that can do the work. This is just the beginning. Next, you could explore giving your agent internet access through a search API, connecting it to a database, or letting it control a web browser.


Shittu Olumide is a software engineer and technical writer passionate about leveraging cutting-edge technologies to craft compelling narratives, with a keen eye for detail and a knack for simplifying complex concepts. You can also find Shittu on Twitter.