Google has officially shifted the Gemini era into high gear with the release of Gemini 3.1 Pro, the first version update in the Gemini 3 series. This release is not just a minor patch; it is a targeted push into the ‘agentic’ AI market, focusing on reasoning consistency, software engineering and tool-use reliability.

For developers, this update signals a change. We are moving from models that ‘just chat’ to models that ‘work’. Gemini 3.1 Pro is designed as the core engine for autonomous agents that can navigate file systems, execute code and reason through scientific problems with a success rate that now rivals, and in some cases even surpasses, the industry’s most elite frontier models.

Massive Context, Precise Output

One of the most immediate technical advances is how the model handles scale. Gemini 3.1 Pro Preview maintains a massive 1M-token input context window. To put this into perspective for software engineers: you can now feed the model an entire medium-sized code repository, and it will have enough ‘memory’ to understand cross-file dependencies without losing the plot.

However, the real news is the 65k-token output limit. This 65k window is a significant leap forward for developers building long-form generators. Whether you’re producing a 100-page technical manual or a complex, multi-module Python application, the model can now finish the job in a single turn without abruptly hitting a ‘maximum tokens’ wall.
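As a rough illustration of what these limits mean in practice, the sketch below estimates whether a set of source files fits in the input window. It is a back-of-the-envelope check, not an official tokenizer: the 4-characters-per-token ratio is a common approximation, the limits are the rounded figures quoted in this article, and the function names are hypothetical.

```python
# Rounded limits as quoted in the article (assumption: exact API limits may differ).
INPUT_LIMIT_TOKENS = 1_000_000
OUTPUT_LIMIT_TOKENS = 65_000

# Assumption: roughly 4 characters per token, a heuristic rather than a real tokenizer.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Estimate the token count of a string using ceiling division."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(texts, reserved_tokens: int = 0) -> bool:
    """Return True if all texts plus a reserved prompt budget fit the input window."""
    total = sum(estimate_tokens(t) for t in texts)
    return total + reserved_tokens <= INPUT_LIMIT_TOKENS


if __name__ == "__main__":
    repo_files = ["def main():\n    pass\n" * 1000, "# README\n" * 500]
    print(fits_in_context(repo_files, reserved_tokens=2_000))
```

For a real budget check, a token-counting call against the actual model would replace the character heuristic.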

Doubling the Reasoning Power

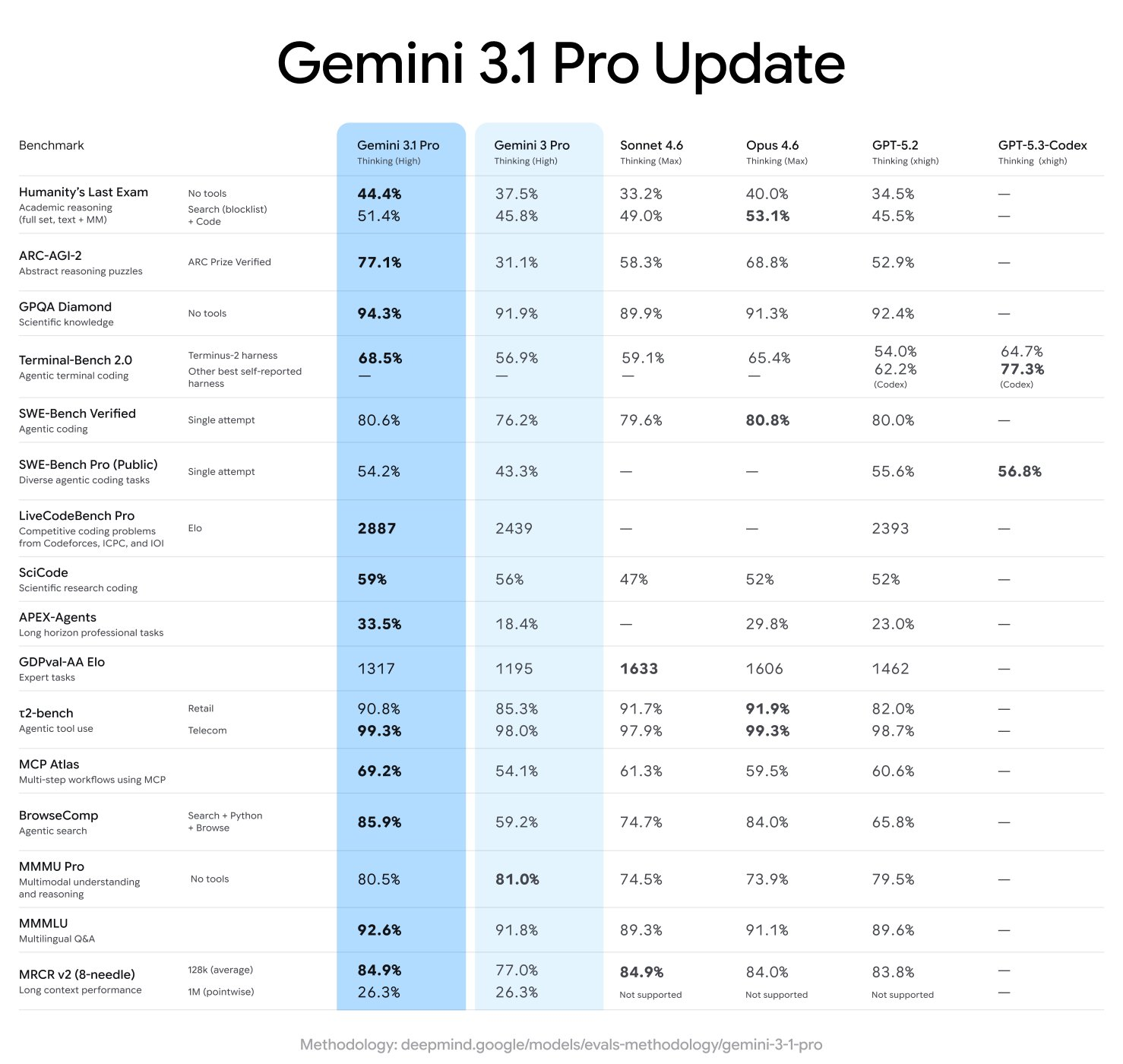

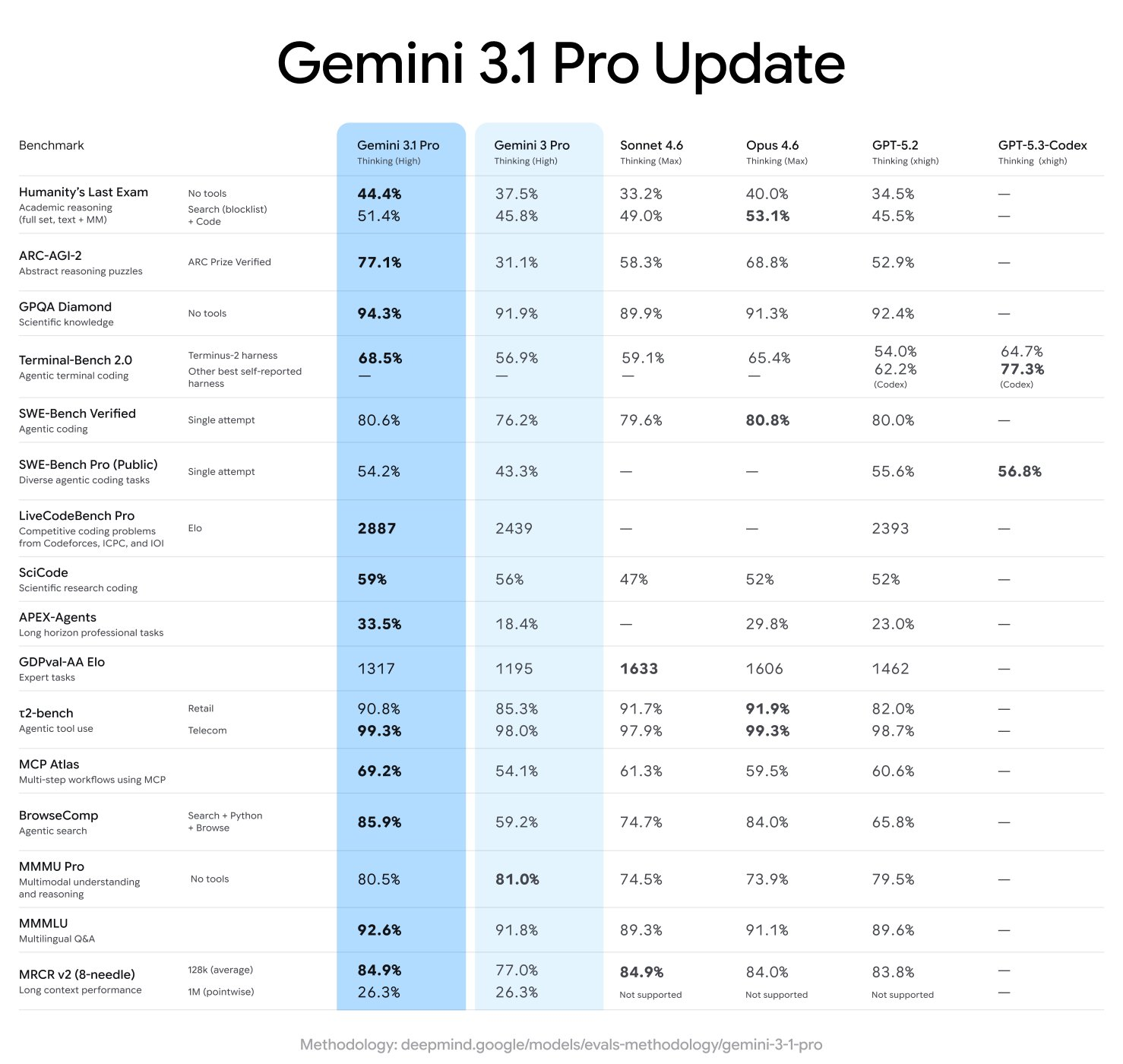

If Gemini 3.0 was about introducing ‘critical thinking’, Gemini 3.1 is about making that thinking efficient. The performance gains on rigorous benchmarks are notable:

| Benchmark | Score | What it measures |
| --- | --- | --- |
| ARC-AGI-2 | 77.1% | Ability to solve entirely novel logic patterns |
| GPQA Diamond | 94.1% | Graduate-level scientific reasoning |
| SciCode | 58.9% | Python programming for scientific computing |
| Terminal-Bench Hard | 53.8% | Agentic coding and terminal use |
| Humanity’s Last Exam (HLE) | 44.7% | Reasoning at near-human limits |

The 77.1% on ARC-AGI-2 is the headline figure here. The Google team claims it represents more than double the reasoning performance of the original Gemini 3 Pro. This suggests the model is less likely to rely on matching patterns from its training data and is better able to ‘figure it out’ when it encounters a new edge case in a dataset.

Agentic Toolkit: Custom Tools and Antigravity

The Google team is making a clear move into the developer’s terminal. Along with the main model, they launched a specialized endpoint: `gemini-3.1-pro-preview-customtools`.

This endpoint is optimized for developers who mix bash commands with custom functions. In previous versions, models often struggled to decide which tool to use, sometimes hallucinating a search when reading a local file would have sufficed. The `customtools` variant is specifically tuned to prioritize tools such as `view_file` or `search_code`, which makes it a more reliable backbone for autonomous coding agents.

This release also integrates deeply with Google Antigravity, the company’s new agentic development platform. Developers can now use a new ‘medium’ thinking level. This lets you tune the ‘reasoning budget’: use high-depth thinking for complex debugging, and switch to medium or low for standard API calls to save latency and cost.
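One way to picture the reasoning budget is a small routing table that picks a thinking level per task. The sketch below is illustrative only: the task categories and the `pick_thinking_level` helper are hypothetical, and how the chosen level is passed to the API is deliberately left out.

```python
# The three levels named in the article; the task categories below are hypothetical.
THINKING_LEVELS = ("low", "medium", "high")

# Assumption: an application-defined mapping from task kind to thinking level.
TASK_LEVELS = {
    "complex_debugging": "high",   # deep reasoning, higher latency and cost
    "code_review": "medium",       # balanced default
    "standard_api_call": "low",    # fast and cheap
}


def pick_thinking_level(task_kind: str, default: str = "medium") -> str:
    """Return the thinking level for a task kind, falling back to a default."""
    level = TASK_LEVELS.get(task_kind, default)
    if level not in THINKING_LEVELS:
        raise ValueError(f"unknown thinking level: {level!r}")
    return level


if __name__ == "__main__":
    for kind in ("complex_debugging", "standard_api_call", "summarize_log"):
        print(kind, "->", pick_thinking_level(kind))
```

Keeping the mapping in one place makes it easy to adjust the trade-off between latency, cost and depth as real usage data comes in.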

API Breaking Changes and New File Methods

For those already building on the Gemini API, there is a small but important breaking change. In the Interactions API v1beta, the field `total_reasoning_tokens` has been renamed `total_thought_tokens`. This change aligns with the ‘thought signatures’ introduced in the Gemini 3 family: encrypted representations of the model’s internal reasoning that must be passed back to the model to maintain context in multi-turn agentic workflows.
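During a migration, code that reads usage metadata may see either field name. The sketch below shows one defensive way to handle the rename. It assumes the usage data is available as a plain dictionary, which is an illustration rather than the documented response shape, and the helper name is hypothetical.

```python
def thought_token_count(usage: dict) -> int:
    """Read the thought-token count, preferring the new field name.

    Assumption: `usage` is a plain dict of usage metadata; the real
    response object may expose these fields differently.
    """
    for key in ("total_thought_tokens", "total_reasoning_tokens"):
        value = usage.get(key)
        if value is not None:
            return int(value)
    return 0  # neither field present


if __name__ == "__main__":
    print(thought_token_count({"total_thought_tokens": 512}))    # new name
    print(thought_token_count({"total_reasoning_tokens": 256}))  # old name
```

Once every caller is on the new field, the fallback to the old name can be removed.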

The model’s appetite for data has also increased. Major updates to file management include:

- 100MB File Limit: The API upload limit has quintupled, from the previous 20MB to 100MB.

- Direct YouTube Support: You can now pass a YouTube URL as a direct media source. The model ‘watches’ the video via the URL rather than requiring a manual upload.

- Cloud Integration: Support for cloud storage buckets and pre-signed URLs for private databases as direct data sources.
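The file-handling updates above suggest a simple pre-flight step before sending media to the model. The sketch below classifies a source as a YouTube URL, another remote URL, or a local file, and checks a size against the 100MB limit. All names are hypothetical, the host list is an assumption, and treating 100MB as 100,000,000 bytes is a guess at how the limit is counted.

```python
from urllib.parse import urlparse

# Assumption: "100MB" is interpreted as 100 * 10^6 bytes.
MAX_UPLOAD_BYTES = 100 * 1000 * 1000

# Assumption: hostnames treated as YouTube links for this sketch.
YOUTUBE_HOSTS = {"youtube.com", "www.youtube.com", "youtu.be"}


def classify_source(source: str) -> str:
    """Classify a media source as 'youtube_url', 'remote_url' or 'local_file'."""
    parsed = urlparse(source)
    if parsed.scheme in ("http", "https"):
        host = (parsed.hostname or "").lower()
        return "youtube_url" if host in YOUTUBE_HOSTS else "remote_url"
    return "local_file"


def fits_upload_limit(size_bytes: int, limit: int = MAX_UPLOAD_BYTES) -> bool:
    """Return True if a file of the given size is within the upload limit."""
    return 0 <= size_bytes <= limit


if __name__ == "__main__":
    print(classify_source("https://www.youtube.com/watch?v=example"))
    print(classify_source("report.pdf"), fits_upload_limit(25_000_000))
```

For local files, `os.path.getsize(path)` would supply the size to check before attempting an upload.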

The Economics of Intelligence

Pricing for the Gemini 3.1 Pro Preview remains aggressive. For prompts under 200k tokens, input costs $2 per 1 million tokens and output costs $12 per 1 million. For contexts over 200k, prices rise to $4 for input and $18 for output.
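To make the two pricing tiers concrete, here is a minimal cost estimator using the rates quoted above. It assumes the tier is chosen by the prompt's input token count and applies to the whole request, and that a prompt of exactly 200k tokens falls in the lower tier; the article does not specify either point, so treat both as assumptions.

```python
# Rates in USD per 1 million tokens, as quoted in the article.
STANDARD_RATES = {"input": 2.0, "output": 12.0}
LONG_CONTEXT_RATES = {"input": 4.0, "output": 18.0}

# Assumption: the tier is selected by input (prompt) token count.
LONG_CONTEXT_THRESHOLD = 200_000


def estimate_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Estimate the cost of one request in USD under the two-tier pricing."""
    if input_tokens > LONG_CONTEXT_THRESHOLD:
        rates = LONG_CONTEXT_RATES
    else:
        rates = STANDARD_RATES  # assumption: exactly 200k counts as standard
    total = input_tokens * rates["input"] + output_tokens * rates["output"]
    return total / 1_000_000


if __name__ == "__main__":
    print(f"${estimate_cost_usd(100_000, 10_000):.2f}")  # standard tier
    print(f"${estimate_cost_usd(500_000, 10_000):.2f}")  # long-context tier
```

Under these assumptions, a 100k-token prompt with a 10k-token response comes to $0.32, while a 500k-token prompt with the same response comes to $2.18.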

Compared with competitors such as Claude Opus 4.6 or GPT-5.2, the Google team is positioning Gemini 3.1 Pro as an ‘efficiency leader’. According to data from Artificial Analysis, Gemini 3.1 Pro now holds the top spot on their Intelligence Index, while costing roughly half as much to run as its closest competitors.

Key Takeaways

- Huge 1M/65k Context Window: The model maintains a 1M-token input window for massive datasets and repositories, while significantly raising the output limit to 65k tokens for long-form code and document generation.

- A Leap in Logic and Reasoning: Performance on the ARC-AGI-2 benchmark reached 77.1%, which represents more than double the reasoning capability of the previous version. The model also achieved 94.1% on GPQA Diamond for graduate-level science tasks.

- Dedicated Agent Endpoint: The Google team introduced a specialized `gemini-3.1-pro-preview-customtools` endpoint. It is specifically tuned to prioritize bash commands and system tools (such as `view_file` and `search_code`) for more reliable autonomous agents.

- API Breaking Changes: Developers must update their codebases, as the field `total_reasoning_tokens` has been renamed `total_thought_tokens` in the v1beta Interactions API to better align with the model’s internal ‘thought’ processing.

- Advanced File and Media Handling: The API file size limit has increased from 20MB to 100MB. Additionally, developers can now pass a YouTube URL directly in the prompt, allowing the model to analyze video content without downloading or re-uploading files.
