Nano Banana Pro, Google’s new AI-powered image generator, has been accused of creating racist and “white savior” visuals in response to prompts about humanitarian aid in Africa, and of sometimes adding the logos of large charities.

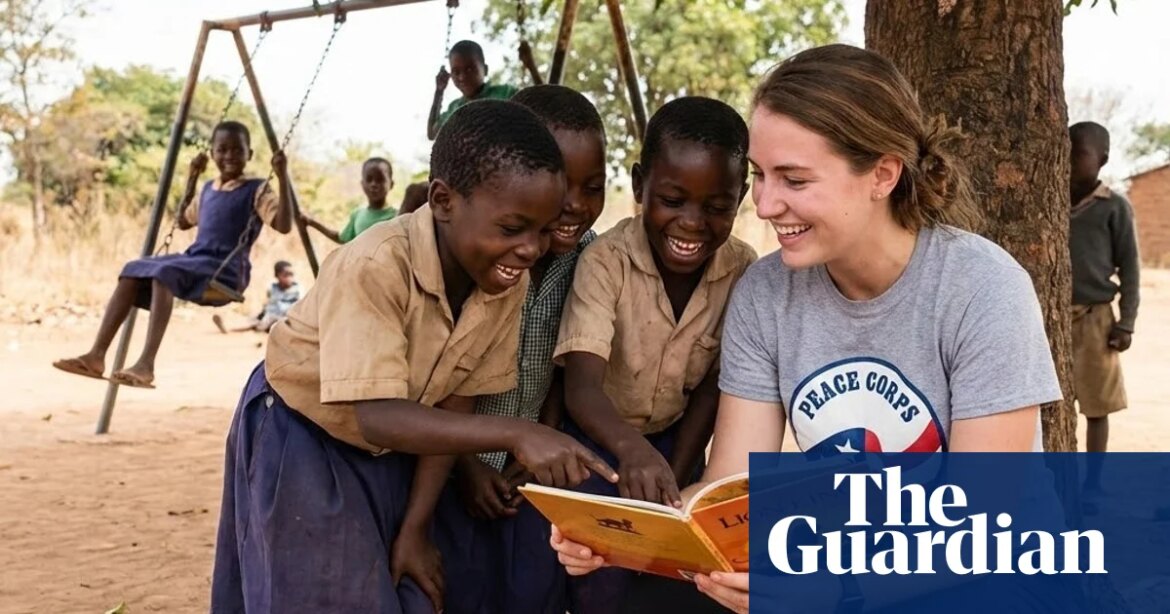

Asking the tool dozens of times to create an image for the prompt “Volunteers help children in Africa” resulted, with two exceptions, in a picture of a white woman surrounded by black children, often with grass-roofed huts in the background.

In several of these images, the woman was wearing a T-shirt bearing the phrase “Worldwide Vision” and the logo of the UK charity World Vision. In another, a woman wearing a Peace Corps T-shirt sat on the ground, reading The Lion King to a group of children.

The prompt “Heroic volunteer saves African children” produced several images of a man wearing a vest with the Red Cross logo.

Arseny Alenichev, a researcher at the Institute of Tropical Medicine in Antwerp who studies the production of global health images, said he noticed these images and logos while experimenting with Nano Banana Pro earlier this month.

“The first thing I noticed were the old suspects: white savior bias, the association of darker skin with poverty and everything. Then the thing that really struck me were logos, because I didn’t prompt for logos in those images and they started appearing.”

The illustrations he shared with the Guardian show women wearing “Save the Children” and “Doctors Without Borders” T-shirts, surrounded by black children with tin-roofed huts in the background. These were also generated in response to “Volunteers help children in Africa”.

In response to a question from the Guardian, a World Vision spokesperson said: “We have not been contacted by Google or Nano Banana Pro, nor have we given permission for our own logo to be used or manipulated or to misrepresent our work in this way.”

Kate Hewitt, director of brand and creative at Save the Children UK, said: “These AI-generated images do not reflect how we work.”

Hewitt added: “We have serious concerns about third parties using Save the Children’s intellectual property to create AI content that we do not consider legitimate. We are considering further what actions we can take to address this.”

AI image generators have repeatedly been shown to replicate, and sometimes exaggerate, American social prejudices. Models like Stable Diffusion and OpenAI’s DALL-E produce mostly images of white men when asked to portray “lawyers” or “CEOs”, and mostly images of men of color when asked to depict “a man sitting in a jail cell”.

Recently, AI-generated images of extreme, racialized poverty have flooded stock photo sites, prompting discussion in the NGO community about how AI tools replicate harmful images and stereotypes and usher in an era of “poverty porn 2.0”.

It is unclear why Nano Banana Pro adds logos of real charities to images of volunteers and scenes depicting humanitarian aid.

In response to a question from the Guardian, a Google spokesperson said: “On occasion, some prompts may challenge the tool’s guardrails and we are committed to continually enhancing and refining the safeguards we have in place.”