Author(s): compensation

Originally published on Towards AI.

Large language models (LLMs) are powerful, but they require significant hardware resources to run locally. Many users rely on open-source models because of their accessibility, as closed-source models often come with restrictive licensing and high costs. In this blog post, I will explain how open-source LLMs run on local hardware, using DeepSeek as an example.

Installing Ollama and running LLMs locally

To get started, you need to install Ollama, which provides an easy way to run and manage LLMs locally. Follow these steps:

- Download and install Ollama from the official website: https://ollama.com

- Or install via the command line (Linux):

curl -fsSL https://ollama.com/install.sh | sh

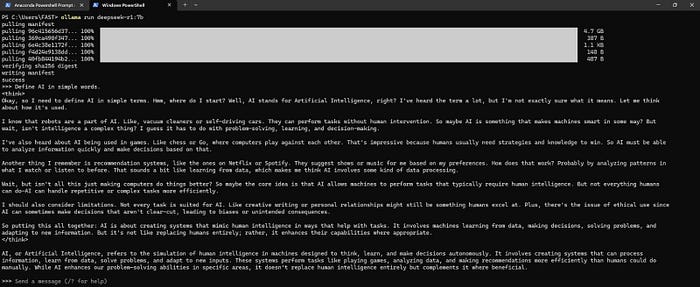

Download and run a model locally

Once Ollama is installed, you can download and run a model from the command line (CMD on Windows):

Download and run DeepSeek-R1 7B:

ollama run deepseek-r1:7b

Download and run DeepSeek-R1 32B:

ollama run deepseek-r1:32b

When you run either command, Ollama downloads the model (if it is not already present) and starts an interactive inference session.
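Besides the interactive prompt, Ollama also serves a local REST API (by default on port 11434), which is handy for scripting. The sketch below assumes that default address and uses only Python's standard library to send one non-streaming prompt to the `/api/generate` endpoint; the helper names `build_generate_payload` and `generate` are illustrative, not part of Ollama.

```python
import json
import urllib.request

# Assumption: the Ollama server is running locally on its default port.
OLLAMA_URL = "http://localhost:11434"


def build_generate_payload(model: str, prompt: str) -> bytes:
    """Encode a non-streaming request body for Ollama's /api/generate endpoint."""
    body = {"model": model, "prompt": prompt, "stream": False}
    return json.dumps(body).encode("utf-8")


def generate(model: str, prompt: str, base_url: str = OLLAMA_URL) -> dict:
    """Send one prompt to a locally running model and return the parsed JSON reply."""
    req = urllib.request.Request(
        f"{base_url}/api/generate",
        data=build_generate_payload(model, prompt),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req) as resp:
        return json.loads(resp.read().decode("utf-8"))
```

With the server running (the desktop app or `ollama serve`), `generate("deepseek-r1:7b", "Explain the KV cache in one sentence.")["response"]` should return the model's answer. Setting `"stream": False` asks Ollama for a single JSON object instead of a stream of partial chunks, which keeps the parsing simple.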

Experiment setup

I used Ollama to run two different DeepSeek models:

- DeepSeek-R1 7B (smaller model)

- DeepSeek-R1 32B (larger model)

Hardware Used:

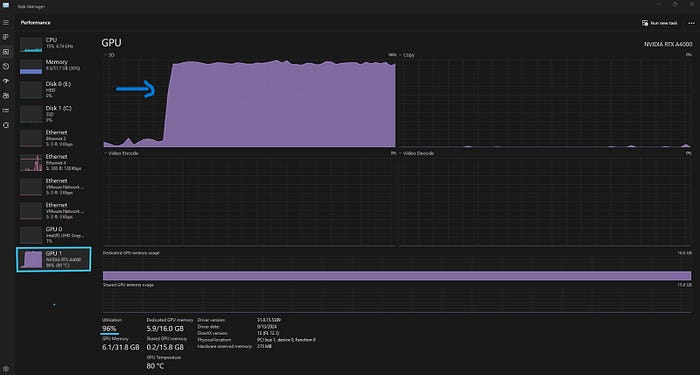

- GPU: Nvidia RTX A4000 (16GB VRAM)

- CPU: Intel Core i7-13700

- RAM: 32 GB

- Video RAM (VRAM): 32 GB

Model Storage and Execution Insights

DeepSeek-R1 7B requires 4 GB of disk storage.

When I run inference with this model, it fits comfortably within the GPU's 16 GB of VRAM. Its memory footprint grows during inference because of internal calculations (discussed below), but this growth stays within the VRAM limit, so the model runs entirely on the GPU without falling back to the CPU.
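The disk sizes quoted in this section follow roughly from parameter count times bits per weight. The sketch below assumes the Ollama tags are 4-bit quantized with some per-block overhead (about 4.8 effective bits per weight) and assumes parameter counts of about 7.6 billion and 32.8 billion; these are illustrative assumptions, not values read from the model files.

```python
def weight_bytes(n_params: float, bits_per_weight: float) -> float:
    """Approximate size of a model's weights: parameters x bits per weight / 8 bits per byte."""
    return n_params * bits_per_weight / 8


# Assumed inputs for illustration: parameter counts and ~4.8 effective bits per weight.
for name, n_params in [("DeepSeek-R1 7B", 7.6e9), ("DeepSeek-R1 32B", 32.8e9)]:
    print(f"{name}: ~{weight_bytes(n_params, 4.8) / 1e9:.1f} GB")  # ~4.6 GB and ~19.7 GB
```

Under these assumptions the estimates land close to the 4 GB and 20 GB figures. The same formula shows why quantization matters: at 16 bits (2 bytes) per weight, 32.8 billion parameters would need about 65.6 GB just for the weights.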

DeepSeek-R1 32B requires 20 GB of disk storage.

During inference, however, it needs about 48 GB of VRAM because of internal calculations, which exceeds the GPU's memory limit. As a result, Ollama automatically offloads part of the model to the CPU and runs in hybrid (CPU + GPU) mode to balance the workload and keep execution smooth.
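A simplified way to picture hybrid mode is to treat the model as a stack of equally sized layers and place as many as fit into VRAM on the GPU, leaving the rest on the CPU. The helper below is a hypothetical illustration, not Ollama's actual scheduling logic; the layer counts (64 for the 32B model, 28 for the 7B model) and the 2 GB reserved for the KV cache and runtime buffers are assumptions.

```python
from __future__ import annotations


def split_layers(n_layers: int, model_bytes: int, vram_bytes: int,
                 reserved_bytes: int) -> tuple[int, int]:
    """Hypothetical helper: (GPU layers, CPU layers) for equally sized layers.

    VRAM minus a fixed reserve is filled with whole layers; the rest stay on the CPU.
    This is a simplified model, not Ollama's real placement algorithm.
    """
    per_layer = model_bytes // n_layers
    budget = max(vram_bytes - reserved_bytes, 0)
    gpu_layers = min(budget // per_layer, n_layers)
    return gpu_layers, n_layers - gpu_layers


# Assumed values: 16 GB of VRAM with 2 GB reserved for the KV cache and buffers.
print(split_layers(64, 20_000_000_000, 16_000_000_000, 2_000_000_000))  # (44, 20)
print(split_layers(28, 4_000_000_000, 16_000_000_000, 2_000_000_000))   # (28, 0)
```

With these assumed numbers, the 32B model ends up with 44 layers on the GPU and 20 on the CPU, while all 28 layers of the 7B model fit in VRAM. Because every generated token must pass through the CPU-resident layers, hybrid execution is slower than a GPU-only run.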

Why does VRAM usage increase?

Although the model's weights take 20 GB on disk, VRAM usage rises significantly during inference. A downloaded model file stores only the weights (parameters); running calculations with those weights requires additional working memory. Because LLMs are transformer-based, each layer uses multiple attention heads and produces key and value vectors for every token it processes. The main sources of this extra memory are the activations, which are the intermediate values computed at each layer, and the key-value (KV) cache, which stores the keys and values of earlier tokens so they do not have to be recomputed when each new token is generated. The KV cache in particular grows linearly with the context length, and together these account for the increase in VRAM consumption.
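The KV cache size can be estimated directly: for every layer, every key-value head and every token in the context, the model stores one key vector and one value vector, so the total is 2 × layers × KV heads × head dimension × tokens × bytes per value. The sketch below assumes 16-bit cache entries and Qwen2.5-style grouped-query attention settings (64 layers and 8 KV heads for the 32B model, 28 layers and 4 KV heads for the 7B model, head dimension 128 for both); treat these as assumptions and check the model's own configuration.

```python
def kv_cache_bytes(n_layers: int, n_kv_heads: int, head_dim: int,
                   context_len: int, bytes_per_value: int = 2) -> int:
    """Bytes needed to cache one key and one value vector per layer, KV head and token."""
    return 2 * n_layers * n_kv_heads * head_dim * context_len * bytes_per_value


GIB = 2**30
# Assumed configurations; check the model's own config before relying on them.
for name, layers, kv_heads in [("32B", 64, 8), ("7B", 28, 4)]:
    size = kv_cache_bytes(layers, kv_heads, head_dim=128, context_len=4096)
    print(f"{name}: {size / GIB:.2f} GiB of KV cache for 4096 tokens")  # 1.00, then 0.22
```

Because the cache scales linearly with `context_len`, doubling the context doubles this figure; activations and runtime buffers come on top of it.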

Performance monitoring

I used Task Manager to monitor GPU and CPU usage in real time. My main observations:

- The smaller model runs entirely on the GPU, providing fast inference.

- The larger model automatically switches to hybrid CPU-GPU execution when VRAM is exceeded.

- Monitoring resource usage helps you choose a model that suits your available hardware.
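Task Manager shows overall GPU and CPU load; Ollama's REST API can also report per-model numbers. The sketch below assumes the default local address and relies on two sets of fields from the Ollama API: `eval_count` and `eval_duration` (in nanoseconds) in a non-streaming `/api/generate` reply, and `size` and `size_vram` for each model listed by `/api/ps`. The helper names are illustrative.

```python
import json
import urllib.request


def tokens_per_second(reply: dict) -> float:
    """Generation speed from a non-streaming /api/generate reply (eval_duration is in ns)."""
    return reply["eval_count"] * 1e9 / reply["eval_duration"]


def gpu_fraction(loaded_model: dict) -> float:
    """Share of a loaded model's memory that sits in VRAM (1.0 means fully on the GPU)."""
    return loaded_model["size_vram"] / loaded_model["size"]


def loaded_models(base_url: str = "http://localhost:11434") -> list:
    """Return the 'models' list from Ollama's /api/ps endpoint (requires a running server)."""
    with urllib.request.urlopen(f"{base_url}/api/ps") as resp:
        return json.loads(resp.read())["models"]
```

For example, `for m in loaded_models(): print(m["name"], f"{gpu_fraction(m):.0%} on GPU")` lists each loaded model with its VRAM share. A value below 100% indicates that part of the model was placed in system RAM, which corresponds to the hybrid CPU-GPU mode described above.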

Conclusion

Running open-source LLMs locally is a viable alternative to expensive cloud-based solutions. DeepSeek models served through Ollama deliver a seamless experience while automatically working around hardware limitations. Understanding the balance between GPU and CPU is important for efficient deployment.

Stay tuned for more details!
