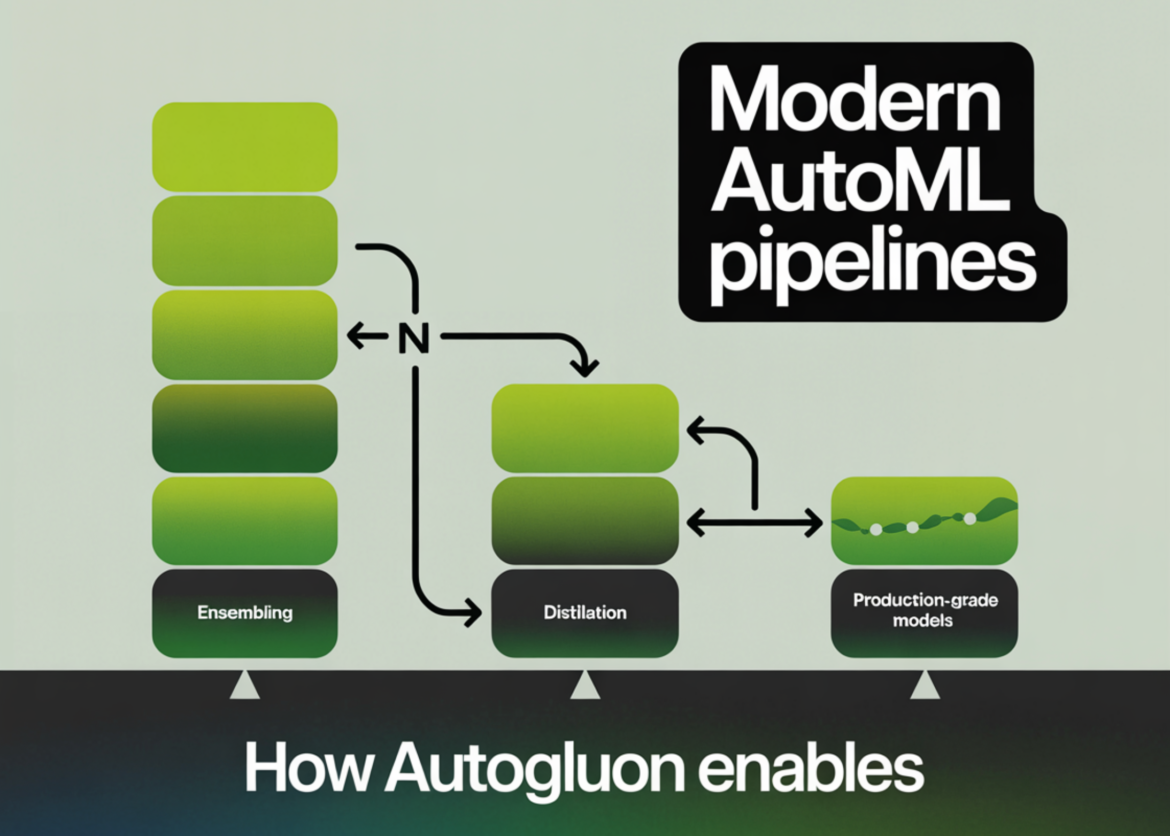

In this tutorial, we build a production-grade tabular machine learning pipeline using AutoGluon, taking a real-world mixed-type dataset from raw ingestion to deployment-ready artifacts. We train high-quality stacked and bagged ensembles, evaluate performance with robust metrics, conduct subgroup and feature-level analyses, and then optimize the model for real-time inference using refit-full and distillation. Throughout the workflow, we focus on practical decisions that balance accuracy, latency, and deployability.

```python
!pip -q install -U "autogluon==1.5.0" "scikit-learn>=1.3" "pandas>=2.0" "numpy>=1.24"

import os, time, json, warnings
warnings.filterwarnings("ignore")  # keep notebook output readable

import numpy as np
import pandas as pd
from sklearn.model_selection import train_test_split
from sklearn.metrics import roc_auc_score, log_loss, accuracy_score, classification_report, confusion_matrix
from autogluon.tabular import TabularPredictor
```

We set up the environment by installing the required libraries and importing the main dependencies used in the pipeline. We suppress warnings to keep the output clean and make sure the numerical, tabular, and evaluation utilities are available.

```python
from sklearn.datasets import fetch_openml

# OpenML dataset 40945 is the Titanic passenger table (mixed numeric and categorical columns)
df = fetch_openml(data_id=40945, as_frame=True).frame

target = "survived"
df[target] = df[target].astype(int)

# "boat" and "body" are only known after the outcome, so they leak the label
drop_cols = [c for c in ("boat", "body", "home.dest") if c in df.columns]
df = df.drop(columns=drop_cols, errors="ignore")
df = df.replace({None: np.nan})

print("Shape:", df.shape)
print("Target positive rate:", round(df[target].mean(), 4))
print("Columns:", list(df.columns))

# Stratify on the label so train and test keep the same class balance
train_df, test_df = train_test_split(
    df,
    test_size=0.2,
    random_state=42,
    stratify=df[target],
)
```

We load a real-world mixed-type dataset and apply light preprocessing to get a clean training signal. We define the target, drop `boat` and `body` (recorded only after the outcome, so they would leak the label) along with the free-text `home.dest` column, and inspect the dataset structure. We then create a stratified train-test split to preserve the class balance.
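To make concrete what `stratify=df[target]` buys us, here is a minimal pure-Python sketch of stratified splitting. The helper `stratified_split` is hypothetical and is not scikit-learn's actual implementation; it only illustrates the idea: rows are shuffled and cut separately within each class, so every class contributes roughly `test_size` of its rows to the test set. The labels are toy data.

```python
import random
from collections import defaultdict

def stratified_split(labels, test_size=0.2, seed=42):
    """Hypothetical helper (not scikit-learn's code): split indices per class."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for i, y in enumerate(labels):
        by_class[y].append(i)
    train_idx, test_idx = [], []
    for idx in by_class.values():
        rng.shuffle(idx)
        n_test = round(len(idx) * test_size)  # same fraction taken from every class
        test_idx.extend(idx[:n_test])
        train_idx.extend(idx[n_test:])
    return sorted(train_idx), sorted(test_idx)

def positive_rate(labels, idx):
    return sum(labels[i] for i in idx) / len(idx)

labels = [1] * 38 + [0] * 62  # toy labels with a 38% positive rate
train_idx, test_idx = stratified_split(labels)
print(len(train_idx), len(test_idx))  # 80 20
print(positive_rate(labels, train_idx), positive_rate(labels, test_idx))  # 0.375 0.4
```

Both parts stay close to the overall 38% rate; an unstratified random cut of a small test set can drift much further, which would make the test metrics noisier.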

```python
def has_gpu():
    """Return True if PyTorch is installed and can see a CUDA device."""
    try:
        import torch
        return torch.cuda.is_available()
    except Exception:
        return False

# Use the "extreme" preset when a GPU is available; otherwise fall back to "best_quality"
presets = "extreme" if has_gpu() else "best_quality"

save_path = "/content/autogluon_titanic_advanced"
os.makedirs(save_path, exist_ok=True)

predictor = TabularPredictor(
    label=target,
    eval_metric="roc_auc",
    path=save_path,
    verbosity=2,
)
```

We detect whether a GPU is available and use that to choose the AutoGluon training preset. We configure a persistent model directory and initialize the tabular predictor with ROC-AUC as the evaluation metric.

```python
start = time.time()
predictor.fit(
    train_data=train_df,
    presets=presets,
    time_limit=7 * 60,     # total training budget in seconds
    num_bag_folds=5,       # 5-fold bagging for every base model
    num_stack_levels=2,    # two stacking levels on top of the base models
    refit_full=False,      # we refit explicitly later with predictor.refit_full()
)
train_time = time.time() - start
print(f"\nTraining done in {train_time:.1f}s with presets='{presets}'")
```

We train high-quality ensembles with bagging and stacking within a controlled time budget, relying on AutoGluon's automated model search to find robust models efficiently. We also record the training time to understand the computational cost.
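Bagging with `num_bag_folds=5` is what makes stacking safe: every training row gets an out-of-fold (OOF) prediction from a model that never saw it, and those predictions can feed the next stack level. AutoGluon's internals are considerably more involved; the sketch below only illustrates the OOF mechanism, using a hypothetical toy base model that predicts the mean training label and contiguous (unshuffled) folds.

```python
from statistics import mean

def kfold_indices(n, k):
    """Split range(n) into k contiguous folds (no shuffling, to keep the example readable)."""
    folds, start = [], 0
    for f in range(k):
        size = n // k + (1 if f < n % k else 0)
        folds.append(list(range(start, start + size)))
        start += size
    return folds

def fit_mean_model(ys):
    """Hypothetical toy base model: ignores features, predicts the mean training label."""
    p = mean(ys)
    return lambda x: p

def bag_with_oof(xs, ys, k=5):
    """Fit one model per fold; return (oof_predictions, bagged_predict)."""
    n = len(xs)
    oof = [None] * n
    models = []
    for fold in kfold_indices(n, k):
        held_out = set(fold)
        train_rows = [i for i in range(n) if i not in held_out]
        model = fit_mean_model([ys[i] for i in train_rows])
        models.append(model)
        for i in fold:
            oof[i] = model(xs[i])  # predicted by a model that never saw row i

    def bagged_predict(x):
        # At inference time, average the k fold models
        return mean(m(x) for m in models)

    return oof, bagged_predict

xs = list(range(10))
ys = [0, 0, 0, 0, 0, 1, 1, 1, 1, 1]
oof, predict = bag_with_oof(xs, ys, k=5)
print(oof)         # [0.625, 0.625, 0.625, 0.625, 0.5, 0.5, 0.375, 0.375, 0.375, 0.375]
print(predict(3))  # 0.5
```

Even for this trivial model the OOF values differ by fold, because each depends only on rows held out from it. A stacker trained on such values sees honest, out-of-sample inputs rather than the optimistic in-sample predictions of the base models.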

```python
lb = predictor.leaderboard(test_df, silent=True)
print("\n=== Leaderboard (top 15) ===")
display(lb.head(15))

proba = predictor.predict_proba(test_df)
pred = predictor.predict(test_df)
y_true = test_df[target].values

# For binary tasks predict_proba returns one column per class; keep P(class 1)
if isinstance(proba, pd.DataFrame) and 1 in proba.columns:
    y_proba = proba[1].values
else:
    y_proba = np.asarray(proba).reshape(-1)

print("\n=== Test Metrics ===")
print("ROC-AUC:", round(roc_auc_score(y_true, y_proba), 5))
print("LogLoss:", round(log_loss(y_true, np.clip(y_proba, 1e-6, 1 - 1e-6)), 5))
print("Accuracy:", round(accuracy_score(y_true, pred), 5))
print("\nClassification report:\n", classification_report(y_true, pred))
```

We evaluate the trained models on the held-out test set and inspect the leaderboard to compare them. We compute both probabilistic and hard predictions and derive the key classification metrics: ROC-AUC and log loss measure ranking quality and calibration, while accuracy and the classification report summarize the thresholded predictions.
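The ROC-AUC and log-loss values above come from scikit-learn. As a from-scratch sketch of what they measure (not scikit-learn's implementation, which integrates the ROC curve but yields the same AUC), ROC-AUC is the probability that a randomly chosen positive is scored above a randomly chosen negative, and log loss is the mean negative log-likelihood with probabilities clipped the same way the tutorial clips them.

```python
import math

def roc_auc(y_true, scores):
    """P(random positive outranks random negative); ties count as 1/2."""
    pos = [s for y, s in zip(y_true, scores) if y == 1]
    neg = [s for y, s in zip(y_true, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("ROC-AUC is undefined when only one class is present")
    wins = 0.0
    for p in pos:
        for n in neg:
            wins += 1.0 if p > n else (0.5 if p == n else 0.0)
    return wins / (len(pos) * len(neg))

def binary_log_loss(y_true, probs, eps=1e-6):
    """Mean negative log-likelihood; clip to [eps, 1 - eps] to avoid log(0)."""
    total = 0.0
    for y, p in zip(y_true, probs):
        p = min(max(p, eps), 1 - eps)
        total += -math.log(p) if y == 1 else -math.log(1 - p)
    return total / len(y_true)

y = [0, 0, 1, 1]
p = [0.1, 0.4, 0.35, 0.8]
print(roc_auc(y, p))                     # 0.75
print(round(binary_log_loss(y, p), 4))
```

The quadratic loop is only for clarity. The `ValueError` branch is also a reminder that AUC cannot be computed on a slice that contains a single class, which matters for the per-segment analysis that follows.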

```python
if "pclass" in test_df.columns:
    print("\n=== Slice AUC by pclass ===")
    for grp, part in test_df.groupby("pclass"):
        part_proba = predictor.predict_proba(part)
        part_proba = (
            part_proba[1].values
            if isinstance(part_proba, pd.DataFrame) and 1 in part_proba.columns
            else np.asarray(part_proba).reshape(-1)
        )
        auc = roc_auc_score(part[target].values, part_proba)
        print(f"pclass={grp}: AUC={auc:.4f} (n={len(part)})")

# Permutation importance: how much the test score drops when each feature is shuffled
fi = predictor.feature_importance(test_df, silent=True)
print("\n=== Feature importance (top 20) ===")
display(fi.head(20))
```

We analyze model behavior through subgroup (slice) performance and permutation-based feature importance. Slicing by passenger class shows how performance varies across meaningful segments of the data, which helps us assess robustness and interpretability before deployment.
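AutoGluon's `feature_importance` is permutation-based: shuffle one feature's column, re-score, and record the drop. The sketch below shows that core idea with accuracy as the metric; `permutation_importance` and `toy_predict` are hypothetical helpers, and the rows are toy data, not the Titanic set.

```python
import random

def accuracy(y_true, y_pred):
    return sum(int(a == b) for a, b in zip(y_true, y_pred)) / len(y_true)

def permutation_importance(predict, rows, y_true, feature, n_repeats=5, seed=0):
    """Mean drop in accuracy when `feature` is shuffled across rows (higher = more important)."""
    rng = random.Random(seed)
    baseline = accuracy(y_true, predict(rows))
    drops = []
    for _ in range(n_repeats):
        values = [r[feature] for r in rows]
        rng.shuffle(values)  # break the link between this feature and the label
        permuted = [{**r, feature: v} for r, v in zip(rows, values)]
        drops.append(baseline - accuracy(y_true, predict(permuted)))
    return sum(drops) / n_repeats

def toy_predict(rows):
    """Hypothetical model: uses only "sex" and ignores "fare"."""
    return [1 if r["sex"] == "female" else 0 for r in rows]

rows = [{"sex": "female" if i % 2 else "male", "fare": float(i)} for i in range(40)]
y = toy_predict(rows)  # labels the toy model fits perfectly (baseline accuracy 1.0)

imp_fare = permutation_importance(toy_predict, rows, y, "fare")
imp_sex = permutation_importance(toy_predict, rows, y, "sex")
print(imp_fare)  # 0.0: the model never reads "fare"
print(imp_sex)   # positive: shuffling "sex" breaks the model's only signal
```

Averaging over several shuffles smooths out the randomness of any single permutation.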

```python
# Record the original (bagged) best model first: refit_full() by default switches
# the predictor's best model to its refit "_FULL" counterpart
best_model = predictor.model_best  # get_model_best() is deprecated in AutoGluon 1.x

# Retrain each bagged model as a single model on all training data (cheaper to serve)
t0 = time.time()
refit_map = predictor.refit_full()
t_refit = time.time() - t0
print(f"\nrefit_full completed in {t_refit:.1f}s")
print("Refit mapping (sample):", dict(list(refit_map.items())[:5]))

lb_full = predictor.leaderboard(test_df, silent=True)
print("\n=== Leaderboard after refit_full (top 15) ===")
display(lb_full.head(15))

full_candidates = [m for m in predictor.model_names() if m.endswith("_FULL")]

def bench_infer(model_name, df_in, repeats=3):
    """Median wall-clock time of predictor.predict() for one model over `repeats` runs."""
    times = []
    for _ in range(repeats):
        t1 = time.time()
        _ = predictor.predict(df_in, model=model_name)
        times.append(time.time() - t1)
    return float(np.median(times))

small_batch = test_df.drop(columns=[target]).head(256)
lat_best = bench_infer(best_model, small_batch)
print(f"\nBest model: {best_model} | median predict() latency on 256 rows: {lat_best:.4f}s")

if full_candidates:
    lb_full_sorted = lb_full.sort_values(by="score_test", ascending=False)
    best_full = lb_full_sorted[lb_full_sorted["model"].str.endswith("_FULL")].iloc[0]["model"]
    lat_full = bench_infer(best_full, small_batch)
    print(f"Best FULL model: {best_full} | median predict() latency on 256 rows: {lat_full:.4f}s")
    print(f"Speedup factor (best / full): {lat_best / max(lat_full, 1e-9):.2f}x")

# Optional: distill the ensemble into faster student models (may fail on some setups)
try:
    t0 = time.time()
    distill_result = predictor.distill(
        train_data=train_df,
        time_limit=4 * 60,
        augment_method="spunge",  # synthetic data augmentation for training the students
    )
    t_distill = time.time() - t0
    print(f"\nDistillation completed in {t_distill:.1f}s")
except Exception as e:
    print("\nDistillation step failed")
    print("Error:", repr(e))

lb2 = predictor.leaderboard(test_df, silent=True)
print("\n=== Leaderboard after distillation attempt (top 20) ===")
display(lb2.head(20))

# Persist, reload from disk, and sanity-check predictions from the reloaded predictor
predictor.save()
reloaded = TabularPredictor.load(save_path)

sample = test_df.drop(columns=[target]).sample(8, random_state=0)
sample_pred = reloaded.predict(sample)
sample_proba = reloaded.predict_proba(sample)

print("\n=== Reloaded predictor sanity-check ===")
print(sample.assign(pred=sample_pred).head())
print("\nProbabilities (head):")
display(sample_proba.head())

# Machine-readable run summary for production handoff
artifacts = {
    "path": save_path,
    "presets": presets,
    "best_model": reloaded.model_best,
    "model_names": reloaded.model_names(),
    "leaderboard_top10": lb2.head(10).to_dict(orient="records"),
}
summary_path = os.path.join(save_path, "run_summary.json")
with open(summary_path, "w") as f:
    json.dump(artifacts, f, indent=2, default=str)  # default=str guards against non-JSON types

print("\nSaved summary to:", summary_path)
print("Done.")
```

We optimize the trained ensemble for inference by refitting the bagged models on the full training data (`refit_full`) and benchmarking the resulting latency improvements. We optionally distill the ensemble into faster student models and validate robustness with a save-and-reload check. Finally, we export a structured run summary for production handoff.
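The `bench_infer` helper above times calls with `time.time()` and no warm-up run, so the first call can include one-off costs such as lazy model loading. A slightly more careful pattern, sketched below under the assumption that you pass any zero-side-effect prediction callable, uses the monotonic `time.perf_counter()` clock and discards warm-up calls. The two models here are hypothetical stand-ins that just sleep; with AutoGluon you would pass something like `lambda b: predictor.predict(b, model=name)`.

```python
import time
from statistics import median

def bench_latency(fn, *args, repeats=5, warmup=1):
    """Median latency of fn(*args) in seconds, after untimed warm-up calls."""
    for _ in range(warmup):
        fn(*args)  # absorb one-off costs (lazy loading, caches); not timed
    timings = []
    for _ in range(repeats):
        t0 = time.perf_counter()  # monotonic, high-resolution clock
        fn(*args)
        timings.append(time.perf_counter() - t0)
    return median(timings)

# Hypothetical stand-ins for a heavy stacked ensemble and a lighter refit model
def slow_model(batch):
    time.sleep(0.02)
    return [0] * len(batch)

def fast_model(batch):
    time.sleep(0.005)
    return [0] * len(batch)

batch = list(range(256))
lat_slow = bench_latency(slow_model, batch)
lat_fast = bench_latency(fast_model, batch)
print(f"speedup: {lat_slow / max(lat_fast, 1e-9):.1f}x")
```

The median is preferred over the mean because a single slow outlier (garbage collection, a background process) should not dominate the reported latency.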

In conclusion, we implemented an end-to-end AutoGluon workflow that turns raw tabular data into production-ready models with minimal manual intervention, while keeping control over accuracy, robustness, and inference efficiency. We performed slice-based error analysis and feature importance assessment, slimmed down large ensembles through refitting and distillation, and validated deployment readiness with latency benchmarks and artifact packaging. This workflow yields high-performing, interpretable tabular models that are fast enough to serve in real-world production environments.
