3 o’clock in the morning problem

It’s three in the morning and something is broken. The dashboard is out of date, an SLA has slipped, and everyone is guessing which part of the platform has gone out of line. A task may run for hours without timeout. A pipeline may have updated a table that has not been read for several months. A cluster may still be on the old runtime. Perhaps the only person who knows the owner of the job is on vacation.

These are the patterns that plague data teams: wasted computation from unused pipelines, reliability gaps from missing health rules, sanity issues from outdated runtimes, and delays caused by unclear ownership. They appear quietly, grow slowly, and then suddenly become the thing that keeps the on-call engineer awake.

Databricks System Tables Provide a consistent layer to quickly identify these issues by exposing job metadata, task timelines, execution behavior, configuration history, lineage, cost signals, and ownership in one place.

with new launch System Tables for Lakeflow JobsYou now have access to extended schemas that provide rich execution details and metadata signals and enable more advanced overviews.

Deep and centralized visibility of all your data made easy with System Tables

What are system tables?

Databricks System Tables is a set of read-only, Databricks-managed tables system Catalog that provides operational and observational data for your account. They come out of the box and cover a wide range of data including jobs, pipelines, groups, billing, lineage and much more.

|

Social class |

what it tracks |

|

lakeflow jobs |

Task configuration, task definitions, run timeline |

|

lakeflow spark declarative pipeline |

Pipeline metadata, update history |

|

Billing |

Determining cost based on usage, workload |

|

genealogy |

Table-level read/write dependencies |

|

clusters |

Configuration, count usage |

Why do system tables matter to overview?

System Tables support cross-workspace analysis within a region, enabling data engineering teams to easily analyze any workload behavior and operational patterns at scale from a single, queryable interface. Using these tables, data practitioners can monitor the health of all their pipelines, surface cost-saving opportunities and quickly identify failures for improved reliability.

Some Systems Use Tables scd type 2 Semantically, preserving the complete change history by inserting a new row for each update. This enables configuration auditing and historical analysis of platform state over time.

Lakeflow System Tables

Lakeflow System Tables Keep data for the last 365 days and include the following tables.

For a complete list of system tables and their relationships, see documentation.

Jobs Overview Tables (Generally Available)

system.lakeflow.jobs– SCD2 metadata for jobs including configuration and tags. Useful for inventory, governance and configuration drift analysis.system.lakeflow.job_tasks– The SCD2 table describes all job tasks, their definitions and dependencies. Useful for understanding large scale task structures.system.lakeflow.job_run_timeline– Immutable timeline of job positions, calculations and runs over time. Ideal for SLA and performance trend analysis.system.lakeflow.job_task_run_timeline– The timeline of individual tasks runs within each task. Helps identify bottlenecks and task-level problems.

Pipeline Overview Tables (Public Preview)

system.lakeflow.pipelines– SCD2 metadata table for SDP pipelines, enabling cross-workspace pipeline visibility and change tracking.system.lakeflow.pipeline_update_timeline– Support of immutable execution logs, historical debugging, and optimization for pipeline updates.

Lakeflow System Tables have seen rapid growth in popularity, with millions of queries being run every day, representing a 17x increase year-on-year. This surge underscores the value that data engineers derive from Lakeflow System Tables, which have become a critical component of day-to-day overviews for many Databricks Lakeflow customers.

Let’s look at the use cases made possible by the recently expanded and now generally available Jobs System Tables.

System Tables in Real Life: Operational Health for Lakeflow Jobs

As a data engineer in a central platform team, you are responsible for managing hundreds of jobs across multiple teams. Your goal is to maintain the data platform Cost-efficient, reliable and performingEnsuring that teams follow governance and operational best practices.

To do this, you are ready to audit your Lakeflow jobs and pipelines for four main purposes:

- Optimize costs: Identify scheduled tasks that update datasets that are never used downstream.

- Ensure Reliability: Enforce timeouts and runtime limits to prevent runaway jobs and SLA violations.

- Maintain cleanliness: Verify consistent runtime versions and configuration parameters.

- Assign Accountability: Identify job owners to streamline follow-up and improvements.

Pattern 1: Find jobs that generate unused data

Problem: Scheduled jobs run faithfully, updating tables that no downstream consumers ever read. These are often the easiest cost savings if you can find them.

Approach: Join the Lakeflow Jobs tables with the Lineage and Billing tables to identify producers without consumers based on cost.

What To Do Next: Review the top offenders with their owners. It may be safe to stop some immediately. Others may require depreciation planning if external systems outside of Databricks depend on them.

Pattern 2: Find jobs without timeouts or term limits

Problem: Jobs without timeouts can run indefinitely. A stuck task burns up the computation for hours or even days before anyone notices. Apart from increasing costs, it can also lead to SLA violations, so you need to detect overruns and take action before deadlines or downstream processes are affected.

Approach: Query the current job configuration for missing timeout and duration limit settings.

What To Do Next: Cross-reference the job_run_timeline with the historical runtime to determine realistic limits. A job that typically runs 20 minutes may have a timeout of 1 hour and a duration limit of 30 minutes. A job that varies widely may require investigation first.

Pattern 3: Locate legacy runtime versions

Problem: Obsolete runtimes do not have security patches, performance improvements, and are subject to upcoming EOL deadlines. But with hundreds of jobs, tracking who is still on older versions is hard.

Approach: Query job task configuration for runtime volumes and mark anything below your limit.

What To Do Next: Prioritize upgrades based on EOL deadline. Share this list with job owners and track progress in follow-up questions.

Pattern 4: Identify job owners for solutions

Problem: When a function fails or is not configured correctly, you need to know who to contact to fix the problem.

Approach: Query system tables to easily identify job owners for each action.

What To Do Next: Reach out to job owners to assign ownership for issues that need action.

Together, these patterns help you optimize costs, keep data fresh, enforce reliability guardrails, and provide clear ownership for remediation. They form the foundation of operational observation.

Bringing it all together: Driving insights with dashboards

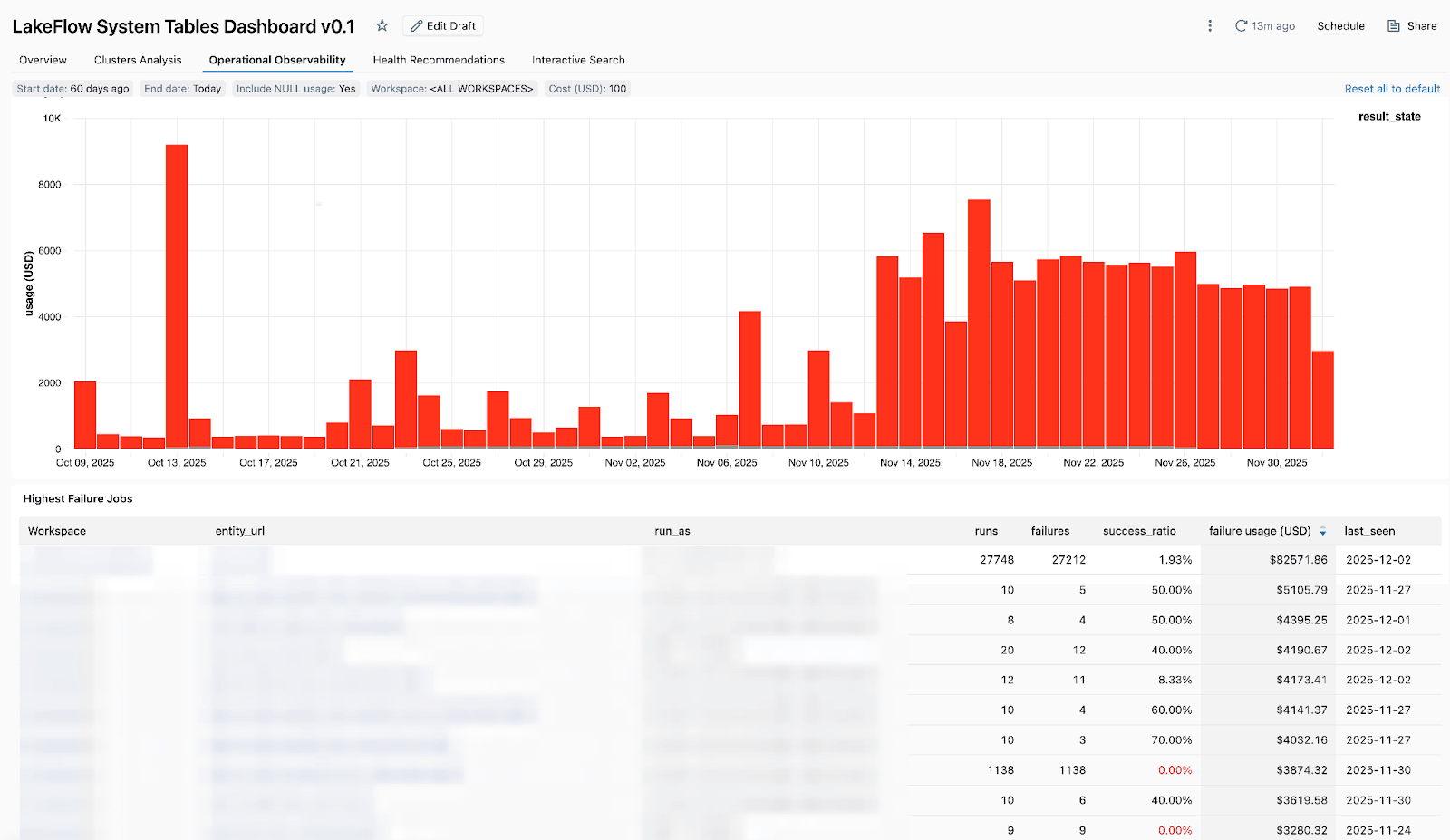

It is useful to run these queries ad-hoc. But for day-to-day tasks, you’ll want a shared view that your entire team can refer to.

The Lakeflow dashboard gives me a bird’s-eye view of jobs across all my work areas – not just at the cost level, but also down to pipeline hygiene and operations: tracking spend, identifying aging pipelines, monitoring failures and spotting optimization opportunities – Zoe Van Noppen, Data Solutions Architect, Cubigo

To get started, import the dashboard into your workspace. For step-by-step instructions, contact the official documentation.

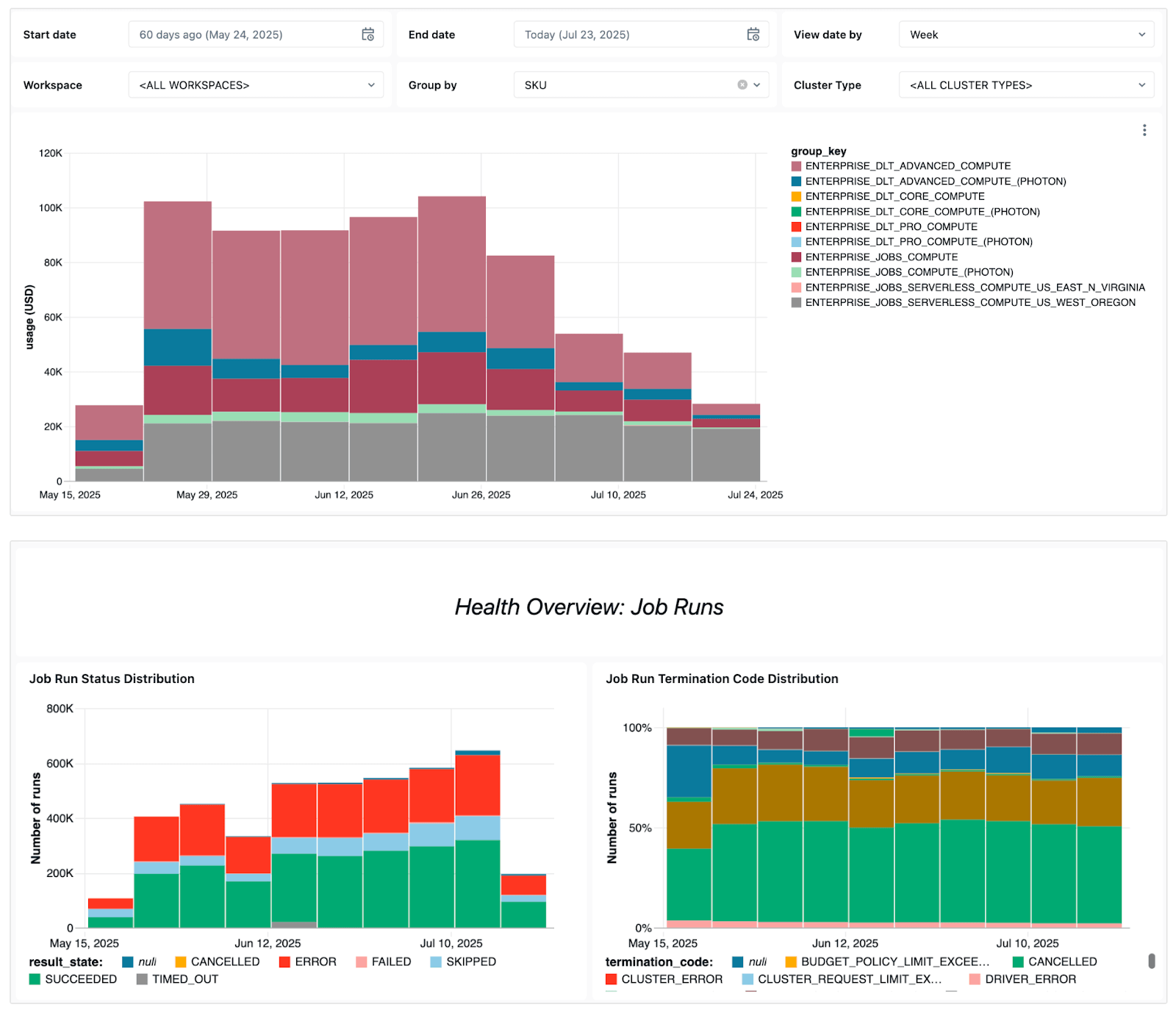

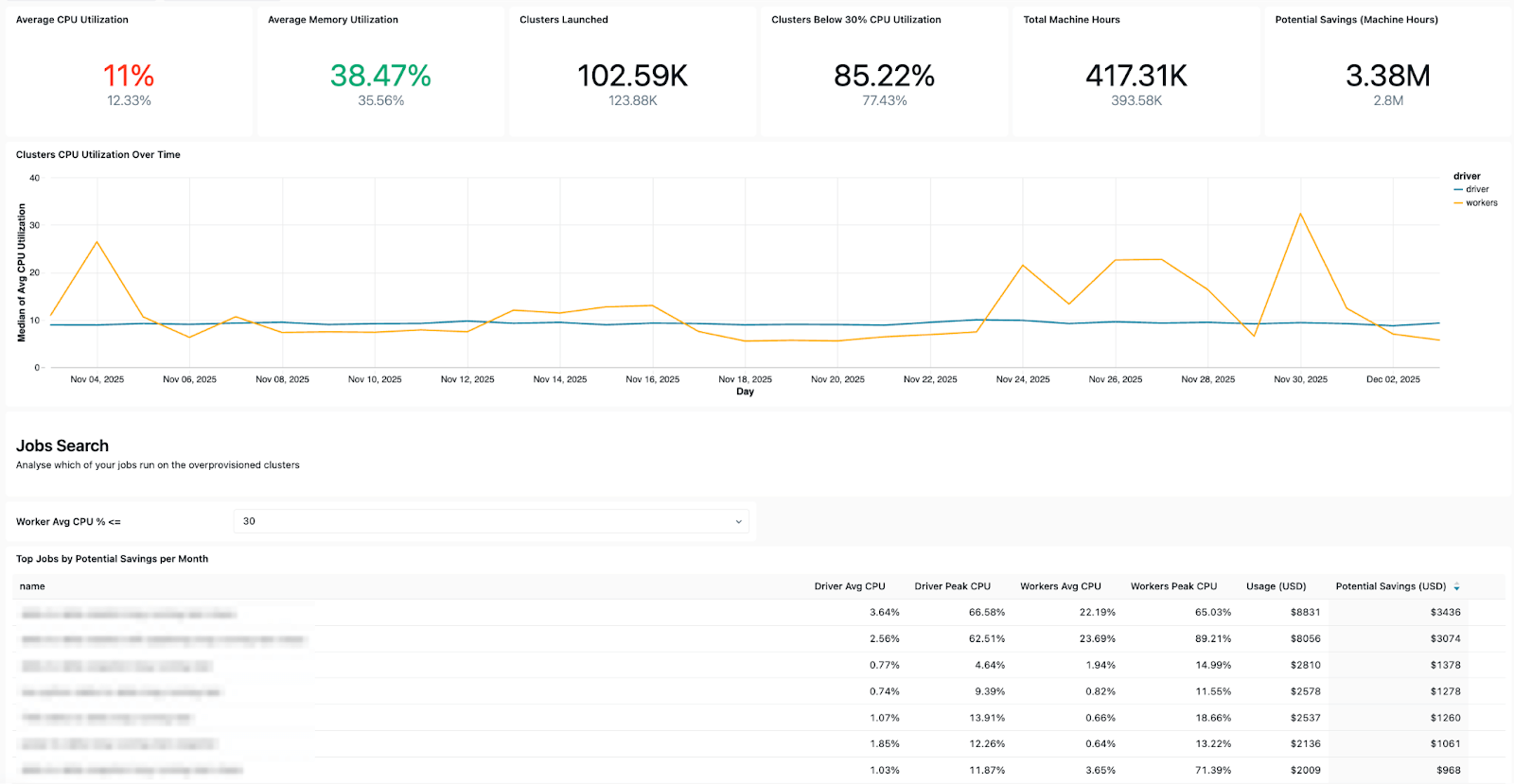

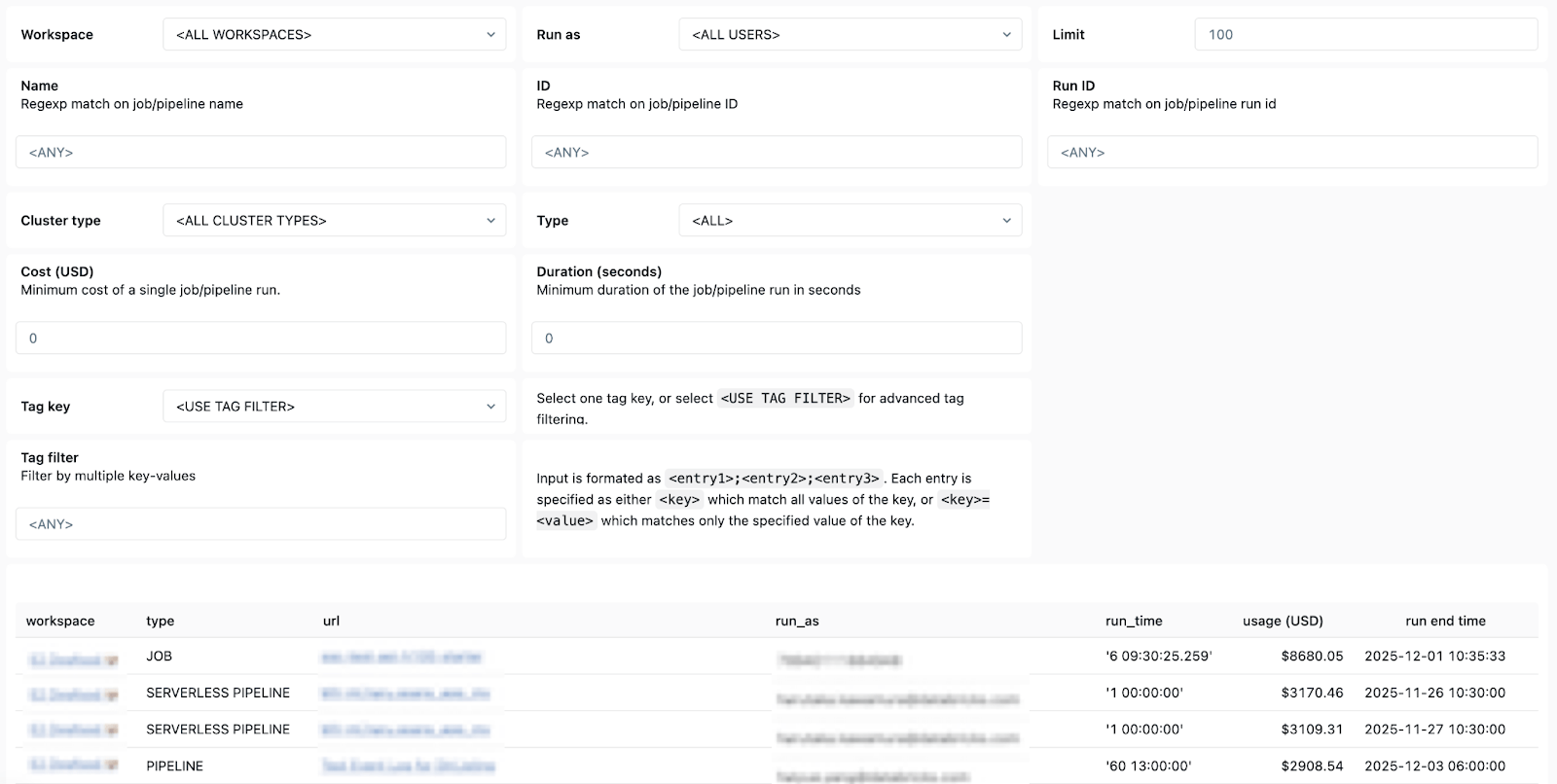

The dashboard displays several key operational indicators, including:

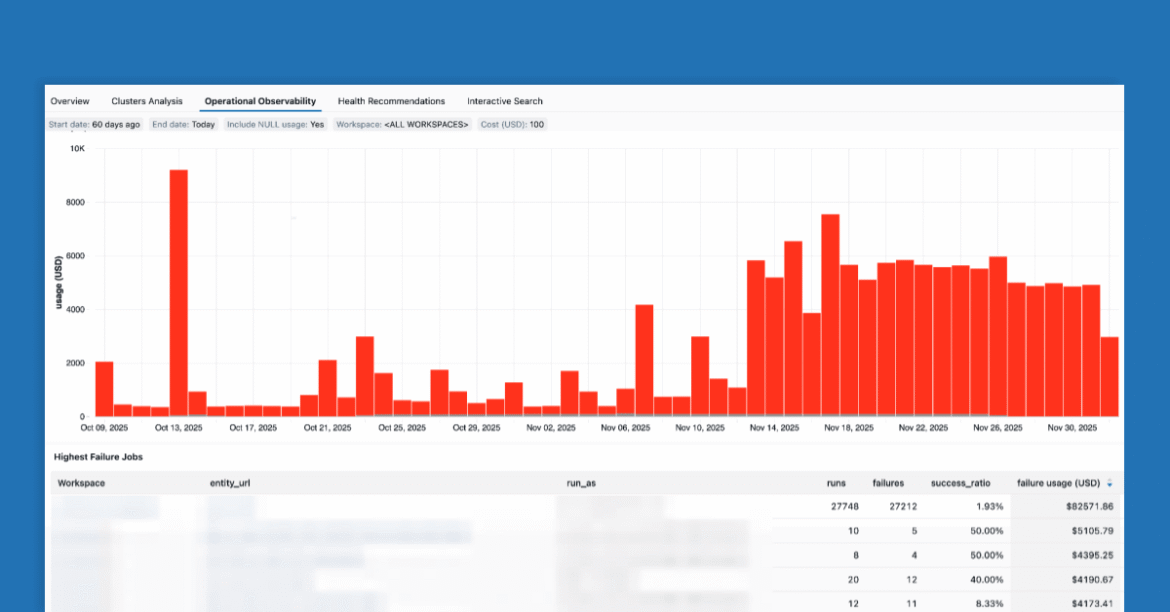

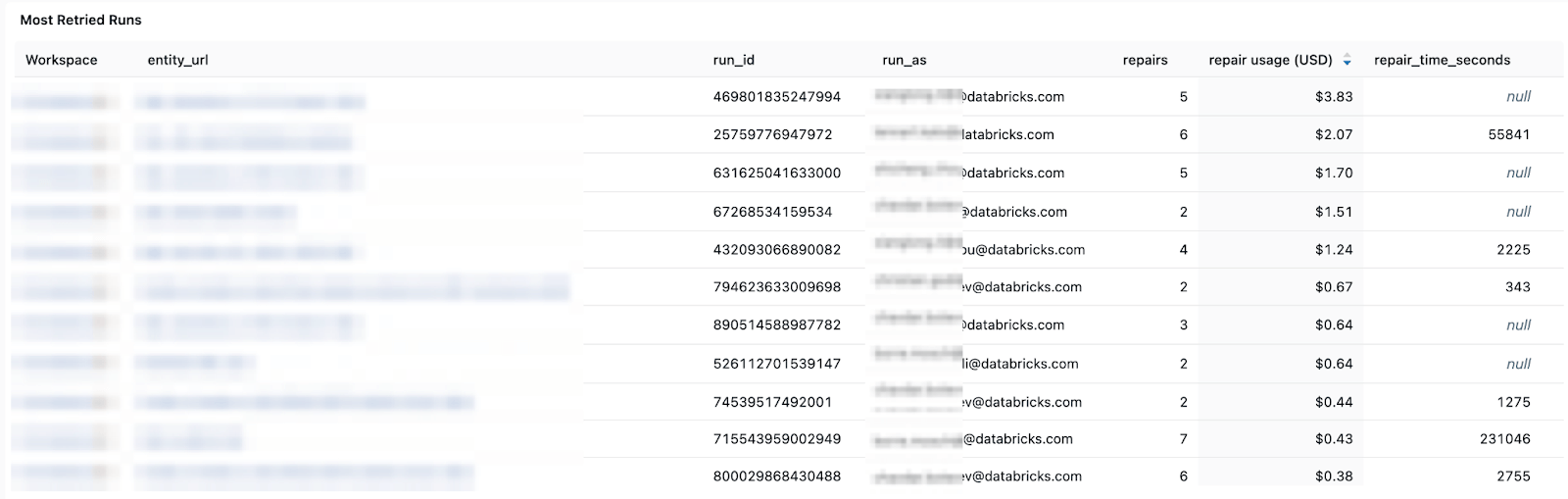

- Tendencies to failure – You can see overall error trends and common error messages to see which jobs fail most often.

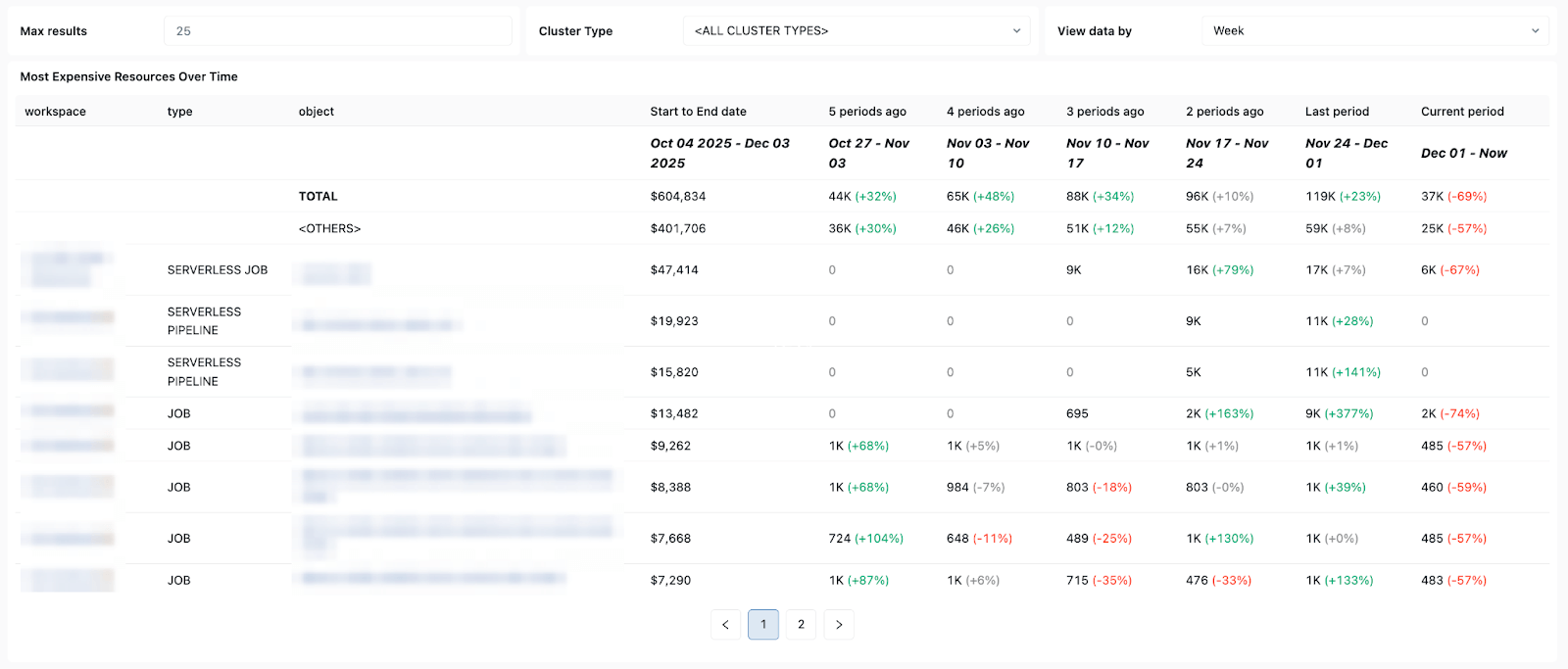

- High cost jobs – So you can identify the most expensive jobs and individual jobs that ran in the last 30 days or more. The table below is sorted by the highest cost jobs in the selected period and shows their cost trends over time.

- Configuration Insights – Allows you to check cluster efficiency, health rules, timeouts and runtime volumes for operational hygiene.

- ownership details – So you can easily find “run-as” users and job creators and know who to contact.

In short, Databricks System Tables makes it easy to efficiently monitor, audit, and troubleshoot Lakeflow jobs at scale and across scopes. With clear, simple and accessible views of your jobs and pipelines available in dashboard templates, every data engineer using Lakeflow can gain advanced overview and ensure consistently production-ready, cost-effective and reliable pipelines.

System Tables turns your platform telemetry into a queryable asset. Instead of piecing together signals from five different devices, you write SQL against a unified schema and get answers in seconds.

Your 3 a.m. self will thank you.

To learn more about System Tables, see the following resources:

New to Databricks? Try Databricks for free today!