Picture this: Your company is sitting on tens of petabytes of data. To put it in perspective, if I had a penny for each byte and stacked them, I would have enough to get to Pluto and back, with some change.

This is the reality we face at Rocket Mortgage, and it’s probably not too different from the reality your organization is facing.

All that data represents a goldmine of insights, but the challenge has always been making it accessible to the people who need it most: executives, analysts, and decision makers who understand the business but don’t necessarily write SQL in their sleep.

That’s why we created Rocket Analytics, and today I want to take you behind the scenes of how we built a text-to-SQL application using agentic RAG (retrieval-augmented generation).

This tool fundamentally changes the way our teams interact with data, helping them focus on what they do best: asking strategic and thoughtful questions, while the system handles the technical heavy lifting.

What does Rocket Analytics actually do?

Here’s how it works in practice: A user asks a natural language question, like:

“Tell me the count of loans for the last six months.”

Behind the scenes, the system:

- Converts the question into a SQL query

- Executes it against the relevant database

- Returns the results in a clean, understandable format

But the real magic happens when users ask follow-up questions. They can request insights based on the initial results, or have the system prepare dashboards that highlight actionable patterns and trends.

During a recent demo, someone went from raw loan counts to a comprehensive dashboard that showed:

- Total loans closed

- Average daily loans

- Dates with the highest daily loan volume

- Trend analysis

All of this took seconds. For executives and stakeholders in the mortgage industry, where speed of decision-making is critical, this capability is transformative.
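The dashboard figures above are simple aggregates over daily loan closings. As a sketch, assuming the daily counts have already been returned by the generated SQL, they could be computed like this; the function name and the least-squares slope used as the "trend" signal are our illustrative choices, not necessarily what the system does.

```python
from datetime import date
from statistics import mean


def dashboard_metrics(daily_closings: list[tuple[date, int]]) -> dict:
    """Summarize (day, loans_closed) pairs into dashboard figures.

    Needs at least two days so the trend slope is defined.
    """
    days = [d for d, _ in daily_closings]
    counts = [n for _, n in daily_closings]
    avg = mean(counts)
    peak = max(counts)
    # Ordinary least-squares slope of loans closed against day index:
    # positive means volume is trending up, negative means down.
    xs = range(len(counts))
    x_bar = mean(xs)
    slope = sum((x - x_bar) * (y - avg) for x, y in zip(xs, counts)) / sum(
        (x - x_bar) ** 2 for x in xs
    )
    return {
        "total_closed": sum(counts),
        "average_per_day": avg,
        "peak_days": [d for d, n in zip(days, counts) if n == peak],
        "trend_per_day": slope,
    }


# Hypothetical five days of closings.
daily = [(date(2025, 6, d), n) for d, n in zip(range(2, 7), [10, 12, 9, 15, 14])]
print(dashboard_metrics(daily))
```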

The architecture behind the magic

Rocket Analytics depends on several key components, each of which plays a vital role in delivering accurate results.

Query transformation: making queries understandable

The journey begins when a user enters their question. Large language models, while powerful, do not inherently understand our specific business context or data structures.

For example, ask ChatGPT:

“Can you give me your top ten days for loan production?”

It won’t know exactly what you mean.

Our query transformation module transforms natural language into clear, actionable instructions. The same question becomes:

“Write a SQL query to find out the top ten days based on loan closings.”

This step is important because we never feed actual data to the model, only metadata about our database structures, so the question itself has to be precise enough to map onto that metadata.
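In Rocket Analytics this rewrite is done by an LLM. The rule-based sketch below only illustrates the shape of the step, assuming a hypothetical `GLOSSARY` that maps business phrasing to the terms used in the metadata; note that it touches only the question text, never table data.

```python
import re

# Hypothetical glossary mapping business phrasing to the terms our metadata uses.
GLOSSARY = {
    "loan production": "loan closings",
}


def transform_query(question: str) -> str:
    """Rule-based stand-in for the LLM rewrite step: normalize business terms,
    then frame the question as an explicit SQL-writing instruction."""
    text = question.strip().rstrip("?.!")
    for phrase, term in GLOSSARY.items():
        text = re.sub(re.escape(phrase), term, text, flags=re.IGNORECASE)
    return f"Write a SQL query to answer the following question: {text}."


print(transform_query("Can you give me your top ten days for loan production?"))
```

An LLM can also restructure the sentence itself (as in the example rewrite above), which a lookup table cannot.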

Building and managing the knowledge base

Before we can answer questions, we need a broad knowledge base. We:

- Convert database metadata to embeddings using Amazon Titan

- Store them in the FAISS (Facebook AI Similarity Search) vector store

- Include information about all tables, schemas, relationships, and business context

This knowledge base is the foundation that lets the system identify which tables may be relevant to a given query.
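A rough sketch of the indexing side, under stated assumptions: `embed` is a toy sparse bag-of-words stand-in for Amazon Titan embeddings, and `MetadataIndex` performs exact inner-product search over normalized vectors, the same kind of search FAISS's `IndexFlatIP` runs over dense vectors at scale. The table names and metadata strings are hypothetical.

```python
import math
import re
from collections import Counter


def embed(text: str) -> dict[str, float]:
    """Toy stand-in for an embedding model such as Amazon Titan:
    an L2-normalized sparse bag-of-words vector."""
    counts = Counter(re.findall(r"[a-z]+", text.lower()))
    norm = math.sqrt(sum(c * c for c in counts.values())) or 1.0
    return {token: c / norm for token, c in counts.items()}


def dot(a: dict[str, float], b: dict[str, float]) -> float:
    return sum(w * b.get(token, 0.0) for token, w in a.items())


class MetadataIndex:
    """Exact inner-product search over normalized vectors (cosine similarity)."""

    def __init__(self) -> None:
        self.entries: list[tuple[str, dict[str, float]]] = []

    def add(self, table: str, metadata: str) -> None:
        # Embed the table name together with its descriptive metadata.
        self.entries.append((table, embed(f"{table} {metadata}")))

    def search(self, query: str, k: int = 5) -> list[str]:
        q = embed(query)
        ranked = sorted(self.entries, key=lambda e: dot(q, e[1]), reverse=True)
        return [table for table, _ in ranked[:k]]


index = MetadataIndex()
index.add("loan_closings", "One row per closed loan. Columns: loan_id, closed_date, loan_amount.")
index.add("marketing_campaigns", "Columns: campaign_id, channel, start_date, spend.")
index.add("branch_staff", "Columns: employee_id, branch_id, role, hire_date.")
print(index.search("top ten days by loan closings", k=1))  # ['loan_closings']
```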

Intelligent retrieval: finding the right data source

When a query comes in, we run a hybrid search:

- Semantic similarity search identifies potentially relevant tables

- Keyword triggers ensure that important tables are included, even if semantic search alone would miss them

For example, a knowledge base with 15 tables can be narrowed down to the 4-5 most relevant ones. This keeps the LLM from being overloaded with irrelevant metadata and reduces errors.
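The hybrid search can be sketched as a union of the top semantic matches and keyword-triggered tables. This assumes the semantic scores already come from the vector store; `KEYWORD_TRIGGERS`, the table names, and the cut-offs are hypothetical.

```python
# Hypothetical keyword triggers: if a keyword appears in the question,
# these tables are always included, whatever their semantic score.
KEYWORD_TRIGGERS = {
    "campaign": ["marketing_campaigns"],
    "branch": ["branch_staff"],
}


def hybrid_retrieve(
    question: str, semantic_scores: dict[str, float], k: int = 4, max_tables: int = 5
) -> list[str]:
    """Merge the top-k semantic matches with keyword-triggered tables.

    semantic_scores maps each table name to its similarity from the vector store.
    """
    semantic_top = sorted(semantic_scores, key=semantic_scores.get, reverse=True)[:k]
    q = question.lower()
    triggered = [t for kw, tables in KEYWORD_TRIGGERS.items() if kw in q for t in tables]
    selected: list[str] = []
    # Triggered tables go first, so the cap drops low-scoring semantic matches first.
    for table in triggered + semantic_top:
        if table not in selected:
            selected.append(table)
    return selected[:max_tables]


scores = {
    "loan_closings": 0.82, "loan_applications": 0.74, "rate_locks": 0.61,
    "servicing": 0.40, "marketing_campaigns": 0.12, "branch_staff": 0.05,
}
print(hybrid_retrieve("Which campaign drove the most loan closings?", scores, k=3))
# ['marketing_campaigns', 'loan_closings', 'loan_applications', 'rate_locks']
```

Here `marketing_campaigns` scores too low to make the semantic top three, which is exactly the case keyword triggers are meant to catch.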

Re-ranking for accuracy

Once the candidate tables are retrieved, we use Cohere’s re-ranker model (BART-based) to refine the selection. The re-ranker scores how likely each document is to be relevant to the user’s question.

This step helps prevent hallucinations by ensuring that the LLM generates SQL queries from relevant metadata only.
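The re-ranking step reduces to "score every candidate against the question, keep the best few". In this sketch the scorer is a pluggable function; the default `overlap_score` is a toy token-overlap heuristic standing in for Cohere's re-ranker so the example runs offline, and `top_n` and `min_score` are illustrative values.

```python
import re
from typing import Callable


def overlap_score(question: str, document: str) -> float:
    """Toy relevance score: share of question tokens that appear in the document.
    Stands in for a re-ranker model's relevance score."""
    q = set(re.findall(r"[a-z]+", question.lower()))
    d = set(re.findall(r"[a-z]+", document.lower()))
    return len(q & d) / len(q) if q else 0.0


def rerank(
    question: str,
    candidates: dict[str, str],
    scorer: Callable[[str, str], float] = overlap_score,
    top_n: int = 2,
    min_score: float = 0.2,
) -> list[tuple[str, float]]:
    """Score each candidate table's metadata against the question and keep
    the top_n whose score clears min_score, best first."""
    scored = sorted(
        ((name, scorer(question, meta)) for name, meta in candidates.items()),
        key=lambda pair: pair[1],
        reverse=True,
    )
    return [(name, s) for name, s in scored if s >= min_score][:top_n]


candidates = {
    "branch_staff": "Branch staff: employee roles and hire date.",
    "loan_applications": "Loan applications: one row per application, with submit date.",
    "loan_closings": "Loan closings: one row per closed loan, with closed date and amount.",
}
print([name for name, _ in rerank("daily loan closings by date", candidates)])
# ['loan_closings', 'loan_applications']
```

In practice you would pass a `scorer` that calls the re-ranker model; that call is not shown here.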

Prompt engineering: art and science

Each prompt is built from several layers:

- Standard guidelines: always answer within the given context; use exact table and column names

- Adaptive guidelines: a few one-shot examples for tricky question types

- Domain-specific references: help the model understand mortgage-industry terminology

A typical prompt opens like this:

“Given the two tables below and their detailed metadata information, please answer the question at the end of the prompt, keeping in mind the guidelines below…”

This carefully crafted prompt guides the model through complex queries and improves accuracy.
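The layers described above map naturally onto a prompt-assembly function. This is a sketch assuming table metadata, one-shot examples, and glossary entries arrive as plain strings; `build_prompt`, `STANDARD_GUIDELINES`, and the section labels are hypothetical, and only the opening sentence is taken from the prompt quoted above. The caller would pick the one-shot examples that match the question type.

```python
STANDARD_GUIDELINES = [
    "Answer only from the table metadata in this prompt.",
    "Use exact table and column names as written in the metadata.",
]


def build_prompt(
    question: str,
    tables: dict[str, str],
    examples: list[tuple[str, str]],
    glossary: dict[str, str],
) -> str:
    """Assemble the layered prompt: opening instruction, standard guidelines,
    domain glossary, one-shot examples, table metadata, then the question."""
    lines = [
        f"Given the {len(tables)} tables below and their detailed metadata information, "
        "please answer the question at the end of the prompt, keeping in mind the guidelines below.",
        "",
        "Guidelines:",
        *(f"- {g}" for g in STANDARD_GUIDELINES),
        "",
        "Domain terms:",
        *(f"- {term}: {meaning}" for term, meaning in glossary.items()),
        "",
        "Examples:",
        *(f"Q: {q}\nSQL: {sql}" for q, sql in examples),
        "",
        "Tables:",
        *(f"{name}: {meta}" for name, meta in tables.items()),
        "",
        f"Question: {question}",
    ]
    return "\n".join(lines)


prompt = build_prompt(
    "Top ten days by loan closings",
    {
        "loan_closings": "one row per closed loan; columns loan_id, closed_date",
        "calendar": "columns day, is_business_day",
    },
    [("How many loans closed yesterday?",
      "SELECT COUNT(*) FROM loan_closings WHERE closed_date = DATE('now', '-1 day')")],
    {"LTV": "loan-to-value ratio"},
)
print(prompt)
```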

Execution and Post-Processing

Once the LLM generates a SQL query, the system:

- Executes it against the appropriate database

- Post-processes the results to make them user-friendly

- Lets users view the results as is or ask follow-up questions
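Post-processing can be as simple as turning raw rows into a readable table. A minimal sketch, assuming rows arrive as dictionaries keyed by column name; the helper names and formatting rules (humanized headers, thousands separators, two decimals for floats) are our illustrative choices.

```python
def humanize(column: str) -> str:
    """'loan_count' -> 'Loan count'."""
    return column.replace("_", " ").capitalize()


def format_value(value: object) -> str:
    if isinstance(value, bool):  # check bool first: bool is a subclass of int
        return str(value)
    if isinstance(value, int):
        return f"{value:,}"
    if isinstance(value, float):
        return f"{value:,.2f}"
    return str(value)


def to_text_table(rows: list[dict]) -> str:
    """Render query rows as a readable plain-text table."""
    if not rows:
        return "No rows returned."
    columns = list(rows[0])
    headers = [humanize(c) for c in columns]
    body = [[format_value(row[c]) for c in columns] for row in rows]
    widths = [max(len(h), *(len(r[i]) for r in body)) for i, h in enumerate(headers)]
    lines = [" | ".join(h.ljust(w) for h, w in zip(headers, widths))]
    lines.append("-+-".join("-" * w for w in widths))
    lines += [" | ".join(v.ljust(w) for v, w in zip(r, widths)) for r in body]
    return "\n".join(lines)


print(to_text_table([{"closed_date": "2025-06-02", "loan_count": 1234, "avg_amount": 312500.5}]))
```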

For example, a user reviewing six months of loan data might ask:

“Can you show me some insight?”

The system then generates visualizations within seconds and highlights actionable trends.

Agentic framework: orchestrating complexity

So far, we have described a single agent handling queries within one domain. But organizations often need cross-domain insights.

Our agentic framework offers:

- A chief orchestrator agent

- Sub-agents with expertise in domains such as sales, marketing, and operations

- A dedicated knowledge base and customized prompts for each sub-agent

Currently, each agent operates within its own domain. The next step is multi-agent coordination, which will enable cross-domain queries without manual integration.
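The orchestrator and sub-agent split can be sketched as a router in front of domain agents. Here keyword overlap is a stand-in for the LLM-based routing a real orchestrator would use, each `SubAgent.handle` is a placeholder, and the domains and keywords are hypothetical. Matching the current setup, each question goes to exactly one domain.

```python
import re
from dataclasses import dataclass


@dataclass
class SubAgent:
    domain: str
    keywords: frozenset[str]  # hypothetical routing vocabulary for this domain

    def handle(self, question: str) -> str:
        # Placeholder: a real sub-agent would run its own retrieval,
        # re-ranking, prompting and SQL generation against its knowledge base.
        return f"[{self.domain}] {question}"


class Orchestrator:
    """Routes each question to the single best-matching domain sub-agent."""

    def __init__(self, agents: list[SubAgent]) -> None:
        self.agents = agents

    def ask(self, question: str) -> str:
        words = set(re.findall(r"[a-z]+", question.lower()))
        best = max(self.agents, key=lambda a: len(a.keywords & words))
        if not best.keywords & words:
            return "No domain matched; please rephrase the question."
        return best.handle(question)


orchestrator = Orchestrator([
    SubAgent("sales", frozenset({"loans", "closed", "revenue"})),
    SubAgent("marketing", frozenset({"campaign", "email", "channel"})),
    SubAgent("operations", frozenset({"processing", "turnaround", "backlog"})),
])
print(orchestrator.ask("How effective was last month's email campaign?"))
```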

Performance optimization: speed and cost matter

Two strategies have made a big difference:

Semantic caching

- Avoids unnecessary processing by reusing tables and prompts for semantically similar queries

- Requires careful tuning to handle subtleties like “last six months” vs. “last ten days”, which are phrased similarly but ask for different data
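A sketch of a semantic cache that handles the time-window subtlety explicitly: two questions share a cache entry only if they are similar enough and mention the same time windows. Bag-of-words cosine similarity stands in for embedding similarity, and the 0.75 threshold and `TIME_WINDOW` pattern are illustrative assumptions, not tuned values.

```python
import math
import re
from collections import Counter

# Time expressions such as "last six months" or "last 10 days".
TIME_WINDOW = re.compile(r"last\s+(\w+)\s+(day|week|month|quarter|year)s?", re.IGNORECASE)


def vectorize(text: str) -> Counter:
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    num = sum(a[t] * b[t] for t in a.keys() & b.keys())
    den = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return num / den if den else 0.0


class SemanticCache:
    """Reuse retrieved tables and prompts for near-duplicate questions,
    but only when their time windows match exactly."""

    def __init__(self, threshold: float = 0.75) -> None:
        self.threshold = threshold
        self.entries: list[tuple[Counter, list[tuple[str, str]], object]] = []

    @staticmethod
    def _windows(text: str) -> list[tuple[str, str]]:
        return sorted((n.lower(), unit.lower()) for n, unit in TIME_WINDOW.findall(text))

    def get(self, question: str):
        vec, windows = vectorize(question), self._windows(question)
        for cached_vec, cached_windows, value in self.entries:
            if cached_windows == windows and cosine(vec, cached_vec) >= self.threshold:
                return value
        return None

    def put(self, question: str, value) -> None:
        self.entries.append((vectorize(question), self._windows(question), value))


cache = SemanticCache()
cache.put("Count of loans closed in the last six months", {"tables": ["loan_closings"]})
print(cache.get("How many loans closed in the last six months?"))
print(cache.get("Count of loans closed in the last ten days"))
```

With this guard, the rephrased six-month question reuses the stored entry, while the ten-day question misses even though its word-level similarity to the cached question is just as high.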

Prompt caching

- Caches static prompt components

- Reduces computation and improves latency and cost efficiency
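Caching static prompt components relies on keeping the static parts of a prompt identical from call to call. A minimal sketch, assuming hypothetical per-domain static text in `STATIC_PARTS`: `functools.lru_cache` assembles each domain's prefix once, and `assemble_prompt` places that prefix before the only varying part, the question. Model providers that offer prompt caching generally reuse work only for an identical prompt prefix, so this ordering matters there too.

```python
from functools import lru_cache

# Hypothetical static prompt components per domain (guidelines, glossary, examples).
STATIC_PARTS = {
    "sales": (
        "Answer only from the table metadata provided.",
        "Use exact table and column names.",
    ),
}


@lru_cache(maxsize=None)
def static_prefix(domain: str) -> str:
    """Assemble a domain's static prompt text once and reuse it afterwards."""
    return "\n".join((f"You write SQL for the {domain} domain.", *STATIC_PARTS[domain]))


def assemble_prompt(domain: str, question: str) -> str:
    # Static text comes first and is identical across calls, so it forms a
    # stable prefix; only the question at the end varies.
    return f"{static_prefix(domain)}\n\nQuestion: {question}"


print(assemble_prompt("sales", "Total loans closed last week?"))
```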

These optimizations help scale Rocket Analytics across teams without slowing down queries.

Measuring success: how do we know it’s working?

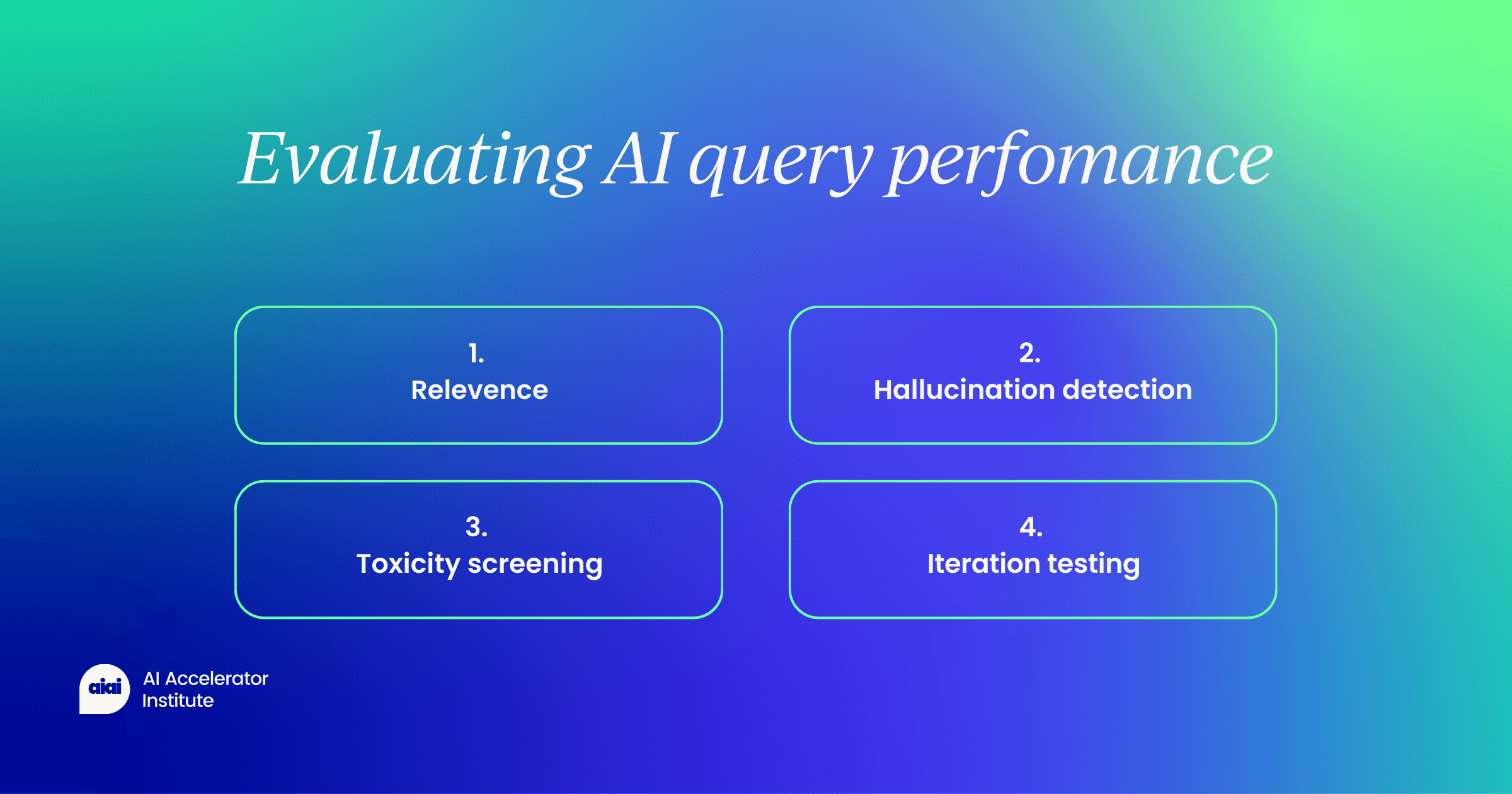

We evaluate the system on several key metrics:

- Relevance: are the retrieved tables actually relevant? We check this using an LLM-as-a-judge

- Hallucination detection: compares outputs against a golden dataset

- Toxicity screening: checks that all responses remain professional

We run each query multiple times, which gives a more reliable picture of performance and helps catch edge cases.
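One way to compare outputs against a golden dataset, sketched under assumptions: each golden entry pairs a question with a reference SQL query, a generated query passes when it returns the same rows (ignoring order), and each question is run several times to get a pass rate. The generator in the demo is a scripted stub; the LLM-as-a-judge relevance check and toxicity screening are not shown.

```python
import itertools
import sqlite3
from collections import Counter
from typing import Callable


def result_set(conn: sqlite3.Connection, sql: str) -> Counter:
    """Rows as an order-insensitive multiset, so equivalent queries compare equal."""
    return Counter(conn.execute(sql).fetchall())


def evaluate(
    conn: sqlite3.Connection,
    golden: list[tuple[str, str]],
    generate_sql: Callable[[str], str],
    runs: int = 5,
) -> dict[str, float]:
    """Run each golden question several times and return its pass rate."""
    report = {}
    for question, gold_sql in golden:
        expected = result_set(conn, gold_sql)
        passes = 0
        for _ in range(runs):
            try:
                if result_set(conn, generate_sql(question)) == expected:
                    passes += 1
            except sqlite3.Error:
                pass  # SQL that fails to execute counts as a failed run
        report[question] = passes / runs
    return report


# Demo with a scripted stand-in for the model: it alternates between a
# correct query and one that references a misspelled table.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE loans (loan_id INTEGER PRIMARY KEY, closed_date TEXT)")
conn.executemany("INSERT INTO loans (closed_date) VALUES (?)", [("2025-06-02",), ("2025-06-03",)])
outputs = itertools.cycle(["SELECT COUNT(loan_id) FROM loans", "SELECT COUNT(*) FROM loanz"])
golden = [("How many loans closed?", "SELECT COUNT(*) FROM loans")]
report = evaluate(conn, golden, lambda q: next(outputs), runs=4)
print(report)  # {'How many loans closed?': 0.5}
```

Comparing result sets rather than SQL text means that two differently written but equivalent queries both count as correct.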

Real-world impacts: who benefits and how?

Rocket Analytics helps teams across the organization:

Sales and marketing

- Analyze campaign effectiveness

- Identify seasonal trends

- Compare strategies across segments

Operations

- Monitor performance metrics

- Optimize processes

Finance

- Generate instant reports

- Analyze trends

Previously, analysts needed days to produce these insights; now they are available in seconds.

The human element: trust but verify

AI accelerates insight, but human oversight remains important:

- Human-in-the-loop checkpoints for high-risk queries

- Regular audits to catch irregularities or emerging errors

Together, these checks preserve speed and accuracy without compromising trust.
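The talk doesn't spell out what makes a query high-risk, so the rules below are purely hypothetical: a sketch of a checkpoint that sends generated SQL to a human reviewer when it would modify data or read tables on a sensitive list. Regex matching is a crude stand-in for a real SQL parser.

```python
import re

# Hypothetical policy inputs; a real deployment would define its own.
SENSITIVE_TABLES = {"borrower_pii", "employee_compensation"}
WRITE_KEYWORDS = re.compile(
    r"\b(insert|update|delete|drop|alter|create|merge|truncate)\b", re.IGNORECASE
)
TABLE_REFERENCE = re.compile(r"\b(?:from|join)\s+([A-Za-z_][\w.]*)", re.IGNORECASE)


def review_reasons(sql: str) -> list[str]:
    """Return why a generated query needs a human checkpoint; [] means auto-approve."""
    reasons = []
    if WRITE_KEYWORDS.search(sql):
        reasons.append("modifies data or schema")
    tables = {t.lower() for t in TABLE_REFERENCE.findall(sql)}
    sensitive = sorted(tables & SENSITIVE_TABLES)
    if sensitive:
        reasons.append("reads sensitive tables: " + ", ".join(sensitive))
    return reasons


print(review_reasons("SELECT * FROM borrower_pii"))
```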

Looking ahead: development continues

Our roadmap includes:

- Multi-agent coordination for cross-domain insights

- Continuous performance improvement

- Expanded domain coverage for more teams

By democratizing data access, Rocket Analytics enables any team member to make data-driven decisions without SQL expertise.

Final thoughts

Building a text-to-SQL system with agentic RAG taught us:

- AI can enhance, not replace, human intelligence

- Speed, accuracy, and accessibility matter more than sheer complexity

- Thoughtful implementation and user-centric design are key

By removing technical barriers, Rocket Analytics empowers more people to explore, analyze, and act on data, creating a competitive advantage.

The goal is not to build the most sophisticated AI but to build the most useful AI.

Arjun Barley, Staff Data Scientist at Rocket Mortgage, gave this presentation at the Agentic AI Summit, New York, 2025.