How do you design an LLM agent that decides for itself, without hand-tuned heuristics or additional controllers, what to store in long-term memory, what to keep in short-term context, and what to discard? Can a single policy learn to manage both memory types through the same action space as text generation?

Researchers from Alibaba Group and Wuhan University introduce Agentic Memory, or AgeMem, a framework that lets large language model agents learn how to manage both long-term and short-term memory as part of a single policy. Instead of relying on hand-written rules or external controllers, the agent uses memory tools integrated into the model’s action space to decide when to store, retrieve, summarize, and forget.

Why do current LLM agents struggle with memory?

Most agent frameworks treat memory as two loosely coupled systems.

Long-term memory stores user profiles, task information, and past interactions across sessions. Short-term memory is the current context window, which holds the active dialogue and retrieved documents.

Existing systems design these two parts separately. Long-term memory is controlled through an external store such as a vector database with simple add and retrieve triggers. Short-term memory is managed with retrieval augmented generation, sliding windows or summary schedules.

This separation creates several issues.

- Long-term and short-term memory are optimized independently. Their interaction is not trained end to end.

- Heuristics decide when to write to memory and when to summarize. These rules are brittle and miss rare but important events.

- Additional controllers or specialist models increase cost and system complexity.

AgeMem removes the external controller and turns memory operations over to the agent policy itself.

Memory as tools in the agent action space

In AgeMem, memory operations are exposed as tools. At each step, the model can emit either normal text tokens or tool calls. The framework defines 6 tools.

For long-term memory:

- `ADD` stores a new memory item with content and metadata.

- `UPDATE` modifies an existing memory entry.

- `DELETE` removes obsolete or low-value items.

For short-term memory:

- `RETRIEVE` performs semantic search over long-term memory and injects the retrieved items into the current context.

- `SUMMARY` compresses spans of dialogue into shorter summaries.

- `FILTER` removes context segments that are not useful for future reasoning.
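To make the tool interface concrete, here is a minimal Python sketch of the six operations acting on a toy state. It is an illustration under stated assumptions, not AgeMem's implementation: the class `MemoryAgentState`, the `dispatch` helper, the `{"name", "args"}` call format, and the word-overlap ranking that stands in for semantic search are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryAgentState:
    """Toy state: a long-term store plus a short-term context (hypothetical)."""
    long_term: dict = field(default_factory=dict)  # item id -> {"content", "metadata"}
    context: list = field(default_factory=list)    # short-term context segments
    next_id: int = 0

    # Long-term memory tools
    def add(self, content, metadata=None):
        item_id = self.next_id
        self.long_term[item_id] = {"content": content, "metadata": metadata or {}}
        self.next_id += 1
        return item_id

    def update(self, item_id, content):
        self.long_term[item_id]["content"] = content

    def delete(self, item_id):
        self.long_term.pop(item_id, None)

    # Short-term memory tools
    def retrieve(self, query, top_k=1):
        # Assumption: shared lowercase words stand in for real semantic search.
        q = set(query.lower().split())
        ranked = sorted(
            self.long_term.values(),
            key=lambda item: len(q & set(item["content"].lower().split())),
            reverse=True,
        )
        hits = [item["content"] for item in ranked[:top_k]]
        self.context.extend(hits)  # inject retrieved items into the context
        return hits

    def summary(self, summarize):
        # `summarize` is supplied by the caller; here it is just a function.
        self.context = [summarize(self.context)]

    def filter(self, keep):
        self.context = [seg for seg in self.context if keep(seg)]


def dispatch(state, call):
    """Route one tool call of the form {"name": ..., "args": {...}}."""
    tools = {
        "ADD": state.add, "UPDATE": state.update, "DELETE": state.delete,
        "RETRIEVE": state.retrieve, "SUMMARY": state.summary, "FILTER": state.filter,
    }
    return tools[call["name"]](**call.get("args", {}))


state = MemoryAgentState()
dispatch(state, {"name": "ADD", "args": {"content": "User prefers metric units"}})
dispatch(state, {"name": "RETRIEVE", "args": {"query": "which units does the user prefer"}})
```

In this sketch the long-term tools only touch `long_term`, while `RETRIEVE`, `SUMMARY`, and `FILTER` all rewrite `context`, which mirrors the grouping in the list above.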

The interaction protocol has a structured format. Each step starts with a

Three-stage reinforcement learning for integrated memory

AgeMem is trained with reinforcement learning in a way that couples long-term and short-term memory behavior.

The state at time t includes the current conversation context, the long-term memory store, and the task specification. The policy chooses either a token or a tool call as the action. The training trajectory for each sample is divided into 3 stages:

- Stage 1, long-term memory formation: The agent interacts in a casual setting and observes information that will become relevant later. It uses `ADD`, `UPDATE`, and `DELETE` to build and maintain long-term memory. The short-term context grows naturally during this phase.

- Stage 2, short-term memory control under distractors: The short-term context is reset. Long-term memory persists. The agent now receives distractor content that is related but not essential. It must manage short-term memory using `SUMMARY` and `FILTER` to keep useful material and remove noise.

- Stage 3, integrated reasoning: The final query arrives. The agent retrieves from long-term memory using `RETRIEVE`, controls the short-term context, and generates the answer.
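The three stages can be sketched as a toy rollout loop. This is a simplified illustration, not the training code: the function `run_three_stage_episode`, the `policy(stage, context, long_term)` signature, and the reduction of each tool to a one-line list operation are assumptions made for the example.

```python
def run_three_stage_episode(policy, stage1_turns, stage2_distractors, query):
    """Toy rollout: long-term memory persists, short-term context resets after Stage 1."""
    long_term = []  # persists across all three stages
    context = []    # short-term context
    trace = []      # (stage, action) pairs, kept for later credit assignment

    # Stage 1: observe information and build long-term memory.
    for turn in stage1_turns:
        context.append(turn)
        action = policy(1, context, long_term)
        trace.append((1, action))
        if action == "ADD":
            long_term.append(turn)

    context = []  # reset short-term context; long_term is left untouched

    # Stage 2: distractors arrive; the agent may drop them from the context.
    for turn in stage2_distractors:
        context.append(turn)
        action = policy(2, context, long_term)
        trace.append((2, action))
        if action == "FILTER":
            context.pop()

    # Stage 3: the query arrives; retrieval is the only route back to Stage 1 facts.
    context.append(query)
    action = policy(3, context, long_term)
    trace.append((3, action))
    if action == "RETRIEVE":
        context.extend(long_term)
    return context, long_term, trace


def scripted_policy(stage, context, long_term):
    # Hypothetical fixed policy, used only to exercise the loop.
    return {1: "ADD", 2: "FILTER", 3: "RETRIEVE"}[stage]


context, long_term, trace = run_three_stage_episode(
    scripted_policy,
    ["Alice lives in Paris"],
    ["Paris has many cafes"],
    "Where does Alice live?",
)
```

With the scripted policy, the final context holds only the query and the retrieved Stage 1 fact, because the reset removed the original copy and the distractor was filtered out.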

The important detail is that long-term memory persists across all stages while short-term memory is cleared between Stage 1 and Stage 2. This design forces the model to rely on retrieval rather than residual context and highlights realistic long-term dependencies.

Reward design and step-wise GRPO

AgeMem uses a step-wise variant of Group Relative Policy Optimization (GRPO). For each task, the system samples several trajectories that form a group. A terminal reward is calculated for each trajectory, then normalized within the group to obtain an advantage signal. This advantage is broadcast to all steps of the trajectory so that intermediate tool choices can be trained using the final outcome.
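The group normalization and broadcast described above can be sketched in a few lines of Python. The article only says rewards are normalized within the group; the mean and standard deviation normalization below follows the common GRPO formulation and is an assumption here, as are the function names and the `eps` stabilizer.

```python
from statistics import mean, pstdev


def group_relative_advantages(terminal_rewards, eps=1e-8):
    """Normalize each trajectory's terminal reward against its sampling group."""
    mu = mean(terminal_rewards)
    sigma = pstdev(terminal_rewards)  # population std over the group (assumption)
    return [(r - mu) / (sigma + eps) for r in terminal_rewards]


def broadcast_to_steps(advantages, steps_per_trajectory):
    """Give every step of a trajectory that trajectory's single advantage value."""
    return [[a] * n for a, n in zip(advantages, steps_per_trajectory)]


# Four sampled trajectories for one task, with different lengths.
rewards = [0.2, 0.9, 0.5, 0.4]
advantages = group_relative_advantages(rewards)
per_step = broadcast_to_steps(advantages, [3, 5, 4, 2])
```

Because every step inherits the same trajectory-level value, an early `ADD` in Stage 1 receives the same credit as the final answer tokens it helped produce.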

The total reward has three main components:

- A task reward that scores answer quality between 0 and 1 using an LLM judge.

- A context reward that measures the quality of short-term memory operations, including compression, early summarization, and preservation of query-relevant content.

- A memory reward that measures long-term memory quality, including the fraction of high-quality stored items, the usefulness of maintenance operations, and the relevance of retrieved items to the query.

Equal weights are used for these three components so that each contributes equally to the learning signal. A penalty term is added when the agent exceeds the maximum allowed dialogue length or when the context exceeds the limit.
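As a rough sketch of how such a reward could be assembled, the function below sums the three components with one shared weight and subtracts a penalty for each violated limit. The function name, the parameter names, the default weight of 1.0, and the penalty size of 0.5 are illustrative assumptions; the article does not give these values.

```python
def total_reward(task, context, memory, turns, context_tokens,
                 max_turns, max_context_tokens, weight=1.0, penalty=0.5):
    """Combine task, context, and memory rewards with equal weights, minus penalties."""
    reward = weight * (task + context + memory)  # one shared weight = equal weighting
    if turns > max_turns:
        reward -= penalty  # too many dialogue turns
    if context_tokens > max_context_tokens:
        reward -= penalty  # context overflow
    return reward


# Within limits: only the weighted sum counts.
ok = total_reward(1.0, 0.5, 0.5, turns=3, context_tokens=100,
                  max_turns=10, max_context_tokens=1000)

# Context overflow: same components, one penalty applied.
overflow = total_reward(1.0, 0.5, 0.5, turns=3, context_tokens=2000,
                        max_turns=10, max_context_tokens=1000)
```

Whether the equal weights sum or average the components only rescales the reward, which the group normalization in GRPO would largely absorb.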

Experimental setup and main results

The research team fine-tuned AgeMem on the HotpotQA training split and evaluated it on 5 benchmarks:

- ALFWorld for text-based embodied tasks.

- ScienceWorld (SciWorld) for science-themed environments.

- BabyAI for instruction following.

- PDDL tasks for planning.

- HotpotQA for multi-hop question answering.

Metrics include success rate for ALFWorld, ScienceWorld and BabyAI, progress rate for PDDL tasks, and an LLM judge score for HotpotQA. They also define a memory quality metric using an LLM evaluator that compares stored memories with the HotpotQA supporting facts.

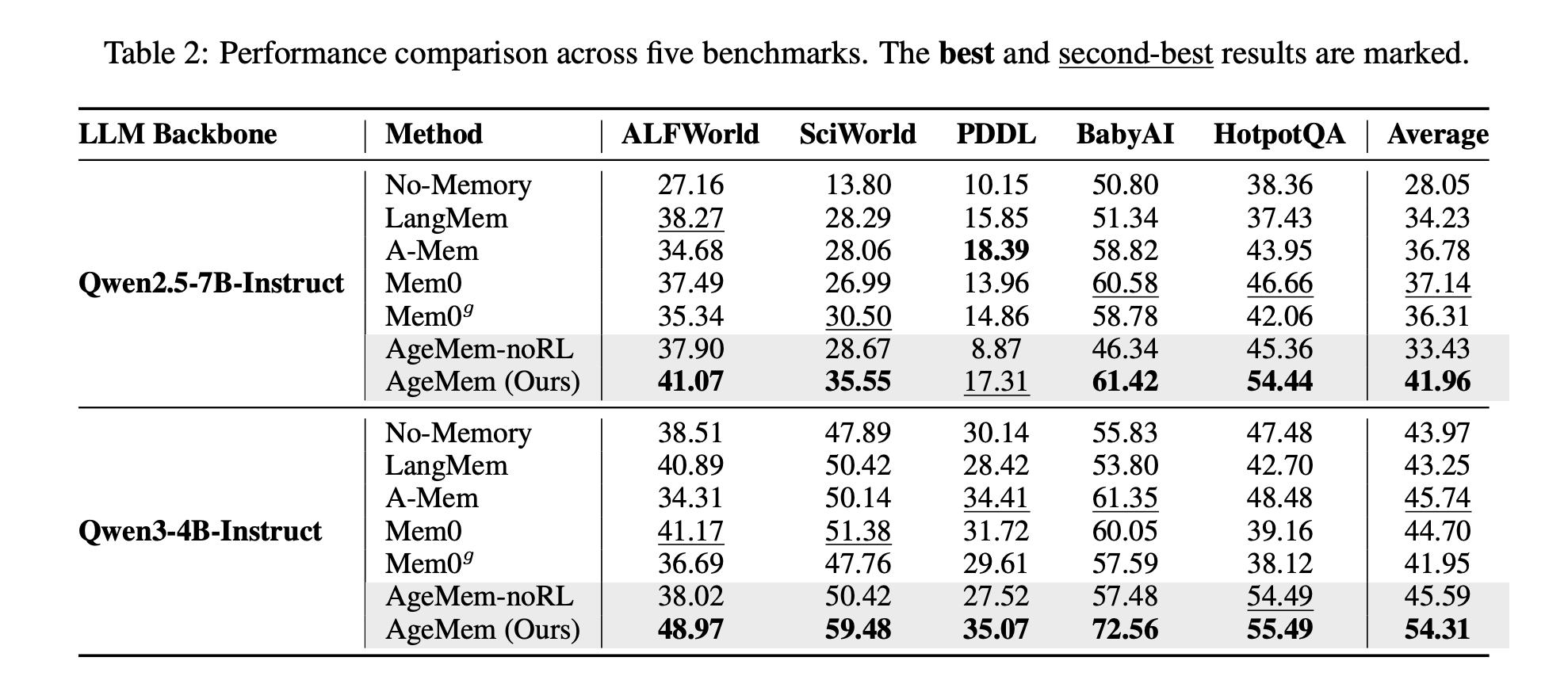

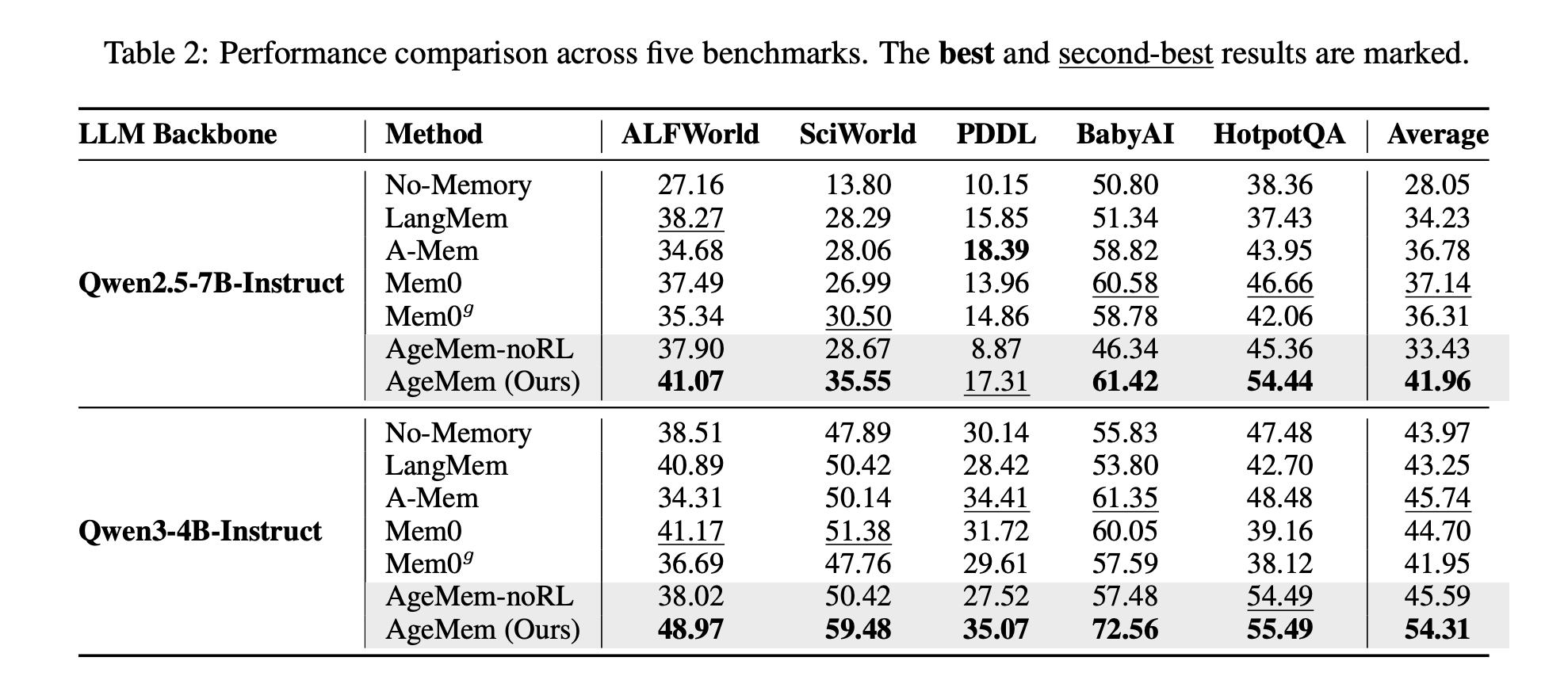

The baselines include LangMem, A Mem, Mem0, Mem0g, and a no-memory agent. The backbones are Qwen2.5-7B-Instruct and Qwen3-4B-Instruct.

On Qwen2.5-7B-Instruct, AgeMem reaches an average score of 41.96 across 5 benchmarks, while the best baseline, Mem0, reaches 37.14. On Qwen3-4B-Instruct, AgeMem reaches 54.31 compared to 45.74 for the best baseline, A Mem.
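For a quick sense of scale, the short script below computes the absolute and relative gains from the four averages quoted above. Only those four numbers come from the article; the dictionary layout and the `gains` helper are illustrative.

```python
# Average scores quoted in the article (AgeMem vs. the best baseline per backbone).
results = {
    "Qwen2.5-7B-Instruct": {"AgeMem": 41.96, "best_baseline": 37.14},
    "Qwen3-4B-Instruct": {"AgeMem": 54.31, "best_baseline": 45.74},
}


def gains(agemem, baseline):
    """Return (absolute gain in points, relative gain in percent)."""
    absolute = agemem - baseline
    relative = 100.0 * absolute / baseline
    return absolute, relative


for backbone, scores in results.items():
    absolute, relative = gains(scores["AgeMem"], scores["best_baseline"])
    print(f"{backbone}: +{absolute:.2f} points ({relative:.1f}% relative)")
```

The absolute gains are 4.82 points on Qwen2.5-7B-Instruct and 8.57 points on Qwen3-4B-Instruct, so the margin over the best baseline is larger on the smaller Qwen3 backbone.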

Memory quality also improves. On HotpotQA, AgeMem reaches 0.533 with Qwen2.5-7B and 0.605 with Qwen3-4B, both higher than all baselines.

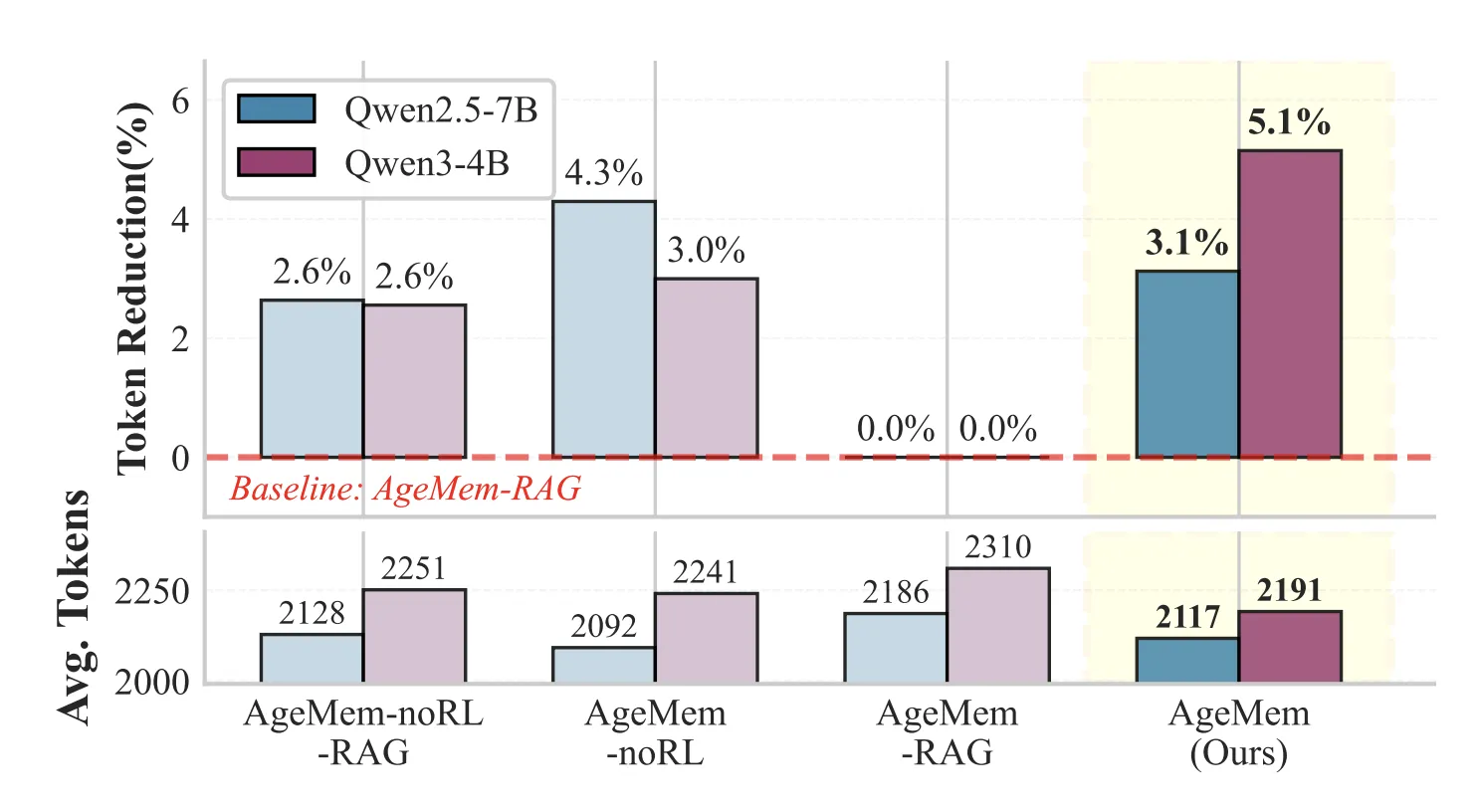

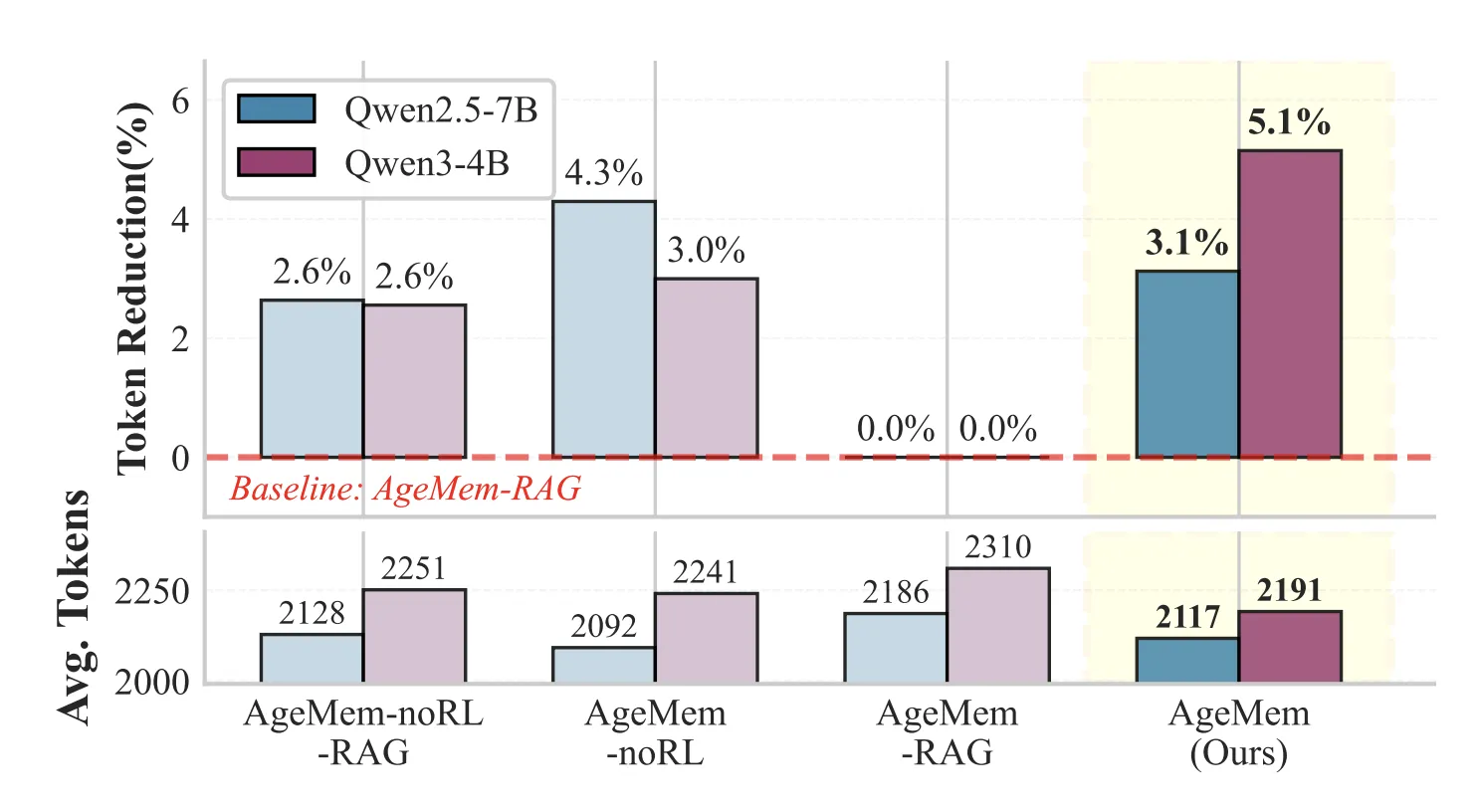

Short-term memory tools reduce prompt length while preserving performance. On HotpotQA, configurations with the STM tools use about 3 to 5 percent fewer tokens per prompt than variants that replace the STM tools with a retrieval pipeline.

Ablation studies confirm that each component matters. Simply adding long-term memory tools on top of a no-memory baseline already provides clear gains. Adding reinforcement learning to these tools improves the scores further. The full system, with both long-term and short-term tools and RL, delivers an improvement of 21.7 percentage points over the no-memory baseline on ScienceWorld.

Implications for LLM agent design

AgeMem suggests a design pattern for future agentic systems. Memory should be handled as part of the learned policy, not as two external sub-systems. By turning storage, retrieval, summarization, and filtering into explicit tools and training them jointly with language generation, the agent learns when to remember, when to forget, and how to efficiently manage context over long periods of time.

Key takeaways

- AgeMem turns memory operations into explicit tools, so the same policy that generates text also decides when to `ADD`, `UPDATE`, `DELETE`, `RETRIEVE`, `SUMMARY`, and `FILTER` memory.

- Long-term and short-term memory are jointly trained through a three-stage RL setup, where long-term memory persists across all stages and the short-term context is reset to enforce retrieval-based reasoning.

- The reward function combines task accuracy, context management quality, and long-term memory quality with equal weighting, as well as penalties for context overflow and excessive dialogue length.

- On ALFWorld, ScienceWorld, BabyAI, PDDL tasks and HotpotQA, AgeMem on Qwen2.5-7B and Qwen3-4B consistently outperforms memory baselines like LangMem, A Mem and Mem0 on average score and memory quality metrics.

- Short-term memory tools reduce prompt length by about 3 to 5 percent compared to a RAG-style baseline while maintaining or improving performance, suggesting that learned summarization and filtering can replace handcrafted context management rules.


Asif Razzaq is the CEO of Marktechpost Media Inc. As a visionary entrepreneur and engineer, Asif is committed to harnessing the potential of Artificial Intelligence for social good. His most recent endeavor is the launch of Marktechpost, an Artificial Intelligence media platform known for its in-depth coverage of Machine Learning and Deep Learning news that is both technically sound and easily understood by a wide audience. The platform has over 2 million monthly views, which shows its popularity among readers.