In this tutorial, we demonstrate how we use Ibis to create a portable, in-database feature engineering pipeline that looks and feels like Pandas but executes entirely inside the database. We show how we connect to DuckDB, safely register data inside the backend, and define complex transformations using window functions and aggregations without pulling raw data into local memory. By keeping all transformations lazy and backend-agnostic, we demonstrate how to write analytics code once in Python and rely on Ibis to translate it into efficient SQL.

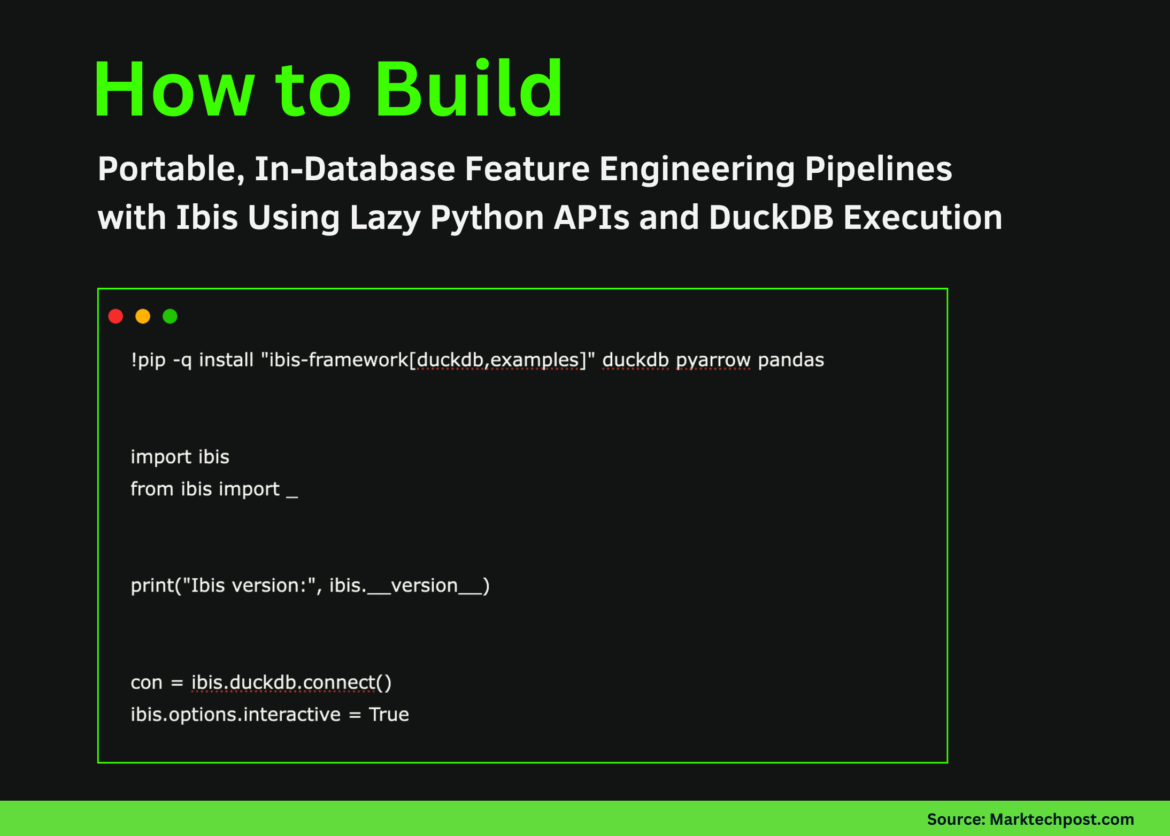

    !pip -q install "ibis-framework[duckdb,examples]" duckdb pyarrow pandas

    import ibis
    from ibis import _

    print("Ibis version:", ibis.__version__)

    # In-memory DuckDB connection; no data is loaded yet
    con = ibis.duckdb.connect()

    # Interactive mode evaluates an expression when it is displayed
    ibis.options.interactive = True

We install the necessary libraries and set up the Ibis environment. We establish a DuckDB connection and enable interactive mode, so an expression is evaluated only when we display it. Building expressions stays lazy and backend-driven.
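To illustrate what "lazy" means here, the following is a toy model of a deferred expression that only records SQL text and computes nothing until it is compiled. It is not Ibis and does not reflect how Ibis is implemented; `Expr`, `col`, and `compile_select` are hypothetical names invented for this sketch.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Expr:
    """Toy deferred expression: holds SQL text, performs no computation."""

    sql: str

    def __truediv__(self, other: "Expr") -> "Expr":
        # Dividing two expressions builds a larger expression, not a number
        return Expr(f"({self.sql} / {other.sql})")

    def mean(self) -> "Expr":
        return Expr(f"AVG({self.sql})")


def col(name: str) -> Expr:
    """Reference a column by name (hypothetical helper)."""
    return Expr(f'"{name}"')


def compile_select(table: str, **named: Expr) -> str:
    """Render the named expressions as a single SELECT statement."""
    cols = ", ".join(f"{expr.sql} AS {alias}" for alias, expr in named.items())
    return f"SELECT {cols} FROM {table}"


# Nothing is computed here; we only describe the calculation
bill_ratio = col("bill_length_mm") / col("bill_depth_mm")
query = compile_select("penguins", bill_ratio=bill_ratio)
print(query)
```

The design point is that the Python objects describe a query, and the work happens only when a backend runs the generated SQL.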

    # Fall back if this Ibis version's fetch() does not accept `backend`
    try:
        base_expr = ibis.examples.penguins.fetch(backend=con)
    except TypeError:
        base_expr = ibis.examples.penguins.fetch()

    # Register the data in the DuckDB catalog if it is not already there
    if "penguins" not in con.list_tables():
        try:
            con.create_table("penguins", base_expr, overwrite=True)
        except Exception:
            # Fallback: materialize locally, then upload the DataFrame
            con.create_table("penguins", base_expr.execute(), overwrite=True)

    t = con.table("penguins")
    print(t.schema())

We load the penguins dataset and explicitly register it inside the DuckDB catalog to ensure it is available for SQL execution. We then print the table schema to verify that the table is reachable through the DuckDB connection rather than held only as a local DataFrame.

    def penguin_feature_pipeline(penguins):
        # Row-level derived features
        base = penguins.mutate(
            bill_ratio=_.bill_length_mm / _.bill_depth_mm,
            is_male=(_.sex == "male").ifelse(1, 0),
        )

        # Drop rows with missing values in any column used downstream
        cleaned = base.filter(
            _.bill_length_mm.notnull()
            & _.bill_depth_mm.notnull()
            & _.body_mass_g.notnull()
            & _.flipper_length_mm.notnull()
            & _.species.notnull()
            & _.island.notnull()
            & _.year.notnull()
        )

        w_species = ibis.window(group_by=cleaned.species)

        # Row-based frame: the current row plus the two preceding rows
        # within each island, ordered by year
        w_island_year = ibis.window(
            group_by=cleaned.island,
            order_by=cleaned.year,
            preceding=2,
            following=0,
        )

        feat = cleaned.mutate(
            species_avg_mass=cleaned.body_mass_g.mean().over(w_species),
            species_std_mass=cleaned.body_mass_g.std().over(w_species),
            mass_z=(
                cleaned.body_mass_g
                - cleaned.body_mass_g.mean().over(w_species)
            ) / cleaned.body_mass_g.std().over(w_species),
            island_mass_rank=cleaned.body_mass_g.rank().over(
                ibis.window(group_by=cleaned.island)
            ),
            rolling_3yr_island_avg_mass=cleaned.body_mass_g.mean().over(
                w_island_year
            ),
        )

        # Aggregate to one row per (species, island, year)
        return feat.group_by(("species", "island", "year")).agg(
            n=feat.count(),
            avg_mass=feat.body_mass_g.mean(),
            avg_flipper=feat.flipper_length_mm.mean(),
            avg_bill_ratio=feat.bill_ratio.mean(),
            avg_mass_z=feat.mass_z.mean(),
            avg_rolling_3yr_mass=feat.rolling_3yr_island_avg_mass.mean(),
            pct_male=feat.is_male.mean(),
        ).order_by(("species", "island", "year"))

We define a reusable feature engineering pipeline using pure Ibis expressions. We compute derived features, filter out rows with missing values, and use window functions and grouped aggregations to build database-native features while keeping the entire pipeline lazy.
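To make the `mass_z` feature concrete, here is a minimal plain-Python sketch of the same per-group standardization: each value minus its group mean, divided by its group standard deviation. The rows are made-up toy values rather than the penguins data, `zscores_by_group` is a hypothetical helper, and the sketch assumes the sample standard deviation, which we believe matches the default of `std()` in Ibis.

```python
from collections import defaultdict
from statistics import mean, stdev


def zscores_by_group(rows, key, value):
    """Return one z-score per row, standardized within the row's group."""
    groups = defaultdict(list)
    for row in rows:
        groups[row[key]].append(row[value])

    # Sample standard deviation (n - 1); each group needs at least two rows
    stats = {g: (mean(vals), stdev(vals)) for g, vals in groups.items()}

    return [
        (row[value] - stats[row[key]][0]) / stats[row[key]][1]
        for row in rows
    ]


# Toy rows for illustration only (not real measurements)
toy_rows = [
    {"species": "A", "body_mass_g": 3000},
    {"species": "A", "body_mass_g": 3500},
    {"species": "A", "body_mass_g": 4000},
    {"species": "B", "body_mass_g": 5000},
    {"species": "B", "body_mass_g": 6000},
]

z_scores = zscores_by_group(toy_rows, key="species", value="body_mass_g")
for row, z in zip(toy_rows, z_scores):
    print(row["species"], row["body_mass_g"], round(z, 4))
```

In the pipeline, the same arithmetic is expressed with `.over(w_species)`, so the database computes the group statistics instead of Python.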

    # Build the lazy expression by calling the pipeline on the registered table
    features = penguin_feature_pipeline(t)

    # Inspect the DuckDB SQL that Ibis generates for the whole pipeline
    print(con.compile(features))

    try:
        df = features.to_pandas()
    except Exception:
        df = features.execute()

    # display() is available in notebook environments
    display(df.head())

We invoke the feature pipeline and compile it into DuckDB SQL to verify that all transformations are pushed down to the database. We then run the pipeline and return only the final aggregated results for inspection.
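When reading the compiled SQL, the rolling window is the part most worth checking. A window with `preceding=2, following=0` should appear as a row-based frame, so it averages the current row and the two rows before it rather than three calendar years. The sketch below uses hand-written SQL and Python's built-in `sqlite3` as a stand-in for DuckDB, with made-up rows; it is not the SQL that Ibis emits, only an illustration of the frame semantics.

```python
import sqlite3

# Stand-in database; window functions need SQLite 3.25 or newer
sqlite_con = sqlite3.connect(":memory:")
sqlite_con.execute(
    "CREATE TABLE toy (island TEXT, year INTEGER, body_mass_g REAL)"
)
sqlite_con.executemany(
    "INSERT INTO toy VALUES (?, ?, ?)",
    [
        ("X", 2007, 3000.0),
        ("X", 2008, 3600.0),
        ("X", 2009, 4200.0),
        ("Y", 2007, 5000.0),
    ],
)

# Trailing frame: the current row plus up to two preceding rows per island
frame_sql = """
SELECT island, year,
       AVG(body_mass_g) OVER (
           PARTITION BY island
           ORDER BY year
           ROWS BETWEEN 2 PRECEDING AND CURRENT ROW
       ) AS rolling_avg
FROM toy
ORDER BY island, year
"""

rolling_rows = sqlite_con.execute(frame_sql).fetchall()
for r in rolling_rows:
    print(r)
```

Because several rows can share the same year in real data, a row-based frame and a year-based range can give different results, which is a good reason to inspect the generated SQL before trusting a feature name.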

    # Materialize the features as a table inside DuckDB
    con.create_table("penguin_features", features, overwrite=True)
    feat_tbl = con.table("penguin_features")

    try:
        preview = feat_tbl.limit(10).to_pandas()
    except Exception:
        preview = feat_tbl.limit(10).execute()

    display(preview)

    # Colab-style path; adjust for your environment
    out_path = "/content/penguin_features.parquet"
    con.raw_sql(f"COPY penguin_features TO '{out_path}' (FORMAT PARQUET);")
    print(out_path)

We materialize the engineered features as a table directly inside DuckDB and lazily query it for validation. We also export the results to a Parquet file, demonstrating how we can hand off database-computed features to downstream analytics or machine learning workflows.
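The export step interpolates a file path into a SQL string, which breaks if the path ever contains a single quote. A minimal sketch of one way to guard against that is shown below, using the standard SQL convention of doubling single quotes inside a string literal. `sql_string_literal` and `build_copy_statement` are hypothetical helpers, not part of Ibis or DuckDB.

```python
def sql_string_literal(value: str) -> str:
    """Quote a Python string as a SQL string literal by doubling single quotes."""
    return "'" + value.replace("'", "''") + "'"


def build_copy_statement(table: str, path: str) -> str:
    """Build a COPY ... TO statement with a safely quoted path.

    The table name is inserted as-is, so it should come from trusted code.
    """
    return f"COPY {table} TO {sql_string_literal(path)} (FORMAT PARQUET);"


statement = build_copy_statement(
    "penguin_features", "/content/penguin_features.parquet"
)
print(statement)
```

The resulting string can be passed to `con.raw_sql(...)` in place of the inline f-string.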

In conclusion, we built, compiled, and executed an advanced feature engineering workflow entirely inside DuckDB using Ibis. We demonstrated how to inspect the generated SQL, materialize the results directly in the database, and export them for downstream use while preserving portability across analytical backends. This approach reinforces the core idea behind Ibis: we keep computation close to the data, minimize unnecessary data movement, and maintain a single, reusable Python codebase that spans from local experimentation to production databases.


Asif Razzaq is the CEO of Marktechpost Media Inc. As a visionary entrepreneur and engineer, Asif is committed to harnessing the potential of Artificial Intelligence for social good. His most recent endeavor is the launch of Marktechpost, an Artificial Intelligence media platform known for in-depth coverage of Machine Learning and Deep Learning news that is both technically sound and easily understood by a wide audience. The platform has over 2 million monthly views.