
INSTANCE_I = int(np.clip(INSTANCE_I, 0, len(X_test)-1))

x = X_test.iloc[INSTANCE_I].values

y_true = float(y_test.iloc[INSTANCE_I])

pred = float(model.predict([x])[0])

iv = explainer.explain(x, budget=int(BUDGET_LOCAL), random_state=0)

baseline = float(getattr(iv, "baseline_value", 0.0))

main_effects = extract_main_effects(iv, feature_names)

pair_df = extract_pair_matrix(iv, feature_names)

print("\n" + "="*90)

print("LOCAL EXPLANATION (single test instance)")

print("="*90)

print(f"Index={INDEX} | max_order={MAX_ORDER} | budget={BUDGET_LOCAL} | instance={INSTANCE_I}")

print(f"Prediction: {pred:.6f} | True: {y_true:.6f} | Baseline (if available): {baseline:.6f}")

print("\nTop main effects (signed):")

display(main_effects.reindex(main_effects.abs().sort_values(ascending=False).head(TOP_K).index).to_frame())

print("\nASCII view (signed main effects, top-k):")

print(ascii_bar(main_effects, top_k=TOP_K))

print("\nTop pairwise interactions by |value| (local):")

# Walk the upper triangle (i < j) so each unordered feature pair appears once.
pairs = []
for i in range(n_features):
    for j in range(i+1, n_features):
        v = float(pair_df.iat[i, j])
        pairs.append((feature_names[i], feature_names[j], v, abs(v)))

# Keep at most the 25 strongest interactions by absolute value.
pairs_df = (
    pd.DataFrame(pairs, columns=["feature_i", "feature_j", "interaction", "abs_interaction"])
    .sort_values("abs_interaction", ascending=False)
    .head(min(25, len(pairs)))
)

display(pairs_df)
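
The pair-ranking step above can also be written without the explicit nested loop. The following is only a minimal sketch, not the notebook's own code: `top_pairs`, `toy_names`, and `toy_matrix` are hypothetical names introduced for illustration, and the matrix values are made up. It assumes the interaction matrix is square and indexable as `matrix[i][j]`.

```python
from itertools import combinations


def top_pairs(matrix, names, k=25):
    """Rank unordered feature pairs (i < j) by absolute interaction value."""
    rows = [
        (names[i], names[j], float(matrix[i][j]), abs(float(matrix[i][j])))
        for i, j in combinations(range(len(names)), 2)  # upper triangle only
    ]
    rows.sort(key=lambda r: r[3], reverse=True)  # largest |interaction| first
    return rows[:k]


# Toy symmetric interaction matrix (made-up values, for illustration only).
toy_names = ["a", "b", "c"]
toy_matrix = [
    [0.0, 0.2, -0.5],
    [0.2, 0.0, 0.1],
    [-0.5, 0.1, 0.0],
]

ranked = top_pairs(toy_matrix, toy_names, k=2)
print(ranked)
```

Under the same assumptions, a call such as `top_pairs(pair_df.values, feature_names)` should return tuples that can be passed to `pd.DataFrame` with the same four column names used above.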

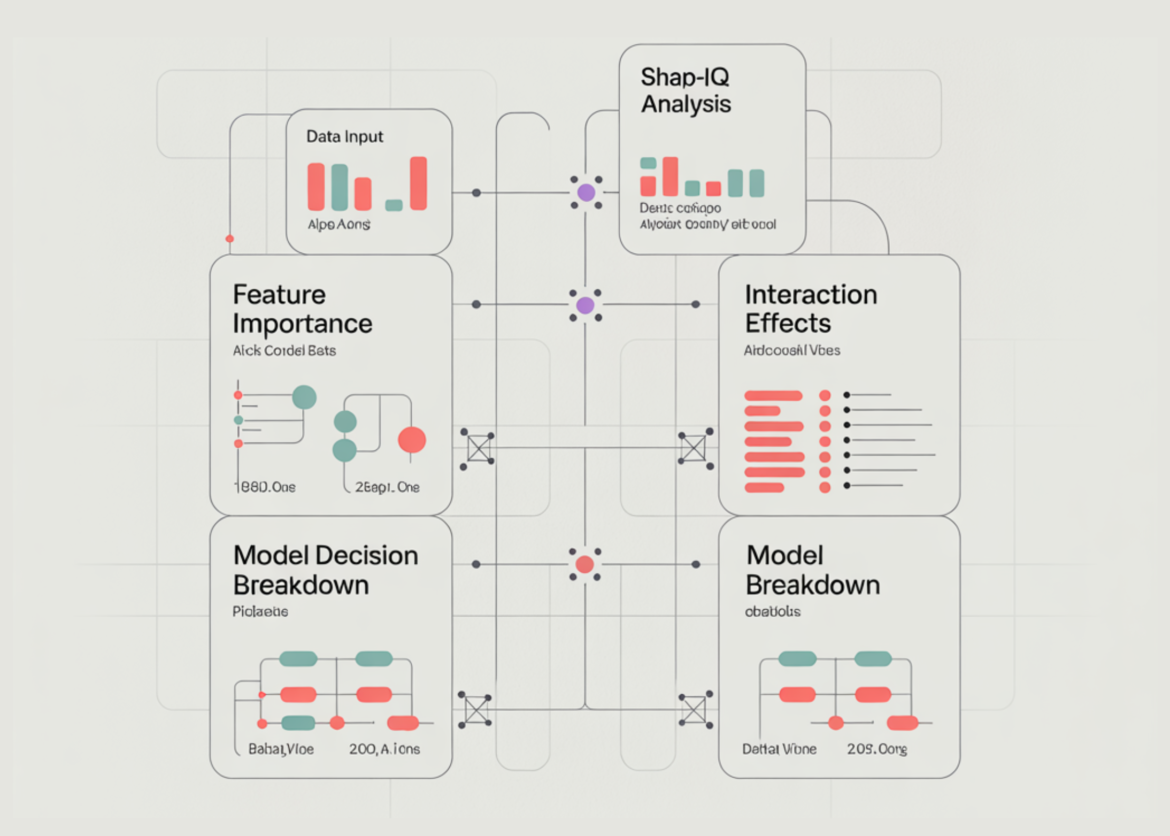

fig1 = plot_local_feature_bar(main_effects, TOP_K)

fig2 = plot_local_interaction_heatmap(pair_df, list(main_effects.abs().sort_values(ascending=False).head(TOP_K).index))

fig3 = plot_waterfall(baseline, main_effects, TOP_K)

fig1.show()

fig2.show()

fig3.show()

if GLOBAL_ON:
    print("\n" + "="*90)
    print("GLOBAL SUMMARIES (sampled over multiple test points)")
    print("="*90)

    # Clamp the sample count to between 5 and the number of test rows.
    GLOBAL_N = int(np.clip(GLOBAL_N, 5, len(X_test)))
    sample = X_test.sample(n=GLOBAL_N, random_state=1).values

    global_main, global_pair = global_summaries(
        explainer=explainer,
        X_samples=sample,
        feature_names=feature_names,
        budget=int(BUDGET_GLOBAL),
        seed=123,
    )

    print(f"Samples={GLOBAL_N} | budget/sample={BUDGET_GLOBAL}")
    print("\nGlobal feature importance (mean |main effect|):")
    display(global_main.head(TOP_K))

    # Restrict the pairwise matrix to the top-k features by global importance.
    top_feats_global = list(global_main["feature"].head(TOP_K).values)
    sub = global_pair.loc[top_feats_global, top_feats_global]

    figg1 = px.bar(
        global_main.head(TOP_K),
        x="mean_abs_main_effect",
        y="feature",
        orientation="h",
        title="Global Feature Importance (mean |main effect|, sampled)",
    )
    figg1.update_layout(yaxis={"categoryorder": "total ascending"})

    figg2 = px.imshow(
        sub.values,
        x=sub.columns,
        y=sub.index,
        aspect="auto",
        title="Global Pairwise Interaction Importance (mean |interaction|, sampled)",
    )

    figg1.show()
    figg2.show()

print("\nDone. If you want it faster: lower budgets or GLOBAL_N, or set MAX_ORDER=1.")