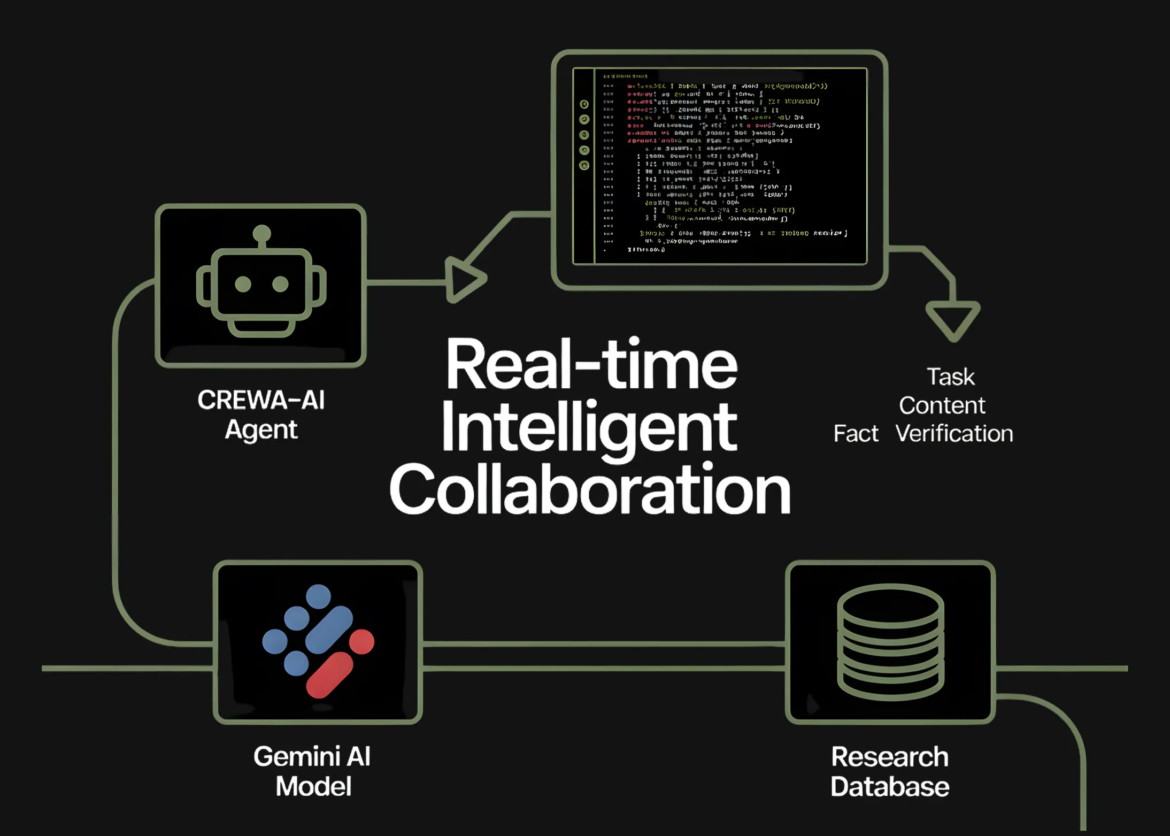

In this tutorial, we build a small but capable two-agent CrewAI system whose agents collaborate using the Gemini Flash model. We set up our environment, authenticate securely, define specialized agents, and orchestrate tasks that flow from research to structured writing. As we run the crew, we see how each component works together in real time, giving us a practical understanding of modern agentic workflows powered by LLMs. Along the way, we see how multi-agent pipelines become practical, modular, and developer-friendly.

```python
import os
import sys
import getpass
from textwrap import dedent

print("Installing CrewAI and tools... (this may take 1-2 mins)")
# Notebook shell magic: this line only works inside a Colab/Jupyter cell
!pip install -q crewai crewai-tools

from crewai import Agent, Task, Crew, Process, LLM
```

We set up our environment and install the CrewAI packages so that everything runs smoothly in Colab. We import the necessary modules and lay the foundation of our multi-agent workflow. This step ensures that our runtime is ready for the agents we create next.
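To confirm that the install step actually left the packages available, a quick version check can help. The following is a minimal sketch using only the standard library; `installed_version` is a hypothetical helper name, not part of CrewAI.

```python
from importlib.metadata import PackageNotFoundError, version
from typing import Optional


def installed_version(package: str) -> Optional[str]:
    """Return the installed version of `package`, or None if it is missing."""
    try:
        return version(package)
    except PackageNotFoundError:
        return None


# Report on the two distributions installed by the pip command above
for name in ("crewai", "crewai-tools"):
    print(f"{name}: {installed_version(name) or 'not installed'}")
```

If either line reports `not installed`, rerunning the install cell (and restarting the runtime if needed) is usually the first thing to try.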

```python
print("\n--- API Authentication ---")
api_key = None

# Prefer Colab Secrets when running inside Colab
try:
    from google.colab import userdata
    api_key = userdata.get('GEMINI_API_KEY')
    print("✅ Found GEMINI_API_KEY in Colab Secrets.")
except Exception:
    pass

# Otherwise, prompt for the key without echoing it
if not api_key:
    print("ℹ️ Key not found in Secrets.")
    api_key = getpass.getpass("🔑 Enter your Google Gemini API Key: ")

# Stop early if we still have no key
if not api_key:
    sys.exit("❌ Error: No API Key provided. Please restart and enter a key.")

os.environ["GEMINI_API_KEY"] = api_key
```

We authenticate securely by retrieving the Gemini API key from Colab Secrets or, failing that, prompting for it. We store the key in an environment variable so that the model can pick it up without further interruption. This step ensures that our agent framework can reliably communicate with the LLM.
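The same lookup order (stored secret first, interactive prompt second, fail if both are empty) can be factored into a small function that is easy to test outside Colab. This is a sketch under stated assumptions: `resolve_api_key` and its parameters are hypothetical names introduced here, and the secret store is modeled as a plain mapping rather than the Colab `userdata` object.

```python
from typing import Callable, Mapping


def resolve_api_key(
    secrets: Mapping[str, str],
    prompt: Callable[[], str],
    name: str = "GEMINI_API_KEY",
) -> str:
    """Return the key from `secrets` if present, else ask `prompt`.

    Raises ValueError when neither source yields a non-empty value.
    """
    # `prompt` is only called when the secret is missing or empty
    key = secrets.get(name) or prompt()
    if not key or not key.strip():
        raise ValueError(f"No value provided for {name}")
    return key.strip()


# Stand-in inputs; in a notebook, `prompt` could be
# lambda: getpass.getpass("Enter your key: ")
print(resolve_api_key({"GEMINI_API_KEY": "demo-key"}, prompt=lambda: ""))
```

Raising an exception instead of calling `sys.exit` keeps the helper reusable in scripts and tests, where the caller decides how to handle a missing key.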

```python
gemini_flash = LLM(
    model="gemini/gemini-2.0-flash",
    temperature=0.7
)
```

We configure the Gemini Flash model that our agents rely on for reasoning and generation. We choose the temperature and model version to balance creativity and accuracy. This configuration becomes the shared intelligence that drives all agent actions.
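To build intuition for what the `temperature` setting trades off, the sketch below applies the standard temperature-scaled softmax to a few made-up scores. It illustrates the general sampling technique only; the logits are invented for the example, and how Gemini applies temperature internally is not something this tutorial covers.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Convert raw scores to probabilities, scaled by a sampling temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


toy_logits = [2.0, 1.0, 0.1]  # made-up scores for three candidate tokens
for t in (0.2, 0.7, 1.5):
    probs = softmax_with_temperature(toy_logits, t)
    print(t, [round(p, 3) for p in probs])
```

Lower temperatures concentrate probability on the top-scoring candidate (more deterministic output), while higher temperatures flatten the distribution (more varied output). A mid-range value such as 0.7 sits between the two.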

```python
researcher = Agent(
    role="Tech Researcher",
    goal="Uncover cutting-edge developments in AI Agents",
    backstory=dedent("""\
        You are a veteran tech analyst with a knack for finding emerging trends
        before they become mainstream. You specialize in Autonomous AI Agents
        and Large Language Models."""),
    verbose=True,
    allow_delegation=False,
    llm=gemini_flash
)

writer = Agent(
    role="Technical Writer",
    goal="Write a concise, engaging blog post about the researcher's findings",
    backstory=dedent("""\
        You transform complex technical concepts into compelling narratives.
        You write for a developer audience who wants practical insights
        without fluff."""),
    verbose=True,
    allow_delegation=False,
    llm=gemini_flash
)
```

We define two specialized agents, a researcher and a writer, each with a clear role and backstory. We design them to complement each other: one finds insights while the other turns them into polished writing. Here, we begin to see how multi-agent collaboration takes shape.

```python
research_task = Task(
    description=dedent("""\
        Conduct a simulated research analysis on 'The Future of Agentic AI in 2025'.
        Identify three key trends:
        1. Multi-Agent Orchestration
        2. Neuro-symbolic AI
        3. On-device Agent execution
        Provide a summary for each based on your 'expert knowledge'."""),
    expected_output="A structured list of 3 key AI trends with brief descriptions.",
    agent=researcher
)

write_task = Task(
    description=dedent("""\
        Using the researcher's findings, write a short blog post (approx 200 words).
        The post should have:
        - A catchy title
        - An intro
        - The three bullet points
        - A conclusion on why developers should care."""),
    expected_output="A markdown-formatted blog post.",
    agent=writer,
    # A list of upstream tasks whose output feeds this one
    context=[research_task]
)
```

We create two tasks that assign specific responsibilities to our agents. The researcher generates structured insights, and its output is passed to the writer as context for a complete blog post. This step shows how we cleanly handle sequential task dependencies within CrewAI.
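The idea of a sequential dependency, where a later task receives the output of the tasks named in its context, can be sketched in plain Python without any LLM calls. This is a toy model for illustration, not CrewAI's implementation: `ToyTask` and `run_sequential` are hypothetical names, and the lambdas stand in for agents.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class ToyTask:
    name: str
    run: Callable[[str], str]  # receives the joined upstream output, returns its own
    context: List["ToyTask"] = field(default_factory=list)


def run_sequential(tasks: List[ToyTask]) -> Dict[str, str]:
    """Run tasks in list order, feeding each one the outputs of its context tasks."""
    outputs: Dict[str, str] = {}
    for task in tasks:
        # A context task must appear earlier in the list, or this raises KeyError
        upstream = "\n".join(outputs[dep.name] for dep in task.context)
        outputs[task.name] = task.run(upstream)
    return outputs


research = ToyTask("research", run=lambda _ctx: "- trend A\n- trend B\n- trend C")
write = ToyTask("write", run=lambda ctx: "# Draft post\n" + ctx, context=[research])

results = run_sequential([research, write])
print(results["write"])
```

The ordering constraint is the main design point: in a sequential process, the task list itself defines execution order, so a task can only depend on tasks that come before it.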

```python
tech_crew = Crew(
    agents=[researcher, writer],
    tasks=[research_task, write_task],
    process=Process.sequential,
    verbose=True
)

print("\n--- 🤖 Starting the Crew ---")
result = tech_crew.kickoff()

from IPython.display import Markdown, display

print("\n\n########################")
print("## FINAL OUTPUT ##")
print("########################\n")
display(Markdown(str(result)))
```

We assemble the agents and tasks into a single crew and run the entire multi-agent workflow. We see how the system executes step by step and produces the final Markdown output. This is where everything comes together, and we watch our agents collaborate in real time.
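Since the final output is Markdown, it can be convenient to keep a copy on disk in addition to rendering it in the notebook. The sketch below relies only on `str(result)`, as the display step does; `save_markdown` is a hypothetical helper, and a plain string stands in for the crew's result so the example runs on its own.

```python
import tempfile
from pathlib import Path
from typing import Union


def save_markdown(result: object, path: Union[str, Path] = "blog_post.md") -> Path:
    """Write str(result) to `path` as UTF-8 and return the resolved path."""
    target = Path(path)
    target.write_text(str(result).strip() + "\n", encoding="utf-8")
    return target.resolve()


# Stand-in for the crew output; in the notebook this would be `result`
demo_dir = Path(tempfile.mkdtemp())
saved = save_markdown("# Title\n\nBody text.", demo_dir / "blog_post.md")
print(saved.read_text(encoding="utf-8"))
```

In the notebook, calling `save_markdown(result)` after `kickoff()` would write the post to `blog_post.md` in the current working directory.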

In conclusion, we see how CrewAI lets us create coordinated agent systems that think, research, and write together. We experience first-hand how defining roles, tasks, and process flows lets us break complex work into modular pieces and achieve consistent output with minimal code. This framework lets us build richer, more autonomous agentic applications, and we can extend this foundation to larger multi-agent systems, production pipelines, or more creative AI collaborations.


Asif Razzaq is the CEO of Marktechpost Media Inc. As a visionary entrepreneur and engineer, Asif is committed to harnessing the potential of Artificial Intelligence for social good. His most recent endeavor is the launch of Marktechpost, an Artificial Intelligence media platform known for in-depth coverage of machine learning and deep learning news that is both technically sound and easily understood by a wide audience. The platform draws over 2 million monthly views.