Last updated by Editorial Team on April 10, 2026

Author(s): Utkarsh Mittal

Originally published on Towards AI.

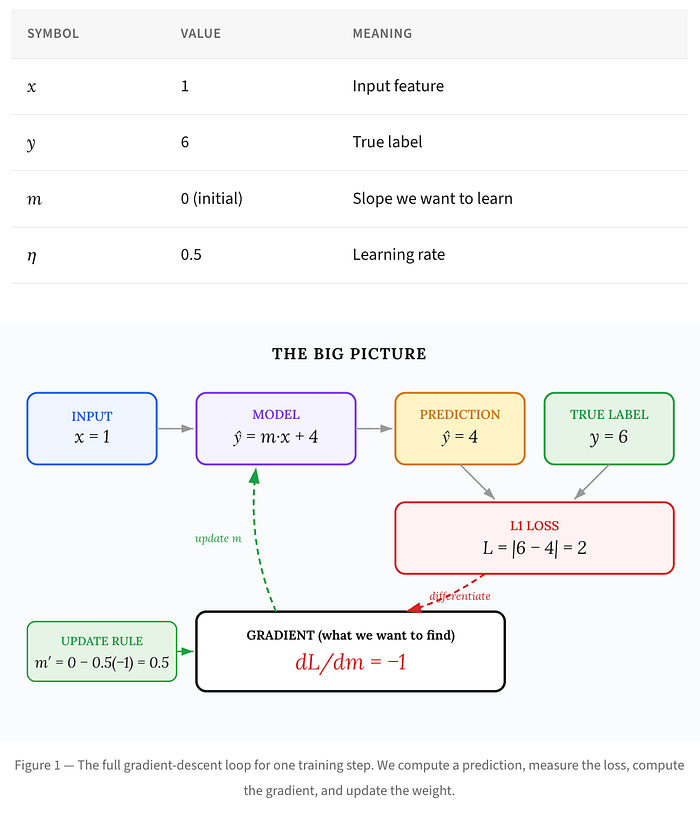

A complete, step-by-step walkthrough of how gradient descent with absolute-value loss works – with diagrams you can actually follow.

If you’ve ever read a deep learning tutorial and encountered a derivative that appeared out of nowhere, this article is for you. We’re going to break down one of the simplest – yet most instructive – gradient calculations in machine learning: the gradient of the L1 (absolute-value) loss with respect to a single weight.
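
As a rough preview of that calculation, assume a one-feature linear model $\hat{y} = wx + b$ with target $y$. These symbols are illustrative assumptions and may not match the notation used in the full article. Under that assumption, the chain rule gives:

$$
L = |\hat{y} - y|, \qquad
\frac{\partial L}{\partial w}
= \frac{\partial L}{\partial \hat{y}} \cdot \frac{\partial \hat{y}}{\partial w}
= \operatorname{sign}(\hat{y} - y)\, x \quad (\hat{y} \neq y)
$$

At $\hat{y} = y$ the absolute value is not differentiable, and a subgradient of $0$ is a common choice.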

The article walks through the computation of the L1 loss step by step, starting from a simple regression model and covering its components, the loss function, and the derivation of the gradient with respect to the weights. It uses concrete examples and builds understanding progressively through the chain rule. It closes by contrasting the L1 loss's robustness to outliers with the L2 loss's sensitivity to error magnitude, and offers guidance on when to use each loss function.
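
To make the outlier contrast concrete, here is a minimal sketch in plain Python. It assumes a one-feature linear model `y_hat = w * x + b`; the function names and the numbers are hypothetical and chosen only for illustration, not taken from the article.

```python
def predict(w, b, x):
    """Assumed toy model: a single weight, a bias, and one input feature."""
    return w * x + b


def l1_grad_w(w, b, x, y):
    """Gradient of |y_hat - y| w.r.t. w: sign(y_hat - y) * x (0 at zero error)."""
    err = predict(w, b, x) - y
    sign = (err > 0) - (err < 0)  # evaluates to -1, 0, or 1
    return sign * x


def l2_grad_w(w, b, x, y):
    """Gradient of (y_hat - y)**2 w.r.t. w: 2 * (y_hat - y) * x."""
    err = predict(w, b, x) - y
    return 2.0 * err * x


w, b, x = 2.0, 0.0, 3.0  # prediction is 6.0

# Ordinary target: error of -1
small = (l1_grad_w(w, b, x, 7.0), l2_grad_w(w, b, x, 7.0))

# Outlier target: error of -100
large = (l1_grad_w(w, b, x, 106.0), l2_grad_w(w, b, x, 106.0))

print("error -1:  ", small)
print("error -100:", large)
```

In this sketch the L1 gradient is the same for both targets because it depends only on the sign of the error, while the L2 gradient grows in proportion to the error, which is why a single outlier can dominate an L2 update.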

Note: The content of this article represents the views of the contributing authors and not those of Towards AI.